Today, I’m publishing the Distributed Computing Manifesto, a canonical document from the early days of Amazon that transformed the architecture of Amazon’s ecommerce platform. It highlights the challenges we were facing at the end of the 20th century, and hints at where we were headed.

When it comes to the ecommerce side of Amazon, architectural information was rarely shared with the public. So, when I was invited by Amazon in 2004 to give a talk about my distributed systems research, I almost didn’t go. I was thinking: web servers and a database, how hard can that be? But I’m happy that I did, because what I encountered blew my mind. The scale and diversity of their operation was unlike anything I had ever seen; Amazon’s architecture was at least a decade ahead of what I had encountered at other companies. It was more than just a high-performance website. We’re talking about everything from high-volume transaction processing to machine learning, security, robotics, and binning millions of products. Anything that you could find in a distributed systems textbook was happening at Amazon, and it was happening at unbelievable scale. When they offered me a job, I couldn’t resist. Now, after almost 18 years as their CTO, I’m still blown away on a daily basis by the inventiveness of our engineers and the systems they’ve built.

To invent and simplify

A continuous challenge when operating at unparalleled scale, when you are decades ahead of anyone else and growing by an order of magnitude every few years, is that there is no textbook you can rely on, nor is there any commercial software you can buy. It meant that Amazon’s engineers had to invent their way into the future. With every few orders of magnitude of growth, the current architecture would start to show cracks in reliability and performance, and engineers would start to spend more time with digital duct tape and WD-40 than building new, innovative products. At each of these inflection points, engineers would invent their way into a new architectural structure to be ready for the next orders of magnitude of growth: architectures that nobody had built before.

Over the next 20 years, Amazon would move from a monolith to a service-oriented architecture, to microservices, then to microservices running over a shared infrastructure platform. All of this was being done before terms like service-oriented architecture existed. Along the way we learned a lot of lessons about operating at internet scale.

During my keynote at AWS re:Invent in a couple of weeks, I plan to talk about how the concepts in this document started to shape what we see in microservices and event-driven architectures. Also, in the coming months, I’ll write a series of posts that dive deep into specific sections of the Distributed Computing Manifesto.

A very brief history of system architecture at Amazon

Before we go deep into the weeds of Amazon’s architectural history, it helps to understand a little bit about where we were 25 years ago. Amazon was moving at a rapid pace, building and launching products every few months, innovations that we take for granted today: 1-click buying, self-service ordering, instant refunds, recommendations, similarities, search-inside-the-book, associates selling, and third-party products. The list goes on. And these were just the customer-facing innovations; we’re not even scratching the surface of what was happening behind the scenes.

Amazon started off with a traditional two-tier architecture: a monolithic, stateless application (Obidos) that was used to serve pages, and a whole battery of databases that grew with every new set of product categories, products inside those categories, customers, and countries that Amazon launched in. These databases were a shared resource, and eventually became the bottleneck for the pace at which we wanted to innovate.

Back in 1998, a collective of senior Amazon engineers started to lay the groundwork for a radical overhaul of Amazon’s architecture to support the next generation of customer-centric innovation. A core point was separating the presentation layer, business logic, and data, while ensuring that reliability, scale, performance, and security met an incredibly high bar and keeping costs under control. Their proposal was called the Distributed Computing Manifesto.

I’m sharing this now to give you a glimpse at how advanced the thinking of Amazon’s engineering team was in the late nineties. They consistently invented themselves out of trouble, scaling a monolith into what we would now call a service-oriented architecture, which was necessary to support the rapid innovation that has become synonymous with Amazon. One of our Leadership Principles is to invent and simplify, and our engineers really live by that motto.

Things change…

One thing to keep in mind as you read this document is that it represents the thinking of almost 25 years ago. We have come a long way since: our business requirements have evolved and our systems have changed significantly. You may read things that sound unbelievably simple or common, and you may read things that you disagree with, but in the late nineties these ideas were transformative. I hope you enjoy reading it as much as I still do.

The full text of the Distributed Computing Manifesto is available below. You can also view it as a PDF.

Created: May 24, 1998

Revised: July 10, 1998

Background

It is clear that we need to create and implement a new architecture if Amazon’s processing is to scale to the point where it can support ten times our current order volume. The question is, what form should the new architecture take and how do we move towards realizing it?

Our current two-tier, client-server architecture is one that is essentially data bound. The applications that run the business access the database directly and have knowledge of the data model embedded in them. This means that there is a very tight coupling between the applications and the data model, and data model changes have to be accompanied by application changes even if functionality remains the same. This approach does not scale well and makes distributing and segregating processing based on where data is located difficult, since the applications are sensitive to the interdependent relationships between data elements.

Key Concepts

There are two key concepts in the new architecture we are proposing to address the shortcomings of the current system. The first is to move toward a service-based model, and the second is to shift our processing so that it more closely models a workflow approach. This paper does not address what specific technology should be used to implement the new architecture. That should only be decided once we have determined that the new architecture is something that will meet our requirements and we embark on implementing it.

Service-based model

We propose moving towards a three-tier architecture where presentation (client), business logic, and data are separated. This has also been called a service-based architecture. The applications (clients) would no longer be able to access the database directly, but only through a well-defined interface that encapsulates the business logic required to perform the function. This means that the client is no longer dependent on the underlying data structure or even on where the data is located. The interface between the business logic (in the service) and the database can change without impacting the client, since the client interacts with the service through its own interface. Similarly, the client interface can evolve without impacting the interaction of the service and the underlying database.
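To make the separation concrete, here is a minimal sketch in Python. It assumes a hypothetical `CustomerService` whose private storage (a plain dict standing in for database tables) is never exposed. Clients call only `add_customer` and `get_shipping_address` and receive a value object, so the storage layout could change without touching client code. All names are illustrative and are not part of the manifesto.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Address:
    """What the client sees: a value object, not a database row."""
    street: str
    city: str
    country: str


class CustomerService:
    """Hypothetical service: the only way clients reach customer data."""

    def __init__(self) -> None:
        # Private storage layout standing in for tables the client never touches.
        # Addresses are stored as tuples here; this could change freely.
        self._customers: dict[int, dict] = {}
        self._next_id = 1

    def add_customer(self, name: str, address: Address) -> int:
        """Create a customer and return its id (a synchronous operation)."""
        customer_id = self._next_id
        self._next_id += 1
        self._customers[customer_id] = {
            "name": name,
            "addr": (address.street, address.city, address.country),
        }
        return customer_id

    def get_shipping_address(self, customer_id: int) -> Address:
        """Translate the internal representation into the public interface type."""
        street, city, country = self._customers[customer_id]["addr"]
        return Address(street, city, country)


# A client works only with the interface, never with the storage layout.
service = CustomerService()
cid = service.add_customer("Ada", Address("1 Main St", "Seattle", "US"))
print(service.get_shipping_address(cid).city)  # Seattle
```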

Services, including workflow, will have to provide both synchronous and asynchronous methods. Synchronous methods would likely be applied to operations for which the response is immediate, such as adding a customer or looking up vendor information. However, other operations that are asynchronous in nature will not provide an immediate response. An example of this is invoking a service to pass a workflow element on to the next processing node in the chain. The requestor does not expect the results back immediately, just an indication that the workflow element was successfully queued. However, the requestor may be interested in receiving the results of the request eventually. To facilitate this, the service has to provide a mechanism whereby the requestor can receive the results of an asynchronous request. There are a couple of models for this: polling and callback. In the callback model, the requestor passes the address of a routine to invoke when the request completes. This approach is used most commonly when the time between the request and the reply is relatively short. A significant disadvantage of the callback approach is that the requestor may no longer be active when the request completes, making the callback address invalid. The polling model, however, suffers from the overhead required to periodically check whether a request has completed. The polling model is the one that will likely be the most useful for interaction with asynchronous services.
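The two ways of collecting results from an asynchronous request can be sketched as follows. This is a minimal, single-process illustration that assumes a hypothetical `AsyncService`:

- `submit` returns only a request id, which serves as the "successfully queued" acknowledgement.
- `poll` lets the requestor check back later.
- `submit_with_callback` shows the callback alternative.

A real service would do the work elsewhere. Here the hypothetical `process_pending` method simulates that step.

```python
import itertools
from typing import Callable, Optional


class AsyncService:
    """Hypothetical asynchronous service supporting both polling and callbacks."""

    def __init__(self) -> None:
        self._ids = itertools.count(1)
        self._pending: list[tuple[int, str, Optional[Callable[[int, str], None]]]] = []
        self._results: dict[int, str] = {}

    def submit(self, payload: str) -> int:
        """Queue a request and return at once with a request id (polling model)."""
        request_id = next(self._ids)
        self._pending.append((request_id, payload, None))
        return request_id

    def submit_with_callback(self, payload: str, callback: Callable[[int, str], None]) -> int:
        """Queue a request; `callback` runs on completion (callback model).
        Risk noted in the text: the requestor may be gone by then."""
        request_id = next(self._ids)
        self._pending.append((request_id, payload, callback))
        return request_id

    def poll(self, request_id: int) -> Optional[str]:
        """Return the result if done, else None; the requestor checks back periodically."""
        return self._results.get(request_id)

    def process_pending(self) -> None:
        """Simulate the service finishing its queued work."""
        for request_id, payload, callback in self._pending:
            result = payload.upper()  # stand-in for real processing
            if callback is None:
                self._results[request_id] = result
            else:
                callback(request_id, result)
        self._pending.clear()


svc = AsyncService()
rid = svc.submit("charge order 42")
print(svc.poll(rid))  # None: still queued
svc.process_pending()
print(svc.poll(rid))  # CHARGE ORDER 42
```

Polling only needs the requestor to remember a request id. A callback needs the requestor to still be reachable when the work finishes, which is the trade-off the manifesto describes.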

There are several important implications that have to be considered as we move toward a service-based model.

The first is that we will have to adopt a much more disciplined approach to software engineering. Currently much of our database access is ad hoc, with a proliferation of Perl scripts that to a very real extent run our business. Moving to a service-based architecture will require that direct client access to the database be phased out over a period of time. Without this, we cannot even hope to realize the benefits of a three-tier architecture, such as data-location transparency and the ability to evolve the data model without negatively impacting clients.

The specification, design, and development of services and their interfaces is not something that should occur in a haphazard fashion. It has to be carefully coordinated so that we do not end up with the same tangled proliferation we currently have. The bottom line is that to successfully move to a service-based model, we have to adopt better software engineering practices and chart out a course that allows us to move in this direction while still providing our “customers” with the access to business data on which they rely.

A second implication of a service-based approach, which is related to the first, is the significant mindset shift that will be required of all software developers. Our current mindset is data-centric, and when we model a business requirement, we do so using a data-centric approach. Our solutions involve making database table or column changes to implement the solution, and we embed the data model within the accessing application. The service-based approach will require us to break the solution to business requirements into at least two pieces. The first piece is the modeling of the relationship between data elements, just as we always have. This includes the data model and the business rules that will be enforced in the service(s) that interact with the data. However, the second piece is something we have never done before: designing the interface between the client and the service so that the underlying data model is not exposed to or relied upon by the client. This relates back strongly to the software engineering issues discussed above.

Workflow-based Model and Data Domaining

Amazon’s business is well suited to a workflow-based processing model. We already have an “order pipeline” that is acted upon by various business processes from the time a customer order is placed to the time it is shipped out the door. Much of our processing is already workflow-oriented, albeit the workflow “elements” are static, residing mainly in a single database. An example of our current workflow model is the progression of customer_orders through the system. The condition attribute on each customer_order dictates the next activity in the workflow. However, the current database workflow model will not scale well because processing is being performed against a central instance. As the amount of work increases (a larger number of orders per unit time), the amount of processing against the central instance will increase to a point where it is no longer sustainable. A solution to this is to distribute the workflow processing so that it can be offloaded from the central instance. Implementing this requires that workflow elements like customer_orders move between business processes (“nodes”) that could be located on separate machines. Instead of processes coming to the data, the data would travel to the process. This means that each workflow element would carry all of the information required for the next node in the workflow to act upon it. This concept is the same as one used in message-oriented middleware, where units of work are represented as messages shunted from one node (business process) to another.
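The idea that "the data travels to the process" can be sketched with in-memory queues standing in for message-oriented middleware. This minimal sketch assumes a hypothetical self-contained `OrderElement` and two illustrative nodes. Each node works only from the fields the element carries and never queries a central database.

```python
import queue
from dataclasses import dataclass, field


@dataclass
class OrderElement:
    """Hypothetical workflow element carrying everything the next node needs."""
    order_id: int
    items: list[str]
    shipping_address: str
    history: list[str] = field(default_factory=list)


def charge_node(inbox: queue.Queue, outbox: queue.Queue) -> None:
    """Take one element, 'charge' it using only its own fields, forward it."""
    element = inbox.get()
    element.history.append(f"charged {len(element.items)} item(s)")
    outbox.put(element)


def pick_node(inbox: queue.Queue, outbox: queue.Queue) -> None:
    """Next node in the chain; again, no central database lookup."""
    element = inbox.get()
    element.history.append("picked")
    outbox.put(element)


# Queues between nodes stand in for middleware links between machines.
to_charge: queue.Queue = queue.Queue()
to_pick: queue.Queue = queue.Queue()
to_ship: queue.Queue = queue.Queue()

to_charge.put(OrderElement(42, ["book-a", "book-b"], "1 Main St"))
charge_node(to_charge, to_pick)
pick_node(to_pick, to_ship)
done = to_ship.get()
print(done.history)  # ['charged 2 item(s)', 'picked']
```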

An issue with workflow is how it is directed. Does each processing node have the autonomy to redirect the workflow element to the next node based on embedded business rules (autonomous), or should there be some sort of workflow coordinator that handles the transfer of work between nodes (directed)? To illustrate the difference, consider a node that performs credit card charges. Does it have the built-in “intelligence” to refer orders that succeeded to the next processing node in the order pipeline and shunt those that failed to some other node for exception processing? Or is the credit card charging node considered to be a service that can be invoked from anywhere and which returns its results to the requestor? In this case, the requestor would be responsible for dealing with failure conditions and determining what the next node in the processing is for successful and failed requests.

A major advantage of the directed workflow model is its flexibility. The workflow processing nodes that it moves work between are interchangeable building blocks that can be used in different combinations and for different purposes. Some processing lends itself very well to the directed model, for instance credit card charge processing, since it may be invoked in different contexts. On a grander scale, DC processing considered as a single logical process benefits from the directed model. The DC would accept customer orders to process and return the results (shipment, exception conditions, etc.) to whatever gave it the work to perform. On the other hand, certain processes would benefit from the autonomous model if their interaction with adjacent processing is fixed and not likely to change. An example of this is that multi-book shipments always go from picklist to rebin.
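The contrast between the two models can be sketched using the manifesto's credit card example:

- **Autonomous:** the charging node embeds its own routing rules.
- **Directed:** charging is a plain service that returns a result, and a coordinator decides what happens next. The same service can then be reused by several coordinators with different routing.

The `charge_card` rule (fail on an expired card) and the second "gift" pipeline are hypothetical, added only for illustration.

```python
from collections import deque


def charge_card(order: dict) -> bool:
    """Hypothetical charge step: succeeds unless the card is marked expired."""
    return not order["card_expired"]


class AutonomousChargeNode:
    """Autonomous model: routing rules for one fixed pipeline live in the node."""

    def __init__(self, next_node: deque, exception_node: deque) -> None:
        self.next_node = next_node
        self.exception_node = exception_node

    def handle(self, order: dict) -> None:
        target = self.next_node if charge_card(order) else self.exception_node
        target.append(order)


class Coordinator:
    """Directed model: the coordinator invokes the charge service, gets the
    result back, and decides where the work goes next."""

    def __init__(self, on_success: deque, on_failure: deque) -> None:
        self.on_success = on_success
        self.on_failure = on_failure

    def run(self, order: dict) -> None:
        ok = charge_card(order)  # the service just returns its result
        (self.on_success if ok else self.on_failure).append(order)


picklist, exceptions = deque(), deque()
AutonomousChargeNode(picklist, exceptions).handle({"id": 1, "card_expired": False})

# The same charge service reused by two coordinators with different routing.
order_pipeline = Coordinator(on_success=picklist, on_failure=exceptions)
gift_done, retry_later = deque(), deque()  # hypothetical second pipeline
gift_pipeline = Coordinator(on_success=gift_done, on_failure=retry_later)
order_pipeline.run({"id": 2, "card_expired": True})
gift_pipeline.run({"id": 3, "card_expired": False})
print([o["id"] for o in picklist], [o["id"] for o in exceptions])  # [1] [2]
```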

The distributed workflow approach has several advantages. One of these is that a business process such as fulfilling an order can easily be modeled to improve scalability. For instance, if charging a credit card becomes a bottleneck, additional charging nodes can be added without impacting the workflow model. Another advantage is that a node along the workflow path does not necessarily have to depend on accessing remote databases to operate on a workflow element. This means that certain processing can continue when other pieces of the workflow system (like databases) are unavailable, improving the overall availability of the system.
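Adding charging nodes without changing the workflow can be sketched with several identical worker threads draining one shared queue. This is a minimal single-machine illustration: threads stand in for separate charging nodes, and the `None` shutdown sentinel is an assumption of the sketch, not something the manifesto describes. Scaling out means changing `node_count`. The producer and the queues stay the same.

```python
import queue
import threading


def charging_node(work: queue.Queue, charged: queue.Queue) -> None:
    """One of possibly many identical charging nodes sharing the same inbox."""
    while True:
        order_id = work.get()
        if order_id is None:  # sentinel: shut this node down
            return
        charged.put(order_id)  # stand-in for actually charging the card


def run(order_ids: list[int], node_count: int) -> list[int]:
    """Process all orders with `node_count` parallel charging nodes."""
    work: queue.Queue = queue.Queue()
    charged: queue.Queue = queue.Queue()
    nodes = [
        threading.Thread(target=charging_node, args=(work, charged))
        for _ in range(node_count)
    ]
    for node in nodes:
        node.start()
    for order_id in order_ids:
        work.put(order_id)
    for _ in nodes:
        work.put(None)  # one sentinel per node
    for node in nodes:
        node.join()
    # All nodes have exited, so qsize() is exact here.
    return sorted(charged.get() for _ in range(charged.qsize()))


print(run(list(range(10)), node_count=4))  # [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
```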

However, there are some drawbacks to the message-based distributed workflow model. A database-centric model, where every process accesses the same central data store, allows data changes to be propagated quickly and efficiently through the system. For instance, if a customer wants to change the credit-card number being used for his order because the one he initially specified has expired or was declined, this can be done easily and the change would be instantly represented everywhere in the system. In a message-based workflow model, this becomes more complicated. The design of the workflow has to accommodate the fact that some of the underlying data may change while a workflow element is making its way from one end of the system to the other. Furthermore, with classic queue-based workflow it is more difficult to determine the state of any particular workflow element. To overcome this, mechanisms have to be created that allow state transitions to be recorded for the benefit of outside processes without impacting the availability and autonomy of the workflow process. These issues make correct initial design much more important than in a monolithic system, and speak back to the software engineering practices discussed elsewhere.

The workflow model applies to data that is transient in our system and undergoes well-defined state changes. However, there is another class of data that does not lend itself to a workflow approach. This class of data is largely persistent and does not change with the same frequency or predictability as workflow data. In our case this data describes customers, distributors, and our catalog. It is important that this data be highly available and that we maintain the relationships between these data (such as knowing what addresses are associated with a customer).

The idea of creating data domains allows us to split up this class of data according to its relationship with other data. For instance, all data pertaining to customers would make up one domain, all data about distributors another, and all data about our catalog a third. This allows us to create services by which clients interact with the various data domains, and opens up the possibility of replicating domain data so that it is closer to its consumer. An example of this would be replicating the customer data domain to the U.K. and Germany so that customer service organizations could operate off of a local data store and not be dependent on the availability of a single instance of the data. The service interfaces to the data would be identical, but the copy of the domain they access would be different. Creating data domains and the service interfaces to access them is an important element in separating the client from knowledge of the internal structure and location of the data.
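A data domain with regional replicas can be sketched as a service class that is identical everywhere but bound to a local copy. This minimal sketch assumes hypothetical `CustomerDomainReplica` and `CustomerService` classes. Replication is a naive full copy purely for illustration. A real system would ship incremental changes and tolerate replication lag.

```python
import copy


class CustomerDomainReplica:
    """Hypothetical copy of the customer data domain (customers and addresses)."""

    def __init__(self) -> None:
        self.addresses: dict[str, list[str]] = {}


class CustomerService:
    """The same interface in every region; only the replica behind it differs."""

    def __init__(self, replica: CustomerDomainReplica) -> None:
        self._replica = replica

    def lookup_addresses(self, customer_id: str) -> list[str]:
        return list(self._replica.addresses.get(customer_id, []))


def replicate(primary: CustomerDomainReplica, targets: list[CustomerDomainReplica]) -> None:
    """Naive full copy, for illustration only."""
    for target in targets:
        target.addresses = copy.deepcopy(primary.addresses)


primary = CustomerDomainReplica()
primary.addresses["c-7"] = ["10 High St, London"]
uk, de = CustomerDomainReplica(), CustomerDomainReplica()
replicate(primary, [uk, de])

# UK customer service reads its local copy and does not depend on the primary.
uk_service = CustomerService(uk)
print(uk_service.lookup_addresses("c-7"))  # ['10 High St, London']
```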

Applying the Concepts

DC processing lends itself well as an example of the application of the workflow and data domaining concepts discussed above. Data flow through the DC falls into three distinct categories. The first is data that is well suited to sequential queue processing. An example of this is the received_items queue filled in by vreceive. The second category is data that should reside in a data domain, either because of its persistence or because of the requirement that it be widely available. Inventory information (bin_items) falls into this category, as it is required both in the DC and by other business functions like sourcing and customer support. The third category of data fits neither the queuing nor the domaining model very well. This category of data is transient and only required locally (within the DC). It is not well suited to sequential queue processing, however, since it is operated upon in aggregate. An example of this is the data required to generate picklists. A batch of customer shipments has to accumulate so that picklisting has enough information to print out picks according to shipment method, etc. Once the picklist processing is done, the shipments go on to the next stop in their workflow. The holding areas for this third type of data are called aggregation queues, since they exhibit the properties of both queues and database tables.
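An aggregation queue can be sketched as a holding area that accepts elements one at a time, like a queue, but is read in aggregate, like a table. This minimal sketch assumes a hypothetical release rule: a batch is released for picklisting once `batch_size` shipments have accumulated, and picks are grouped by shipment method. The threshold and the field names are illustrative, not from the manifesto.

```python
from collections import defaultdict


class AggregationQueue:
    """Accepts shipments one at a time; releases them as a grouped batch."""

    def __init__(self, batch_size: int) -> None:
        self.batch_size = batch_size  # hypothetical release threshold
        self._held: list[dict] = []

    def put(self, shipment: dict) -> None:
        """Queue-like: shipments arrive individually."""
        self._held.append(shipment)

    def ready(self) -> bool:
        return len(self._held) >= self.batch_size

    def release_picklist(self) -> dict[str, list[int]]:
        """Table-like: operate on the whole batch at once, grouped by method,
        then empty the holding area so the shipments move on."""
        picklist: dict[str, list[int]] = defaultdict(list)
        for shipment in self._held:
            picklist[shipment["method"]].append(shipment["id"])
        self._held.clear()
        return dict(picklist)


agg = AggregationQueue(batch_size=3)
for sid, method in [(1, "ground"), (2, "air"), (3, "ground")]:
    agg.put({"id": sid, "method": method})
if agg.ready():
    print(agg.release_picklist())  # {'ground': [1, 3], 'air': [2]}
```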

Tracking State Changes

The ability of outside processes to track the movement and change of state of a workflow element through the system is imperative. In the case of DC processing, customer service and other functions need to be able to determine where a customer order or shipment is in the pipeline. The mechanism that we propose is one where certain nodes along the workflow insert a row into some centralized database instance to indicate the current state of the workflow element being processed. This kind of information will be useful not only for tracking where something is in the workflow; it also provides important insight into the workings and inefficiencies of our order pipeline. The state information would only be kept in the production database while the customer order is active. Once the order is fulfilled, the state change information would be moved to the data warehouse, where it would be used for historical analysis.
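The tracking mechanism can be sketched with Python's built-in `sqlite3` module. In this minimal sketch:

- An in-memory database stands in for the centralized production instance.
- A second table stands in for the data warehouse.
- The table and column names (`workflow_state`, `warehouse_state`) are hypothetical.

Nodes only insert rows. Customer service reads the latest row, and fulfilled orders are moved to the warehouse table in one transaction.

```python
import sqlite3

db = sqlite3.connect(":memory:")
db.executescript(
    """
    CREATE TABLE workflow_state (
        id INTEGER PRIMARY KEY,  -- insertion order
        order_id INTEGER, node TEXT, state TEXT
    );
    CREATE TABLE warehouse_state (
        id INTEGER, order_id INTEGER, node TEXT, state TEXT
    );
    """
)


def record_state(order_id: int, node: str, state: str) -> None:
    """Called by selected workflow nodes: a single insert, no reads."""
    with db:
        db.execute(
            "INSERT INTO workflow_state (order_id, node, state) VALUES (?, ?, ?)",
            (order_id, node, state),
        )


def current_state(order_id: int) -> str:
    """What customer service sees: the most recent state row for an order."""
    row = db.execute(
        "SELECT state FROM workflow_state WHERE order_id = ? ORDER BY id DESC LIMIT 1",
        (order_id,),
    ).fetchone()
    return row[0] if row else "unknown"


def archive_fulfilled(order_id: int) -> None:
    """Once fulfilled, move the order's history to the warehouse table atomically."""
    with db:
        db.execute(
            "INSERT INTO warehouse_state SELECT id, order_id, node, state "
            "FROM workflow_state WHERE order_id = ?",
            (order_id,),
        )
        db.execute("DELETE FROM workflow_state WHERE order_id = ?", (order_id,))


record_state(42, "charge", "charged")
record_state(42, "picklist", "picked")
print(current_state(42))  # picked
record_state(42, "ship", "shipped")
archive_fulfilled(42)
print(current_state(42))  # unknown: no longer in the production table
```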

Making Changes to In-flight Workflow Elements

Workflow processing creates a data currency problem, since workflow elements contain all of the data required to move on to the next workflow node. What if a customer wants to change the shipping address for an order while the order is being processed? Currently, a CS representative can change the shipping address in the customer_order (provided it is before a pending_customer_shipment is created), since both the order and customer data are located centrally. However, in a workflow model the customer order will be somewhere else, being processed through various stages on the way to becoming a shipment to a customer.

To effect a change to an in-flight workflow element, there has to be a mechanism for propagating attribute changes. A publish and subscribe model is one method for doing this. To implement the P&S model, workflow-processing nodes would subscribe to receive notification of certain events or exceptions. Attribute changes would constitute one class of events. To change the address for an in-flight order, a message indicating the order and the changed attribute would be sent to all processing nodes that subscribed for that particular event.

Additionally, a state change row would be inserted in the tracking table indicating that an attribute change was requested. If one of the nodes was able to effect the attribute change, it would insert another row in the state change table to indicate that it had made the change to the order. This mechanism means that there will be a permanent record of attribute change events and whether they were applied.
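The publish-and-subscribe propagation, together with its state-change rows, can be sketched in a single process. This minimal sketch assumes:

- a hypothetical `Broker` class;
- a plain list standing in for the tracking table;
- that only the node currently holding the order can apply the change.

Both the request and the successful application are recorded. Event and node names are illustrative.

```python
from collections import defaultdict
from typing import Callable

tracking_table: list[tuple[int, str, str]] = []  # (order_id, source, event)


class Broker:
    """Hypothetical minimal publish/subscribe: event type -> subscriber callbacks."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Callable[[dict], None]) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event_type: str, event: dict) -> None:
        for handler in self._subscribers[event_type]:
            handler(event)


class WorkflowNode:
    def __init__(self, name: str, broker: Broker) -> None:
        self.name = name
        self.in_flight: dict[int, dict] = {}  # elements this node currently holds
        broker.subscribe("attribute_change", self.on_attribute_change)

    def on_attribute_change(self, event: dict) -> None:
        element = self.in_flight.get(event["order_id"])
        if element is not None:  # only the node holding the order can apply it
            element[event["attribute"]] = event["value"]
            tracking_table.append((event["order_id"], self.name, "change applied"))


def request_change(broker: Broker, order_id: int, attribute: str, value: str) -> None:
    """Record the request, then publish it to all subscribed nodes."""
    tracking_table.append((order_id, "cs", "change requested"))
    broker.publish("attribute_change",
                   {"order_id": order_id, "attribute": attribute, "value": value})


broker = Broker()
charge, picklist = WorkflowNode("charge", broker), WorkflowNode("picklist", broker)
picklist.in_flight[42] = {"ship_to": "1 Main St"}
request_change(broker, 42, "ship_to", "9 Elm St")
print(picklist.in_flight[42]["ship_to"])  # 9 Elm St
print(tracking_table)  # [(42, 'cs', 'change requested'), (42, 'picklist', 'change applied')]
```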

Another variation on the P&S model is one where a workflow coordinator, instead of a workflow-processing node, effects changes to in-flight workflow elements. As with the mechanism described above, the workflow coordinators would subscribe to receive notification of events or exceptions and apply those to the applicable workflow elements as they process them.

Applying changes to in-flight workflow elements synchronously is an alternative to the asynchronous propagation of change requests. This has the benefit of giving the originator of the change request instant feedback about whether the change was effected or not. However, this model requires that all nodes in the workflow be available to process the change synchronously, and it should be used only for changes where it is acceptable for the request to fail due to temporary unavailability.
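The synchronous alternative can be sketched as a call that reports success or failure immediately. This minimal version assumes each hypothetical `Node` exposes an `available` flag and a `try_apply` method. If any node in the workflow is unavailable, the request fails at once instead of being queued. That is why this approach suits only changes where such a failure is acceptable.

```python
from dataclasses import dataclass, field


class NodeUnavailable(Exception):
    """Raised when a node needed for the synchronous change cannot be reached."""


@dataclass
class Node:
    name: str
    available: bool = True
    in_flight: dict[int, dict] = field(default_factory=dict)

    def try_apply(self, order_id: int, attribute: str, value: str) -> bool:
        """Apply the change if this node holds the order; report whether it did."""
        element = self.in_flight.get(order_id)
        if element is None:
            return False
        element[attribute] = value
        return True


def change_synchronously(nodes: list[Node], order_id: int, attribute: str, value: str) -> bool:
    """Return True if some node applied the change, False if none held the order.
    Raise NodeUnavailable if any node is down, so the originator learns at once."""
    for node in nodes:
        if not node.available:
            raise NodeUnavailable(node.name)
    return any(node.try_apply(order_id, attribute, value) for node in nodes)


nodes = [Node("charge"), Node("picklist", in_flight={42: {"ship_to": "1 Main St"}})]
print(change_synchronously(nodes, 42, "ship_to", "9 Elm St"))  # True
nodes[0].available = False
try:
    change_synchronously(nodes, 42, "ship_to", "5 Oak Ave")
except NodeUnavailable as exc:
    print(f"change rejected, node down: {exc}")  # change rejected, node down: charge
```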

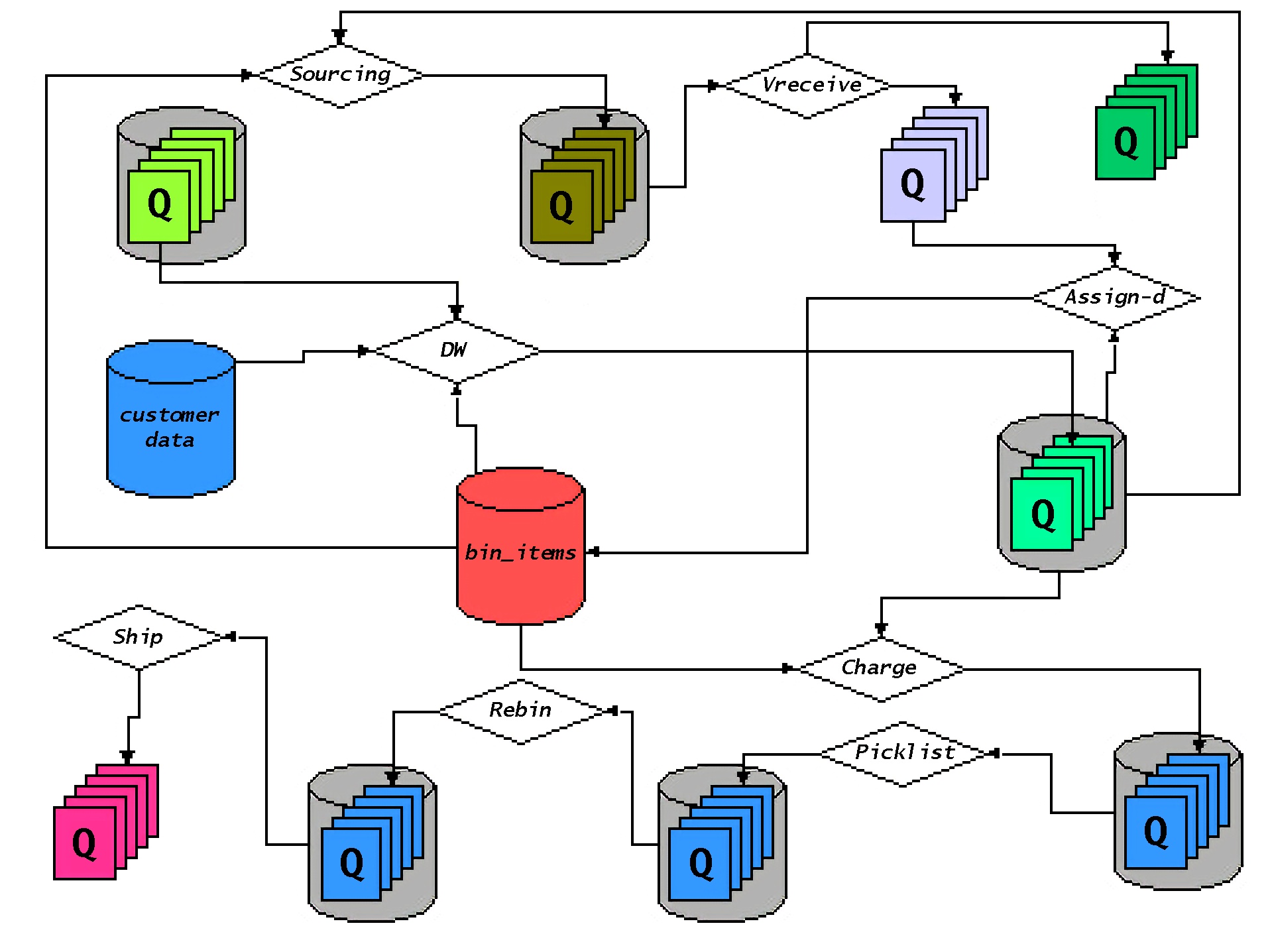

Workflow and DC Customer Order Processing

The accompanying diagram represents a simplified view of how a customer order moved through various workflow stages in the DC. This is modeled largely after the way things currently work, with some changes to represent how things will work as the result of DC isolation. In this picture, instead of a customer order or a customer shipment remaining in a static database table, they are physically moved between workflow processing nodes represented by the diamond-shaped boxes. From the diagram, you can see that DC processing employs data domains (for customer and inventory information), true queues (for received items and distributor shipments), as well as aggregation queues (for charge processing, picklisting, etc.). Each queue exposes a service interface through which a requestor can insert a workflow element to be processed by the queue’s respective workflow-processing node. For instance, orders that are ready to be charged would be inserted into the charge service’s queue. Charge processing (which may be multiple physical processes) would remove orders from the queue for processing and forward them on to the next workflow node when done (or back to the requestor of the charge service, depending on whether the coordinated or autonomous workflow is used for the charge service).
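The idea that each queue sits behind a service interface can be sketched as follows. This minimal sketch assumes a hypothetical `ChargeQueueService`:

- Requestors call `insert`.
- Charge-processing workers call `process`.
- The queue itself stays private.

Whether a processed order goes to the next node (autonomous) or back to the requestor (coordinated) is chosen at construction time here. All names are illustrative.

```python
from collections import deque
from typing import Callable, Optional


class ChargeQueueService:
    """Hypothetical queue hidden behind a service interface."""

    def __init__(self, next_node: Optional[deque] = None) -> None:
        self._queue: deque = deque()
        # With next_node set, the service forwards work itself (autonomous);
        # otherwise results go back to each requestor (coordinated).
        self._next_node = next_node

    def insert(self, order: dict, reply_to: Optional[Callable[[dict], None]] = None) -> None:
        """The only way requestors add work."""
        if self._next_node is None and reply_to is None:
            raise ValueError("coordinated mode needs a reply_to callback")
        self._queue.append((order, reply_to))

    def process(self) -> None:
        """Run by charge-processing workers (possibly several physical processes)."""
        while self._queue:
            order, reply_to = self._queue.popleft()
            order["charged"] = True  # stand-in for the real charge
            if self._next_node is not None:
                self._next_node.append(order)
            else:
                reply_to(order)


picklist_queue: deque = deque()
autonomous = ChargeQueueService(next_node=picklist_queue)
autonomous.insert({"id": 1})
autonomous.process()

replies: list[dict] = []
coordinated = ChargeQueueService()
coordinated.insert({"id": 2}, reply_to=replies.append)
coordinated.process()
print(list(picklist_queue), replies)  # [{'id': 1, 'charged': True}] [{'id': 2, 'charged': True}]
```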

© 1998, Amazon.com, Inc. or its affiliates.