The proliferation of large diffusion models for image generation has led to a significant increase in model size and inference workloads. On-device ML inference in mobile environments requires meticulous performance optimization and consideration of trade-offs due to resource constraints. Running inference of large diffusion models (LDMs) on-device, driven by the need for cost efficiency and user privacy, presents even greater challenges due to the substantial memory requirements and computational demands of these models.

We address this challenge in our work titled “Speed Is All You Need: On-Device Acceleration of Large Diffusion Models via GPU-Aware Optimizations” (to be presented at the CVPR 2023 workshop for Efficient Deep Learning for Computer Vision), focusing on the optimized execution of a foundational LDM model on a mobile GPU. In this blog post, we summarize the core techniques we employed to successfully execute large diffusion models like Stable Diffusion at full resolution (512×512 pixels) and 20 iterations on modern smartphones, running the original model, without distillation, with an inference latency of under 12 seconds. As discussed in our previous blog post, GPU-accelerated ML inference is often limited by memory performance, and execution of LDMs is no exception. Therefore, the central theme of our optimization is efficient memory input/output (I/O), even if it means choosing memory-efficient algorithms over those that prioritize arithmetic logic unit efficiency. Ultimately, our primary objective is to reduce the overall latency of the ML inference.

|

| A sample output of an LDM on Mobile GPU with the prompt text: “a photo realistic and high resolution image of a cute puppy with surrounding flowers”. |

Enhanced attention module for memory efficiency

An ML inference engine typically provides a variety of optimized ML operations. Despite this, achieving optimal performance can still be challenging, as there is a certain amount of overhead for executing individual neural net operators on a GPU. To mitigate this overhead, ML inference engines incorporate extensive operator fusion rules that consolidate multiple operators into a single operator, thereby reducing the number of iterations across tensor elements while maximizing compute per iteration. For instance, TensorFlow Lite utilizes operator fusion to combine computationally expensive operations, like convolutions, with subsequent activation functions, like rectified linear units, into one.
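To make the idea of fusion concrete, here is a minimal sketch in plain Python. It is not TensorFlow Lite's API; the function names and the 1D convolution are stand-ins chosen for illustration. The unfused path writes the convolution output to an intermediate list and then iterates over it again for the activation, while the fused path applies the activation as each output element is produced.

```python
def conv1d(x, w):
    """Valid 1D convolution (correlation form); writes a full intermediate list."""
    n = len(x) - len(w) + 1
    return [sum(x[i + k] * w[k] for k in range(len(w))) for i in range(n)]


def relu(t):
    """Second pass over the tensor: read the intermediate, write the output."""
    return [max(0.0, v) for v in t]


def conv1d_relu_fused(x, w):
    """Single pass: the activation is applied before the element is stored."""
    n = len(x) - len(w) + 1
    return [max(0.0, sum(x[i + k] * w[k] for k in range(len(w)))) for i in range(n)]


x = [1.0, -2.0, 3.0, -4.0, 5.0]
w = [1.0, 1.0]
unfused = relu(conv1d(x, w))       # two passes, one intermediate tensor
fused = conv1d_relu_fused(x, w)    # one pass, no intermediate tensor
```

Both paths compute the same values; only the number of passes over memory differs, which is the quantity that matters on a bandwidth-limited GPU.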

A clear opportunity for optimization is the heavily used attention block adopted in the denoiser model in the LDM. The attention blocks allow the model to focus on specific parts of the input by assigning higher weights to important regions. There are multiple ways one can optimize the attention modules, and we selectively employ one of the two optimizations explained below, depending on which performs better.

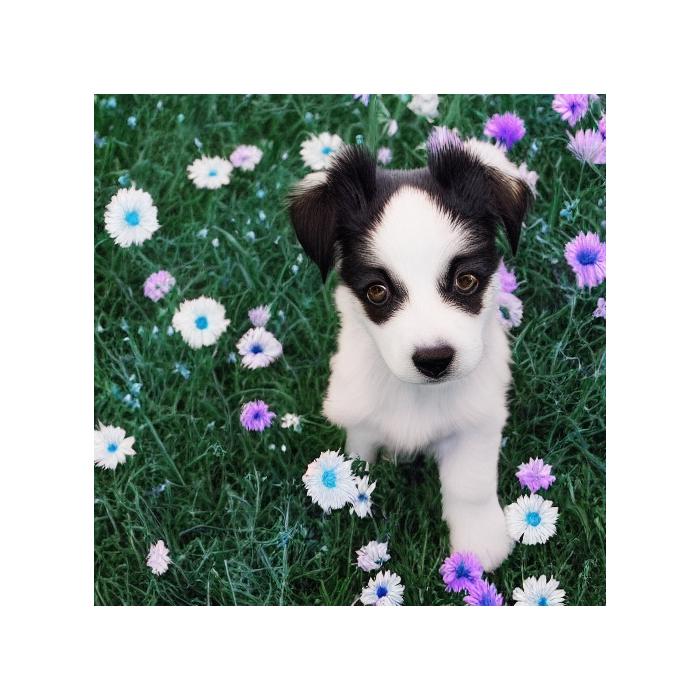

The first optimization, which we call partially fused softmax, removes the need for extensive memory writes and reads between the softmax and the matrix multiplication in the attention module. Let the attention block be just a simple matrix multiplication of the form Y = softmax(X) * W, where X and W are 2D matrices of shape a×b and b×c, respectively (shown below in the top half).

For numerical stability, T = softmax(X) is typically calculated in three passes:

- Determine the maximum value in the list, i.e., for each row in matrix X

- Sum up the exponentials of the differences between each list item and the maximum value (from pass 1)

- Divide the exponential of each item minus the maximum value by the sum from pass 2

Carrying out these passes naïvely would result in a huge memory write for the temporary intermediate tensor T holding the output of the entire softmax function. We bypass this large memory write if we only store the results of passes 1 and 2, labeled m and s, respectively, which are small vectors with a elements each, compared to T, which has a·b elements. With this technique, we are able to reduce tens or even hundreds of megabytes of memory consumption by multiple orders of magnitude (shown below in the bottom half).
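The three passes and the partially fused variant can be sketched in plain Python as follows. This is an illustrative CPU sketch under our own naming (`naive_attention`, `partially_fused_attention`), not the GPU kernel itself: the first function materializes T, while the second stores only the per-row vectors m and s and recomputes pass 3 inside the matrix multiplication.

```python
import math


def naive_attention(X, W):
    """Y = softmax(X) * W, materializing the full a-by-b tensor T."""
    T = []
    for row in X:
        m = max(row)                                   # pass 1: row maximum
        s = sum(math.exp(x - m) for x in row)          # pass 2: sum of shifted exponentials
        T.append([math.exp(x - m) / s for x in row])   # pass 3: normalize, store T
    cols = len(W[0])
    return [[sum(t[k] * W[k][j] for k in range(len(W))) for j in range(cols)]
            for t in T]


def partially_fused_attention(X, W):
    """Same result, but only m and s (a elements each) are stored."""
    m = [max(row) for row in X]                                         # pass 1
    s = [sum(math.exp(x - mi) for x in row) for row, mi in zip(X, m)]   # pass 2
    cols = len(W[0])
    Y = []
    for row, mi, si in zip(X, m, s):
        # Pass 3 is recomputed on the fly inside the matmul; T is never written.
        Y.append([sum(math.exp(x - mi) / si * W[k][j] for k, x in enumerate(row))
                  for j in range(cols)])
    return Y


X = [[1.0, 2.0, 3.0], [0.0, 0.0, 0.0]]
W = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
Y_naive = naive_attention(X, W)
Y_fused = partially_fused_attention(X, W)
```

The fused version trades extra exponentials for the removed write and read of T, which is the kind of ALU-for-memory exchange the optimization is built around.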

|

| Attention modules. Top: A naïve attention block, composed of a SOFTMAX (with all three passes) and a MATMUL, requires a large memory write for the big intermediate tensor T. Bottom: Our memory-efficient attention block with partially fused softmax in MATMUL only needs to store two small intermediate tensors for m and s. |

The other optimization involves employing FlashAttention, which is an I/O-aware, exact attention algorithm. This algorithm reduces the number of GPU high-bandwidth memory accesses, making it a good fit for our memory-bandwidth-limited use case. However, we found this technique to only work for SRAM with certain sizes and to require a large number of registers. Therefore, we only leverage this technique for attention matrices with a certain size on a select set of GPUs.
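The core trick that lets FlashAttention avoid materializing the softmax output is a running ("online") softmax computed block by block. The sketch below shows only that rescaling idea for a single row in plain Python. It is a simplified illustration, not the FlashAttention kernel: the real algorithm also tiles the queries and keeps each block in on-chip SRAM, and the function name and `block` parameter here are our own.

```python
import math


def blockwise_attention_row(x, W, block=2):
    """softmax(x) * W for one row x, visiting x and W one block at a time."""
    m = float("-inf")            # running maximum seen so far
    s = 0.0                      # running sum of exp(x_k - m)
    acc = [0.0] * len(W[0])      # running unnormalized output
    for start in range(0, len(x), block):
        xs = x[start:start + block]
        m_new = max(m, max(xs))
        scale = math.exp(m - m_new)      # rescale earlier partial results
        s *= scale
        acc = [a * scale for a in acc]
        for k, xk in enumerate(xs, start):
            p = math.exp(xk - m_new)
            s += p
            for j in range(len(acc)):
                acc[j] += p * W[k][j]
        m = m_new
    return [a / s for a in acc]          # normalize once at the end


row_out = blockwise_attention_row([0.0, 0.0, 0.0, 0.0], [[1.0], [2.0], [3.0], [4.0]])
```

Because earlier partial sums are rescaled whenever a larger maximum appears, the result is exact (up to floating-point rounding) rather than an approximation, while only one block of inputs needs to be resident at a time.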

Winograd fast convolution for 3×3 convolution layers

The backbone of common LDMs heavily relies on 3×3 convolution layers (convolutions with filter size 3×3), comprising over 90% of the layers in the decoder. Despite increased memory consumption and numerical errors, we found Winograd fast convolution to be effective at speeding up the convolutions. Distinct from the filter size 3×3 used in convolutions, tile size refers to the size of a sub-region of the input tensor that is processed at a time. Increasing the tile size enhances the efficiency of the convolution in terms of arithmetic logic unit (ALU) usage. However, this improvement comes at the expense of increased memory consumption. Our tests indicate that a tile size of 4×4 achieves the optimal trade-off between computational efficiency and memory utilization.
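For readers unfamiliar with Winograd convolution, the smallest 1D case, usually written F(2, 3), shows where the savings come from: two outputs of a 3-tap filter are computed with 4 multiplications instead of 6. The sketch below is a textbook illustration in plain Python with our own function names, not the mobile GPU implementation.

```python
def direct_conv_1d(d, g):
    """Two outputs of a 3-tap filter over four inputs: 6 multiplications."""
    return [d[0] * g[0] + d[1] * g[1] + d[2] * g[2],
            d[1] * g[0] + d[2] * g[1] + d[3] * g[2]]


def winograd_f23(d, g):
    """Same two outputs with 4 multiplications (Winograd F(2, 3))."""
    # Filter transform: depends only on the weights, so it can be precomputed.
    u0 = g[0]
    u1 = (g[0] + g[1] + g[2]) / 2
    u2 = (g[0] - g[1] + g[2]) / 2
    u3 = g[2]
    # Input transform: additions and subtractions only.
    v0 = d[0] - d[2]
    v1 = d[1] + d[2]
    v2 = d[2] - d[1]
    v3 = d[1] - d[3]
    # The only multiplications that involve the input data.
    m0, m1, m2, m3 = u0 * v0, u1 * v1, u2 * v2, u3 * v3
    # Output transform.
    return [m0 + m1 + m2, m1 - m2 - m3]


d = [1.0, 2.0, 3.0, 4.0]
g = [1.0, 0.0, -1.0]
y_direct = direct_conv_1d(d, g)
y_winograd = winograd_f23(d, g)
```

Nesting this transform in two dimensions gives 16 multiplications instead of 36 for a 2×2 output tile, i.e. the 2.25× saving listed for tile size 2×2. The transformed filter has four entries instead of three, which is the source of the extra weight memory, and the divisions in the transforms are one source of the numerical error mentioned above.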

| Tile size | FLOPS savings | Memory usage: intermediate tensors | Memory usage: weights |
|---|---|---|---|
| 2×2 | 2.25× | 4.00× | 1.77× |
| 4×4 | 4.00× | 2.25× | 4.00× |
| 6×6 | 5.06× | 1.80× | 7.12× |
| 8×8 | 5.76× | 1.56× | 11.1× |

| Impact of Winograd with varying tile sizes for 3×3 convolutions. |
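The trend in the table can be reproduced with simple counting. The formulas below are an assumption on our part, based on standard Winograd F(m×m, 3×3) bookkeeping (an m×m output tile needs an (m+2)×(m+2) transformed tile); the post does not state how the figures were derived, and the computed values agree with the table only to within about 0.02.

```python
def winograd_factors(m, r=3):
    """Return (FLOPS savings, intermediate-tensor growth, weight growth)
    for output tile size m x m and filter size r x r."""
    t = m + r - 1                             # edge of the transformed tile
    flops_savings = (m * r) ** 2 / t ** 2     # direct multiplies / Winograd multiplies
    intermediate = t ** 2 / m ** 2            # transformed tile vs. output tile
    weights = t ** 2 / r ** 2                 # transformed filter vs. original filter
    return flops_savings, intermediate, weights


for m in (2, 4, 6, 8):
    f, i, w = winograd_factors(m)
    print(f"{m}x{m}: FLOPS {f:.2f}x, intermediates {i:.2f}x, weights {w:.2f}x")
```

Under this counting, the FLOPS savings grow more slowly at larger tiles while the weight memory keeps growing quadratically, which is consistent with 4×4 being chosen as the trade-off point.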

Specialized operator fusion for memory efficiency

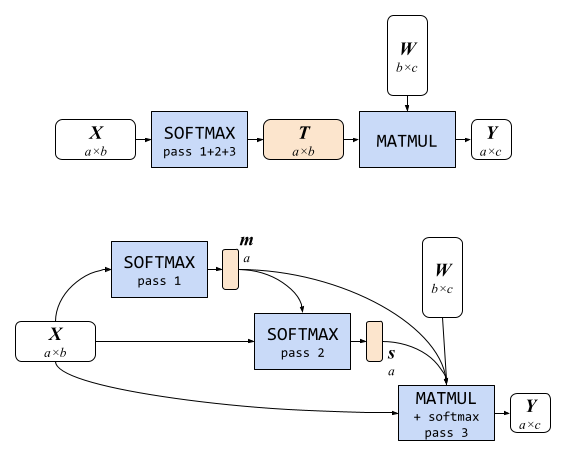

We discovered that performantly inferring LDMs on a mobile GPU requires significantly larger fusion windows for commonly employed layers and units in LDMs than current off-the-shelf on-device GPU-accelerated ML inference engines provide. Consequently, we developed specialized implementations that could execute a larger range of neural operators than typical fusion rules would permit. Specifically, we focused on two specializations: the Gaussian Error Linear Unit (GELU) and the group normalization layer.

An approximation of GELU with the hyperbolic tangent function requires writing to and reading from seven auxiliary intermediate tensors (shown in the figure as light orange rounded rectangles), reading from the input tensor x three times, and writing to the output tensor y once, across eight GPU programs that each implement one of the labeled operations (light blue rectangles). A custom GELU implementation that performs the eight operations in a single shader (shown at the bottom of the figure) can bypass all the memory I/O for the intermediate tensors.
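The contrast can be sketched in plain Python, with each list standing in for a tensor in GPU memory. The split into eight operations below is our assumption; it was chosen to match the counts in the text (seven intermediates, three reads of x, one write of y) and may not match the figure's exact operator order.

```python
import math

C0 = math.sqrt(2.0 / math.pi)   # constants of the tanh approximation of GELU
C1 = 0.044715


def gelu_unfused(x):
    """Eight separate elementwise ops, seven intermediate tensors t1..t7."""
    t1 = [v ** 3 for v in x]                  # reads x (1st time)
    t2 = [C1 * v for v in t1]
    t3 = [a + b for a, b in zip(x, t2)]       # reads x (2nd time)
    t4 = [C0 * v for v in t3]
    t5 = [math.tanh(v) for v in t4]
    t6 = [1.0 + v for v in t5]
    t7 = [0.5 * v for v in t6]
    return [a * b for a, b in zip(x, t7)]     # reads x (3rd time), writes y


def gelu_fused(x):
    """One pass: each element is read once and written once."""
    return [0.5 * v * (1.0 + math.tanh(C0 * (v + C1 * v ** 3))) for v in x]


xs = [-3.0, -1.0, 0.0, 0.5, 2.0]
y_unfused = gelu_unfused(xs)
y_fused = gelu_fused(xs)
```

The arithmetic is identical in both versions; the fused one simply keeps every intermediate value in a local variable (a register, on a GPU) instead of a tensor in memory.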

Outcomes

After applying all of these optimizations, we conducted tests of Stable Diffusion 1.5 (image resolution 512×512, 20 iterations) on high-end mobile devices. Running Stable Diffusion with our GPU-accelerated ML inference model uses 2,093MB for the weights and 84MB for the intermediate tensors. With the latest high-end smartphones, Stable Diffusion can be run in under 12 seconds.

Conclusion

Performing on-device ML inference of large models has proven to be a substantial challenge, encompassing limitations in model file size, extensive runtime memory requirements, and protracted inference latency. By recognizing memory bandwidth usage as the primary bottleneck, we directed our efforts towards optimizing memory bandwidth utilization and striking a delicate balance between ALU efficiency and memory efficiency. As a result, we achieved state-of-the-art inference latency for large diffusion models. You can learn more about this work in the paper.

Acknowledgments

We'd like to thank Yu-Hui Chen, Jiuqiang Tang, Frank Barchard, Yang Zhao, Joe Zou, Khanh LeViet, Chuo-Ling Chang, Andrei Kulik, Lu Wang, and Matthias Grundmann.