At NVIDIA GTC, Microsoft and NVIDIA are announcing new offerings across a breadth of solution areas, from leading AI infrastructure to new platform integrations and industry breakthroughs. Today’s news expands our long-standing collaboration, which has paved the way for revolutionary AI innovations that customers are now bringing to fruition.

Microsoft and NVIDIA collaborate on Grace Blackwell 200 Superchip for next-generation AI models

Microsoft and NVIDIA are bringing the power of the NVIDIA Grace Blackwell 200 (GB200) Superchip to Microsoft Azure. The GB200 is a new processor designed specifically for large-scale generative AI workloads, data processing, and high-performance workloads. It features up to 16 TB/s of memory bandwidth and up to an estimated 45 times the inference performance on trillion-parameter models relative to the previous Hopper generation of servers.

Microsoft has worked closely with NVIDIA to ensure their GPUs, including the GB200, can handle the latest large language models (LLMs) trained on Azure AI infrastructure. These models require enormous amounts of data and compute to train and run, and the GB200 will enable Microsoft to help customers scale these resources to new levels of performance and accuracy.

Microsoft will also deploy an end-to-end AI compute fabric with the recently announced NVIDIA Quantum-X800 InfiniBand networking platform. By taking advantage of its in-network computing capabilities with SHARPv4, and its added support for FP8 for leading-edge AI techniques, NVIDIA Quantum-X800 extends the GB200’s parallel computing tasks to massive GPU scale.

Azure will be one of the first cloud platforms to deliver GB200-based instances

Microsoft has committed to bringing GB200-based instances to Azure to support customers and Microsoft’s AI services. The new Azure instances, based on the latest GB200 and NVIDIA Quantum-X800 InfiniBand networking, will help accelerate the generation of frontier and foundational models for natural language processing, computer vision, speech recognition, and more. Azure customers will be able to use the GB200 Superchip to create and deploy state-of-the-art AI solutions that can handle massive amounts of data and complexity, while accelerating time to market.

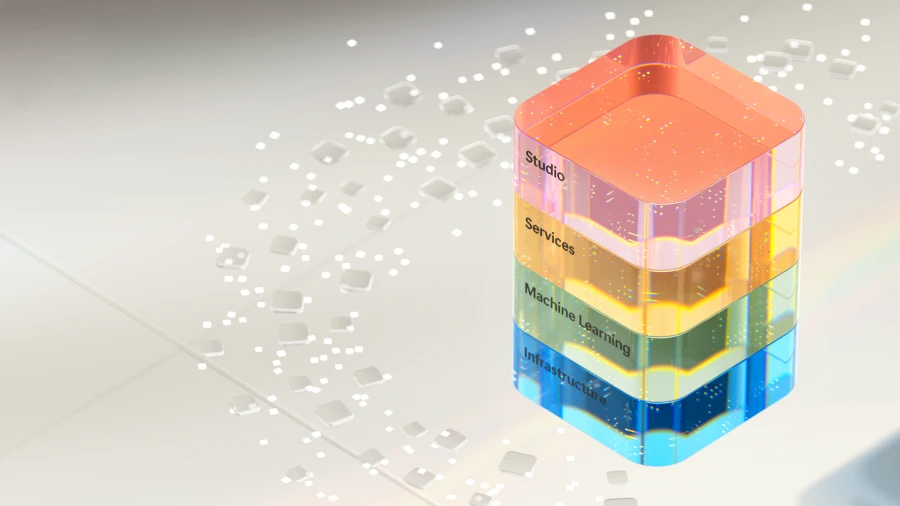

Azure also offers a range of services to help customers optimize their AI workloads, such as Microsoft Azure CycleCloud, Azure Machine Learning, Microsoft Azure AI Studio, Microsoft Azure Synapse Analytics, and Microsoft Azure Arc. These services provide customers with an end-to-end AI platform that can handle data ingestion, processing, training, inference, and deployment across hybrid and multi-cloud environments.

Delivering on the promise of AI to customers worldwide

With a powerful foundation of Azure AI infrastructure that uses the latest NVIDIA GPUs, Microsoft is infusing AI across every layer of the technology stack, helping customers drive new benefits and productivity gains. Now, with more than 53,000 Azure AI customers, Microsoft provides access to the best selection of foundation and open-source models, including both LLMs and small language models (SLMs), all integrated deeply with infrastructure, data, and tools on Azure.

The recently announced partnership with Mistral AI is also a great example of how Microsoft is giving leading AI innovators access to Azure’s cutting-edge AI infrastructure to accelerate the development and deployment of next-generation LLMs. Azure’s growing AI model catalogue offers more than 1,600 models, letting customers choose from the latest LLMs and SLMs, including those from OpenAI, Mistral AI, Meta, Hugging Face, Deci AI, NVIDIA, and Microsoft Research. Azure customers can choose the best model for their use case.

“We’re thrilled to embark on this partnership with Microsoft. With Azure’s cutting-edge AI infrastructure, we’re reaching a new milestone in our expansion, propelling our innovative research and practical applications to new customers everywhere. Together, we’re committed to driving impactful progress in the AI industry and delivering unparalleled value to our customers and partners globally.”

Arthur Mensch, Chief Executive Officer, Mistral AI

General availability of Azure NC H100 v5 VM series, optimized for generative inferencing and high-performance computing

Microsoft also announced the general availability of the Azure NC H100 v5 VM series, designed for mid-range training, inferencing, and high-performance computing (HPC) simulations; it offers high performance and efficiency.

As generative AI applications expand at incredible speed, the fundamental language models that power them will also expand to include both SLMs and LLMs. In addition, artificial narrow intelligence (ANI) models, which focus on more precise predictions rather than the creation of novel data, will continue to evolve and broaden their use cases. Their applications include tasks such as image classification, object detection, and broader natural language processing.

Using the robust capabilities and scalability of Azure, we offer computational tools that empower organizations of all sizes, regardless of their resources. Azure NC H100 v5 VMs are yet another computational tool, made generally available today, that will do just that.

The Azure NC H100 v5 VM series is based on the NVIDIA H100 NVL platform and offers two classes of VMs, with one or two NVIDIA H100 94 GB PCIe Tensor Core GPUs connected by NVLink with 600 GB/s of bandwidth. This VM series supports PCIe Gen5, which provides the highest communication speeds (128 GB/s bi-directional) between the host processor and the GPU. This reduces the latency and overhead of data transfer and enables faster and more scalable AI and HPC applications.
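
To put the bandwidth figures quoted above in perspective, the following minimal sketch estimates ideal transfer times for a payload the size of one GPU's memory. It is a back-of-the-envelope illustration, not a benchmark: the function name `ideal_transfer_seconds` is hypothetical, the even split of the 128 GB/s bi-directional PCIe figure into 64 GB/s per direction is an assumption, and real transfers will be slower than these peak-rate numbers.

```python
# Figures quoted in the text (peak rates, not measured throughput).
PCIE_GEN5_BIDIRECTIONAL_GB_PER_S = 128.0
NVLINK_GB_PER_S = 600.0
GPU_MEMORY_GB = 94.0


def ideal_transfer_seconds(size_gb: float, bandwidth_gb_per_s: float) -> float:
    """Lower bound on transfer time: payload size divided by peak bandwidth."""
    if bandwidth_gb_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return size_gb / bandwidth_gb_per_s


# Assumption: the bi-directional figure splits evenly, so one direction
# (host to GPU) gets half of it.
host_to_gpu_gb_per_s = PCIE_GEN5_BIDIRECTIONAL_GB_PER_S / 2

pcie_seconds = ideal_transfer_seconds(GPU_MEMORY_GB, host_to_gpu_gb_per_s)
nvlink_seconds = ideal_transfer_seconds(GPU_MEMORY_GB, NVLINK_GB_PER_S)

print(f"Host to GPU over PCIe Gen5: at least {pcie_seconds:.2f} s")
print(f"GPU to GPU over NVLink:     at least {nvlink_seconds:.2f} s")
```

Under these assumptions, filling one GPU's memory from the host takes on the order of a second or more, while moving the same amount of data between the two GPUs over NVLink is roughly an order of magnitude faster.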

The VM series also supports NVIDIA multi-instance GPU (MIG) technology, enabling customers to partition each GPU into up to seven instances, providing flexibility and scalability for diverse AI workloads. This VM series offers up to 80 Gbps of network bandwidth and up to 8 TB of local NVMe storage on full-node VM sizes.
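
As a quick illustration of what the MIG limit means per VM, the sketch below multiplies the GPU count by the seven-instance ceiling quoted above. The helper `max_mig_instances` is a hypothetical name used only for this example; it does not query any Azure or NVIDIA API, and the actual number of usable instances depends on the MIG profile chosen.

```python
# From the text: one or two GPUs per VM, up to seven MIG instances per GPU.
MAX_MIG_INSTANCES_PER_GPU = 7
SUPPORTED_GPU_COUNTS = (1, 2)


def max_mig_instances(gpu_count: int) -> int:
    """Upper bound on MIG instances for a VM with the given number of GPUs."""
    if gpu_count not in SUPPORTED_GPU_COUNTS:
        raise ValueError("the VM series is described with one or two GPUs")
    return gpu_count * MAX_MIG_INSTANCES_PER_GPU


for gpus in SUPPORTED_GPU_COUNTS:
    print(f"{gpus} GPU(s): up to {max_mig_instances(gpus)} MIG instances")
```

A two-GPU VM can therefore be split into as many as 14 isolated GPU instances, which is useful when many small inference workloads share one machine.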

These VMs are ideal for training models, running inferencing tasks, and developing cutting-edge applications. Learn more about the Azure NC H100 v5-series.

“Snorkel AI is proud to partner with Microsoft to help organizations rapidly and cost-effectively harness the power of data and AI. Azure AI infrastructure delivers the performance our most demanding ML workloads require plus simplified deployment and streamlined management features our researchers love. With the new Azure NC H100 v5 VM series powered by NVIDIA H100 NVL GPUs, we’re excited to continue to accelerate iterative data development for enterprises and OSS users alike.”

Paroma Varma, Co-Founder and Head of Research, Snorkel AI

Microsoft and NVIDIA deliver breakthroughs for healthcare and life sciences

Microsoft is expanding its collaboration with NVIDIA to help transform the healthcare and life sciences industry through the integration of cloud, AI, and supercomputing.

By using the global scale, security, and advanced computing capabilities of Azure and Azure AI, along with NVIDIA’s DGX Cloud and NVIDIA Clara suite, healthcare providers, pharmaceutical and biotechnology companies, and medical device developers can now rapidly accelerate innovation across the entire clinical research to care delivery value chain for the benefit of patients worldwide.

New Omniverse APIs enable customers across industries to embed massive graphics and visualization capabilities

Today, NVIDIA’s Omniverse platform for developing 3D applications becomes available as a set of APIs running on Microsoft Azure, enabling customers to embed advanced graphics and visualization capabilities into existing software applications from Microsoft and partner ISVs.

Built on OpenUSD, a universal data interchange, NVIDIA Omniverse Cloud APIs on Azure do the integration work for customers, giving them seamless physically based rendering capabilities on the front end. Demonstrating the value of these APIs, Microsoft and NVIDIA have been working with Rockwell Automation and Hexagon to show how the physical and digital worlds can be combined for increased productivity and efficiency.

Microsoft and NVIDIA envision deeper integration of NVIDIA DGX Cloud with Microsoft Fabric

The two companies are also collaborating to bring NVIDIA DGX Cloud compute and Microsoft Fabric together to power customers’ most demanding data workloads. This means that NVIDIA’s workload-specific optimized runtimes, LLMs, and machine learning will work seamlessly with Fabric.

The NVIDIA DGX Cloud and Fabric integration includes extending the capabilities of Fabric by bringing in NVIDIA DGX Cloud’s large language model customization to address data-intensive use cases like digital twins and weather forecasting, with Fabric OneLake as the underlying data storage. The integration will also provide DGX Cloud as an option for customers to accelerate their Fabric data science and data engineering workloads.

Accelerating innovation in the era of AI

For years, Microsoft and NVIDIA have collaborated, from hardware to systems to VMs, to build new and innovative AI-enabled solutions to address complex challenges in the cloud. Microsoft will continue to expand and enhance its global infrastructure with the most cutting-edge technology in every layer of the stack, delivering improved performance and scalability for cloud and AI workloads and empowering customers to achieve more across industries and domains.

Join Microsoft at the NVIDIA GTC AI Conference, March 18 through 21, at booth #1108 and attend a session to learn more about solutions on Azure and NVIDIA.