Large deep learning models are becoming the workhorse of a variety of critical machine learning (ML) tasks. However, it has been shown that without any protection it is plausible for bad actors to attack a variety of models, across modalities, to reveal information from individual training examples. As such, it’s essential to protect against this sort of information leakage.

Differential privacy (DP) provides formal protection against an attacker who aims to extract information about the training data. The most popular method for DP training in deep learning is differentially private stochastic gradient descent (DP-SGD). The core recipe implements a common theme in DP: “fuzzing” an algorithm’s outputs with noise to obscure the contributions of any individual input.
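To make the “fuzzing” recipe concrete, here is a minimal NumPy sketch of a single DP-SGD update. It assumes the per-example gradients have already been computed and flattened into a `(batch, dim)` array. The function name and its parameters (`clip_norm`, `noise_multiplier`) are illustrative choices rather than the API of any particular library, and the sketch does no privacy accounting, so it does not by itself tell you which ε you end up with.

```python
import numpy as np

def dp_sgd_step(params, per_example_grads, clip_norm, noise_multiplier, lr, rng):
    """One illustrative DP-SGD update on a flat parameter vector.

    params:            (dim,) current parameters
    per_example_grads: (batch, dim) one gradient per training example
    """
    batch_size = per_example_grads.shape[0]
    # L2 norm of each example's gradient, shape (batch,).
    norms = np.linalg.norm(per_example_grads, axis=1)
    # Rescale so that no single example contributes more than clip_norm.
    scale = np.minimum(1.0, clip_norm / np.maximum(norms, 1e-12))
    clipped = per_example_grads * scale[:, None]
    # Gaussian noise with standard deviation proportional to the clipping norm,
    # added to the sum of clipped gradients.
    noise = rng.normal(0.0, noise_multiplier * clip_norm, size=params.shape)
    noisy_mean = (clipped.sum(axis=0) + noise) / batch_size
    return params - lr * noisy_mean
```

Clipping bounds how much any one example can move the update, and the noise is scaled to that bound, which is what obscures individual contributions.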

In practice, DP training can be very expensive or even ineffective for very large models. Not only does the computational cost typically increase when requiring privacy guarantees, but the noise also increases proportionally. Given these challenges, there has recently been much interest in developing methods that enable efficient DP training. The goal is to develop simple and practical techniques for producing high-quality large-scale private models.

The ImageNet classification benchmark is an effective test bed for this goal because 1) it is a challenging task even in the non-private setting, requiring sufficiently large models to successfully classify large numbers of varied images, and 2) it is a public, open-source dataset, which other researchers can access and use for collaboration. With this approach, researchers may simulate a practical situation where a large model is required to train on private data with DP guarantees.

To that end, today we discuss improvements we’ve made in training high-utility, large-scale private models. First, in “Large-Scale Transfer Learning for Differentially Private Image Classification”, we share strong results on the challenging task of image classification on the ImageNet-1k dataset with DP constraints. We show that with a combination of large-scale transfer learning and carefully chosen hyperparameters it is indeed possible to significantly reduce the gap between private and non-private performance even on challenging tasks and high-dimensional models. Then in “Differentially Private Image Classification from Features”, we further show that privately fine-tuning just the last layer of a pre-trained model with more advanced optimization algorithms improves the performance even further, leading to new state-of-the-art DP results across a variety of popular image classification benchmarks, including ImageNet-1k. To encourage further development in this direction and enable other researchers to verify our findings, we are also releasing the associated source code.

Transfer learning and differential privacy

The main idea behind transfer learning is to reuse the knowledge gained from solving one problem and then apply it to a related problem. This is especially useful when there is limited or low-quality data available for the target problem, as it allows us to leverage the knowledge gained from a larger and more diverse public dataset.

In the context of DP, transfer learning has emerged as a promising technique to improve the accuracy of private models, by leveraging knowledge learned from pre-training tasks. For example, if a model has already been trained on a large public dataset for a similar privacy-sensitive task, it can be fine-tuned on a smaller and more specific dataset for the target DP task. More specifically, one first pre-trains a model on a large dataset with no privacy concerns, and then privately fine-tunes the model on the sensitive dataset. In our work, we improve the effectiveness of DP transfer learning and illustrate it by simulating private training on publicly available datasets, namely ImageNet-1k, CIFAR-100, and CIFAR-10.

Better pre-training improves DP performance

To start exploring how transfer learning can be effective for differentially private image classification tasks, we carefully examined hyperparameters affecting DP performance. Surprisingly, we found that with carefully chosen hyperparameters (e.g., initializing the last layer to zero and choosing large batch sizes), privately fine-tuning just the last layer of a pre-trained model yields significant improvements over the baseline. Training just the last layer also significantly improves the cost-utility ratio of training a high-quality image classification model with DP.
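One reason last-layer training is cheap under DP can be sketched in a few lines. The sketch assumes the last layer is a bias-free linear softmax classifier trained with cross-entropy on features from a frozen backbone; that is our simplification for illustration, not a description of the released code. Under that assumption, the per-example gradient is the outer product of the feature vector and the residual (predicted probabilities minus the one-hot label). Its norm therefore factorizes, and per-example clipping needs no `(batch, d, k)` gradient tensor. The function names below are hypothetical.

```python
import numpy as np

def softmax(logits):
    # Numerically stable row-wise softmax.
    z = logits - logits.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def clipped_last_layer_grad_sum(W, feats, labels_onehot, clip_norm):
    """Sum of per-example clipped cross-entropy gradients w.r.t. W.

    W: (d, k) last-layer weights, feats: (n, d) frozen features,
    labels_onehot: (n, k). Returns an array of shape (d, k).
    """
    resid = softmax(feats @ W) - labels_onehot  # (n, k)
    # Gradient for example i is outer(feats[i], resid[i]); its Frobenius
    # norm equals ||feats[i]|| * ||resid[i]||.
    norms = np.linalg.norm(feats, axis=1) * np.linalg.norm(resid, axis=1)
    scale = np.minimum(1.0, clip_norm / np.maximum(norms, 1e-12))
    # Summing the rescaled outer products is a single matrix product.
    return feats.T @ (resid * scale[:, None])
```

Starting from a zero-initialized layer, `W = np.zeros((d, k))`, the returned sum would then receive Gaussian noise and be averaged over the (large) batch, as in DP-SGD.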

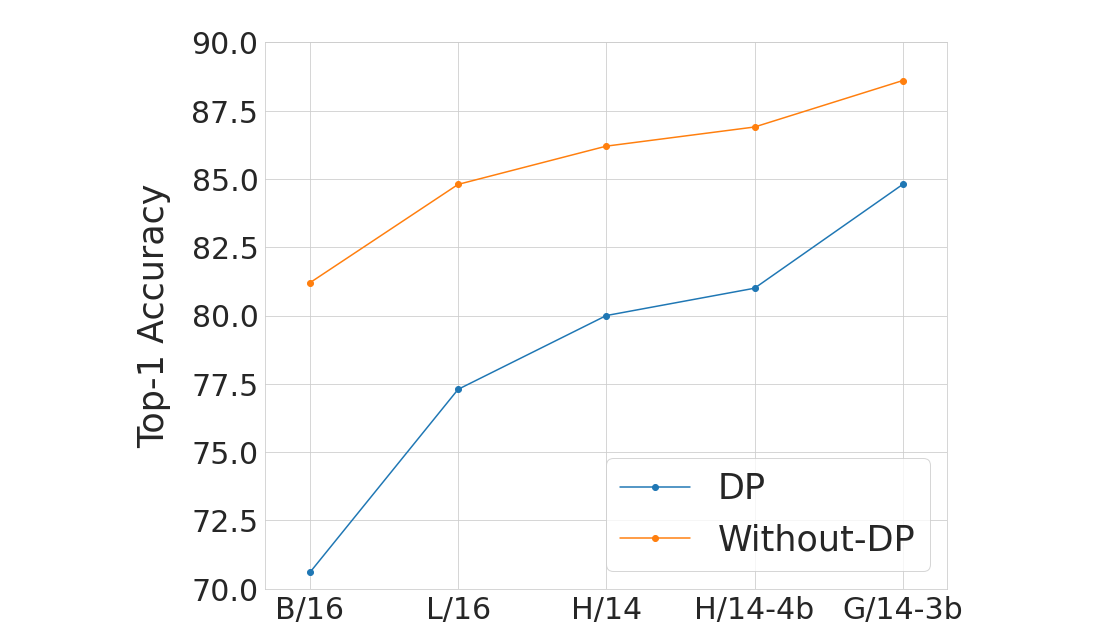

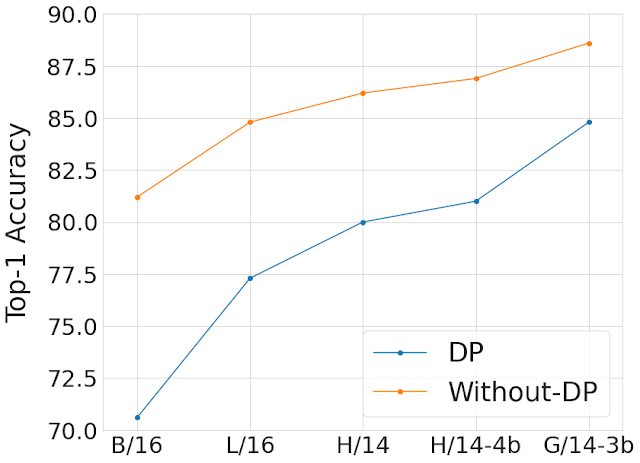

As shown below, we compare the performance on ImageNet of the best hyperparameter recommendations both with and without privacy and across a variety of model and pre-training dataset sizes. We find that scaling the model and using a larger pre-training dataset decreases the gap in accuracy coming from the addition of the privacy guarantee. Typically, privacy guarantees of a system are characterized by a positive parameter ε, with smaller ε corresponding to better privacy. In the following figure, we use the privacy guarantee of ε = 10.

Comparing our best models with and without privacy on ImageNet across model and pre-training dataset sizes. The X-axis shows the different Vision Transformer models we used for this study in ascending order of model size from left to right. We used JFT-300M to pretrain B/16, L/16 and H/14 models, JFT-4B (a larger version of JFT-3B) to pretrain H/14-4b and JFT-3B to pretrain G/14-3b. We do this in order to study the effectiveness of jointly scaling the model and pre-training dataset (JFT-3B or 4B). The Y-axis shows the Top-1 accuracy on the ImageNet-1k test set once the model is finetuned (in the private or non-private way) with the ImageNet-1k training set. We consistently see that the scaling of the model and the pre-training dataset size decreases the gap in accuracy coming from the addition of the privacy guarantee of ε = 10.

Better optimizers improve DP performance

Somewhat surprisingly, we found that privately training just the last layer of a pre-trained model provides the best utility with DP. While past studies [1, 2, 3] largely relied on using first-order differentially private training algorithms like DP-SGD for training large models, in the specific case of privately learning just the last layer from features, we observe that the computational burden is often low enough to allow for more sophisticated optimization schemes, including second-order methods (e.g., Newton or Quasi-Newton methods), which can be more accurate but also more computationally expensive.

In “Differentially Private Image Classification from Features”, we systematically explore the effect of loss functions and optimization algorithms. We find that while the commonly used logistic regression performs better than linear regression in the non-private setting, the situation is reversed in the private setting: least-squares linear regression is much more effective than logistic regression from both a privacy and computational standpoint for the typical range of ε values ([1, 10]), and even more effective for stricter values (ε < 1).
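For intuition on why least squares is convenient to privatize, the sketch below uses noisy sufficient statistics, one standard way to make linear regression differentially private. It is not necessarily the exact algorithm used in the paper. The function name, the feature-clipping step, and the two noise scales are assumptions made for illustration. In a real system, a privacy accountant would have to derive the noise scales from the target ε, the clipping norm, and a bound on the norm of each target row (for example, one-hot labels).

```python
import numpy as np

def dp_least_squares(feats, targets, feat_clip, noise_std_cov, noise_std_xy, ridge, rng):
    """Illustrative least squares from noisy sufficient statistics.

    feats: (n, d) features, targets: (n, k) regression targets.
    Returns weights of shape (d, k). Performs no privacy accounting.
    """
    # Clip each feature vector to L2 norm at most feat_clip so that one
    # example has a bounded effect on the statistics below.
    norms = np.linalg.norm(feats, axis=1, keepdims=True)
    x = feats * np.minimum(1.0, feat_clip / np.maximum(norms, 1e-12))
    d = x.shape[1]
    # Symmetric Gaussian noise: sample the upper triangle and mirror it.
    upper = np.triu(rng.normal(0.0, noise_std_cov, size=(d, d)))
    sym_noise = upper + upper.T - np.diag(np.diag(upper))
    noisy_cov = x.T @ x + sym_noise
    noisy_xy = x.T @ targets + rng.normal(0.0, noise_std_xy, size=(d, targets.shape[1]))
    # The data is touched only through the two noisy statistics; the ridge
    # term keeps the linear system well conditioned despite the noise.
    return np.linalg.solve(noisy_cov + ridge * np.eye(d), noisy_xy)
```

The data is accessed once to form two statistics, so noise is added once rather than at every iteration of an iterative solver.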

We further explore using DP Newton’s method to solve logistic regression. We find that this is still outperformed by DP linear regression in the high privacy regime. Indeed, Newton’s method involves computing a Hessian (a matrix that captures second-order information), and making this matrix differentially private requires adding far more noise in logistic regression than in linear regression, which has a highly structured Hessian.

Building on this observation, we introduce a method that we call differentially private SGD with feature covariance (DP-FC), where we simply replace the Hessian in logistic regression with privatized feature covariance. Since feature covariance only depends on the inputs (and neither on model parameters nor class labels), we are able to share it across classes and training iterations, thus greatly reducing the amount of noise that needs to be added to protect it. This allows us to combine the benefits of using logistic regression with the efficient privacy protection of linear regression, leading to an improved privacy-utility trade-off.
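The following NumPy sketch reflects our reading of the description above. It is not the released implementation. The feature covariance is privatized once, and every subsequent update preconditions an already-privatized gradient (for instance, one produced by clipping and noising as in DP-SGD) with that same matrix. The function names, the clipping of features, and the `ridge` regularizer are illustrative assumptions, and the noise scale would have to come from a privacy accountant.

```python
import numpy as np

def private_feature_covariance(feats, feat_clip, noise_std, ridge, rng):
    """Noisy, regularized feature covariance of shape (d, d), computed once."""
    n, d = feats.shape
    # Bound each example's contribution by clipping its feature norm.
    norms = np.linalg.norm(feats, axis=1, keepdims=True)
    x = feats * np.minimum(1.0, feat_clip / np.maximum(norms, 1e-12))
    # Symmetric Gaussian noise: sample the upper triangle and mirror it.
    upper = np.triu(rng.normal(0.0, noise_std, size=(d, d)))
    sym_noise = upper + upper.T - np.diag(np.diag(upper))
    return (x.T @ x + sym_noise) / n + ridge * np.eye(d)

def dp_fc_step(W, noisy_grad, cov, lr):
    """Precondition a privatized gradient (d, k) with the shared covariance.

    The same cov is applied to every class column of the gradient and can be
    reused at every iteration, because it does not depend on W or the labels.
    """
    return W - lr * np.linalg.solve(cov, noisy_grad)
```

Because the covariance depends only on the inputs, the privacy cost of protecting it is paid once, in contrast to a Newton step whose Hessian changes with the parameters and must be privatized repeatedly.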

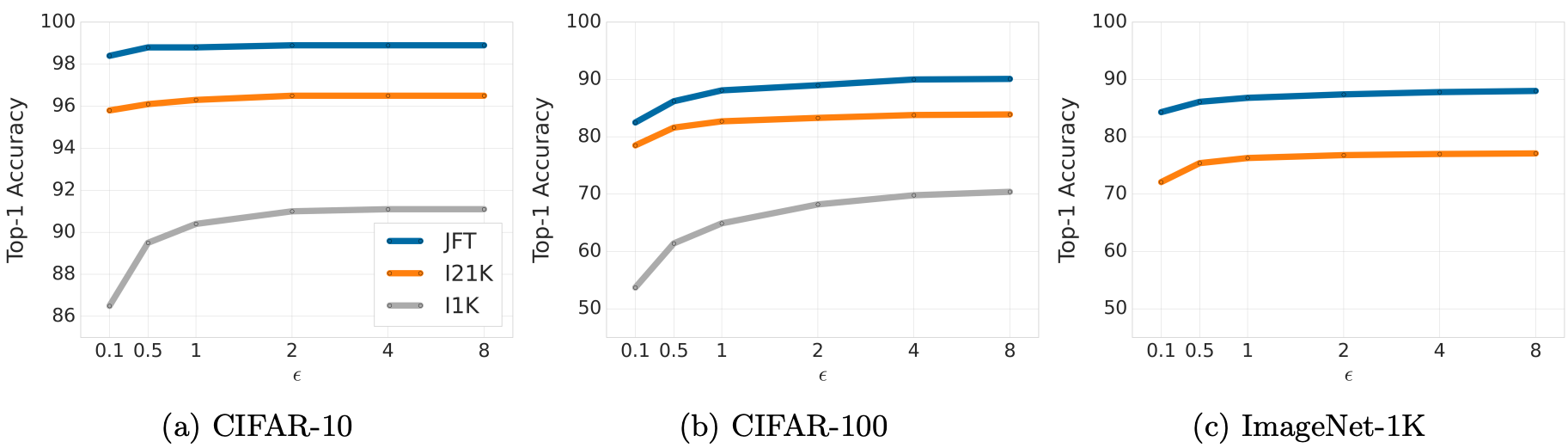

With DP-FC, we surpass previous state-of-the-art results considerably on three private image classification benchmarks, namely ImageNet-1k, CIFAR-10 and CIFAR-100, just by performing DP fine-tuning on features extracted from a powerful pre-trained model.

Comparison of top-1 accuracies (Y-axis) with private fine-tuning using the DP-FC method on all three datasets across a range of ε (X-axis). We observe that better pre-training helps even more for lower values of ε (stricter privacy guarantee).

Conclusion

We demonstrate that large-scale pre-training on a public dataset is an effective strategy for obtaining good results when fine-tuned privately. Moreover, scaling both model size and pre-training dataset improves performance of the private model and narrows the quality gap compared to the non-private model. We further provide strategies to effectively use transfer learning for DP. Note that this work has several limitations worth considering: most importantly, our approach relies on the availability of a large and trustworthy public dataset, which can be challenging to source and vet. We hope that our work is useful for training large models with meaningful privacy guarantees!

Acknowledgements

In addition to the authors of this blogpost, this research was conducted by Abhradeep Thakurta, Alex Kurakin and Ashok Cutkosky. We are also grateful to the developers of Jax, Flax, and Scenic libraries. Specifically, we would like to thank Mostafa Dehghani for helping us with Scenic and high-performance vision baselines and Lucas Beyer for help with deduping the JFT data. We are also grateful to Li Zhang, Emil Praun, Andreas Terzis, Shuang Song, Pierre Tholoniat, Roxana Geambasu, and Steve Chien for stimulating discussions on differential privacy throughout the project. Additionally, we thank anonymous reviewers, Gautam Kamath and Varun Kanade for helpful feedback throughout the publication process. Finally, we would like to thank John Anderson and Corinna Cortes from Google Research, Borja Balle, Soham De, Sam Smith, Leonard Berrada, and Jamie Hayes from DeepMind for generous feedback.