It’s no secret that high-performing ML models need to be supplied with large volumes of quality training data. Without the data, there’s hardly a way an organization can leverage AI and self-reflect to become more efficient and make better-informed decisions. The process of becoming a data-driven (and especially AI-driven) company is known to be difficult.

28% of companies that adopt AI cite lack of access to data as a reason behind failed deployments. – KDNuggets

Additionally, there are issues with errors and biases within existing data. They are somewhat easier to mitigate through various processing techniques, but they still reduce the availability of trustworthy training data. It’s a serious problem, but the lack of training data is a much harder one, and solving it may involve many initiatives depending on the organization’s maturity level.

Besides data availability and biases, there’s another aspect that is important to mention: data privacy. Both companies and individuals are consistently choosing to prevent the data they own from being used for model training by third parties. The lack of transparency and legislation around this topic is well known and has already become a catalyst for lawmaking across the globe.

However, in the broad landscape of data-oriented technologies, there’s one that aims to solve the above-mentioned problems from a somewhat unexpected angle. This technology is synthetic data. Synthetic data is produced by simulations with various models and scenarios, or by sampling techniques applied to existing data sources, to create new data that isn’t sourced from the real world.

Synthetic data can replace or augment existing data and be used for training ML models, mitigating bias, and protecting sensitive or regulated data. It’s cheap and can be produced on demand in large quantities according to specified statistics.

Synthetic datasets keep the statistical properties of the original data used as a source: the techniques that generate the data obtain a joint distribution that can also be customized if necessary. As a result, synthetic datasets are similar to their real sources but don’t contain any sensitive information. This is especially useful in highly regulated industries such as banking and healthcare, where it can take months for an employee to get access to sensitive data because of strict internal procedures. Using synthetic data in this environment for testing, training AI models, detecting fraud, and other purposes simplifies the workflow and reduces the time required for development.

All this also applies to training large language models, since they are trained mostly on public data (e.g. OpenAI ChatGPT was trained on Wikipedia, parts of the web index, and other public datasets). We think that synthetic data is a real differentiator going forward, since there is a limit (both physical and legal) to the public data available for training models, and human-created data is expensive, especially if it requires experts.

Generating Synthetic Data

There are several methods of producing synthetic data. They can be subdivided into roughly three major categories, each with its advantages and disadvantages:

- Stochastic process modeling. Stochastic models are relatively simple to build and don’t require a lot of computing resources, and since the modeling focuses on the statistical distribution, the row-level data contains no sensitive information. The simplest example of stochastic process modeling is generating a column of numbers based on some statistical parameters such as minimum, maximum, and average values, assuming the output data follows some known distribution (e.g. uniform or Gaussian).

- Rule-based data generation. Rule-based systems improve on statistical modeling by including data that is generated according to rules defined by humans. Rules can be of varying complexity, but high-quality data requires complex rules and tuning by human experts, which limits the scalability of the method.

- Deep learning generative models. By applying deep learning generative models, it’s possible to train a model with real data and use that model to generate synthetic data. Deep learning models are able to capture more complex relationships and joint distributions of datasets, but at a higher complexity and compute cost.
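The simplest stochastic case described above can be sketched in a few lines. This is a minimal illustration, not a production generator: the function name `synthetic_column`, the `stdev` parameter, and the example values are assumptions introduced here, and out-of-range draws are simply rejected, which shifts the resulting mean slightly away from the requested one.

```python
import random
import statistics


def synthetic_column(n, minimum, maximum, mean, stdev, seed=None):
    """Draw n values from Gaussian(mean, stdev), keeping only those in [minimum, maximum]."""
    rng = random.Random(seed)
    values = []
    while len(values) < n:
        x = rng.gauss(mean, stdev)
        if minimum <= x <= maximum:  # reject draws outside the allowed range
            values.append(x)
    return values


# Hypothetical "age" column: no real record is ever read, only summary statistics.
ages = synthetic_column(n=1000, minimum=18, maximum=90, mean=42, stdev=12, seed=0)
print("mean:", round(statistics.mean(ages), 1))
print("range:", round(min(ages), 1), "to", round(max(ages), 1))
```

Because each column is drawn independently, a generator like this cannot reproduce correlations between columns, which is one reason the rule-based and deep learning approaches exist.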

It’s also worth mentioning that current LLMs can be used to generate synthetic data. This doesn’t require extensive setup and can be very useful on a smaller scale (or when done just on a user request), as an LLM can provide both structured and unstructured data, but on a larger scale it may be more expensive than specialized methods. Let’s not forget that state-of-the-art models are prone to hallucinations, so the statistical properties of synthetic data that comes from an LLM should be checked before using it in scenarios where the distribution matters.
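One possible way to run such a check on a numeric column is the two-sample Kolmogorov-Smirnov statistic, which measures the largest gap between two empirical distributions. The sketch below is an assumption-laden illustration: the samples are simulated stand-ins rather than real LLM output, and the 0.1 cut-off is a hypothetical threshold that would need tuning for a real use case.

```python
import bisect
import random


def ks_statistic(sample_a, sample_b):
    """Two-sample KS statistic: the largest gap between the two empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    gap = 0.0
    for x in a + b:
        cdf_a = bisect.bisect_right(a, x) / len(a)
        cdf_b = bisect.bisect_right(b, x) / len(b)
        gap = max(gap, abs(cdf_a - cdf_b))
    return gap


rng = random.Random(0)
real = [rng.gauss(50, 10) for _ in range(2000)]       # reference column
faithful = [rng.gauss(50, 10) for _ in range(2000)]   # stand-in for good synthetic data
drifted = [rng.gauss(58, 10) for _ in range(2000)]    # stand-in for a generator that drifted

THRESHOLD = 0.1  # hypothetical acceptance threshold
for name, sample in (("faithful", faithful), ("drifted", drifted)):
    d = ks_statistic(real, sample)
    print(name, round(d, 3), "ok" if d < THRESHOLD else "distribution mismatch")
```

A check like this covers one column at a time; relationships between columns would need additional comparisons, such as correlations.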

Model validation is an interesting example of how the use of synthetic data requires a change in approach to ML model training.

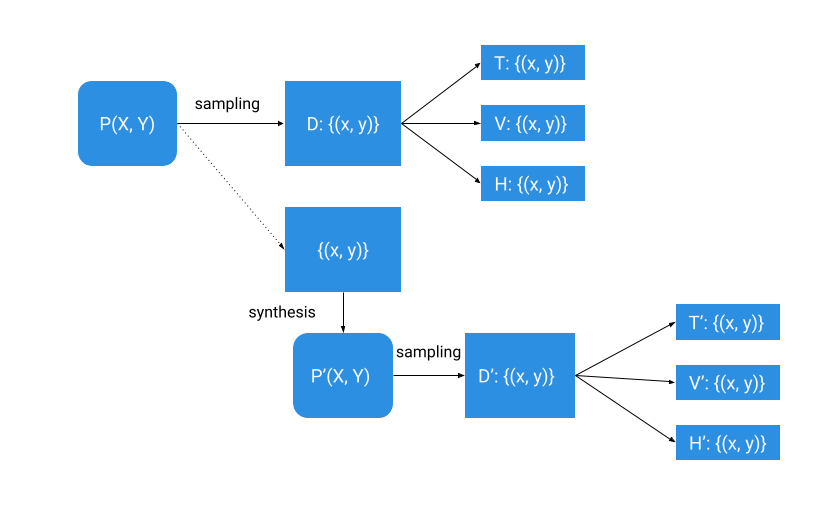

In traditional data modeling, we have a dataset (D) that is a set of observations drawn from some unknown real-world process (P) that we want to model. We divide that dataset into a training subset (T), a validation subset (V), and a holdout (H), and use them to train a model and estimate its accuracy.

To do synthetic data modeling, we synthesize a distribution P’ from our initial dataset and sample it to get the synthetic dataset (D’). We subdivide the synthetic dataset into a training subset (T’), a validation subset (V’), and a holdout (H’), just as we subdivided the real dataset. We want the distribution P’ to be as close to P as is practical, since we want the accuracy of a model trained on synthetic data to be as close as possible to the accuracy of a model trained on real data (of course, all synthetic data guarantees should still hold).

When possible, synthetic data modeling should also use the validation (V) and holdout (H) data from the original source data (D) for model evaluation, to ensure that the model trained on synthetic data (T’) performs well on real-world data.
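The evaluation scheme described above can be sketched end to end with a toy example. Everything here is an assumption made for illustration: the process P is simulated, P’ is a simple per-class Gaussian fit, the "model" is a single threshold, and the validation subsets are omitted for brevity. A generator this simple also provides no privacy guarantees on its own.

```python
import random
import statistics

rng = random.Random(0)


def sample_real(n):
    """Stand-in for the unknown process P: one feature x and a binary label y."""
    rows = []
    for _ in range(n):
        y = int(rng.random() < 0.5)
        rows.append((rng.gauss(2.0 if y else 0.0, 1.0), y))
    return rows


def fit_generator(rows):
    """Estimate P' as a class prior plus one Gaussian per class."""
    params = {}
    for label in (0, 1):
        xs = [x for x, y in rows if y == label]
        params[label] = (len(xs) / len(rows), statistics.mean(xs), statistics.stdev(xs))
    return params


def sample_synthetic(params, n):
    """Draw n (x, y) rows from the fitted P'."""
    rows = []
    for _ in range(n):
        y = int(rng.random() < params[1][0])
        _, mu, sigma = params[y]
        rows.append((rng.gauss(mu, sigma), y))
    return rows


def train(rows):
    """Toy classifier: a threshold halfway between the two class means."""
    m0 = statistics.mean(x for x, y in rows if y == 0)
    m1 = statistics.mean(x for x, y in rows if y == 1)
    return (m0 + m1) / 2


def accuracy(threshold, rows):
    return sum(int(x > threshold) == y for x, y in rows) / len(rows)


D = sample_real(3000)
T, H = D[:2000], D[2000:]                      # real training subset and real holdout
D_syn = sample_synthetic(fit_generator(T), 3000)
T_syn, H_syn = D_syn[:2000], D_syn[2000:]      # synthetic training subset and holdout

model_real, model_syn = train(T), train(T_syn)
print("trained on T,  tested on H: ", accuracy(model_real, H))
print("trained on T', tested on H':", accuracy(model_syn, H_syn))
print("trained on T', tested on H: ", accuracy(model_syn, H))
```

The last line is the number that matters most: if the model trained on T’ scores well on H’ but poorly on the real holdout H, the synthesized distribution P’ has drifted from P.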

So, a good synthetic data solution should allow us to model P(X, Y) as accurately as possible while upholding all privacy guarantees.

Although the wider use of synthetic data for model training requires changing and improving existing approaches, in our opinion it’s a promising technology for addressing current problems with data ownership and privacy. Its proper use will lead to more accurate models that improve and automate the decision-making process while significantly reducing the risks associated with the use of private data.

About the author

Nick Volynets is a senior data engineer working with the office of the CTO, where he enjoys being at the heart of DataRobot innovation. He is interested in large-scale machine learning and passionate about AI and its impact.