Large machine learning (ML) models are ubiquitous in modern applications, from spam filters to recommender systems and virtual assistants. These models achieve remarkable performance partly because of the abundance of available training data. However, these data can sometimes contain private information, including personally identifiable information, copyrighted material, etc. Therefore, protecting the privacy of the training data is critical to practical, applied ML.

Differential Privacy (DP) is one of the most widely accepted technologies that allows reasoning about data anonymization in a formal way. In the context of an ML model, DP can guarantee that each individual user’s contribution will not result in a significantly different model. A model’s privacy guarantees are characterized by a tuple (ε, δ), where smaller values of both represent stronger DP guarantees and better privacy.
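For reference, the standard definition behind the (ε, δ) tuple can be written as below. The symbols are notation introduced here for illustration and do not appear in the text above: M is the randomized training mechanism, D and D′ are any two neighboring datasets, and S is any set of possible outputs (e.g., sets of trained models).

```latex
\Pr[\,M(D) \in S\,] \;\le\; e^{\varepsilon} \, \Pr[\,M(D') \in S\,] + \delta
```

Read this way, a small ε means the distribution over trained models barely changes when one unit of data is changed, and δ bounds the probability with which that multiplicative bound is allowed to fail.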

While there are successful examples of protecting training data using DP, obtaining good utility with differentially private ML (DP-ML) techniques can be challenging. First, there are inherent privacy/computation tradeoffs that may limit a model’s utility. Further, DP-ML models often require architectural and hyperparameter tuning, and guidelines on how to do this effectively are limited or difficult to find. Finally, non-rigorous privacy reporting makes it challenging to compare and choose the best DP methods.

In “How to DP-fy ML: A Practical Guide to Machine Learning with Differential Privacy”, to appear in the Journal of Artificial Intelligence Research, we discuss the current state of DP-ML research. We provide an overview of common techniques for obtaining DP-ML models and discuss research, engineering challenges, mitigation techniques and current open questions. We will present tutorials based on this work at ICML 2023 and KDD 2023.

DP-ML methods

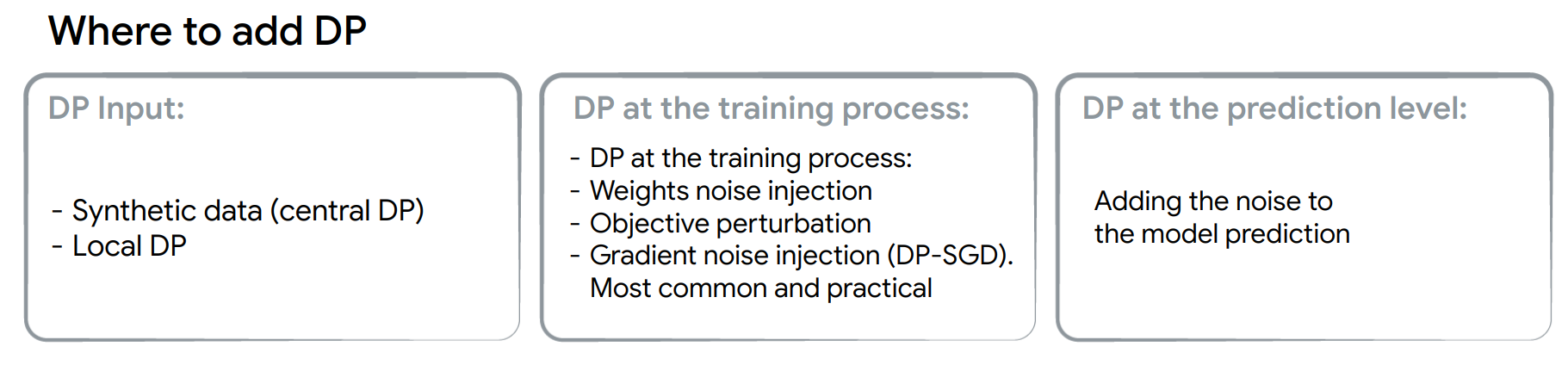

DP can be introduced during the ML model development process in three places: (1) at the input data level, (2) during training, or (3) at inference. Each option provides privacy protections at different stages of the ML development process, with the weakest being when DP is introduced at the prediction level and the strongest being when it is introduced at the input level. Making the input data differentially private means that any model trained on this data will also have DP guarantees. When DP is introduced during training, only that particular model has DP guarantees. DP at the prediction level means that only the model’s predictions are protected, but the model itself is not differentially private.

Figure: The task of introducing DP gets progressively easier from left to right.

DP is commonly introduced during training (DP-training). Gradient noise injection methods, like DP-SGD or DP-FTRL, and their extensions are currently the most practical methods for achieving DP guarantees in complex models like large deep neural networks.

DP-SGD builds on the stochastic gradient descent (SGD) optimizer with two modifications: (1) per-example gradients are clipped to a certain norm to limit sensitivity (the influence of an individual example on the overall model), which is a slow and computationally intensive process, and (2) a noisy gradient update is formed by taking aggregated gradients and adding noise that is proportional to the sensitivity and the strength of the privacy guarantees.
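The two modifications can be sketched in a few lines of plain Python. This is a minimal illustration on a flat parameter vector, not a production implementation: the function names, the `noise_multiplier` parameter, and the choice of Gaussian noise with standard deviation `noise_multiplier * clip_norm` are assumptions made for the example, and no privacy accounting is performed.

```python
import math
import random


def clip_by_l2_norm(grad, clip_norm):
    """Scale grad so that its L2 norm is at most clip_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, clip_norm / norm) if norm > 0.0 else 1.0
    return [g * scale for g in grad]


def dp_sgd_step(params, per_example_grads, clip_norm, noise_multiplier,
                learning_rate, rng):
    """One illustrative DP-SGD update on a flat parameter vector."""
    batch_size = len(per_example_grads)
    # (1) Clip each example's gradient to bound its influence (sensitivity).
    clipped = [clip_by_l2_norm(g, clip_norm) for g in per_example_grads]
    # Aggregate the clipped gradients coordinate-wise.
    summed = [sum(column) for column in zip(*clipped)]
    # (2) Add Gaussian noise scaled to the sensitivity (clip_norm), then average.
    stddev = noise_multiplier * clip_norm
    noisy_mean = [(s + rng.gauss(0.0, stddev)) / batch_size for s in summed]
    # Ordinary SGD step using the noisy gradient.
    return [p - learning_rate * g for p, g in zip(params, noisy_mean)]


rng = random.Random(0)
params = [0.0, 0.0]
grads = [[3.0, 4.0], [0.3, 0.4]]  # L2 norms 5.0 and 0.5
new_params = dp_sgd_step(params, grads, clip_norm=1.0, noise_multiplier=1.1,
                         learning_rate=0.1, rng=rng)
```

In the usage lines, only the first gradient exceeds the clipping norm and is scaled down; the second passes through unchanged. The loop over examples in step (1) is where the extra cost of per-example clipping comes from.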

Existing DP-training challenges

Gradient noise injection methods usually exhibit: (1) loss of utility, (2) slower training, and (3) an increased memory footprint.

Loss of utility:

The best method for reducing the utility drop is to use more computation. Using larger batch sizes and/or more iterations is one of the most prominent and practical ways of improving a model’s performance. Hyperparameter tuning is also extremely important but often overlooked. The utility of DP-trained models is sensitive to the total amount of noise added, which depends on hyperparameters like the clipping norm and batch size. Additionally, other hyperparameters like the learning rate should be re-tuned to account for noisy gradient updates.

Another option is to obtain more data or use public data with a similar distribution. This can be done by leveraging publicly available checkpoints, like ResNet or T5, and fine-tuning them using private data.

Slower training:

Most gradient noise injection methods limit sensitivity by clipping per-example gradients, which considerably slows down backpropagation. This can be addressed by choosing a DP framework that implements per-example clipping efficiently.

Increased memory footprint:

DP-training requires significant memory for computing and storing per-example gradients. Additionally, it requires significantly larger batches to obtain better utility. Increasing the computation resources (e.g., the number and size of accelerators) is the simplest solution for the extra memory requirements. Alternatively, several works advocate for gradient accumulation, where smaller batches are combined to simulate a larger batch before the gradient update is applied. Further, some algorithms (e.g., ghost clipping, which builds on earlier work) avoid instantiating per-example gradients altogether.
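Gradient accumulation under DP can be sketched as follows. This is an illustrative outline in plain Python, assuming Gaussian noise with standard deviation `noise_multiplier * clip_norm`; the function and parameter names are hypothetical. The point it shows is that clipping happens per example inside each small batch, while noise is added only once for the simulated large batch.

```python
import math
import random


def clipped_sum(per_example_grads, clip_norm):
    """Clip each example's gradient to clip_norm and sum the results."""
    total = [0.0] * len(per_example_grads[0])
    for grad in per_example_grads:
        norm = math.sqrt(sum(g * g for g in grad))
        scale = min(1.0, clip_norm / norm) if norm > 0.0 else 1.0
        total = [t + g * scale for t, g in zip(total, grad)]
    return total


def accumulate_noisy_gradient(micro_batches, clip_norm, noise_multiplier, rng):
    """Simulate one large DP batch from several smaller micro-batches."""
    dim = len(micro_batches[0][0])
    accumulated = [0.0] * dim
    num_examples = 0
    for micro_batch in micro_batches:
        # Only one micro-batch of per-example gradients is processed at a time.
        partial = clipped_sum(micro_batch, clip_norm)
        accumulated = [a + p for a, p in zip(accumulated, partial)]
        num_examples += len(micro_batch)
    # Noise is added once per simulated batch, not once per micro-batch.
    stddev = noise_multiplier * clip_norm
    return [(a + rng.gauss(0.0, stddev)) / num_examples for a in accumulated]


rng = random.Random(0)
micro_batches = [[[3.0, 4.0]], [[0.3, 0.4]]]  # two micro-batches of one example
noisy_grad = accumulate_noisy_gradient(micro_batches, clip_norm=1.0,
                                       noise_multiplier=1.1, rng=rng)
```

With the noise switched off, the accumulated result matches what a single batch containing all examples would produce, which is the sense in which the smaller batches simulate the larger one.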

Best practices

The following best practices can help attain rigorous DP guarantees with the best possible model utility.

Choosing the right privacy unit:

First, we should be clear about a model’s privacy guarantees. This is encoded by selecting the “privacy unit,” which represents the neighboring dataset concept (i.e., datasets where only one row is different). Example-level protection is a common choice in the research literature, but it may not be ideal for user-generated data if individual users contributed multiple records to the training dataset. For such a case, user-level protection might be more appropriate. For text and sequence data, the choice of the unit is harder, since in most applications individual training examples are not aligned with the semantic meaning embedded in the text.

Choosing privacy guarantees:

We outline three broad tiers of privacy guarantees and encourage practitioners to choose the lowest possible tier below:

- Tier 1 (strong privacy guarantees): Choosing ε ≤ 1 provides a strong privacy guarantee, but frequently results in a significant utility drop for large models and thus may only be feasible for smaller models.

- Tier 2 (reasonable privacy guarantees): We advocate for the currently undocumented, but still widely used, goal for DP-ML models to achieve an ε ≤ 10.

- Tier 3 (weak privacy guarantees): Any finite ε is an improvement over a model with no formal privacy guarantee. However, for ε > 10, the DP guarantee alone cannot be taken as sufficient evidence of data anonymization, and additional measures (e.g., empirical privacy auditing) may be necessary to ensure the model protects user data.
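As a small illustration, the tier thresholds above can be encoded in a helper like the one below. The function name and the choice to return `None` for an infinite ε (i.e., no formal guarantee) are assumptions made for this sketch.

```python
import math


def privacy_tier(epsilon):
    """Map an epsilon value to the tier (1, 2, or 3) described above."""
    if math.isnan(epsilon) or epsilon <= 0:
        raise ValueError("epsilon must be a positive number")
    if epsilon <= 1:
        return 1  # strong privacy guarantees
    if epsilon <= 10:
        return 2  # reasonable privacy guarantees
    if math.isinf(epsilon):
        return None  # no formal privacy guarantee at all
    return 3  # weak guarantees; consider additional measures such as auditing


tiers = [privacy_tier(eps) for eps in (0.5, 8.0, 50.0)]
```

A check like this only classifies the reported ε; it says nothing about whether the accounting behind that ε is sound.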

Hyperparameter tuning:

Choosing hyperparameters requires optimizing over three inter-dependent objectives: 1) model utility, 2) privacy cost ε, and 3) computation cost. Common strategies take two of the three as constraints and focus on optimizing the third. We provide methods that maximize utility with a limited number of trials, e.g., tuning with privacy and computation constraints.

Reporting privacy guarantees:

Many works on DP for ML report only ε and possibly δ values for their training procedure. However, we believe that practitioners should provide a comprehensive overview of model guarantees that includes:

- DP setting: Are the results assuming central DP with a trusted service provider, local DP, or some other setting?

- Instantiating the DP definition:

  - Data accesses covered: Whether the DP guarantee applies (only) to a single training run or also covers hyperparameter tuning, etc.

  - Final mechanism’s output: What is covered by the privacy guarantees and can be released publicly (e.g., model checkpoints, the full sequence of privatized gradients, etc.)

  - Unit of privacy: The chosen “privacy unit” (example-level, user-level, etc.)

  - Adjacency definition for DP “neighboring” datasets: A description of how neighboring datasets differ (e.g., add-or-remove, replace-one, zero-out-one).

- Privacy accounting details: Accounting details (e.g., composition and amplification) are important for proper comparison between methods and should include:

  - Type of accounting used, e.g., Rényi DP-based accounting, PLD accounting, etc.

  - Accounting assumptions and whether they hold (e.g., Poisson sampling was assumed for privacy amplification but data shuffling was used in training).

  - Formal DP statement for the model and tuning process (e.g., the specific (ε, δ)-DP or ρ-zCDP values).

- Transparency and verifiability: When possible, complete open-source code using standard DP libraries for the key mechanism implementation and accounting components.
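One way to make the checklist above harder to skip is to record it as structured data alongside the model. The sketch below is purely illustrative: the `PrivacyReport` class, its field names, and the example values in the comments are hypothetical and are not part of any DP library.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class PrivacyReport:
    """Hypothetical record mirroring the reporting checklist above."""
    dp_setting: Optional[str] = None              # e.g. "central", "local"
    data_accesses_covered: Optional[str] = None   # e.g. "single training run"
    released_outputs: Optional[str] = None        # e.g. "final checkpoint"
    privacy_unit: Optional[str] = None            # e.g. "example", "user"
    adjacency: Optional[str] = None               # e.g. "add-or-remove"
    accounting_type: Optional[str] = None         # e.g. "RDP", "PLD"
    accounting_assumptions: Optional[str] = None  # e.g. "Poisson sampling"
    formal_statement: Optional[str] = None        # the (ε, δ)-DP or ρ-zCDP values
    source_code: Optional[str] = None             # optional pointer to the code


def missing_items(report):
    """Return the names of required checklist items that are still empty."""
    optional = {"source_code"}  # open-sourcing is requested only "when possible"
    return [f.name for f in fields(report)
            if f.name not in optional and getattr(report, f.name) is None]


report = PrivacyReport(dp_setting="central", privacy_unit="user")
todo = missing_items(report)
```

Here `todo` lists every required item that has not yet been filled in, so an incomplete report is easy to spot before results are published.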

Paying attention to all the components used:

Usually, DP-training is a straightforward application of DP-SGD or other algorithms. However, some components or losses that are often used in ML models (e.g., contrastive losses, graph neural network layers) should be examined to ensure privacy guarantees are not violated.

Open questions

While DP-ML is an active research area, we highlight the broad areas where there is room for improvement.

Developing better accounting methods:

Our current understanding of DP-training (ε, δ) guarantees relies on a number of techniques, like Rényi DP composition and privacy amplification. We believe that better accounting methods for existing algorithms will demonstrate that DP guarantees for ML models are actually better than expected.

Developing better algorithms:

The computational burden of using gradient noise injection for DP-training comes from the need to use larger batches and limit per-example sensitivity. Developing methods that can use smaller batches, or identifying other ways (apart from per-example clipping) to limit the sensitivity, would be a breakthrough for DP-ML.

Better optimization techniques:

Directly applying the same DP-SGD recipe is believed to be suboptimal for adaptive optimizers because the noise added to privatize the gradient may accumulate in the learning rate computation. Designing theoretically grounded DP adaptive optimizers remains an active research topic. Another potential direction is to better understand the surface of the DP loss, since for standard (non-DP) ML models flatter regions have been shown to generalize better.

Identifying architectures that are more robust to noise:

There is an opportunity to better understand whether we need to adjust the architecture of an existing model when introducing DP.

Conclusion

Our survey paper summarizes the current research related to making ML models DP, and provides practical tips on how to achieve the best privacy-utility trade-offs. Our hope is that this work will serve as a reference point for practitioners who want to effectively apply DP to complex ML models.

Acknowledgements

We thank Hussein Hazimeh, Zheng Xu, Carson Denison, H. Brendan McMahan, Sergei Vassilvitskii, Steve Chien, Abhradeep Thakurta, Badih Ghazi, and Chiyuan Zhang for their help preparing this blog post, the paper, and the tutorial content. Thanks to John Guilyard for creating the graphics in this post, and Ravi Kumar for comments.