MySQL and PostgreSQL are widely used as transactional databases. When it comes to supporting high-scale and analytical use cases, you may often have to tune and configure these databases, which leads to a higher operational burden. Some challenges when doing analytics on MySQL and Postgres include:

- running a large number of concurrent queries/users

- working with large data sizes

- needing to define and manage tons of indexes.

There are workarounds for these problems, but they add even more operational burden:

- scaling to larger servers

- creating more read replicas

- moving to a NoSQL database

Rockset recently announced support for MySQL and PostgreSQL that lets you easily power real-time, complex analytical queries. This mitigates the need to tune these relational databases to handle heavy analytical workloads.

By integrating MySQL and PostgreSQL with Rockset, you can easily scale out to handle demanding analytics.

Preface

I’ll cover the main highlights of what we did in the Twitch stream in this blog. If you’re unsure about certain parts of the instructions, definitely check out the video down below.

Set Up MySQL Server

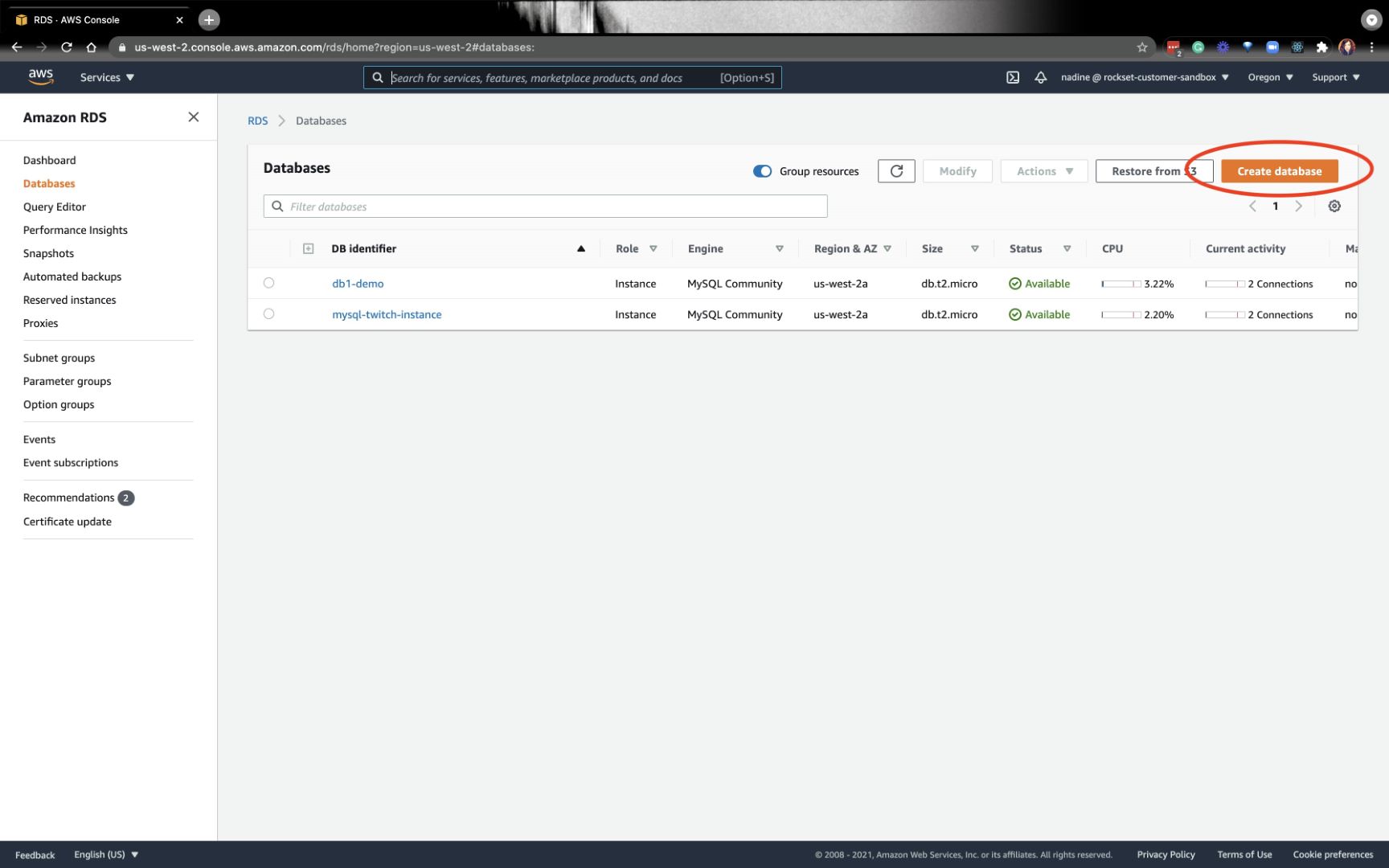

In our stream, we created a MySQL server on Amazon RDS. You can click on Create database in the upper right-hand corner and work through the instructions:

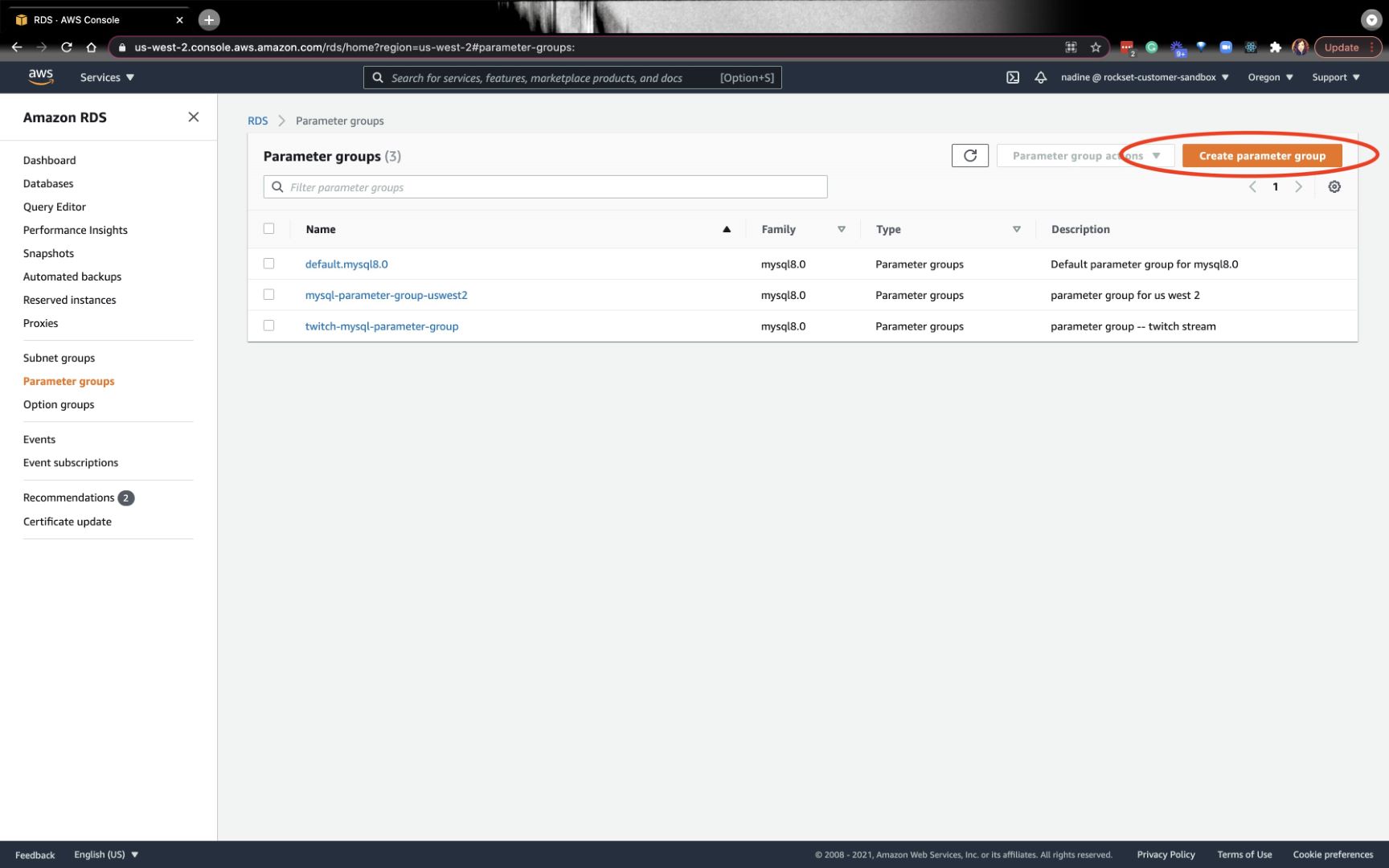

Now, we’ll create the parameter group. By creating a parameter group, we’ll be able to change binlog_format to Row so that Rockset is updated dynamically as the data changes in MySQL. Click on Create parameter group in the upper right-hand corner:

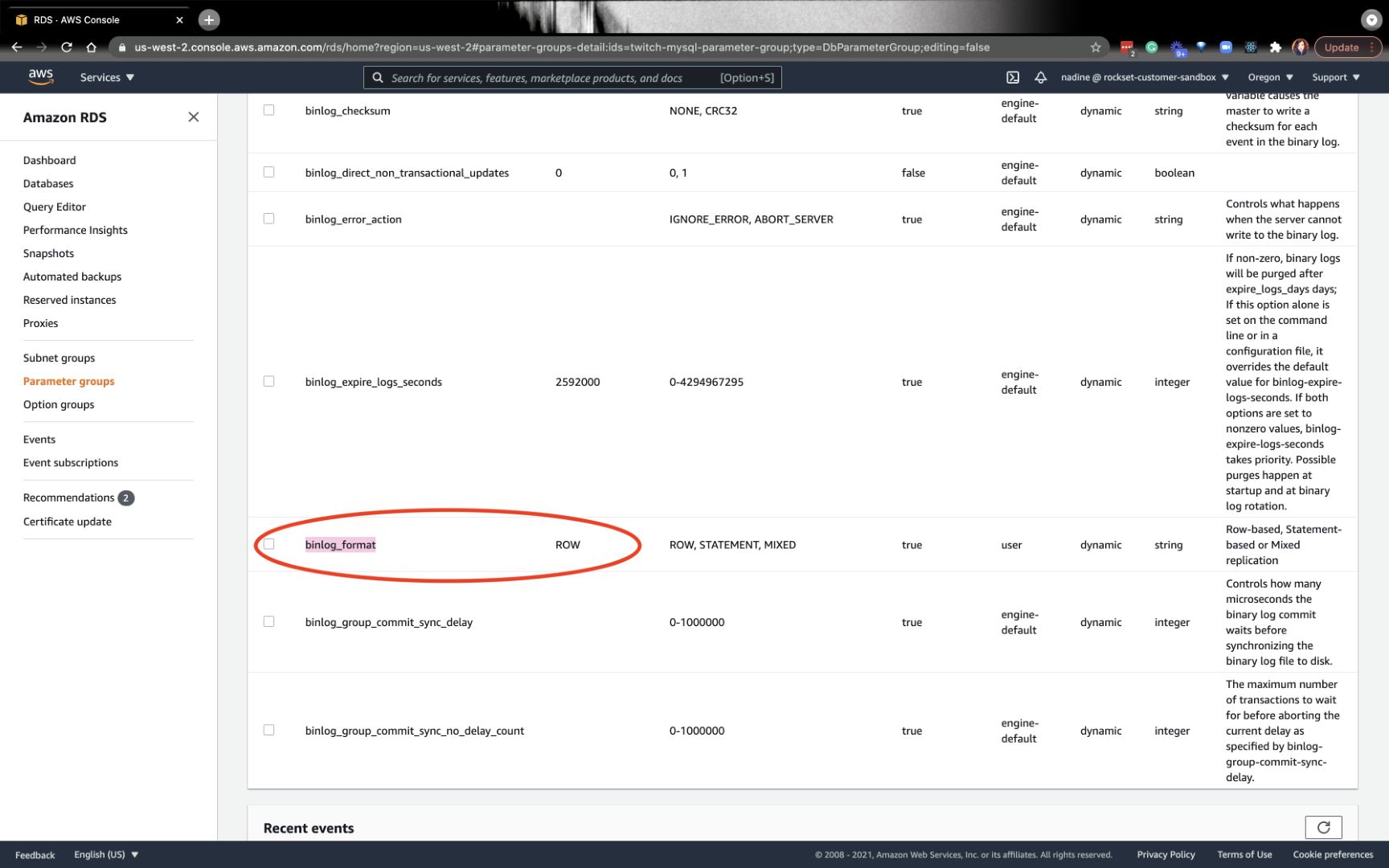

After you create your parameter group, click on the newly created group and change binlog_format to Row:

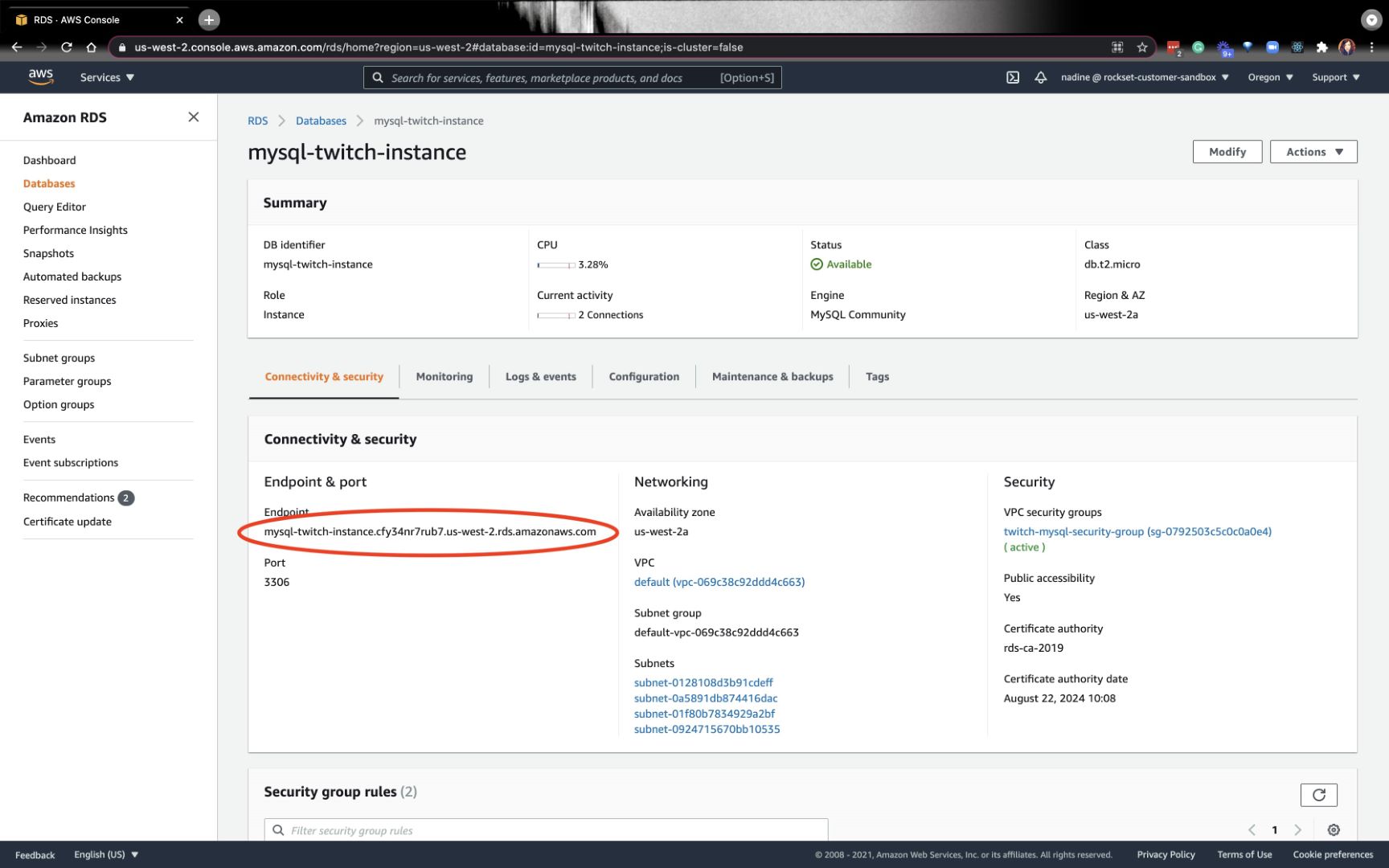

After this is set, you want to access the MySQL server from the CLI so you can set the permissions. You can grab the endpoint from the Databases tab on the left, under the Connectivity & security settings:

In the terminal, type:

$ mysql -u admin -p -h Endpoint

It’ll prompt you for the password.

Once inside, you want to type this:

mysql> CREATE USER 'aws-dms' IDENTIFIED BY 'youRpassword';

mysql> GRANT SELECT ON *.* TO 'aws-dms';

mysql> GRANT REPLICATION SLAVE ON *.* TO 'aws-dms';

mysql> GRANT REPLICATION CLIENT ON *.* TO 'aws-dms';

This is probably a good point to create a table and insert some data. I did this part a little later in the stream, but you can just as easily do it here.

mysql> use yourDatabaseName

mysql> CREATE TABLE MyGuests ( id INT(6) UNSIGNED AUTO_INCREMENT PRIMARY KEY, firstname VARCHAR(30) NOT NULL, lastname VARCHAR(30) NOT NULL, email VARCHAR(50), reg_date TIMESTAMP DEFAULT CURRENT_TIMESTAMP ON UPDATE CURRENT_TIMESTAMP );

mysql> INSERT INTO MyGuests (firstname, lastname, email)

-> VALUES ('John', 'Doe', 'john@example.com');

mysql> show tables;

That’s a wrap for this section. We set up a MySQL server, created a table, and inserted some data.

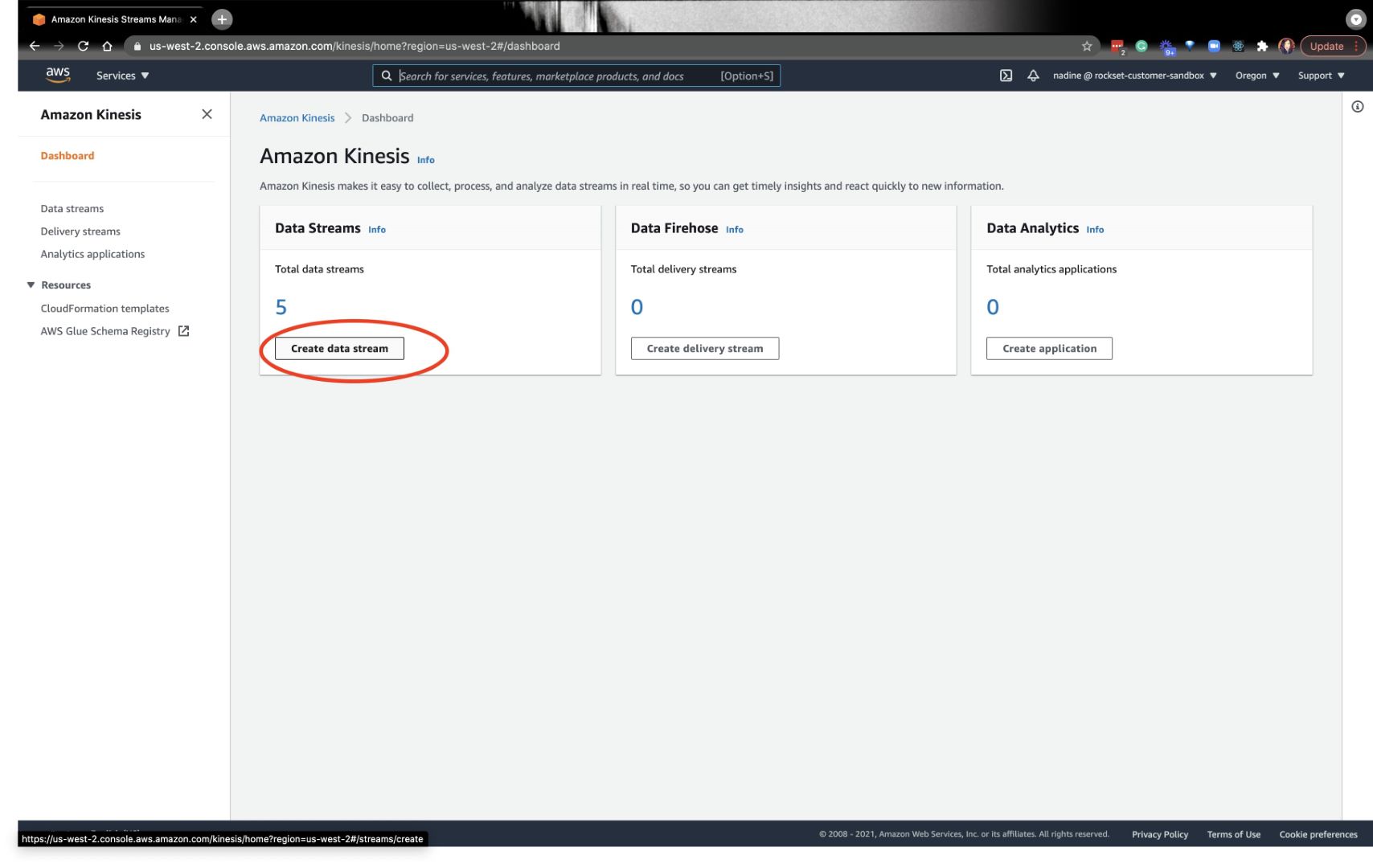

Create a Target AWS Kinesis Stream

Each table on MySQL will map to one Kinesis Data Stream. The Kinesis stream is the destination that DMS uses as the target of a migration task. Every MySQL table we wish to connect to Rockset will require its own migration task.

To summarize: each MySQL table will require a Kinesis Data Stream and a migration task.
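The one-stream-and-one-task-per-table rule can be sketched as a small planning helper. This is only an illustration: the `plan_replication` function and the `-stream` / `-task` naming scheme are hypothetical conventions, not something AWS or Rockset requires.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TableReplication:
    table: str
    stream_name: str  # Kinesis Data Stream that receives this table's changes
    task_name: str    # DMS migration task that writes to that stream


def plan_replication(database: str, tables: list[str]) -> list[TableReplication]:
    """Pair every MySQL table with its own stream and its own migration task."""
    plan = []
    for table in tables:
        # Hypothetical naming scheme: lowercase, hyphen-separated.
        base = f"{database}-{table}".lower().replace("_", "-")
        plan.append(TableReplication(table, f"{base}-stream", f"{base}-task"))
    return plan


if __name__ == "__main__":
    for entry in plan_replication("yourDatabaseName", ["MyGuests"]):
        print(entry)
```

Listing the pairs up front makes it easier to see how many streams and tasks you will need to create before you start clicking through the consoles.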

Go ahead and navigate to Kinesis Data Streams and create a stream:

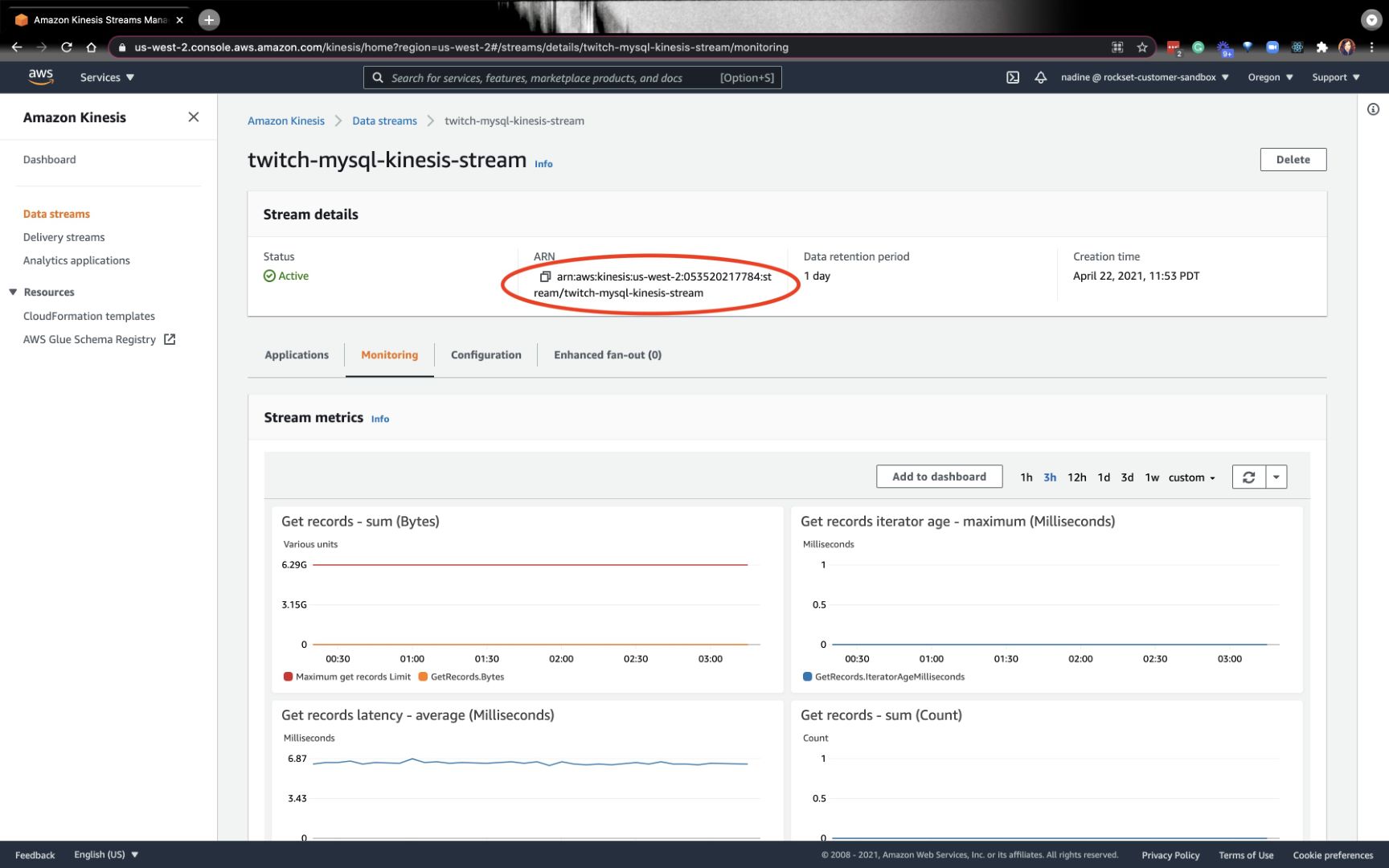

Make sure you note down the ARN for your stream, since we’re going to need it later:

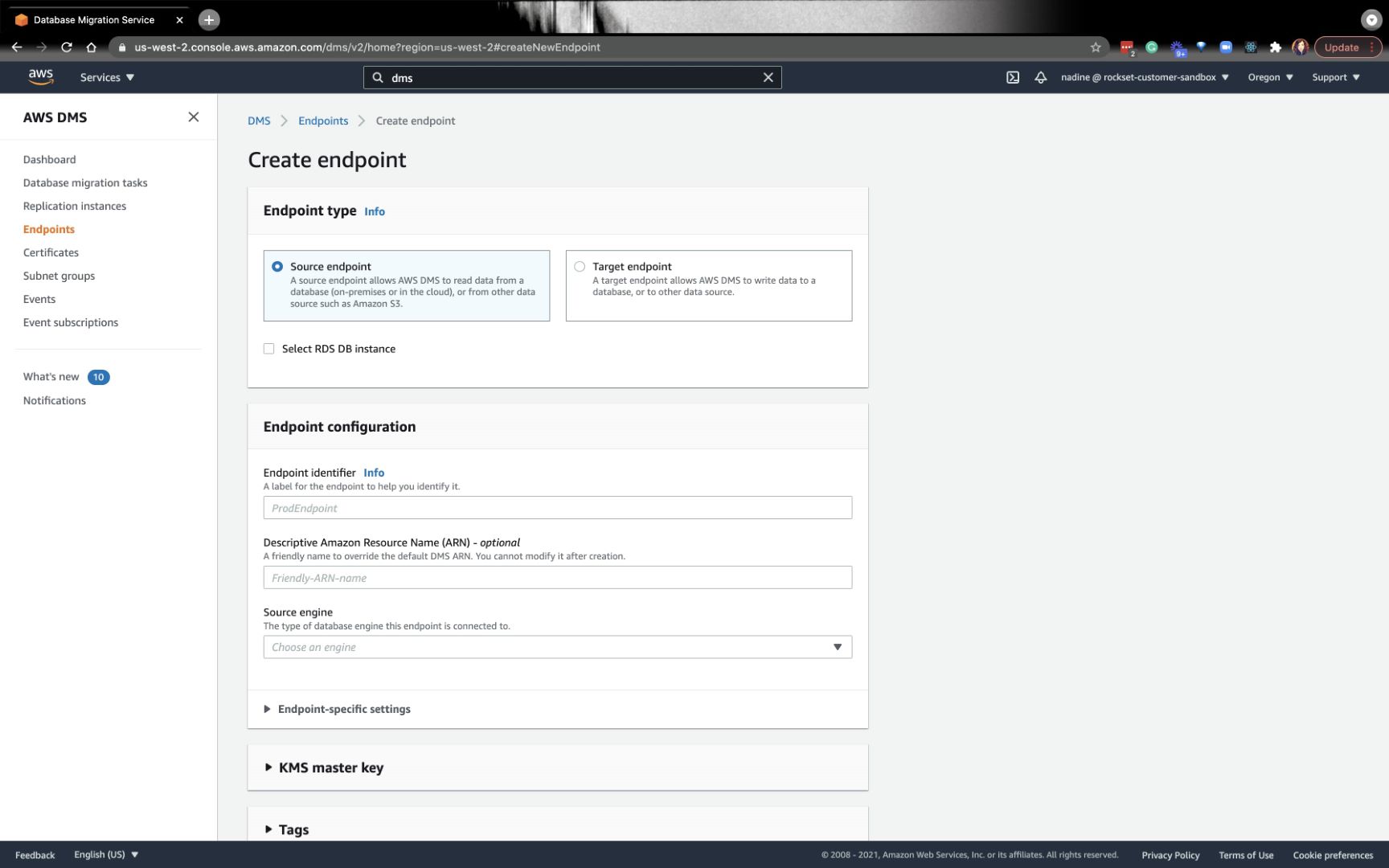

Create an AWS DMS Replication Instance and Migration Task

Now, we’re going to navigate to AWS DMS (Database Migration Service). The first thing we’re going to do is create a source endpoint and a target endpoint:

When you create the target endpoint, you’ll need the ARN of the Kinesis stream that we created earlier. You’ll also need the Service access role ARN. If you don’t have this role, you’ll need to create it in the AWS IAM console. You can find more details about how to create this role in the stream shown down below.
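As a rough sketch of what such a service access role involves, the snippet below builds two JSON documents: a trust policy that lets the DMS service assume the role, and a permissions policy that lets it write to one Kinesis stream. The function names and the example ARN are placeholders, and the action list is the minimal set I would assume for a Kinesis target, so check it against the stream or the docs before relying on it.

```python
import json


def dms_trust_policy() -> dict:
    """Trust policy allowing the AWS DMS service to assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"Service": "dms.amazonaws.com"},
            "Action": "sts:AssumeRole",
        }],
    }


def kinesis_write_policy(stream_arn: str) -> dict:
    """Permissions policy scoped to a single Kinesis stream (assumed action set)."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Action": [
                "kinesis:DescribeStream",
                "kinesis:PutRecord",
                "kinesis:PutRecords",
            ],
            "Resource": stream_arn,
        }],
    }


if __name__ == "__main__":
    # Placeholder ARN; use the one you saved when creating the stream.
    arn = "arn:aws:kinesis:us-west-2:123456789012:stream/yourStreamName"
    print(json.dumps(dms_trust_policy(), indent=2))
    print(json.dumps(kinesis_write_policy(arn), indent=2))
```

You can paste the printed JSON into the IAM console when creating the role, then copy the resulting role ARN into the target endpoint form.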

From there, we’ll create the replication instance and the data migration tasks. You can follow this part of the instructions in our docs or watch the stream.
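A DMS migration task takes a JSON table-mapping document that says which tables to replicate. Since each task here covers a single table, a minimal sketch is one selection rule naming that table. The `table_mapping` helper is hypothetical, the schema and table names are the example values used earlier, and the docs may call for additional task settings that are not shown here.

```python
import json


def table_mapping(schema: str, table: str) -> str:
    """Return a DMS table-mapping document that includes exactly one table."""
    rules = {
        "rules": [{
            "rule-type": "selection",
            "rule-id": "1",
            "rule-name": "1",
            "object-locator": {"schema-name": schema, "table-name": table},
            "rule-action": "include",
        }]
    }
    return json.dumps(rules, indent=2)


if __name__ == "__main__":
    # Example values from the MySQL setup section.
    print(table_mapping("yourDatabaseName", "MyGuests"))
```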

Once the data migration task is successful, you’re ready for the Rockset portion!

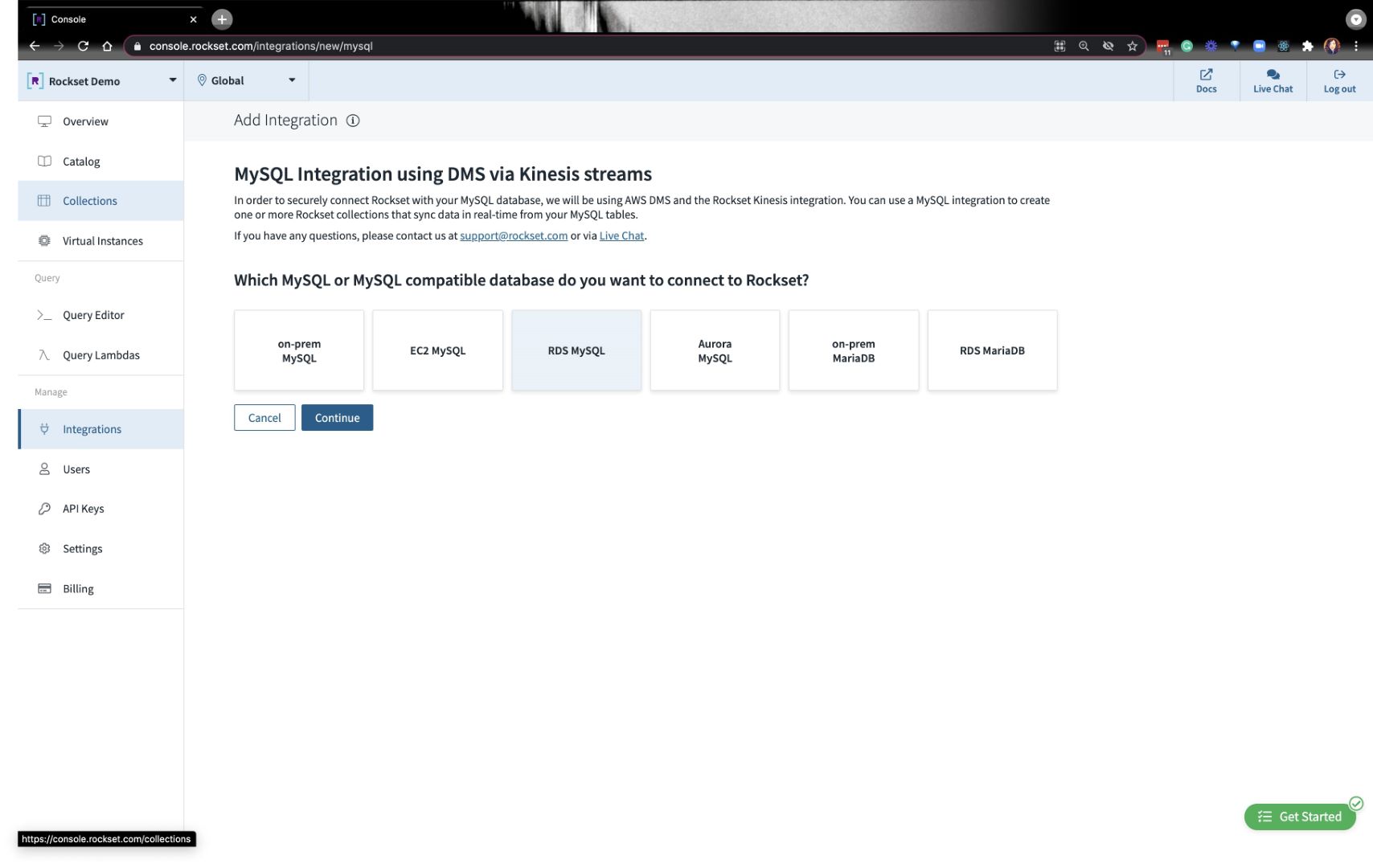

Scaling MySQL analytical workloads on Rockset

Once MySQL is connected to Rockset, any data changes made on MySQL will register on Rockset. You’ll be able to scale your workloads effortlessly as well. When you first create a MySQL integration, click on RDS MySQL, and you’ll see prompts to confirm that you completed the various setup steps we just covered above.

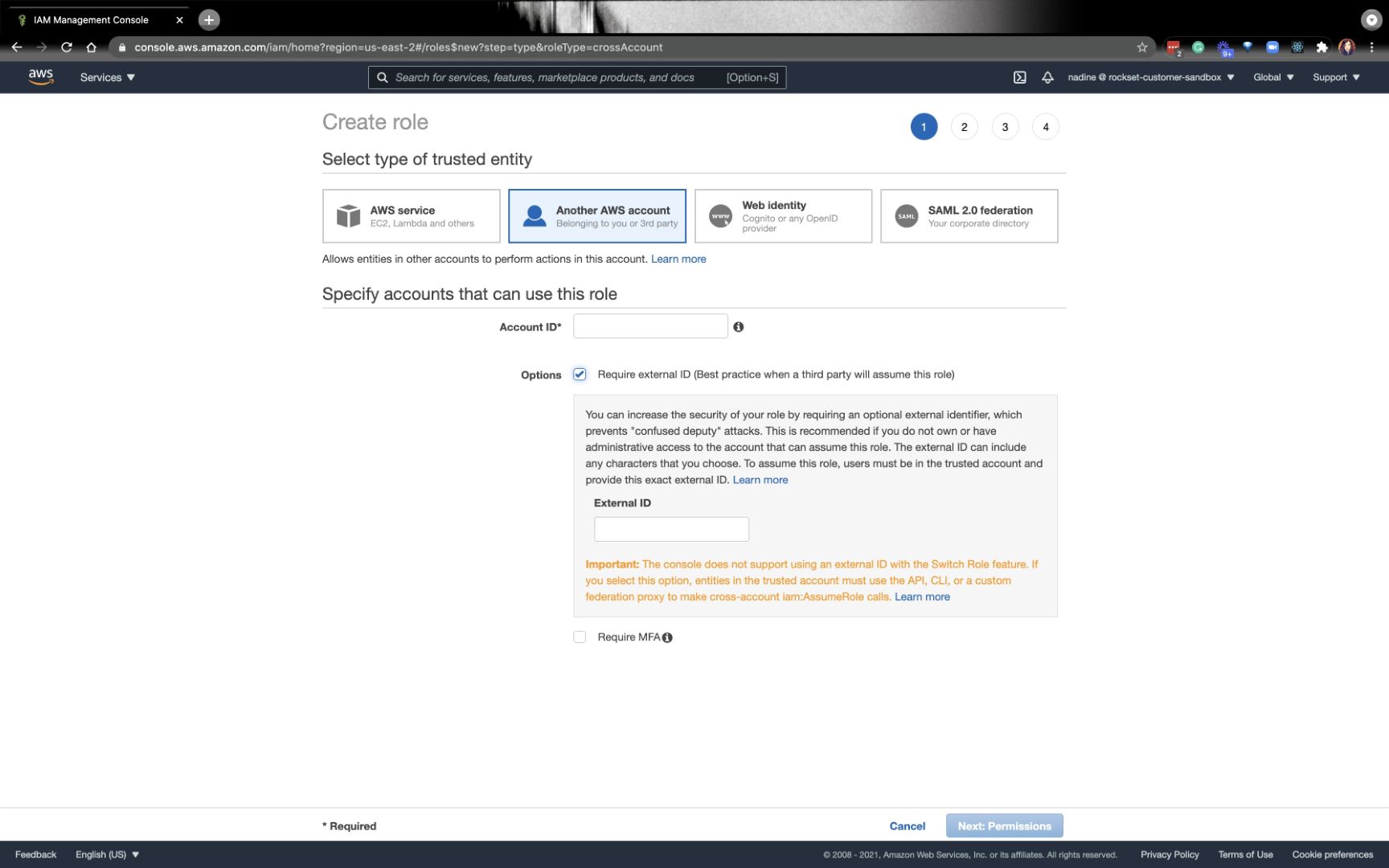

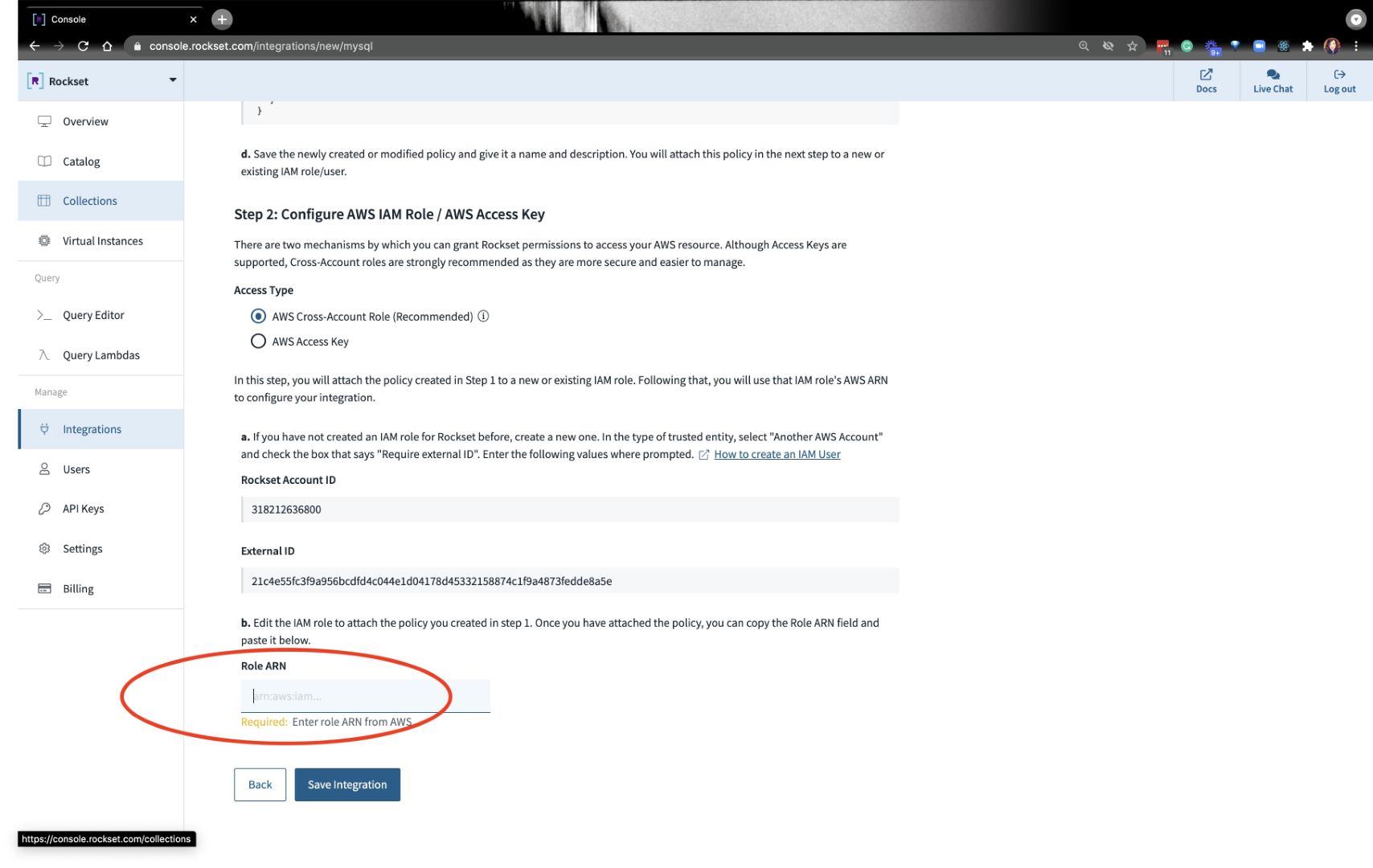

The last thing you’ll need to do is create a specific IAM role with Rockset’s Account ID and External ID:

You’ll grab the ARN from the role we created and paste it at the bottom where it asks for that information:

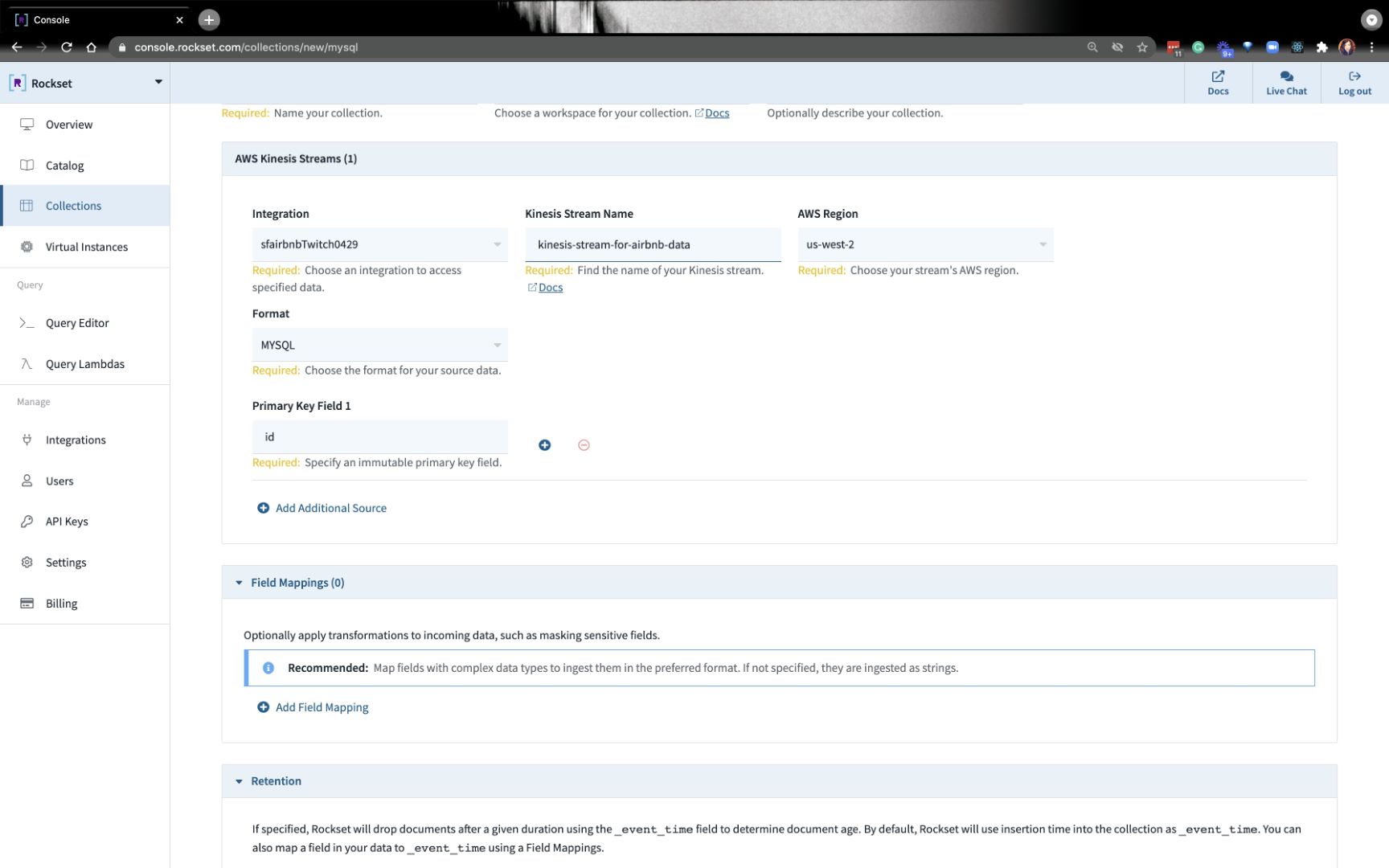
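For reference, a role that another AWS account can assume with an external ID generally carries a trust policy like the one sketched below. The `cross_account_trust_policy` helper is hypothetical, and the account ID and external ID in the example are placeholders: use the values shown in the Rockset console. The role also needs permissions to read your stream, which are not covered in this sketch.

```python
import json


def cross_account_trust_policy(account_id: str, external_id: str) -> dict:
    """Trust policy letting another AWS account assume the role, gated by an external ID."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"AWS": f"arn:aws:iam::{account_id}:root"},
            "Action": "sts:AssumeRole",
            "Condition": {"StringEquals": {"sts:ExternalId": external_id}},
        }],
    }


if __name__ == "__main__":
    # Placeholder values; copy the real ones from the integration prompt.
    policy = cross_account_trust_policy("123456789012", "your-external-id")
    print(json.dumps(policy, indent=2))
```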

Once the integration is set up, you’ll need to create a collection. Go ahead and put in your collection name, AWS region, and Kinesis stream information:

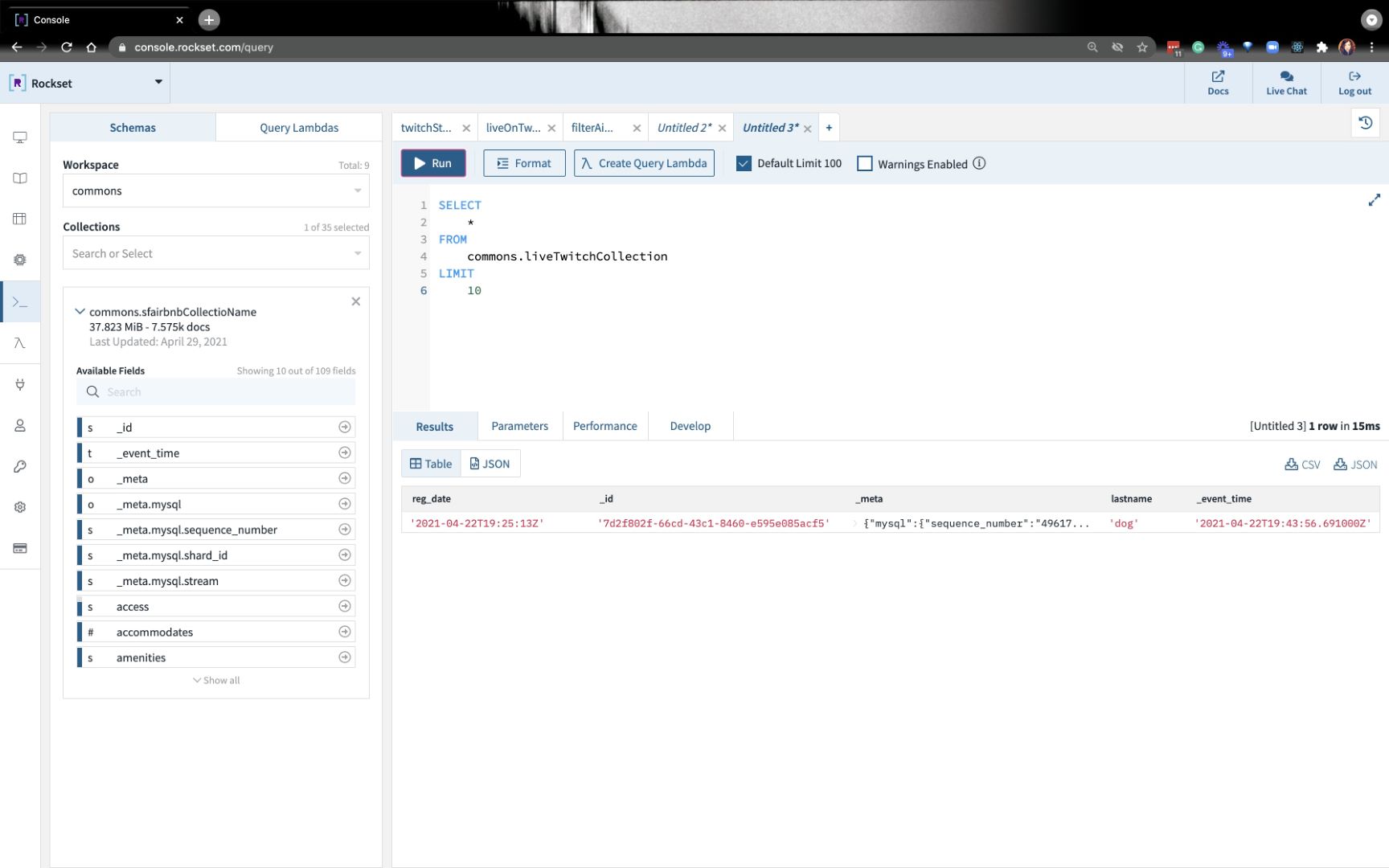

After a minute or so, you should be able to query the data that’s coming in from MySQL!

We just did a simple insert into MySQL to test that everything is working correctly. In the next blog, we’ll create a new table and upload data to it. We’ll work on a few SQL queries.

You can catch the full replay of how we did this end-to-end here:

Embedded content: https://youtu.be/oNtmJl2CZf8

Or you can follow the instructions in the docs.

TLDR: you can find all the resources you need in the developer corner.