Riding the wave of the generative AI revolution, third-party large language model (LLM) services like ChatGPT and Bard have swiftly emerged as the talk of the town, converting AI skeptics to evangelists and transforming the way we interact with technology. For proof of this megatrend, look no further than the instant success of ChatGPT, which set the record for the fastest-growing user base by reaching 100 million users just 2 months after its launch. LLMs have the potential to transform almost any industry, and we’re only at the dawn of this new generative AI era.

There are many benefits to these new services, but they certainly are not a one-size-fits-all solution, and this is most true for commercial enterprises looking to adopt generative AI for their own unique use cases powered by their data. For all the good that generative AI services can bring to your company, they don’t do so without their own set of risks and downsides.

In this blog, we will delve into these pressing issues and also offer you enterprise-ready alternatives. By shedding light on these concerns, we aim to foster a deeper understanding of the limitations and challenges that come with using such AI models in the enterprise, and explore ways to address these problems in order to create more responsible and reliable AI-powered solutions.

Data Privacy

Data privacy is a critical concern for every company, as individuals and organizations alike grapple with the challenges of safeguarding personal, customer, and company data amid rapidly evolving digital technologies and innovations that are fueled by that data.

Generative AI SaaS applications like ChatGPT are a perfect example of the kinds of technological advances that expose individuals and organizations to privacy risks and keep infosec teams up at night. Third-party applications may store and process sensitive company information, which could be exposed in the event of a data breach or unauthorized access. Samsung may have an opinion on this after its experience, in which employees reportedly pasted confidential source code into ChatGPT.

Contextual limitations of LLMs

One of the significant challenges faced by LLMs is their lack of contextual understanding of specific enterprise questions. LLMs like GPT-4 and BERT are trained on vast amounts of publicly available text from the internet, encompassing a wide range of topics and domains. However, these models have no access to enterprise knowledge bases or proprietary data sources. Consequently, when queried with enterprise-specific questions, LLMs may exhibit two common responses: hallucinations or factual but out-of-context answers.

Hallucinations describe a tendency of LLMs to generate fictional information that seems realistic. The difficulty in discerning LLM hallucinations is that they are an effective mix of fact and fiction. A recent example is the fictional legal citations suggested by ChatGPT and subsequently used by lawyers in an actual court case. Applied in an enterprise context, if an employee were to ask about company travel and relocation policies, a generic LLM would hallucinate reasonable-sounding policies that do not match what the company publishes.

Factual but out-of-context answers result when an LLM is unsure about the specific answer to a domain-specific query and instead provides a generic but true response that is not tailored to the context. An example would be asking about the price of CDW (Cloudera Data Warehouse). Because the language model has no access to the enterprise price list and standard discount rates, the answer will probably give the typical rates for a collision damage waiver (also abbreviated as CDW). The answer will be factual but out of context.

Enterprise Hosted LLMs Ensure Data Privacy

One option to ensure data privacy is to use enterprise-developed and enterprise-hosted LLMs in your applications. While training an LLM from scratch may seem attractive, it is prohibitively expensive. Sam Altman, OpenAI’s CEO, estimates the cost to train GPT-4 to be over $100 million.

The good news is that the open source community remains undefeated. Every day, new LLMs developed by various research teams and organizations are released on HuggingFace, built upon cutting-edge techniques and architectures and leveraging the collective expertise of the wider AI community. HuggingFace also makes access to these pre-trained open source models trivial, so your company can start its LLM journey from a more advantageous starting point. New and powerful open alternatives continue to be contributed at a rapid pace (MPT-7B from MosaicML, Vicuna).

Open source models enable enterprises to host their AI solutions in-house without spending a fortune on research, infrastructure, and development. This also means that interactions with the model are kept in-house, thus eliminating the privacy concerns associated with SaaS LLM solutions like ChatGPT and Bard.

Adding Enterprise Context to LLMs

Contextual Limitation is just not distinctive to enterprises. SaaS LLM companies like OpenAI have paid choices to combine your knowledge into their service, however this has very apparent privateness implications. The AI neighborhood has additionally acknowledged this hole and have already delivered quite a lot of options, so you may add context to enterprise hosted LLMs with out exposing your knowledge.

By leveraging open source technologies such as Ray or LangChain, developers can fine-tune language models with enterprise-specific data, thereby improving response quality through the development of task-specific understanding and adherence to desired tones. This empowers the model to understand customer queries, provide better responses, and adeptly handle the nuances of customer-specific language. Fine-tuning is effective at adding enterprise context to LLMs.
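Fine-tuning starts with training examples built from your own data. The sketch below shows one possible way to turn internal question/answer pairs into JSON Lines records. The example pairs, the `prompt`/`completion` field names, and the helper functions are all assumptions made for illustration; the format your training framework expects may differ.

```python
import json

# Hypothetical question/answer pairs drawn from internal documentation.
EXAMPLES = [
    {"question": "What does CDW stand for?", "answer": "Cloudera Data Warehouse."},
    {"question": "What does CML stand for?", "answer": "Cloudera Machine Learning."},
]


def to_training_record(example):
    """Turn one Q&A pair into a prompt/completion record.

    The field names are an assumption, not a requirement of any specific library.
    """
    return {
        "prompt": f"Question: {example['question']}\nAnswer:",
        "completion": " " + example["answer"],
    }


def to_jsonl(examples):
    """Serialize the records as JSON Lines, one training example per line."""
    return "\n".join(json.dumps(to_training_record(e)) for e in examples)


if __name__ == "__main__":
    print(to_jsonl(EXAMPLES))
```

Keeping this preparation step in-house means the enterprise data never leaves your environment before training begins.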

Another powerful solution to contextual limitations is the use of architectures like Retrieval-Augmented Generation (RAG). This approach combines generative capabilities with the ability to retrieve information from your knowledge base using vector databases like Milvus populated with your documents. By integrating a knowledge database, LLMs can access specific information during the generation process. This integration allows the model to generate responses that are not only language-based but also grounded in the context of your own knowledge base.

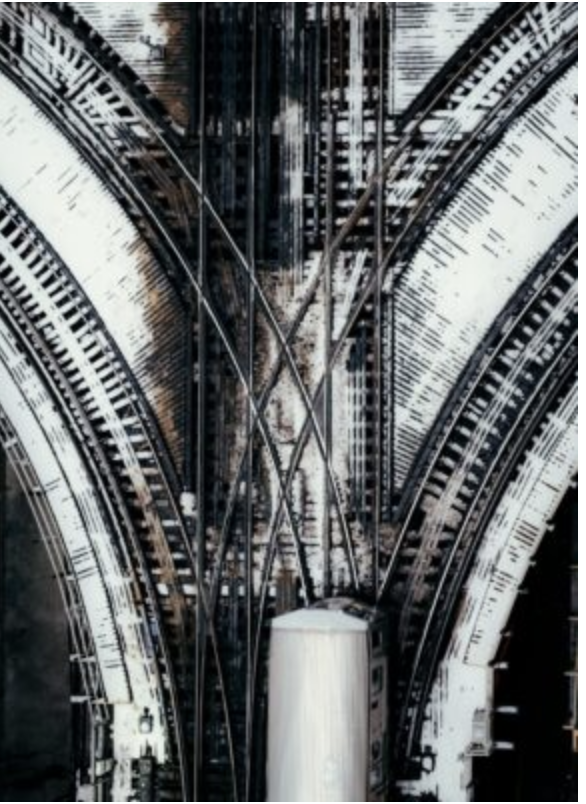

RAG Architecture Diagram for knowledge context injection into LLM prompts
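The retrieval and prompt-injection steps of RAG can be sketched in a few lines. This is a toy illustration, not a production design: word-count vectors stand in for a real embedding model, an in-memory list stands in for a vector database such as Milvus, and the knowledge base entries and function names are hypothetical. The resulting prompt would then be sent to your hosted LLM.

```python
import math
import re
from collections import Counter

# Hypothetical in-house knowledge base; the entries are illustrative only.
KNOWLEDGE_BASE = [
    "CDW stands for Cloudera Data Warehouse, a data service in the Cloudera Data Platform.",
    "Relocation requests must be approved by the employee's manager before booking.",
    "Cloudera Machine Learning (CML) lets teams deploy and monitor machine learning models.",
]


def embed(text):
    """Toy embedding: lowercase word counts (a real system would use an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question, documents, top_k=1):
    """Return the top_k documents most similar to the question."""
    query_vector = embed(question)
    ranked = sorted(documents, key=lambda d: cosine(query_vector, embed(d)), reverse=True)
    return ranked[:top_k]


def build_prompt(question, documents, top_k=1):
    """Inject the retrieved context into the prompt that will be sent to the LLM."""
    context = "\n".join(retrieve(question, documents, top_k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


if __name__ == "__main__":
    print(build_prompt("What does CDW stand for?", KNOWLEDGE_BASE))
```

Because the context travels inside the prompt, the model can resolve an ambiguous term like CDW from your own documents rather than from its general training data.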

With these open source superpowers, enterprises can create and host subject matter expert LLMs that are tuned to excel at specific use cases rather than generalized to be pretty good at everything.

Cloudera – Enabling Generative AI for the Enterprise

If taking on this new frontier of Generative AI feels daunting, don’t worry: Cloudera is here to help guide you on this journey. We have several unique advantages that position us as the perfect partner to extract maximum value from LLMs with your own proprietary or regulated data, without the risk of exposing it.

Cloudera is the only company that offers an open data lakehouse in both public and private clouds. We provide a suite of purpose-built data services enabling development across the data lifecycle, from the edge to AI. Whether that’s real-time data streaming, storing and analyzing data in open lakehouses, or deploying and monitoring machine learning models, the Cloudera Data Platform (CDP) has you covered.

Cloudera Machine Learning (CML) is one of these data services provided in CDP. With CML, businesses can build their own AI application powered by an open source LLM of their choice, with their data, all hosted internally in the enterprise. This empowers all their developers and lines of business (not just data scientists and ML teams) and truly democratizes AI.

It’s Time to Get Started

At the start of this blog, we described Generative AI as a wave, but to be honest it’s more like a tsunami. To stay relevant, companies need to start experimenting with the technology today so that they can prepare to productionize in the very near future. To this end, we’re happy to announce the release of a new Applied ML Prototype (AMP) to accelerate your AI and LLM experimentation. LLM Chatbot Augmented with Enterprise Data is the first of a series of AMPs that will demonstrate how to make use of open source libraries and technologies to enable Generative AI for the enterprise.

This AMP is a demonstration of the RAG solution discussed in this blog. The code is 100% open source, so anyone can make use of it, and all Cloudera customers can deploy it with a single click in their CML workspace.