Generative AI stretches our current copyright law in unforeseen and uncomfortable ways. In the US, the Copyright Office has issued guidance stating that the output of image-generating AI isn’t copyrightable, unless human creativity has gone into the prompts that generated the output. This ruling in itself raises many questions: how much creativity is needed, and is that the same kind of creativity that an artist exercises with a paintbrush? If a human writes software to generate prompts that in turn generate an image, is that copyrightable? If the output of a model can’t be owned by a human, who (or what) is responsible if that output infringes existing copyright? Is an artist’s style copyrightable, and if so, what does that mean?

Another group of cases involving text (typically novels and novelists) argues that using copyrighted texts as part of the training data for a Large Language Model (LLM) is itself copyright infringement,1 even if the model never reproduces those texts as part of its output. But reading texts has been part of the human learning process as long as reading has existed; and, while we pay to buy books, we don’t pay to learn from them. These cases often point out that the texts used in training were acquired from pirated sources, which makes for good press, although that claim has no legal value. Copyright law says nothing about whether texts are acquired legally or illegally.

How do we make sense of this? What should copyright law mean in the age of artificial intelligence?

In an article in The New Yorker, Jaron Lanier introduces the idea of data dignity, which implicitly distinguishes between training a model and generating output using a model. Training an LLM means teaching it how to understand and reproduce human language. (The word “teaching” arguably invests too much humanity into what is still software and silicon.) Generating output means what it says: providing the model instructions that cause it to produce something. Lanier argues that training a model should be a protected activity, but that the output generated by a model can infringe on someone’s copyright.

This distinction is attractive for several reasons. First, current copyright law protects “transformative use.” You don’t have to understand much about AI to realize that a model is transformative. Reading about the lawsuits reaching the courts, we sometimes have the feeling that authors believe that their works are somehow hidden inside the model, that George R. R. Martin thinks that if he searched through the trillion or so parameters of GPT-4, he’d find the text of his novels. He’s welcome to try, and he won’t succeed. (OpenAI won’t give him the GPT models, but he can download the model for Meta’s Llama-2 and have at it.) This fallacy was probably encouraged by another New Yorker article arguing that an LLM is like a compressed version of the web. That’s a nice image, but it is fundamentally wrong. What is contained in the model is an enormous set of parameters, based on all the content that has been ingested during training, that represents the probability that one word is likely to follow another. A model isn’t a copy or a reproduction, in whole or in part, lossy or lossless, of the data it’s trained on; it is the potential for creating new and different content. AI models are probability engines; an LLM computes the next word that’s most likely to follow the prompt, then the next word most likely to follow that, and so on. The ability to emit a sonnet that Shakespeare never wrote: that’s transformative, even if the new sonnet isn’t very good.

Lanier’s argument is that building a better model is a public good, that the world will be a better place if we have computers that can work directly with human language, and that better models serve us all, even the authors whose works are used to train the model. I can ask a vague, poorly formed question like “In which 21st century novel do two women travel to Parchman prison to pick up one of their husbands who is being released,” and get the answer “Sing, Unburied, Sing by Jesmyn Ward.” (Highly recommended, BTW, and I hope this mention generates a few sales for her.) I can also ask for a reading list about plagues in 16th century England, algorithms for testing prime numbers, or anything else. Any of these prompts might generate book sales, but whether or not sales result, they will have expanded my knowledge. Models that are trained on a wide variety of sources are a good; that good is transformative and should be protected.

The problem with Lanier’s concept of data dignity is that, given the current state of the art in AI models, it is impossible to distinguish meaningfully between “training” and “generating output.” Lanier recognizes that problem in his criticism of the current generation of “black box” AI, in which it’s impossible to connect the output to the training inputs on which the output was based. He asks, “Why don’t bits come attached to the stories of their origins?,” pointing out that this problem has been with us since the beginning of the Web. Models are trained by giving them small bits of input and asking them to predict the next word billions of times; tweaking the model’s parameters slightly to improve the predictions; and repeating that process thousands, if not millions, of times. The same process is used to generate output, and it’s important to understand why that process makes copyright problematic. If you give a model a prompt about Shakespeare, it might determine that the output should start with the word “To.” Given that it has already chosen “To,” there’s a slightly higher probability that the next word in the output will be “be.” Given that, there’s an even slightly higher probability that the next word will be “or.” And so forth. From this standpoint, it’s hard to say that the model is copying the text. It’s just following probabilities: a “stochastic parrot.” It’s more like monkeys typing randomly at keyboards than a human plagiarizing a literary text, but these are highly trained, probabilistic monkeys that actually have a chance at reproducing the works of Shakespeare.
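The word-by-word process described above can be illustrated with a deliberately tiny sketch. It is not how an LLM is built: it uses simple word-pair counts instead of a neural network, the corpus is a made-up toy string, and all function names are hypothetical. It only shows how picking the most probable next word, over and over, can reproduce a familiar phrase without the model storing that phrase as text.

```python
from collections import Counter, defaultdict

def train_bigram_counts(text):
    """Count how often each word is followed by each other word."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def next_word_probabilities(counts, word):
    """Turn the raw follower counts for `word` into probabilities."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    return {w: n / total for w, n in followers.items()} if total else {}

def generate(counts, start, max_words):
    """Greedy generation: always append the most probable next word."""
    output = [start]
    for _ in range(max_words - 1):
        probabilities = next_word_probabilities(counts, output[-1])
        if not probabilities:
            break
        output.append(max(probabilities, key=probabilities.get))
    return output

# Toy training text (an assumption for illustration, not a real training set).
corpus = "to be or not to be or not to be that is the question"
model = train_bigram_counts(corpus)
generated = generate(model, "to", 6)
print(" ".join(generated))
```

Note that the table of counts records only how often one word followed another. Nothing in it says which document a count came from, which is the provenance problem in miniature.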

An important consequence of this process is that it’s not possible to connect the output back to the training data. Where did the word “or” come from? Yes, it happens to be the next word in Hamlet’s famous soliloquy; but the model wasn’t copying Hamlet, it just picked “or” out of the hundreds of thousands of words it could have chosen, on the basis of statistics. It isn’t being creative in any way we as humans would recognize. It’s maximizing the probability that we (humans) will perceive the output it generates as a valid response to the prompt.

We believe that authors should be compensated for the use of their work: not in the creation of the model, but when the model produces their work as output. Is it possible? For a company like O’Reilly Media, a related question comes into play. Is it possible to distinguish between creative output (“Write in the style of Jesmyn Ward”) and actionable output (“Write a program that converts between current prices of currencies and altcoins”)? The response to the first question might be the start of a new novel, which might be substantially different from anything Ward wrote, and which doesn’t devalue her work any more than her second, third, or fourth novels devalue her first novel. Humans copy each other’s style all the time! That’s why English style post-Hemingway is so distinct from the style of 19th century authors, and an AI-generated homage to an author might actually increase the value of the original work, much as human “fan-fic” encourages rather than detracts from the popularity of the original.

The response to the second question is a piece of software that could take the place of something a previous author has written and published on GitHub. It could substitute for that software, possibly cutting into the programmer’s revenue. But even these two cases aren’t as different as they first appear. Authors of “literary” fiction are safe, but what about actors or screenwriters whose work could be ingested by a model and transformed into new roles or scripts? There are 175 Nancy Drew books, all “authored” by the non-existent Carolyn Keene, but written by a long chain of ghostwriters. In the future, AIs may be included among those ghostwriters. How do we account for the work of authors (of novels, screenplays, or software) so they can be compensated for their contributions? What about the authors who teach their readers how to master a complicated technology topic? The output of a model that reproduces their work provides a direct substitute rather than a transformative use that may be complementary to the original.

Compensating authors in this way may not be possible if you use a generative model configured as a chat server by itself. But that isn’t the end of the story. In the year or so since ChatGPT’s release, developers have been building applications on top of the state-of-the-art foundation models. There are many different ways to build applications, but one pattern has become prominent: Retrieval-Augmented Generation, or RAG. RAG is used to build applications that “know about” content that isn’t in the model’s training data. For example, you might want to write a stockholders’ report, or generate text for a product catalog. Your company has all the data you need, but your company’s financials obviously weren’t in ChatGPT’s training data. RAG takes your prompt, loads documents in your company’s archive that are relevant, packages everything together, and sends the prompt to the model. It can include instructions like “Only use the data included with this prompt in the response.” (This may be too much information, but this process generally works by generating “embeddings” for the company’s documentation; storing those embeddings in a vector database; and retrieving the documents that have embeddings similar to the user’s original question. Embeddings have the important property that they reflect relationships between words and texts. They make it possible to search for relevant or similar documents.)
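The retrieval step described above can be sketched in a few lines. This is a minimal illustration under loud assumptions: word counts stand in for learned embeddings, a Python dictionary stands in for a vector database, the document IDs and texts are invented, and no language model is actually called. The sketch stops at building the prompt that would be sent.

```python
import math
from collections import Counter

INSTRUCTION = "Only use the data included with this prompt in the response."

def embed(text):
    """Toy 'embedding': lowercase word counts (an assumption, not a learned embedding)."""
    return Counter(text.lower().split())

def cosine_similarity(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[word] for word, count in a.items() if word in b)
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def retrieve(question, documents, top_k=1):
    """Return the top_k (doc_id, text) pairs most similar to the question."""
    query_vector = embed(question)
    ranked = sorted(
        documents.items(),
        key=lambda item: cosine_similarity(query_vector, embed(item[1])),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(question, retrieved):
    """Package the instruction, the retrieved documents, and the question."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in retrieved)
    return f"{INSTRUCTION}\n\n{context}\n\nQuestion: {question}"

# Hypothetical company archive; IDs and contents are made up for illustration.
documents = {
    "q3-report": "revenue grew in the third quarter and operating costs fell",
    "catalog": "the blue widget ships in three sizes",
    "handbook": "employees accrue vacation days every month",
}
question = "how much did revenue grow in the third quarter"
retrieved = retrieve(question, documents, top_k=1)
prompt = build_prompt(question, retrieved)
print(prompt)
```

Because each retrieved passage travels with its document ID, the application knows which sources were placed in front of the model for a given response.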

While RAG was originally conceived as a way to give a model proprietary information without going through the labor- and compute-intensive process of training, in doing so it creates a connection between the model’s response and the documents from which the response was created. The response is no longer constructed from random words and phrases that are detached from their sources. We have provenance. While it still may be difficult to evaluate the contribution of the different sources (23% from A, 42% from B, 35% from C), and while we can expect a lot of natural language “glue” to have come from the model itself, we’ve taken a big step forward towards Lanier’s data dignity. We’ve created traceability where we previously had only a black box. If we published someone’s currency conversion software in a book or training course and our language model reproduces it in response to a question, we can attribute that to the original source and allocate royalties appropriately. The same would apply to new novels in the style of Jesmyn Ward or, perhaps more appropriately, to the never-named creators of pulp fiction and screenplays.
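To make the idea of splitting credit among sources concrete, here is a very rough sketch. The word-overlap heuristic, the source IDs, the sample texts, and the royalty pool are all assumptions chosen for illustration; evaluating real contributions is, as noted above, a hard problem, and this is not a method anyone is known to use in production.

```python
MODEL_GLUE = "(model glue)"  # hypothetical label for words found in no source

def attribute(response, sources):
    """Estimate each source's share of a response by crude word overlap.

    Each response word is credited to the sources that contain it (split
    equally if several do); words found in no source count as model "glue".
    """
    words = response.lower().split()
    vocabularies = {sid: set(text.lower().split()) for sid, text in sources.items()}
    credit = {sid: 0.0 for sid in sources}
    credit[MODEL_GLUE] = 0.0
    for word in words:
        owners = [sid for sid, vocab in vocabularies.items() if word in vocab]
        if owners:
            for sid in owners:
                credit[sid] += 1 / len(owners)
        else:
            credit[MODEL_GLUE] += 1
    total = len(words)
    return {sid: value / total for sid, value in credit.items()} if total else credit

def allocate_royalties(shares, pool):
    """Divide a royalty pool among sources in proportion to their shares."""
    sourced = {sid: s for sid, s in shares.items() if sid != MODEL_GLUE and s > 0}
    sourced_total = sum(sourced.values())
    return {sid: pool * s / sourced_total for sid, s in sourced.items()}

# Hypothetical retrieved sources and model response.
sources = {
    "A": "convert dollars to euros",
    "B": "multiply by the exchange rate",
}
response = "to convert dollars multiply by the rate please"
shares = attribute(response, sources)
royalties = allocate_royalties(shares, pool=7.0)
print(shares)
print(royalties)
```

In this toy case, three of the eight response words trace to source A, four to source B, and one to neither, so a pool of 7.0 is divided roughly 3 to 4 between A and B.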

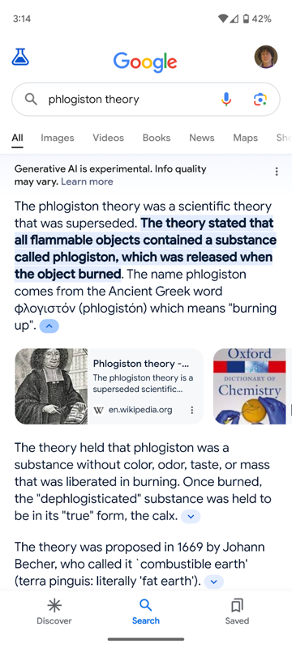

Google’s “AI Powered Overview” feature2 is a good example of what we can expect with RAG. We can’t say for certain that it was implemented with RAG, but it clearly follows the pattern. Google, which invented Transformers, knows better than anyone that Transformer-based models destroy metadata, unless you do a lot of special engineering. But Google has the best search engine in the world. Given a search string, it’s simple for Google to perform the search, take the top few results, and then send them to a language model for summarization. It relies on the model for language and grammar, but derives the content from the documents included in the prompt. That process could give exactly the results shown here: a summary of the search results, with down arrows that you can open to see the sources from which the summary was generated. Whether this feature improves the search experience is a good question: while a user can trace the summary back to its source, the design places the source two steps away from the summary. You have to click the down arrow, then click on the source to get to the original document. However, that design issue isn’t germane to this discussion. What’s important is that RAG (or something like RAG) has enabled something that wasn’t possible before: we can now trace the sources of an AI system’s output.

Now that we know that it’s possible to produce output that respects copyright and, if appropriate, compensates the author, it’s up to regulators to hold companies accountable for failing to do so, just as they are held accountable for hate speech and other forms of inappropriate content. We should not buy into the assertion of the large LLM providers that this is an impossible task. It is one more of the many business model and ethical challenges that they must overcome.

The RAG pattern has other advantages. We’re all familiar with the ability of language models to “hallucinate,” to make up facts that often sound very convincing. We constantly have to remind ourselves that AI is only playing a statistical game, and that its prediction of the most likely response to any prompt is often wrong. It doesn’t know that it is answering a question, nor does it understand the difference between facts and fiction. However, when your application supplies the model with the data needed to construct a response, the probability of hallucination goes down. It doesn’t go to zero, but it is significantly lower than when a model creates a response based purely on its training data. Limiting an AI to sources that are known to be accurate makes the AI’s output more accurate.

We’ve only seen the beginnings of what’s possible. The simple RAG pattern, with one prompt orchestrator, one content database, and one language model, will no doubt become more complex. We will soon see (if we haven’t already) systems that take input from a user, generate a series of prompts (possibly for different models), and combine the results into a new prompt, which is then sent to a different model. You can already see this happening in the latest iteration of GPT-4: when you send a prompt asking GPT-4 to generate a picture, it processes that prompt, then sends the results (probably including other instructions) to DALL-E for image generation. Simon Willison has noted that if the prompt includes an image, GPT-4 never sends that image to DALL-E; it converts the image into a prompt, which is then sent to DALL-E with a modified version of your original prompt. Tracing provenance with these more complex systems will be difficult, but with RAG, we now have the tools to do it.

AI at O’Reilly Media

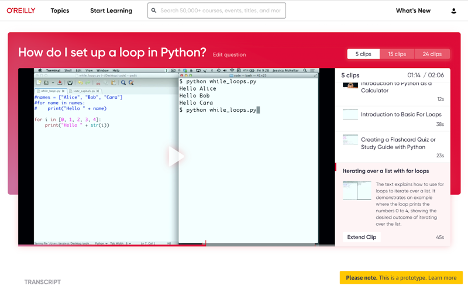

We’re experimenting with a variety of RAG-inspired ideas on the O’Reilly learning platform. The first extends Answers, our AI-based search tool that uses natural language queries to find specific answers in our vast corpus of courses, books, and videos. In this next version, we’re placing Answers directly within the learning context and using an LLM to generate content-specific questions about the material to enhance your understanding of the topic.

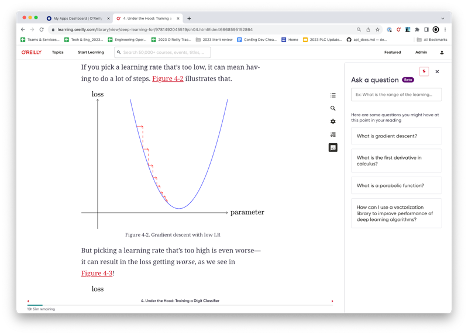

For example, if you’re reading about gradient descent, the new version of Answers will generate a set of related questions, such as how to compute a derivative or use a vector library to increase performance. In this instance, RAG is used to identify key concepts and provide links to other resources in the corpus that will deepen the learning experience.

Our second project is geared towards making our long-form video courses simpler to browse. Working with our friends at Design Systems International, we’re developing a feature called “Ask this course,” which will allow you to “distill” a course into just the question you’ve asked. While conceptually similar to Answers, the idea of “Ask this course” is to create a new experience within the content itself, rather than just linking out to related sources. We use an LLM to provide section titles and a summary to stitch together disparate snippets of content into a more cohesive narrative.