Boba is an experimental AI co-pilot for product technique & generative ideation,

designed to enhance the artistic ideation course of. It’s an LLM-powered

software that we’re constructing to find out about:

An AI co-pilot refers to a synthetic intelligence-powered assistant designed

to assist customers with varied duties, typically offering steerage, assist, and automation

in numerous contexts. Examples of its software embody navigation techniques,

digital assistants, and software program growth environments. We like to think about a co-pilot

as an efficient accomplice {that a} consumer can collaborate with to carry out a selected area

of duties.

Boba as an AI co-pilot is designed to enhance the early levels of technique ideation and

idea era, which rely closely on speedy cycles of divergent

pondering (often known as generative ideation). We usually implement generative ideation

by intently collaborating with our friends, prospects and material specialists, in order that we will

formulate and take a look at revolutionary concepts that handle our prospects’ jobs, pains and positive aspects.

This begs the query, what if AI may additionally take part in the identical course of? What if we

may generate and consider extra and higher concepts, quicker in partnership with AI? Boba begins to

allow this by utilizing OpenAI’s LLM to generate concepts and reply questions

that may assist scale and speed up the artistic pondering course of. For the primary prototype of

Boba, we determined to concentrate on rudimentary variations of the next capabilities:

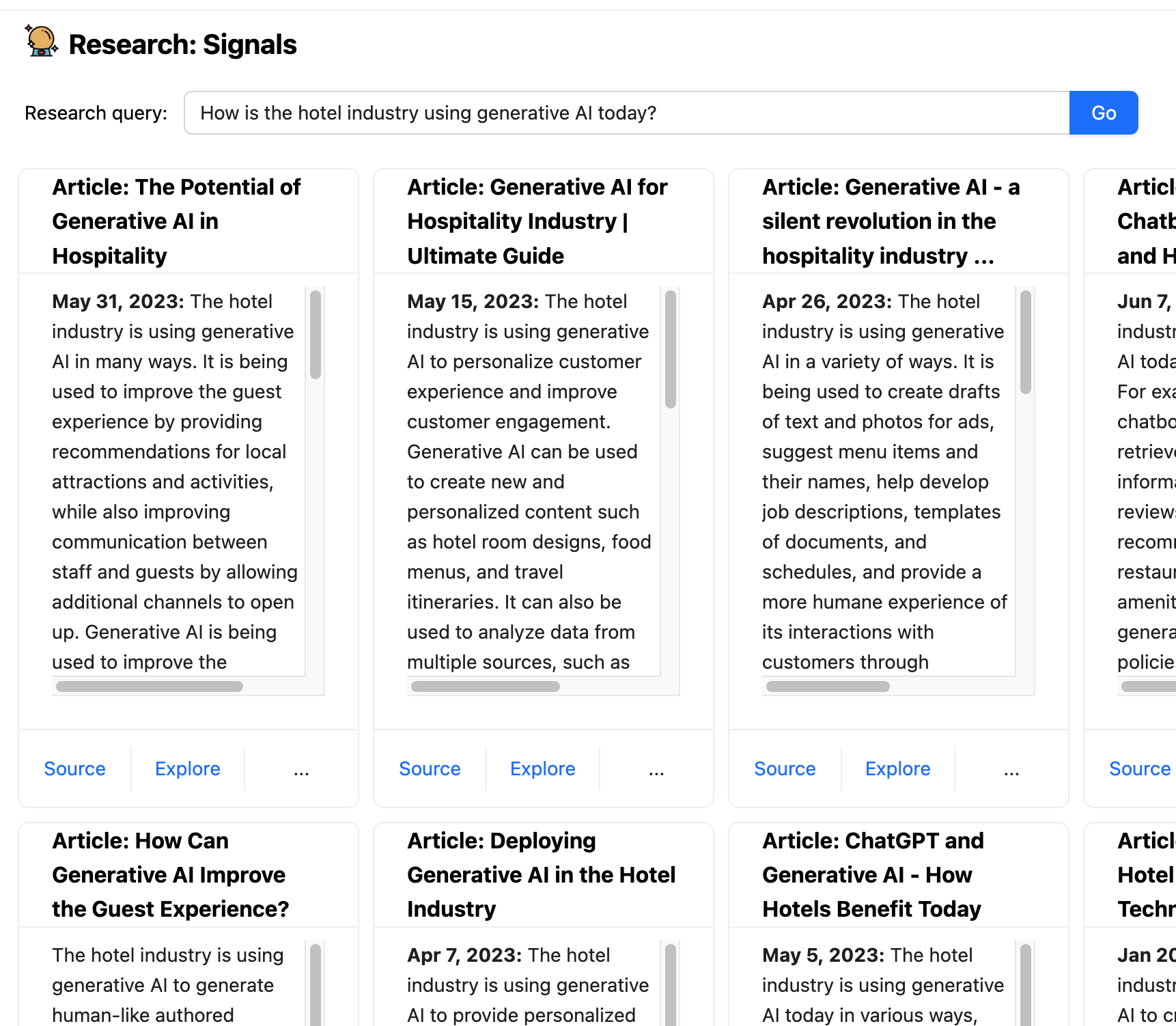

1. Analysis alerts and traits: Search the net for

articles and information that can assist you reply qualitative analysis questions,

like:

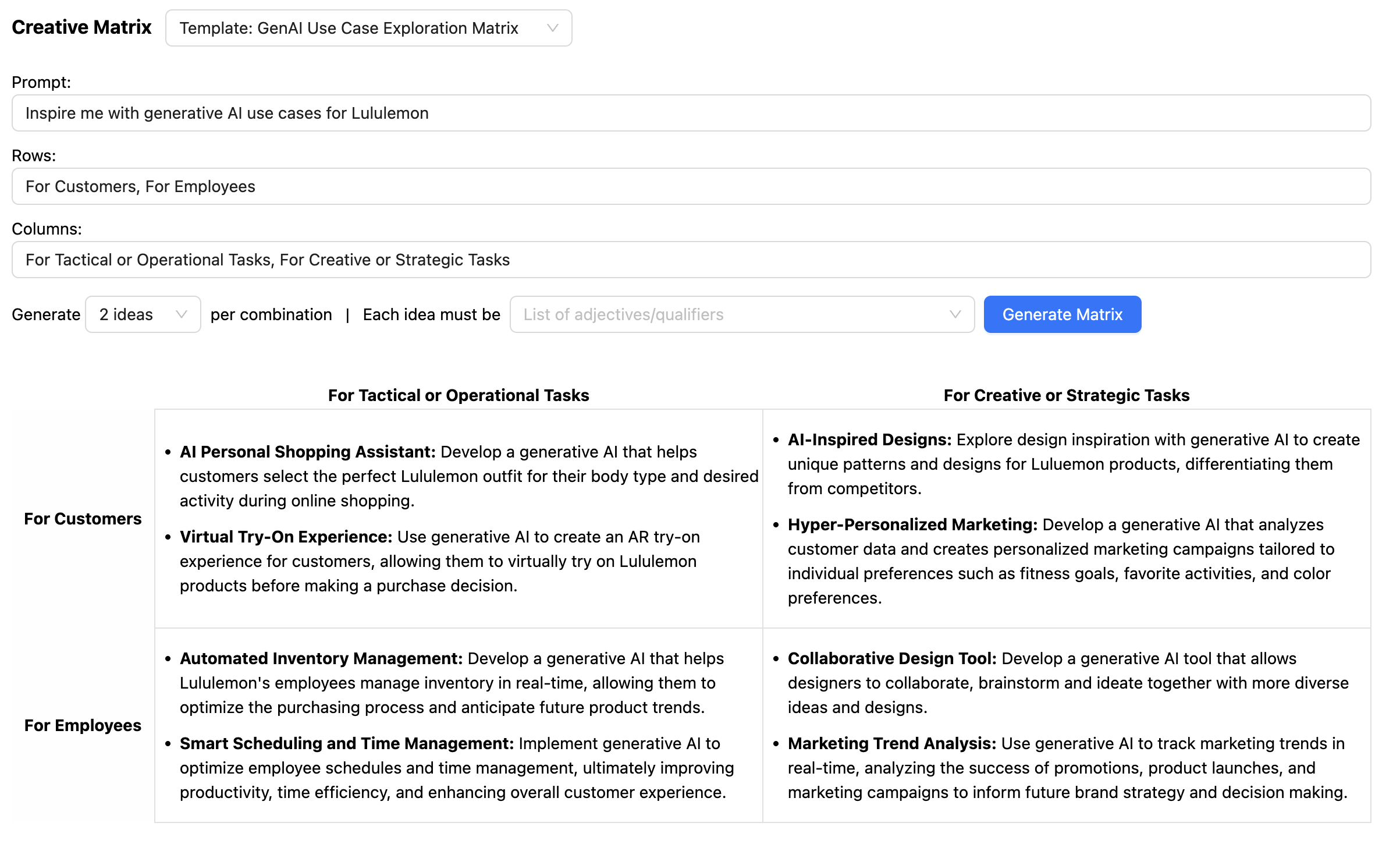

2. Artistic Matrix: The artistic matrix is a concepting methodology for

sparking new concepts on the intersections of distinct classes or

dimensions. This includes stating a strategic immediate, typically as a “How would possibly

we” query, after which answering that query for every

mixture/permutation of concepts on the intersection of every dimension. For

instance:

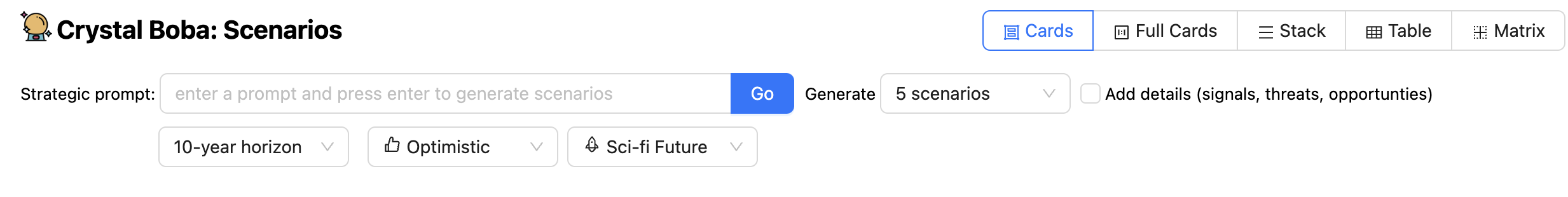

3. Situation constructing: Situation constructing is a means of

producing future-oriented tales by researching alerts of change in

enterprise, tradition, and expertise. Situations are used to socialize learnings

in a contextualized narrative, encourage divergent product pondering, conduct

resilience/desirability testing, and/or inform strategic planning. For

instance, you may immediate Boba with the next and get a set of future

situations primarily based on completely different time horizons and ranges of optimism and

realism:

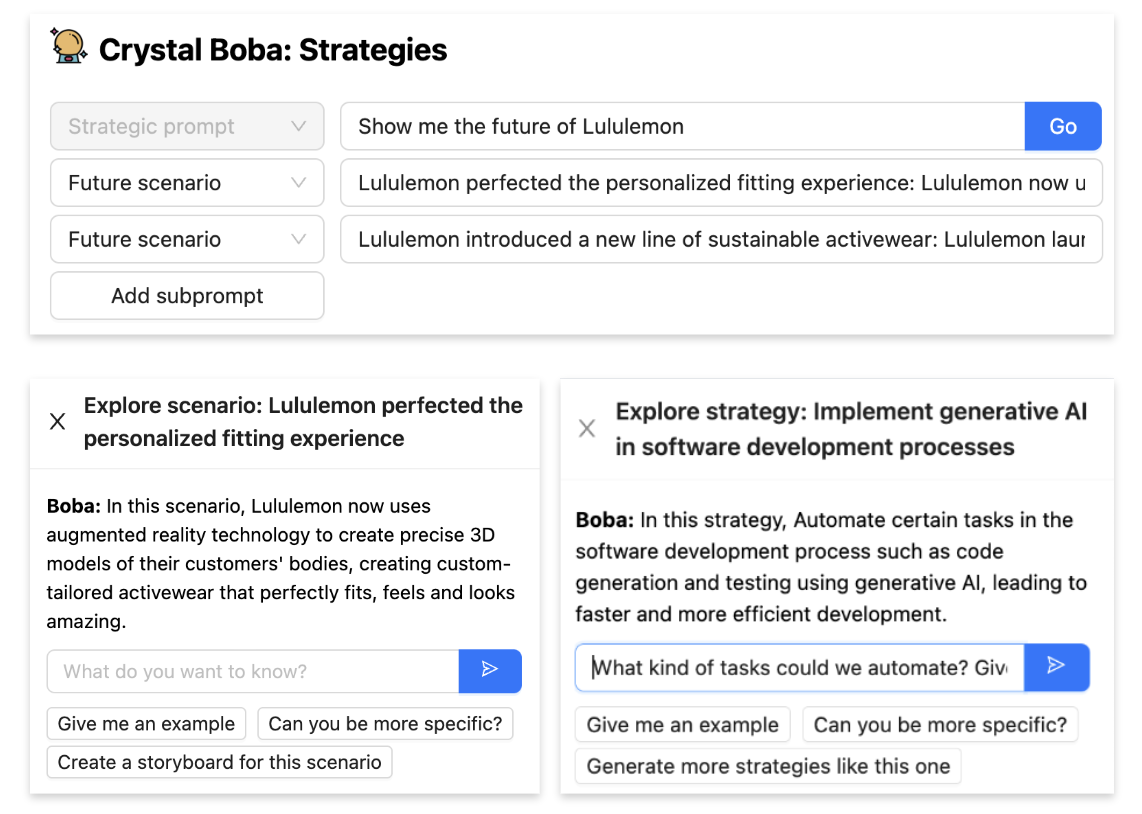

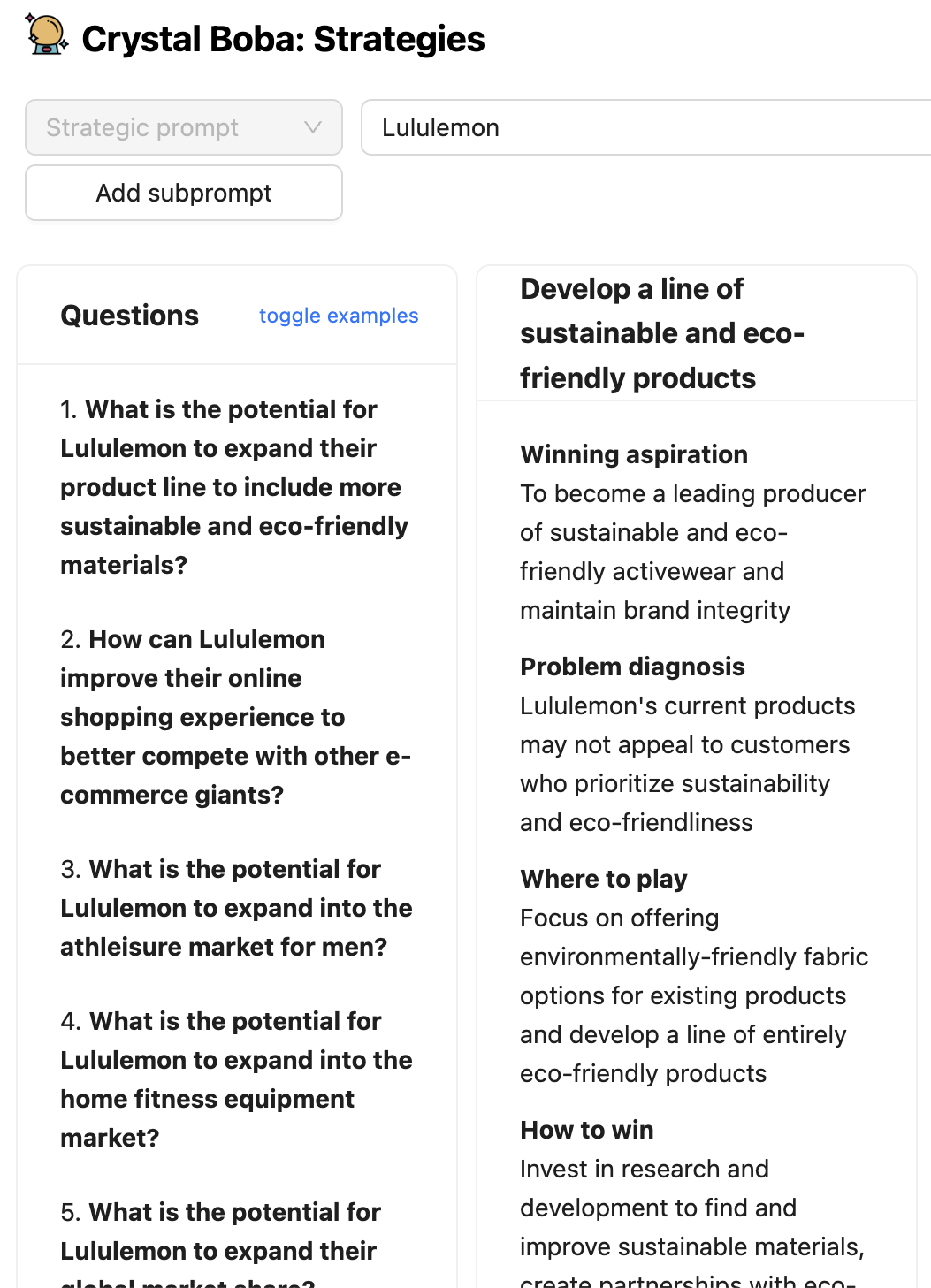

4. Technique ideation: Utilizing the Enjoying to Win technique

framework, brainstorm “the place to play” and “how one can win” decisions

primarily based on a strategic immediate and potential future situations. For instance you

can immediate it with:

5. Idea era: Based mostly on a strategic immediate, akin to a “how would possibly we” query, generate

a number of product or characteristic ideas, which embody worth proposition pitches and hypotheses to check.

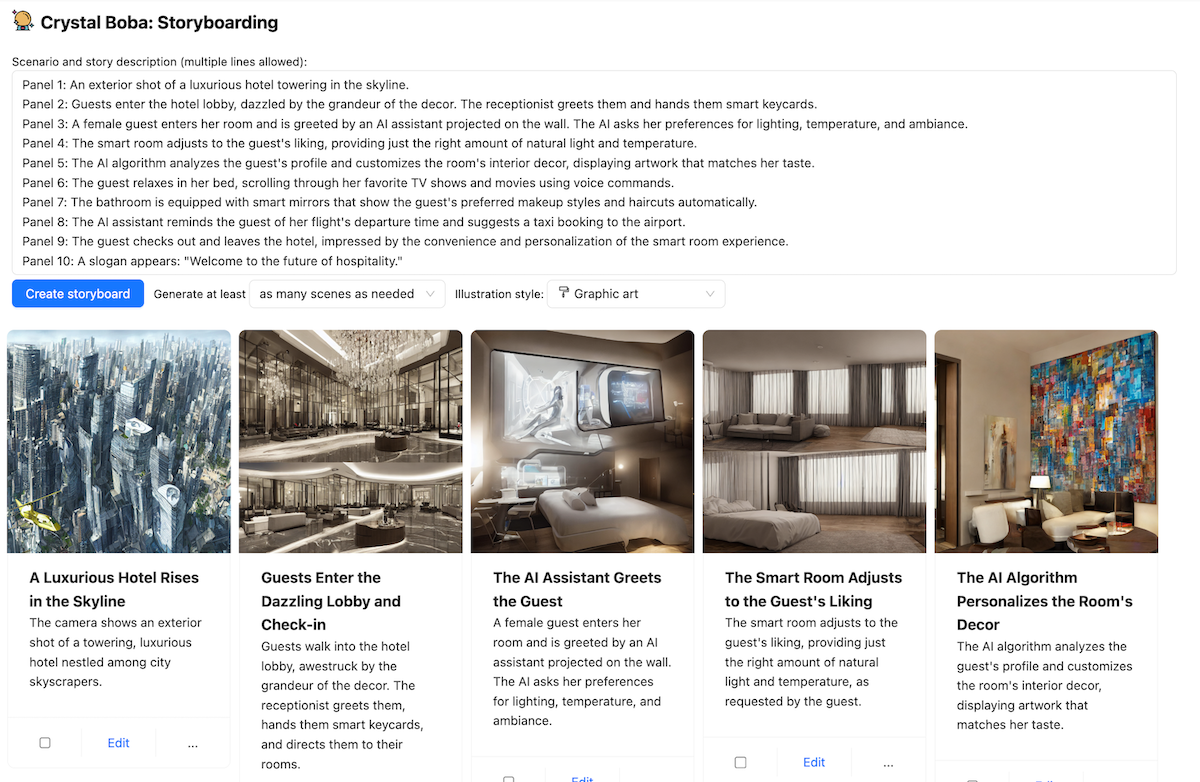

6. Storyboarding: Generate visible storyboards primarily based on a easy

immediate or detailed narrative primarily based on present or future state situations. The

key options are:

Utilizing Boba

Boba is an internet software that mediates an interplay between a human

consumer and a Giant-Language Mannequin, at present GPT 3.5. A easy internet

front-end to an LLM simply affords the flexibility for the consumer to converse with

the LLM. That is useful, however means the consumer must discover ways to

successfully work together the LLM. Even within the brief time that LLMs have seized

the general public curiosity, we have discovered that there’s appreciable ability to

developing the prompts to the LLM to get a helpful reply, leading to

the notion of a “Immediate Engineer”. A co-pilot software like Boba provides

a spread of UI components that construction the dialog. This enables a consumer

to make naive prompts which the appliance can manipulate, enriching

easy requests with components that can yield a greater response from the

LLM.

Boba can assist with plenty of product technique duties. We can’t

describe all of them right here, simply sufficient to provide a way of what Boba does and

to offer context for the patterns later within the article.

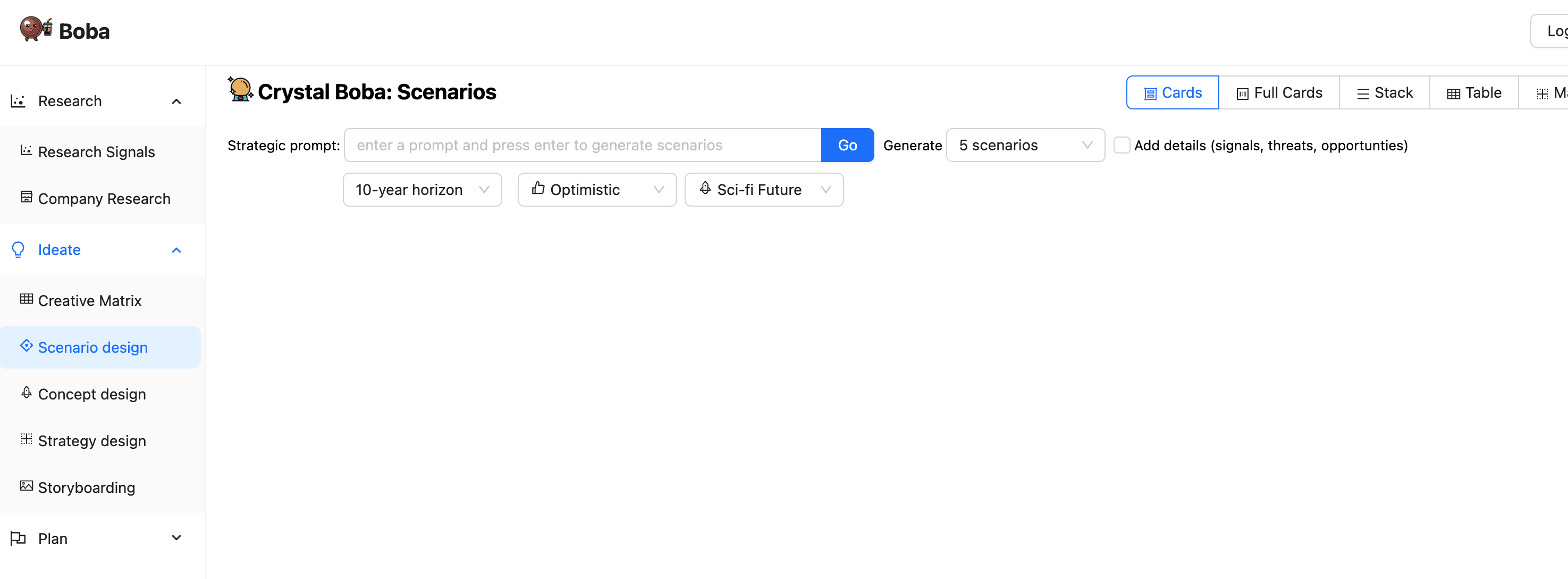

When a consumer navigates to the Boba software, they see an preliminary

display much like this

The left panel lists the varied product technique duties that Boba

helps. Clicking on one among these modifications the principle panel to the UI for

that process. For the remainder of the screenshots, we’ll ignore that process panel

on the left.

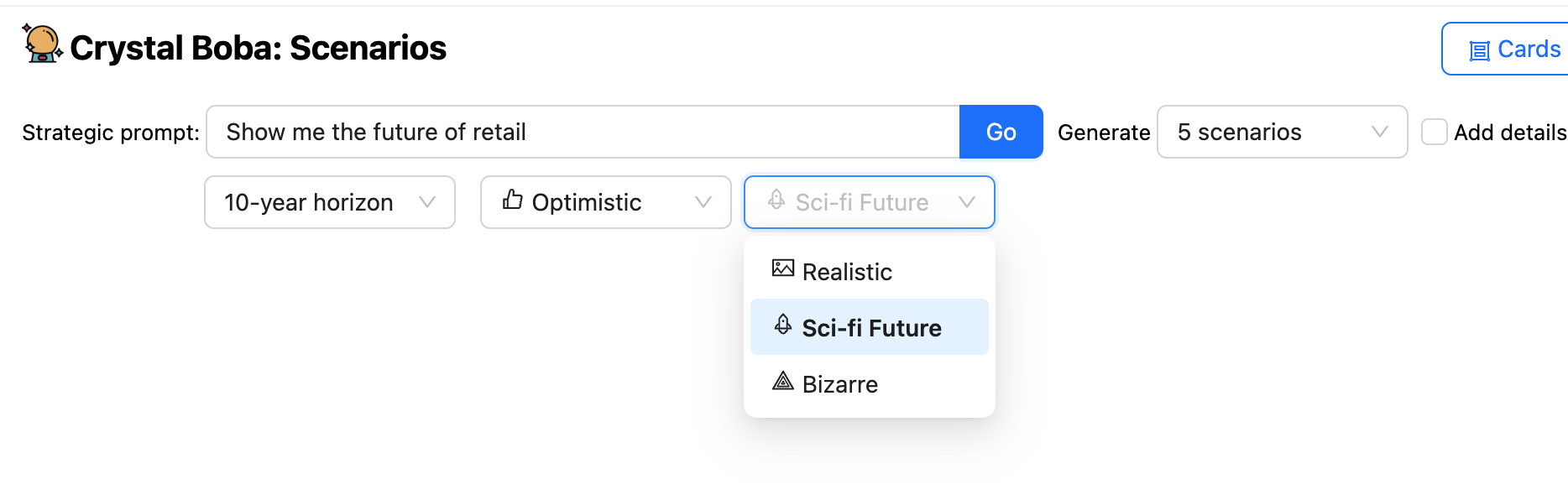

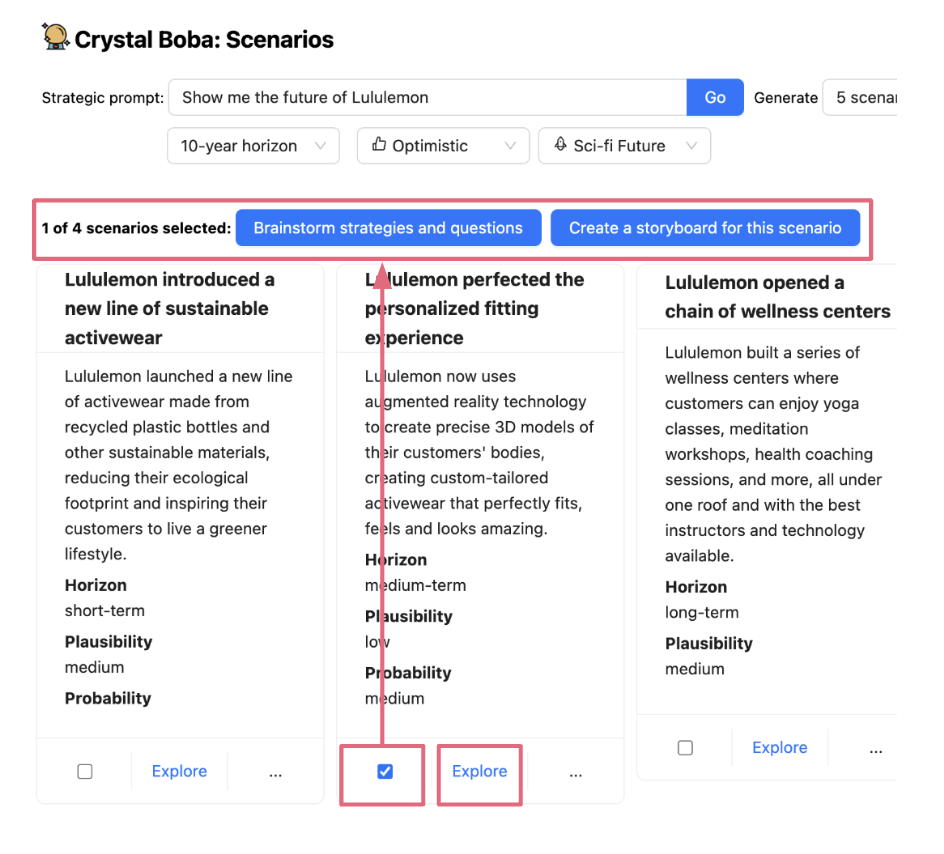

The above screenshot appears to be like on the situation design process. This invitations

the consumer to enter a immediate, akin to “Present me the way forward for retail”.

The UI affords plenty of drop-downs along with the immediate, permitting

the consumer to counsel time-horizons and the character of the prediction. Boba

will then ask the LLM to generate situations, utilizing Templated Immediate to counterpoint the consumer’s immediate

with extra components each from common information of the situation

constructing process and from the consumer’s alternatives within the UI.

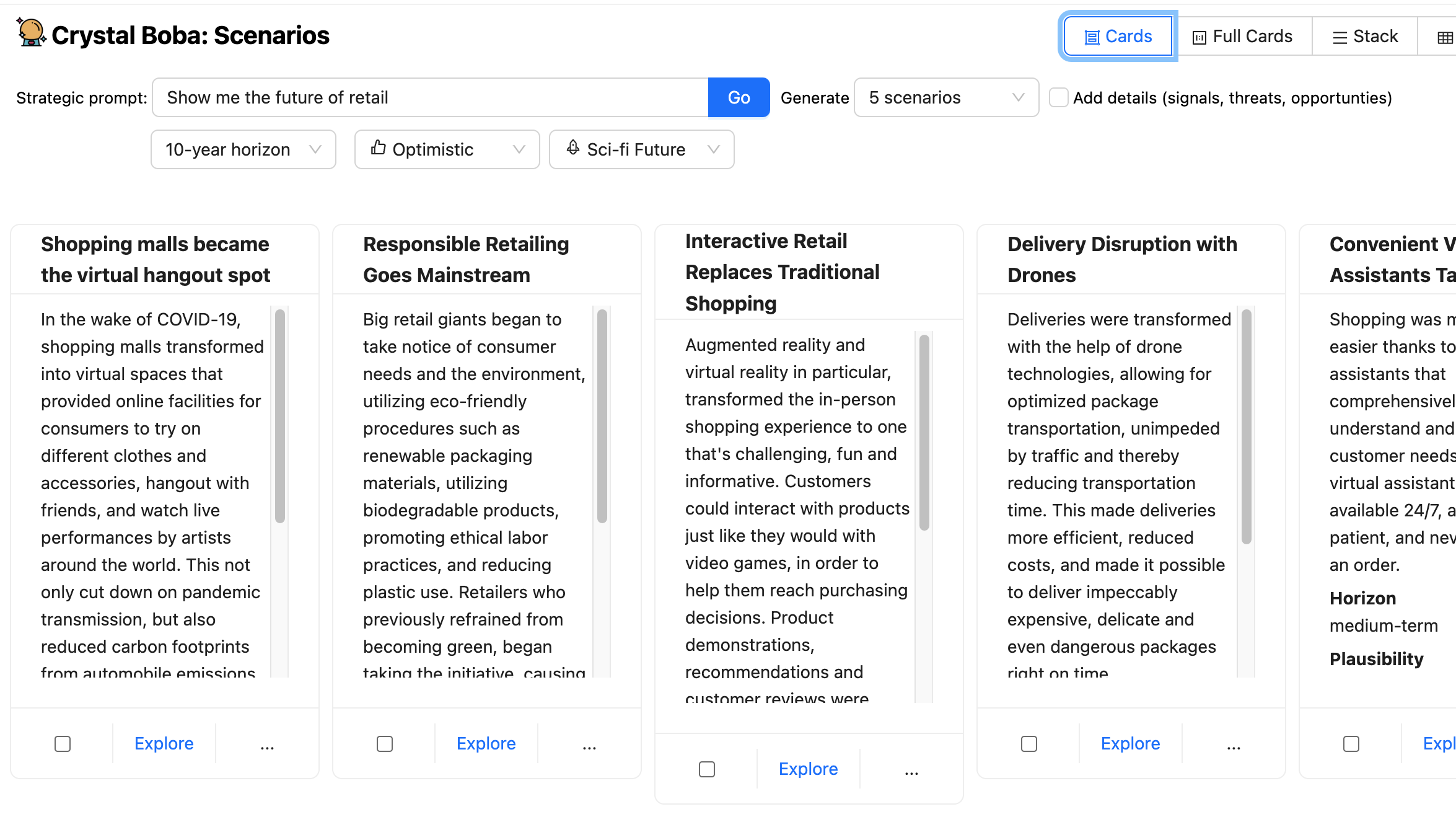

Boba receives a Structured Response from the LLM and shows the

end result as set of UI components for every situation.

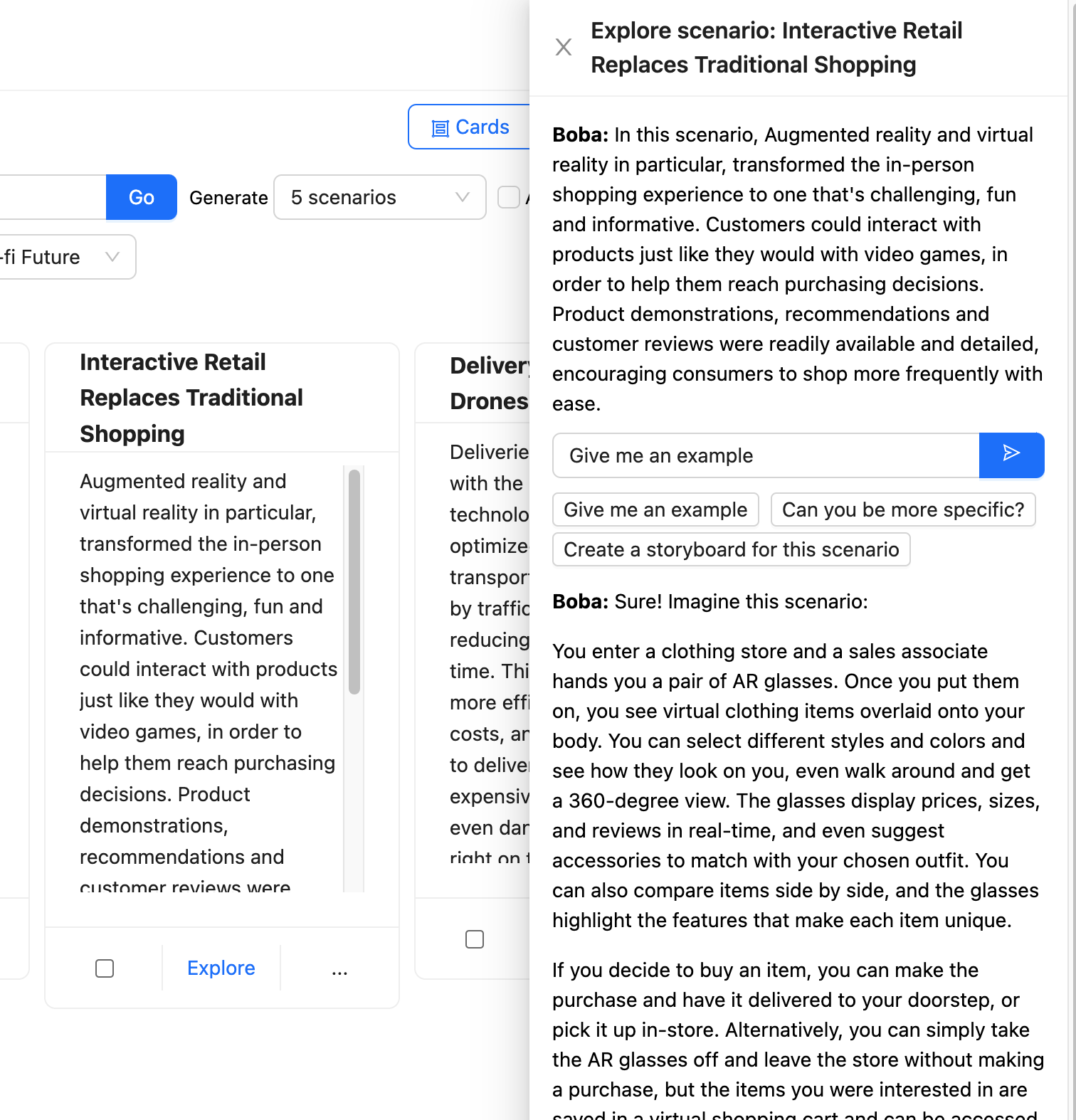

The consumer can then take one among these situations and hit the discover

button, citing a brand new panel with an extra immediate to have a Contextual Dialog with Boba.

Boba takes this immediate and enriches it to concentrate on the context of the

chosen situation earlier than sending it to the LLM.

Boba makes use of Choose and Carry Context

to carry onto the varied elements of the consumer’s interplay

with the LLM, permitting the consumer to discover in a number of instructions with out

having to fret about supplying the fitting context for every interplay.

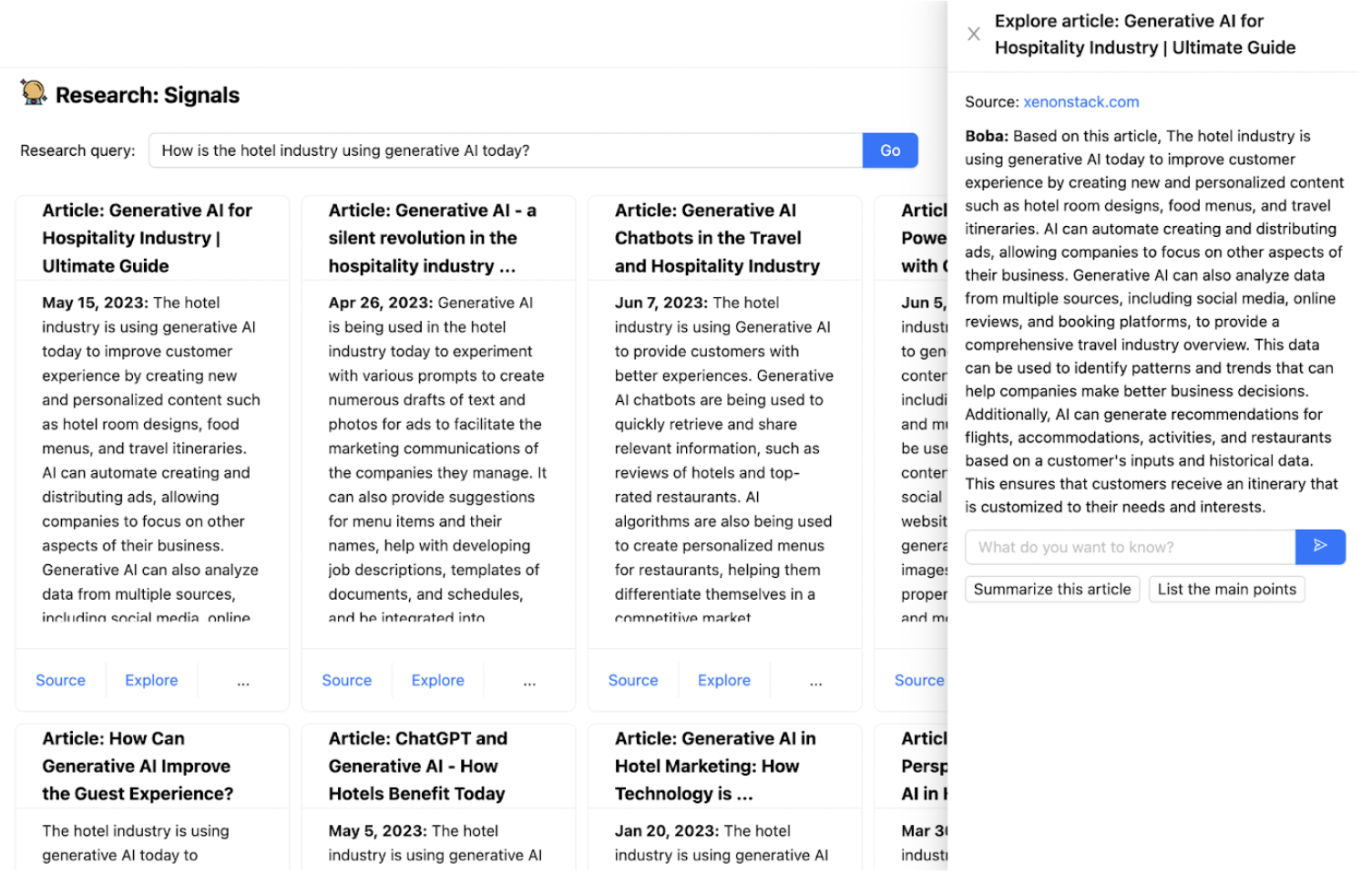

One of many difficulties with utilizing an

LLM is that it is skilled solely on information as much as some level prior to now, making

them ineffective for working with up-to-date info. Boba has a

characteristic known as analysis alerts that makes use of Embedded Exterior Data

to mix the LLM with common search

amenities. It takes the prompted analysis question, akin to “How is the

lodge trade utilizing generative AI at present?”, sends an enriched model of

that question to a search engine, retrieves the urged articles, sends

every article to the LLM to summarize.

That is an instance of how a co-pilot software can deal with

interactions that contain actions that an LLM alone is not appropriate for. Not

simply does this present up-to-date info, we will additionally guarantee we

present supply hyperlinks to the consumer, and people hyperlinks will not be hallucinations

(so long as the search engine is not partaking of the incorrect mushrooms).

Some patterns for constructing generative co-pilot functions

In constructing Boba, we learnt rather a lot about completely different patterns and approaches

to mediating a dialog between a consumer and an LLM, particularly Open AI’s

GPT3.5/4. This checklist of patterns isn’t exhaustive and is restricted to the teachings

we have learnt to this point whereas constructing Boba.

Templated Immediate

Use a textual content template to counterpoint a immediate with context and construction

The primary and easiest sample is utilizing a string templates for the prompts, additionally

often known as chaining. We use Langchain, a library that gives an ordinary

interface for chains and end-to-end chains for widespread functions out of

the field. Should you’ve used a Javascript templating engine, akin to Nunjucks,

EJS or Handlebars earlier than, Langchain offers simply that, however is designed particularly for

widespread immediate engineering workflows, together with options for perform enter variables,

few-shot immediate templates, immediate validation, and extra subtle composable chains of prompts.

For instance, to brainstorm potential future situations in Boba, you may

enter a strategic immediate, akin to “Present me the way forward for funds” or perhaps a

easy immediate just like the identify of an organization. The consumer interface appears to be like like

this:

The immediate template that powers this era appears to be like one thing like

this:

You're a visionary futurist. Given a strategic immediate, you'll create

{num_scenarios} futuristic, hypothetical situations that occur

{time_horizon} from now. Every situation should be a {optimism} model of the

future. Every situation should be {realism}.

Strategic immediate: {strategic_prompt}

As you may think about, the LLM’s response will solely be pretty much as good because the immediate

itself, so that is the place the necessity for good immediate engineering is available in.

Whereas this text isn’t meant to be an introduction to immediate

engineering, you’ll discover some methods at play right here, akin to beginning

by telling the LLM to Undertake a

Persona,

particularly that of a visionary futurist. This was a method we relied on

extensively in varied elements of the appliance to provide extra related and

helpful completions.

As a part of our test-and-learn immediate engineering workflow, we discovered that

iterating on the immediate instantly in ChatGPT affords the shortest path from

concept to experimentation and helps construct confidence in our prompts shortly.

Having stated that, we additionally discovered that we spent far more time on the consumer

interface (about 80%) than the AI itself (about 20%), particularly in

engineering the prompts.

We additionally saved our immediate templates so simple as potential, devoid of

conditional statements. After we wanted to drastically adapt the immediate primarily based

on the consumer enter, akin to when the consumer clicks “Add particulars (alerts,

threats, alternatives)”, we determined to run a unique immediate template

altogether, within the curiosity of protecting our immediate templates from turning into

too advanced and onerous to take care of.

Structured Response

Inform the LLM to reply in a structured information format

Virtually any software you construct with LLMs will probably must parse

the output of the LLM to create some structured or semi-structured information to

additional function on on behalf of the consumer. For Boba, we needed to work with

JSON as a lot as potential, so we tried many various variations of getting

GPT to return well-formed JSON. We have been fairly stunned by how effectively and

persistently GPT returns well-formed JSON primarily based on the directions in our

prompts. For instance, right here’s what the situation era response

directions would possibly appear to be:

You'll reply with solely a sound JSON array of situation objects.

Every situation object may have the next schema:

"title": <string>, //Have to be an entire sentence written prior to now tense

"abstract": <string>, //Situation description

"plausibility": <string>, //Plausibility of situation

"horizon": <string>

We have been equally stunned by the truth that it may assist pretty advanced

nested JSON schemas, even once we described the response schemas in pseudo-code.

Right here’s an instance of how we would describe a nested response for technique

era:

You'll reply in JSON format containing two keys, "questions" and "methods", with the respective schemas under:

"questions": [<list of question objects, with each containing the following keys:>]

"query": <string>,

"reply": <string>

"methods": [<list of strategy objects, with each containing the following keys:>]

"title": <string>,

"abstract": <string>,

"problem_diagnosis": <string>,

"winning_aspiration": <string>,

"where_to_play": <string>,

"how_to_win": <string>,

"assumptions": <string>

An fascinating aspect impact of describing the JSON response schema was that we

may additionally nudge the LLM to offer extra related responses within the output. For

instance, for the Artistic Matrix, we wish the LLM to consider many various

dimensions (the immediate, the row, the columns, and every concept that responds to the

immediate on the intersection of every row and column):

By offering a few-shot immediate that features a particular instance of the output

schema, we have been capable of get the LLM to “suppose” in the fitting context for every

concept (the context being the immediate, row and column):

You'll reply with a sound JSON array, by row by column by concept. For instance:

If Rows = "row 0, row 1" and Columns = "column 0, column 1" then you'll reply

with the next:

[

{{

"row": "row 0",

"columns": [

{{

"column": "column 0",

"ideas": [

{{

"title": "Idea 0 title for prompt and row 0 and column 0",

"description": "idea 0 for prompt and row 0 and column 0"

}}

]

}},

{{

"column": "column 1",

"concepts": [

{{

"title": "Idea 0 title for prompt and row 0 and column 1",

"description": "idea 0 for prompt and row 0 and column 1"

}}

]

}},

]

}},

{{

"row": "row 1",

"columns": [

{{

"column": "column 0",

"ideas": [

{{

"title": "Idea 0 title for prompt and row 1 and column 0",

"description": "idea 0 for prompt and row 1 and column 0"

}}

]

}},

{{

"column": "column 1",

"concepts": [

{{

"title": "Idea 0 title for prompt and row 1 and column 1",

"description": "idea 0 for prompt and row 1 and column 1"

}}

]

}}

]

}}

]

We may have alternatively described the schema extra succinctly and

typically, however by being extra elaborate and particular in our instance, we

efficiently nudged the standard of the LLM’s response within the route we

needed. We imagine it’s because LLMs “suppose” in tokens, and outputting (ie

repeating) the row and column values earlier than outputting the concepts offers extra

correct context for the concepts being generated.

On the time of this writing, OpenAI has launched a brand new characteristic known as

Perform

Calling, which

offers a unique method to obtain the aim of formatting responses. On this

strategy, a developer can describe callable perform signatures and their

respective schemas as JSON, and have the LLM return a perform name with the

respective parameters supplied in JSON that conforms to that schema. That is

significantly helpful in situations while you need to invoke exterior instruments, akin to

performing an internet search or calling an API in response to a immediate. Langchain

additionally offers comparable performance, however I think about they are going to quickly present native

integration between their exterior instruments API and the OpenAI perform calling

API.

Actual-Time Progress

Stream the response to the UI so customers can monitor progress

One of many first few belongings you’ll understand when implementing a graphical

consumer interface on high of an LLM is that ready for the complete response to

full takes too lengthy. We don’t discover this as a lot with ChatGPT as a result of

it streams the response character by character. This is a crucial consumer

interplay sample to bear in mind as a result of, in our expertise, a consumer can

solely wait on a spinner for therefore lengthy earlier than dropping endurance. In our case, we

didn’t need the consumer to attend quite a lot of seconds earlier than they began

seeing a response, even when it was a partial one.

Therefore, when implementing a co-pilot expertise, we extremely advocate

displaying real-time progress throughout the execution of prompts that take extra

than a number of seconds to finish. In our case, this meant streaming the

generations throughout the total stack, from the LLM again to the UI in real-time.

Fortuitously, the Langchain and OpenAI APIs present the flexibility to do exactly

that:

const chat = new ChatOpenAI({

temperature: 1,

modelName: 'gpt-3.5-turbo',

streaming: true,

callbackManager: onTokenStream ?

CallbackManager.fromHandlers({

async handleLLMNewToken(token) {

onTokenStream(token)

},

}) : undefined

});

This allowed us to offer the real-time progress wanted to create a smoother

expertise for the consumer, together with the flexibility to cease a era

mid-completion if the concepts being generated didn’t match the consumer’s

expectations:

Nonetheless, doing so provides plenty of extra complexity to your software

logic, particularly on the view and controller. Within the case of Boba, we additionally had

to carry out best-effort parsing of JSON and keep temporal state throughout the

execution of an LLM name. On the time of penning this, some new and promising

libraries are popping out that make this simpler for internet builders. For instance,

the Vercel AI SDK is a library for constructing

edge-ready AI-powered streaming textual content and chat UIs.

Choose and Carry Context

Seize and add related context info to subsequent motion

One of many largest limitations of a chat interface is {that a} consumer is

restricted to a single-threaded context: the dialog chat window. When

designing a co-pilot expertise, we advocate pondering deeply about how one can

design UX affordances for performing actions inside the context of a

choice, much like our pure inclination to level at one thing in actual

life within the context of an motion or description.

Choose and Carry Context permits the consumer to slender or broaden the scope of

interplay to carry out subsequent duties – often known as the duty context. That is usually

achieved by choosing a number of components within the consumer interface after which performing an motion on them.

Within the case of Boba, for instance, we use this sample to permit the consumer to have

a narrower, targeted dialog about an concept by choosing it (eg a situation, technique or

prototype idea), in addition to to pick out and generate variations of a

idea. First, the consumer selects an concept (both explicitly with a checkbox or implicitly by clicking a hyperlink):

Then, when the consumer performs an motion on the choice, the chosen merchandise(s) are carried over as context into the brand new process,

for instance as situation subprompts for technique era when the consumer clicks “Brainstorm methods and questions for this situation”,

or as context for a pure language dialog when the consumer clicks Discover:

Relying on the character and size of the context

you want to set up for a phase of dialog/interplay, implementing

Choose and Carry Context might be wherever from very simple to very tough. When

the context is transient and may match right into a single LLM context window (the utmost

dimension of a immediate that the LLM helps), we will implement it by immediate

engineering alone. For instance, in Boba, as proven above, you may click on “Discover”

on an concept and have a dialog with Boba about that concept. The best way we

implement this within the backend is to create a multi-message chat

dialog:

const chatPrompt = ChatPromptTemplate.fromPromptMessages([

HumanMessagePromptTemplate.fromTemplate(contextPrompt),

HumanMessagePromptTemplate.fromTemplate("{input}"),

]);

const formattedPrompt = await chatPrompt.formatPromptValue({

enter: enter

})

One other strategy of implementing Choose and Carry Context is to take action inside

the immediate by offering the context inside tag delimiters, as proven under. In

this case, the consumer has chosen a number of situations and desires to generate

methods for these situations (a method typically utilized in situation constructing and

stress testing of concepts). The context we need to carry into the technique

era is assortment of chosen situations:

Your questions and methods should be particular to realizing the next

potential future situations (if any)

<situations>

{scenarios_subprompt}

</situations>

Nonetheless, when your context outgrows an LLM’s context window, or when you want

to offer a extra subtle chain of previous interactions, you will have to

resort to utilizing exterior short-term reminiscence, which generally includes utilizing a

vector retailer (in-memory or exterior). We’ll give an instance of how one can do

one thing comparable in Embedded Exterior Data.

If you wish to study extra in regards to the efficient use of choice and

context in generative functions, we extremely advocate a chat given by

Linus Lee, of Notion, on the LLMs in Manufacturing convention: “Generative Experiences Past Chat”.

Contextual Dialog

Enable direct dialog with the LLM inside a context.

It is a particular case of Choose and Carry Context.

Whereas we needed Boba to interrupt out of the chat window interplay mannequin

as a lot as potential, we discovered that it’s nonetheless very helpful to offer the

consumer a “fallback” channel to converse instantly with the LLM. This enables us

to offer a conversational expertise for interactions we don’t assist in

the UI, and assist circumstances when having a textual pure language

dialog does take advantage of sense for the consumer.

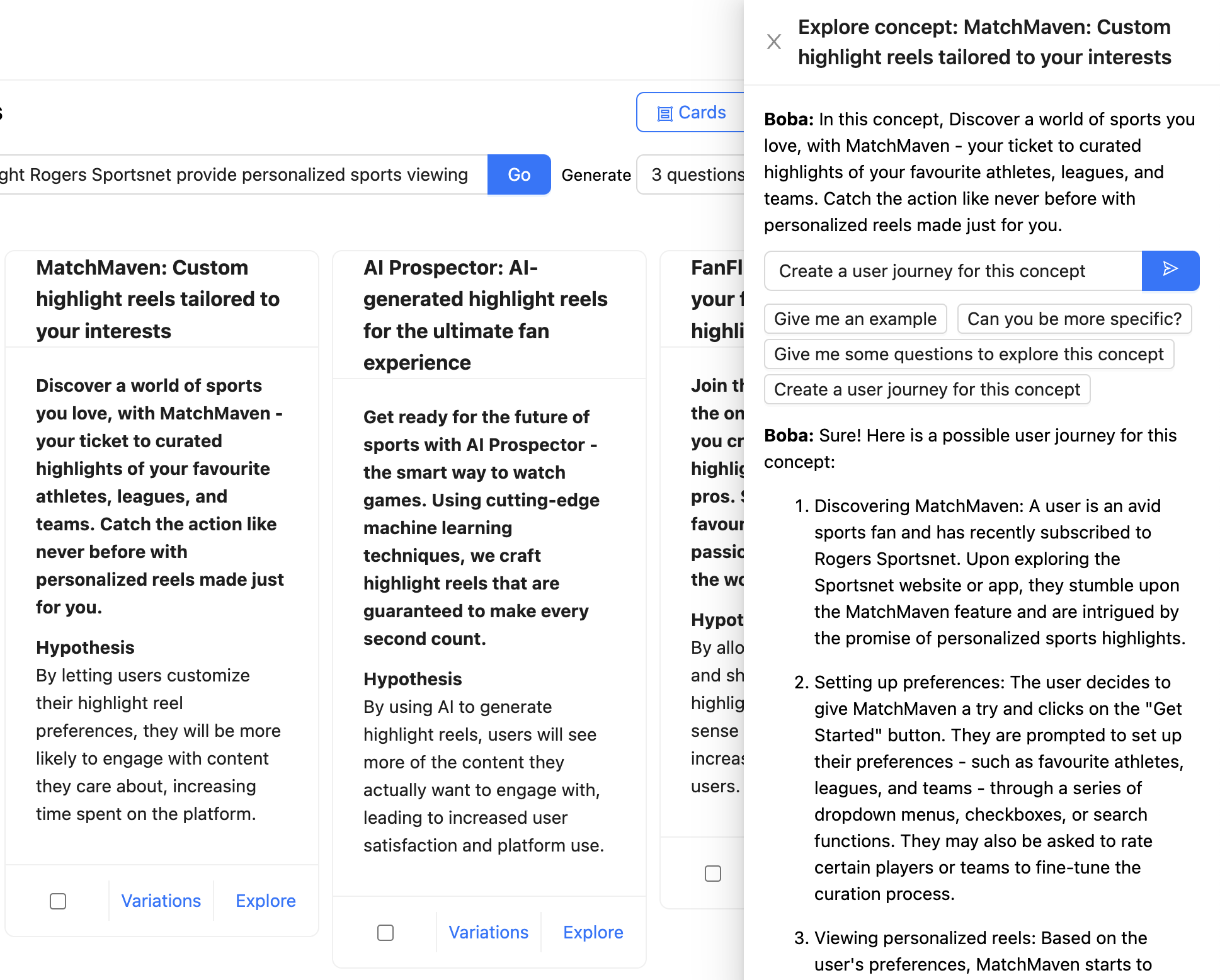

Within the instance under, the consumer is chatting with Boba a couple of idea for

personalised spotlight reels supplied by Rogers Sportsnet. The entire

context is talked about as a chat message (“On this idea, Uncover a world of

sports activities you’re keen on…”), and the consumer has requested Boba to create a consumer journey for

the idea. The response from the LLM is formatted and rendered as Markdown:

When designing generative co-pilot experiences, we extremely advocate

supporting contextual conversations along with your software. Be certain that to

supply examples of helpful messages the consumer can ship to your software so

they know what sort of conversations they’ll have interaction in. Within the case of

Boba, as proven within the screenshot above, these examples are provided as

message templates below the enter field, akin to “Are you able to be extra

particular?”

Out-Loud Considering

Inform LLM to generate intermediate outcomes whereas answering

Whereas LLMs don’t truly “suppose”, it’s price pondering metaphorically

a couple of phrase by Andrei Karpathy of OpenAI: “LLMs ‘suppose’ in

tokens.” What he means by this

is that GPTs are inclined to make extra reasoning errors when attempting to reply a

query immediately, versus while you give them extra time (i.e. extra tokens)

to “suppose”. In constructing Boba, we discovered that utilizing Chain of Thought (CoT)

prompting, or extra particularly, asking for a sequence of reasoning earlier than an

reply, helped the LLM to purpose its method towards higher-quality and extra

related responses.

In some elements of Boba, like technique and idea era, we ask the

LLM to generate a set of questions that develop on the consumer’s enter immediate

earlier than producing the concepts (methods and ideas on this case).

Whereas we show the questions generated by the LLM, an equally efficient

variant of this sample is to implement an inside monologue that the consumer is

not uncovered to. On this case, we’d ask the LLM to suppose by their

response and put that interior monologue right into a separate a part of the response, that

we will parse out and ignore within the outcomes we present to the consumer. A extra elaborate

description of this sample might be present in OpenAI’s GPT Greatest Practices

Information, within the

part Give GPTs time to

“suppose”

As a consumer expertise sample for generative functions, we discovered it useful

to share the reasoning course of with the consumer, wherever acceptable, in order that the

consumer has extra context to iterate on the following motion or immediate. For

instance, in Boba, figuring out the sorts of questions that Boba considered provides the

consumer extra concepts about divergent areas to discover, or to not discover. It additionally

permits the consumer to ask Boba to exclude sure courses of concepts within the subsequent

iteration. Should you do go down this path, we advocate making a UI affordance

for hiding a monologue or chain of thought, akin to Boba’s characteristic to toggle

examples proven above.

Iterative Response

Present affordances for the consumer to have a back-and-forth

interplay with the co-pilot

LLMs are sure to both misunderstand the consumer’s intent or just

generate responses that don’t meet the consumer’s expectations. Therefore, so is

your generative software. One of the vital highly effective capabilities that

distinguishes ChatGPT from conventional chatbots is the flexibility to flexibly

iterate on and refine the route of the dialog, and therefore enhance

the standard and relevance of the responses generated.

Equally, we imagine that the standard of a generative co-pilot

expertise is determined by the flexibility of a consumer to have a fluid back-and-forth

interplay with the co-pilot. That is what we name the Iterate on Response

sample. This could contain a number of approaches:

- Correcting the unique enter supplied to the appliance/LLM

- Refining part of the co-pilot’s response to the consumer

- Offering suggestions to nudge the appliance in a unique route

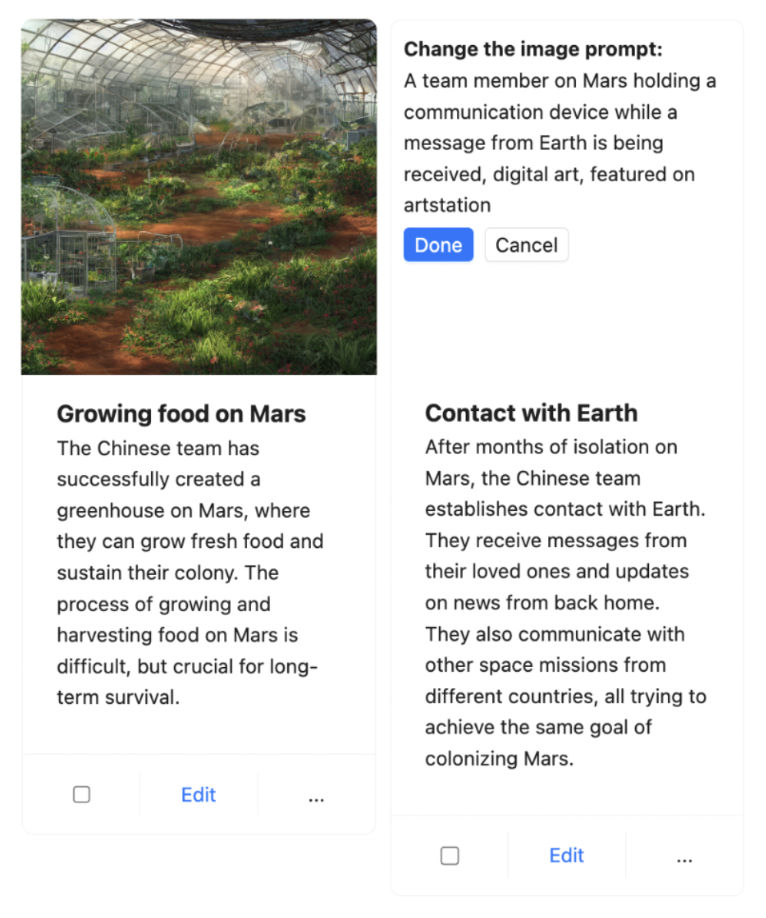

One instance of the place we’ve carried out Iterative Response

in

Boba is in Storyboarding. Given a immediate (both transient or elaborate), Boba

can generate a visible storyboard, which incorporates a number of scenes, with every

scene having a story script and a picture generated with Steady

Diffusion. For instance, under is a partial storyboard describing the expertise of a

“Resort of the Future”:

Since Boba makes use of the LLM to generate the Steady Diffusion immediate, we don’t

know the way good the pictures will prove–so it’s a little bit of a hit and miss with

this characteristic. To compensate for this, we determined to offer the consumer the

skill to iterate on the picture immediate in order that they’ll refine the picture for

a given scene. The consumer would do that by merely clicking on the picture,

updating the Steady Diffusion immediate, and urgent Accomplished, upon which Boba

would generate a brand new picture with the up to date immediate, whereas preserving the

remainder of the storyboard:

One other instance Iterative Response that we

are at present engaged on is a characteristic for the consumer to offer suggestions

to Boba on the standard of concepts generated, which might be a mixture

of Choose and Carry Context and Iterative Response. One

strategy could be to provide a thumbs up or thumbs down on an concept, and

letting Boba incorporate that suggestions into a brand new or subsequent set of

suggestions. One other strategy could be to offer conversational

suggestions within the type of pure language. Both method, we want to

do that in a method that helps reinforcement studying (the concepts get

higher as you present extra suggestions). An excellent instance of this could be

Github Copilot, which demotes code solutions which have been ignored by

the consumer in its rating of subsequent finest code solutions.

We imagine that this is without doubt one of the most necessary, albeit

generically-framed, patterns to implementing efficient generative

experiences. The difficult half is incorporating the context of the

suggestions into subsequent responses, which can typically require implementing

short-term or long-term reminiscence in your software due to the restricted

dimension of context home windows.

Embedded Exterior Data

Mix LLM with different info sources to entry information past

the LLM’s coaching set

As alluded to earlier on this article, oftentimes your generative

functions will want the LLM to include exterior instruments (akin to an API

name) or exterior reminiscence (short-term or long-term). We bumped into this

situation once we have been implementing the Analysis characteristic in Boba, which

permits customers to reply qualitative analysis questions primarily based on publicly

accessible info on the internet, for instance “How is the lodge trade

utilizing generative AI at present?”:

To implement this, we needed to “equip” the LLM with Google as an exterior

internet search device and provides the LLM the flexibility to learn probably lengthy

articles that will not match into the context window of a immediate. We additionally

needed Boba to have the ability to chat with the consumer about any related articles the

consumer finds, which required implementing a type of short-term reminiscence. Lastly,

we needed to offer the consumer with correct hyperlinks and references that have been

used to reply the consumer’s analysis query.

The best way we carried out this in Boba is as follows:

- Use a Google SERP API to carry out the net search primarily based on the consumer’s question

and get the highest 10 articles (search outcomes) - Learn the total content material of every article utilizing the Extract API

- Save the content material of every article in short-term reminiscence, particularly an

in-memory vector retailer. The embeddings for the vector retailer are generated utilizing

the OpenAI API, and primarily based on chunks of every article (versus embedding the complete

article itself). - Generate an embedding of the consumer’s search question

- Question the vector retailer utilizing the embedding of the search question

- Immediate the LLM to reply the consumer’s authentic question in pure language,

whereas prefixing the outcomes of the vector retailer question as context into the LLM

immediate.

This may occasionally sound like plenty of steps, however that is the place utilizing a device like

Langchain can pace up your course of. Particularly, Langchain has an

end-to-end chain known as VectorDBQAChain, and utilizing that to carry out the

question-answering took only some traces of code in Boba:

const researchArticle = async (article, immediate) => {

const mannequin = new OpenAI({});

const textual content = article.textual content;

const textSplitter = new RecursiveCharacterTextSplitter({ chunkSize: 1000 });

const docs = await textSplitter.createDocuments([text]);

const vectorStore = await HNSWLib.fromDocuments(docs, new OpenAIEmbeddings());

const chain = VectorDBQAChain.fromLLM(mannequin, vectorStore);

const res = await chain.name({

input_documents: docs,

question: immediate + ". Be detailed in your response.",

});

return { research_answer: res.textual content };

};

The article textual content accommodates the complete content material of the article, which can not

match inside a single immediate. So we carry out the steps described above. As you may

see, we used an in-memory vector retailer known as HNSWLib (Hierarchical Navigable

Small World). HNSW graphs are among the many top-performing indexes for vector

similarity search. Nonetheless, for bigger scale use circumstances and/or long-term reminiscence,

we advocate utilizing an exterior vector DB like Pinecone or Weaviate.

We additionally may have additional streamlined our workflow by utilizing Langchain’s

exterior instruments API to carry out the Google search, however we determined in opposition to it

as a result of it offloaded an excessive amount of determination making to Langchain, and we have been getting

blended, gradual and harder-to-parse outcomes. One other strategy to implementing

exterior instruments is to make use of Open AI’s not too long ago launched Perform Calling

API, which we

talked about earlier on this article.

To summarize, we mixed two distinct methods to implement Embedded Exterior Data:

- Use Exterior Device: Search and browse articles utilizing Google SERP and Extract

APIs - Use Exterior Reminiscence: Brief-term reminiscence utilizing an in-memory vector retailer

(HNSWLib)