In this blog, we walk through building a real-time dashboard for operational monitoring and analytics on streaming event data from Kafka, which often requires complex SQL, including filtering, aggregations, and joins with other data sets.

Apache Kafka is a widely used distributed data log built to handle streams of unstructured and semi-structured event data at massive scales. Organizations often use Kafka to track live application events ranging from sensor data to user activity, and the ability to visualize and dig deeper into this data can be essential to understanding business performance.

Tableau, also widely popular, is a tool for building interactive dashboards and visualizations.

In this post, we’ll create an example real-time Tableau dashboard on streaming data in Kafka in a series of easy steps, with no upfront schema definition or ETL involved. We’ll use Rockset as a data sink that ingests, indexes, and makes the Kafka data queryable using SQL, and JDBC to connect Tableau and Rockset.

Streaming Data from Reddit

For this example, let’s look at real-time Reddit activity over the course of a week. Rather than posts, let’s look at comments, which are perhaps a better proxy for engagement. We’ll use the Kafka Connect Reddit source connector to pipe new Reddit comments into our Kafka cluster. Each individual comment looks like this:

```json
{
  "payload": {
    "controversiality": 0,
    "name": "t1_ez72epm",
    "body": "I really like that they loved it too! Thanks!",
    "stickied": false,
    "replies": {
      "data": {
        "children": []
      },
      "kind": "Listing"
    },
    "saved": false,
    "archived": false,
    "can_gild": true,
    "gilded": 0,
    "score": 1,
    "author": "natsnowchuk",
    "link_title": "Our 4 month old loves “airplane” rides. Hoping he enjoys the real airplane ride this much in December.",
    "parent_id": "t1_ez6v8xa",
    "created_utc": 1567718035,
    "subreddit_type": "public",
    "id": "ez72epm",
    "subreddit_id": "t5_2s3i3",
    "link_id": "t3_d0225y",
    "link_author": "natsnowchuk",
    "subreddit": "Mommit",
    "link_url": "https://v.redd.it/pd5q8b4ujsk31",
    "score_hidden": false
  }
}
```
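Each Kafka message wraps the Reddit comment in a `payload` object, so anything reading the topic directly has to reach one level down before it sees fields such as `subreddit` or `created_utc`. A minimal Python sketch of decoding one message value, assuming it arrives as UTF-8 JSON shaped like the sample above (`parse_comment` is a hypothetical helper, not part of the connector):

```python
import json
from datetime import datetime, timezone


def parse_comment(raw: bytes) -> dict:
    """Decode one Kafka message value and pull out a few comment fields.

    Assumes the value is UTF-8 JSON with the comment nested under "payload",
    as in the sample comment above.
    """
    payload = json.loads(raw)["payload"]
    return {
        "id": payload["id"],
        "subreddit": payload["subreddit"],
        "body": payload["body"],
        # created_utc is a Unix timestamp in seconds; convert it to an aware UTC datetime
        "created": datetime.fromtimestamp(payload["created_utc"], tz=timezone.utc),
    }


# A trimmed-down copy of the sample comment
sample = json.dumps({
    "payload": {
        "id": "ez72epm",
        "subreddit": "Mommit",
        "body": "I really like that they loved it too! Thanks!",
        "created_utc": 1567718035,
    }
}).encode("utf-8")

comment = parse_comment(sample)
print(comment["subreddit"], comment["created"].isoformat())
# prints: Mommit 2019-09-05T21:13:55+00:00
```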

Connecting Kafka to Rockset

For this demo, I’ll assume we have already set up our Kafka topic, installed the Confluent Reddit Connector, and followed the accompanying instructions to set up a comments topic that processes all new comments from Reddit in real time.

To get this data into Rockset, we’ll first need to create a new Kafka integration in Rockset. All we need for this step is the name of the Kafka topic that we’d like to use as a data source, and the type of that data (JSON / Avro).

Once we’ve created the integration, we can see a list of attributes that we need to set up our Kafka Connect connector. For the purposes of this demo, we’ll use the Confluent Platform to manage our cluster, but for self-hosted Kafka clusters these attributes can be copied into the relevant .properties file as specified in the connector documentation. Either way, as long as we have the Rockset Kafka Connector installed, we can add these attributes manually in the Kafka UI.
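The same attributes can also be submitted to a running Kafka Connect worker through its REST API (`POST /connectors`) instead of the UI. A rough Python sketch follows. The standard Kafka Connect keys (`connector.class`, `tasks.max`, `topics`) are real, but the `connector.class` value, the `rockset.*` property names, and `format` are assumptions here, so copy the exact names and values Rockset lists for your integration. The helper names, topic name, and worker URL are hypothetical.

```python
import json
import urllib.request


def rockset_sink_config(topic: str, integration_key: str, api_server: str) -> dict:
    """Build a Kafka Connect connector definition for a Rockset sink (hypothetical helper).

    ASSUMPTION: the connector class and the rockset.* / format property names
    below are placeholders; use the attributes shown on the Rockset integration page.
    """
    return {
        "name": f"rockset-sink-{topic}",
        "config": {
            "connector.class": "rockset.RocksetSinkConnector",  # assumed class name
            "tasks.max": "1",
            "topics": topic,
            "rockset.integration.key": integration_key,  # assumed property name
            "rockset.apiserver.url": api_server,  # assumed property name
            "format": "json",  # assumed; should match the data type chosen for the integration
        },
    }


def create_connector(connect_url: str, definition: dict) -> dict:
    """POST the definition to a Kafka Connect worker, e.g. http://localhost:8083."""
    request = urllib.request.Request(
        f"{connect_url}/connectors",
        data=json.dumps(definition).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


definition = rockset_sink_config("reddit-comments", "<integration key>", "<api server url>")
print(json.dumps(definition, indent=2))
# create_connector("http://localhost:8083", definition)  # requires a running Connect worker
```

Keeping the definition builder separate from the HTTP call makes it easy to inspect or version the config before anything touches the cluster.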

Now that we have the Rockset Kafka Sink set up, we can create a Rockset collection and start ingesting data!

We now have data streaming live from Reddit directly into Rockset via Kafka, without having to worry about schemas or ETL at all.

Connecting Rockset to Tableau

Let’s see this data in Tableau!

I’ll assume we already have an account for Tableau Desktop.

To connect Tableau to Rockset, we first need to download the Rockset JDBC driver from Maven and place it in ~/Library/Tableau/Drivers on Mac or C:\Program Files\Tableau\Drivers on Windows.

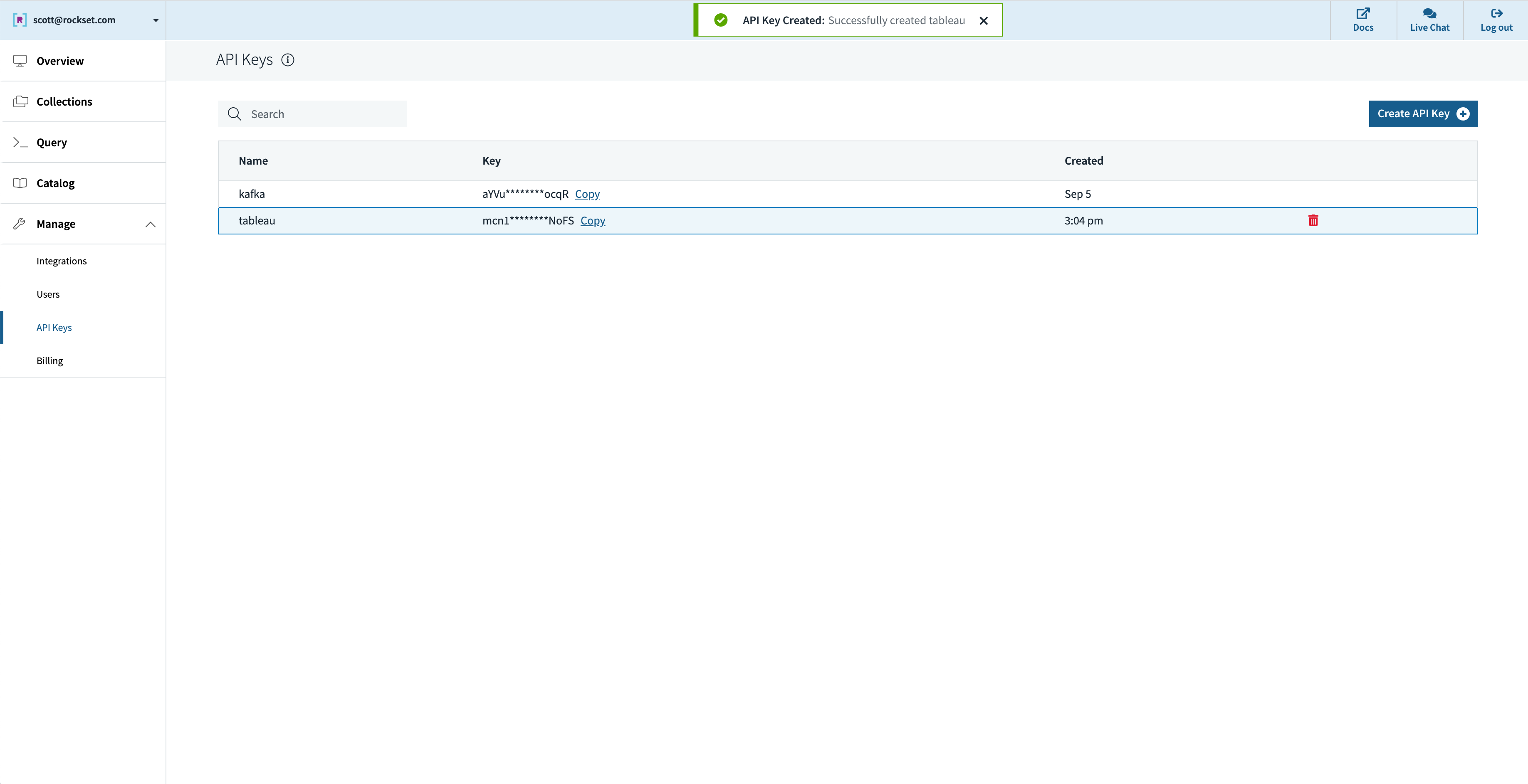
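If you install the driver on several machines, the copy step is easy to script. A small Python sketch, assuming the jar has already been downloaded from Maven to a local path; the function names are hypothetical, and writing under `C:\Program Files` usually requires an administrator shell.

```python
import os
import platform
import shutil


def tableau_driver_dir(system: str) -> str:
    """Return the Tableau Desktop driver folder for a platform.system() value."""
    if system == "Darwin":  # macOS
        return os.path.expanduser("~/Library/Tableau/Drivers")
    if system == "Windows":
        return r"C:\Program Files\Tableau\Drivers"
    raise ValueError(f"no Tableau Desktop driver folder known for {system!r}")


def install_driver(jar_path: str) -> str:
    """Copy the downloaded Rockset JDBC jar into Tableau's driver folder."""
    target = tableau_driver_dir(platform.system())
    os.makedirs(target, exist_ok=True)  # create the folder if Tableau hasn't yet
    return shutil.copy(jar_path, target)  # returns the path of the copied file
```

Restart Tableau Desktop after copying so it picks up the new driver.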

Next, let’s create an API key in Rockset that Tableau will use to authenticate requests:

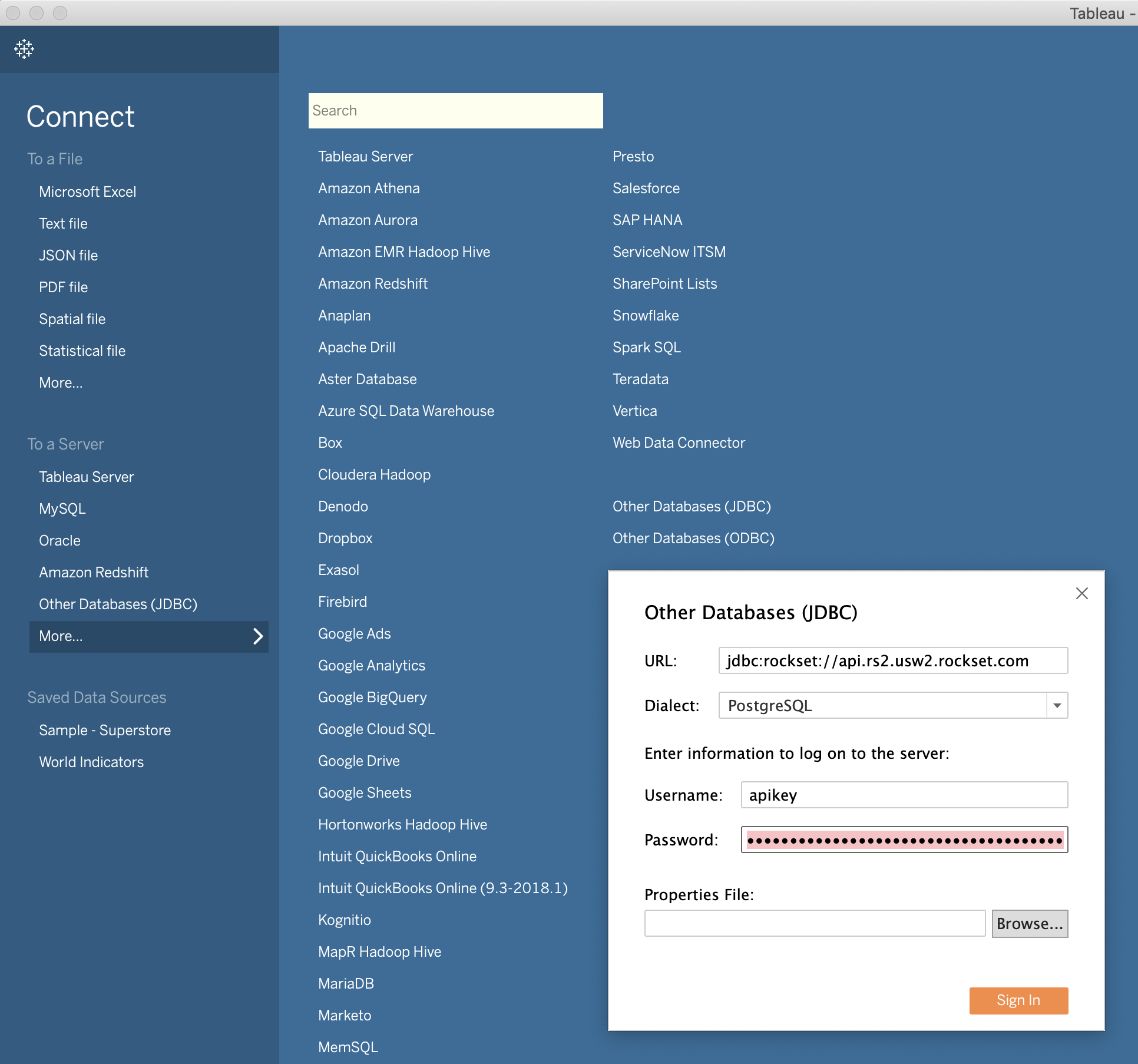

In Tableau, we connect to Rockset by choosing “Other Databases (JDBC)” and filling in the fields, with our API key as the password.

That’s all it takes!

Creating real-time dashboards

Now that we have data streaming into Rockset, we can start asking questions. Given the nature of the data, we’ll first write the queries we need in Rockset, and then use them to power our live Tableau dashboards via the ‘Custom SQL’ feature.

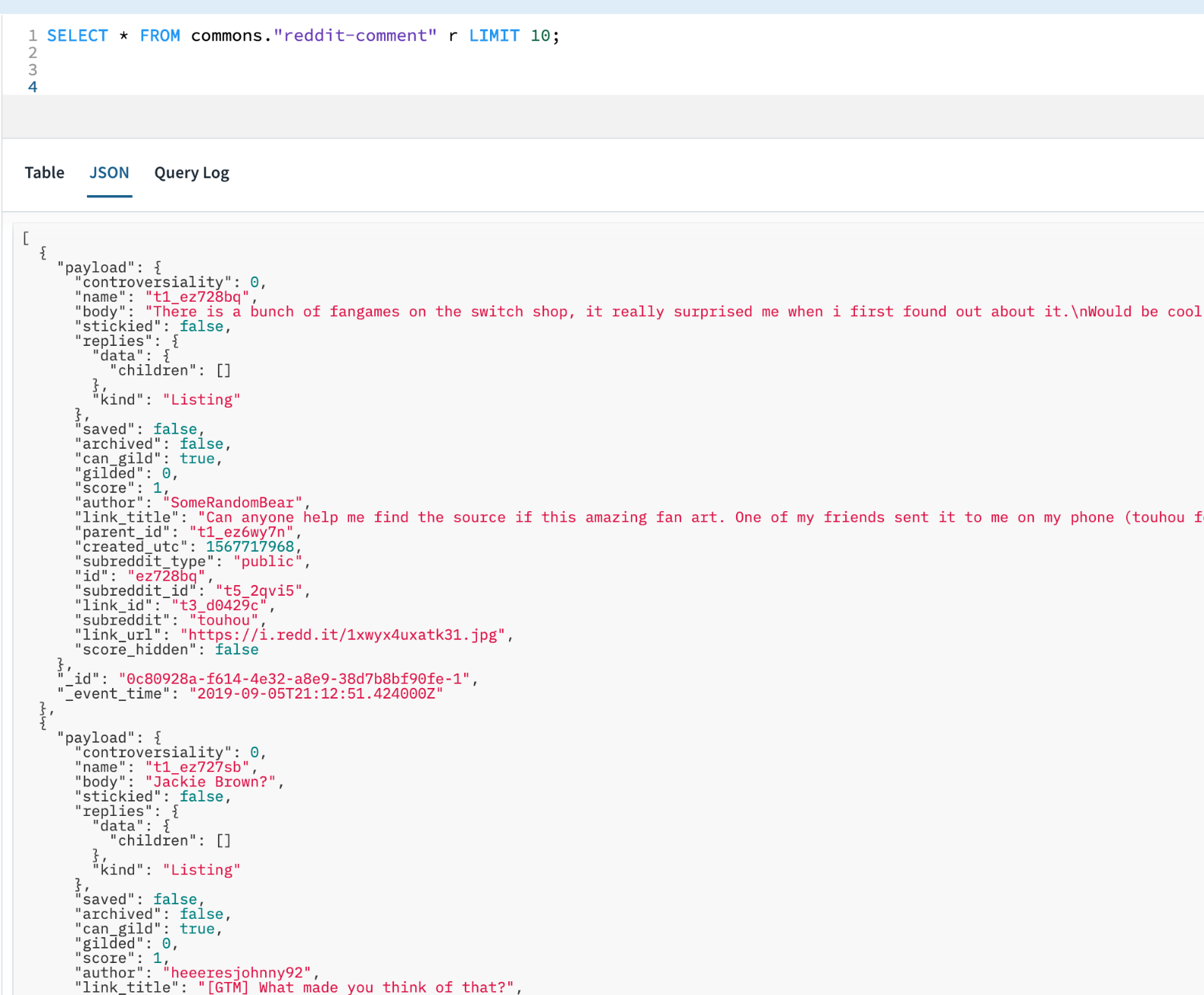

Let’s first look at the shape of the data in Rockset.

Because most of the important fields are nested, we won’t be able to access them directly from Tableau. Instead, we’ll write the SQL ourselves in Rockset and use the ‘Custom SQL’ option to bring the results into Tableau.

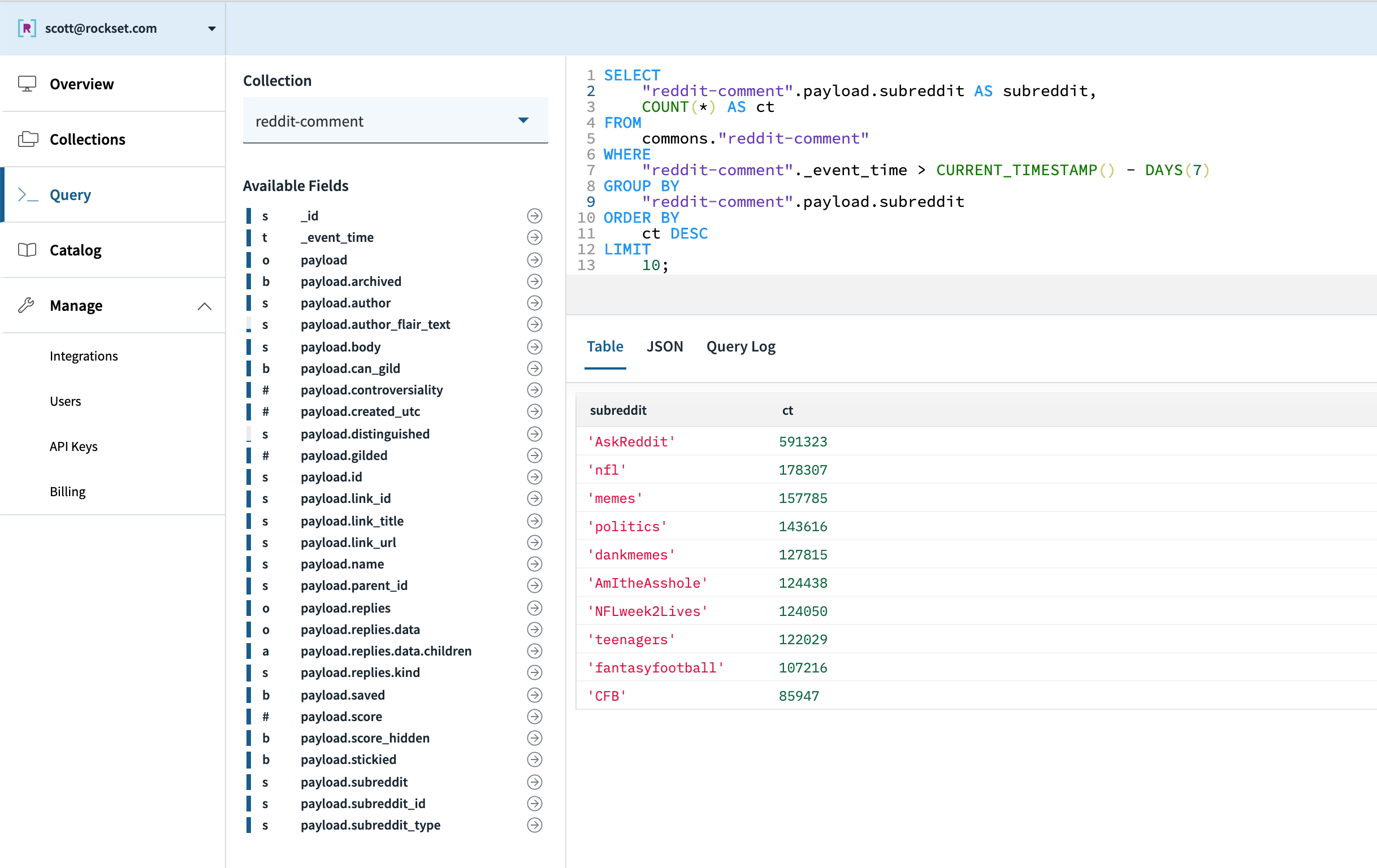
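Conceptually, the custom SQL does for Tableau what the Python sketch below does: it lifts nested attributes such as `payload.subreddit` up into flat, top-level columns that Tableau can treat as dimensions and measures. This only illustrates the flattening idea; it is not how Rockset evaluates queries, and `flatten` is a hypothetical helper.

```python
def flatten(doc: dict, prefix: str = "") -> dict:
    """Flatten nested objects into dotted column names, e.g. payload.subreddit.

    Lists and scalar values are kept as-is; only dicts are expanded.
    """
    flat = {}
    for key, value in doc.items():
        column = f"{prefix}{key}"
        if isinstance(value, dict):
            # Recurse into nested objects, extending the dotted prefix
            flat.update(flatten(value, column + "."))
        else:
            flat[column] = value
    return flat


row = flatten({"payload": {"subreddit": "Mommit", "created_utc": 1567718035}})
print(row)
# prints: {'payload.subreddit': 'Mommit', 'payload.created_utc': 1567718035}
```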

To start with, let’s explore general Reddit trends over the last week. If comments reflect engagement, which subreddits have the most engaged users? We can write a basic query to find the subreddits with the highest activity over the last week:

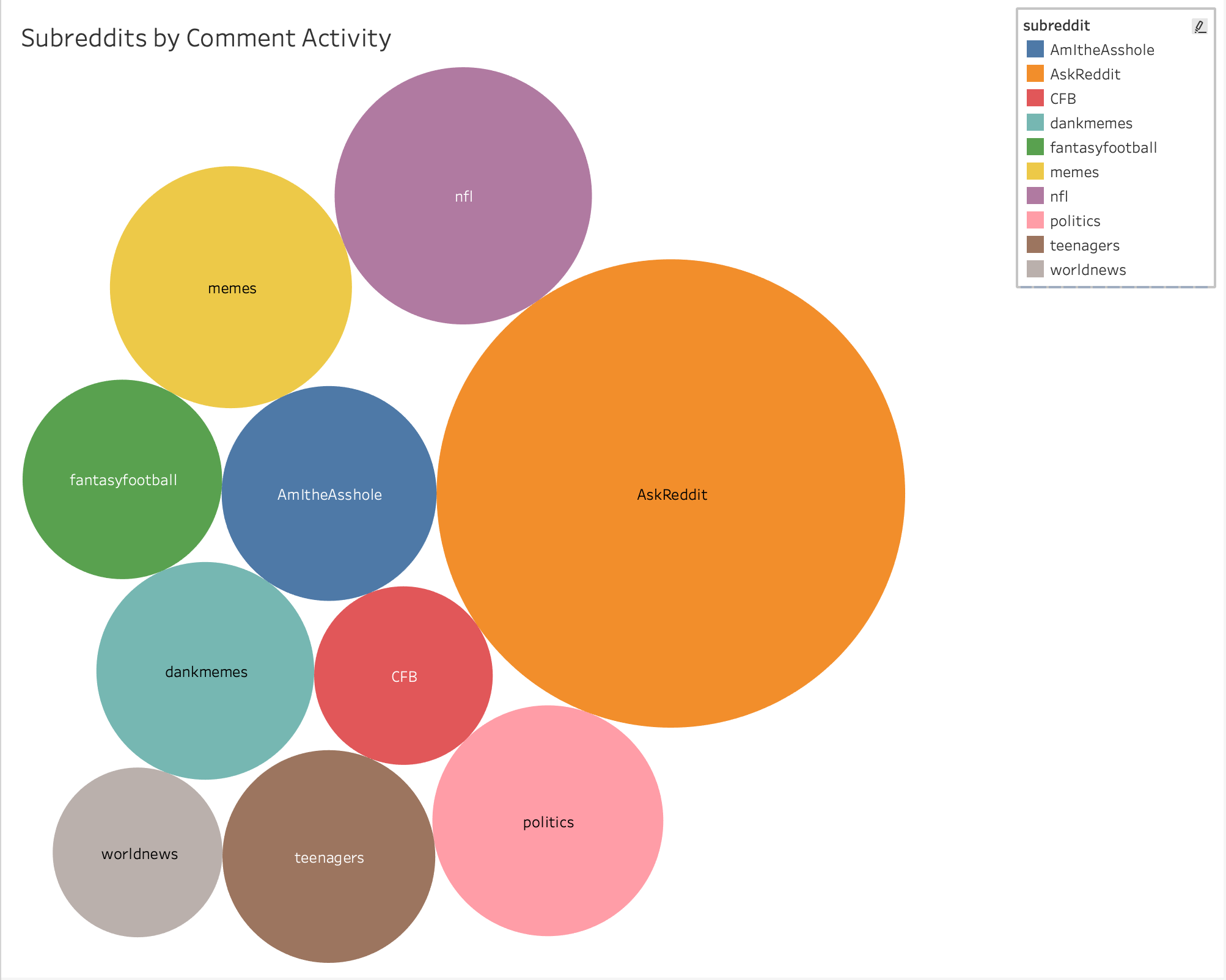
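The logic behind this kind of query is a count of comments per subreddit over a trailing seven-day window, sorted in descending order and limited to the top 10. For reference, here is that aggregation sketched in Python over already-decoded comments, assumed to be dicts with `subreddit` and `created_utc` as in the payload above. `top_subreddits` is a hypothetical name; in the dashboard, this work is done by the SQL query in Rockset.

```python
from collections import Counter
from datetime import datetime, timedelta, timezone


def top_subreddits(comments, now, n=10, window=timedelta(days=7)):
    """Return the n subreddits with the most comments in the window ending at `now`.

    `comments` is an iterable of dicts with "subreddit" and "created_utc" (Unix seconds).
    """
    cutoff = (now - window).timestamp()
    # Roughly a time filter plus GROUP BY subreddit with a COUNT(*)
    counts = Counter(c["subreddit"] for c in comments if c["created_utc"] >= cutoff)
    # Roughly ORDER BY the count DESC, LIMIT n
    return counts.most_common(n)


now = datetime(2019, 9, 10, tzinfo=timezone.utc)
t = int(now.timestamp())
comments = [
    {"subreddit": "nfl", "created_utc": t - 60},
    {"subreddit": "nfl", "created_utc": t - 120},
    {"subreddit": "CFB", "created_utc": t - 180},
    {"subreddit": "politics", "created_utc": t - 8 * 86400},  # older than a week, ignored
]
print(top_subreddits(comments, now, n=2))
# prints: [('nfl', 2), ('CFB', 1)]
```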

We can easily create a custom SQL data source to represent this query and view the results in Tableau:

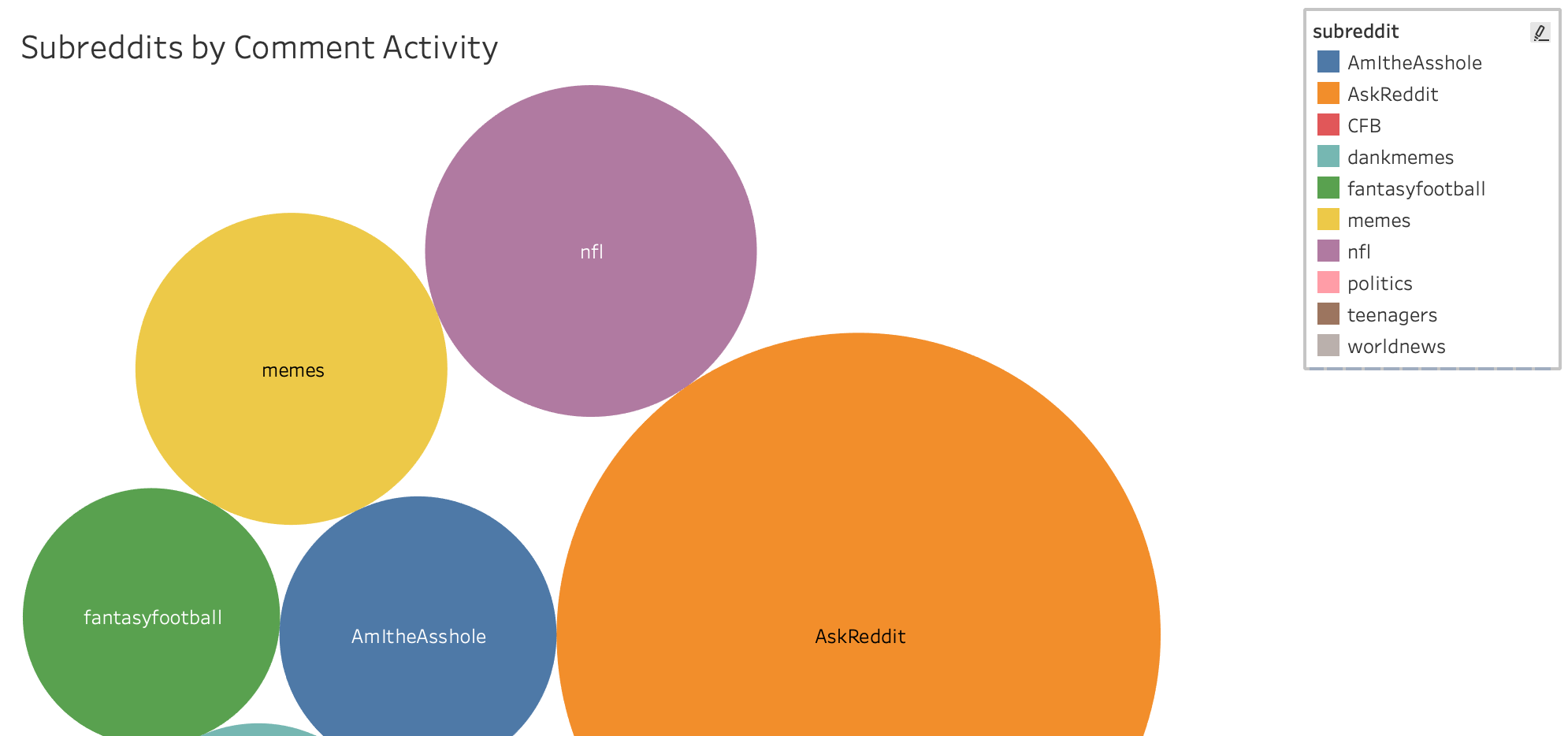

Here’s the final chart after collecting a week of data.

Interestingly, Reddit seems to love football: we see three football-related subreddits in the top 10 (r/nfl, r/fantasyfootball, and r/CFB). Or at the very least, the Redditors who love football are highly active at the start of the season. Let’s dig into this a bit more. Are there any patterns we can observe in day-to-day subreddit activity? One might hypothesize that NFL-related subreddits spike on Sundays, while NCAA-related ones spike on Saturdays instead.

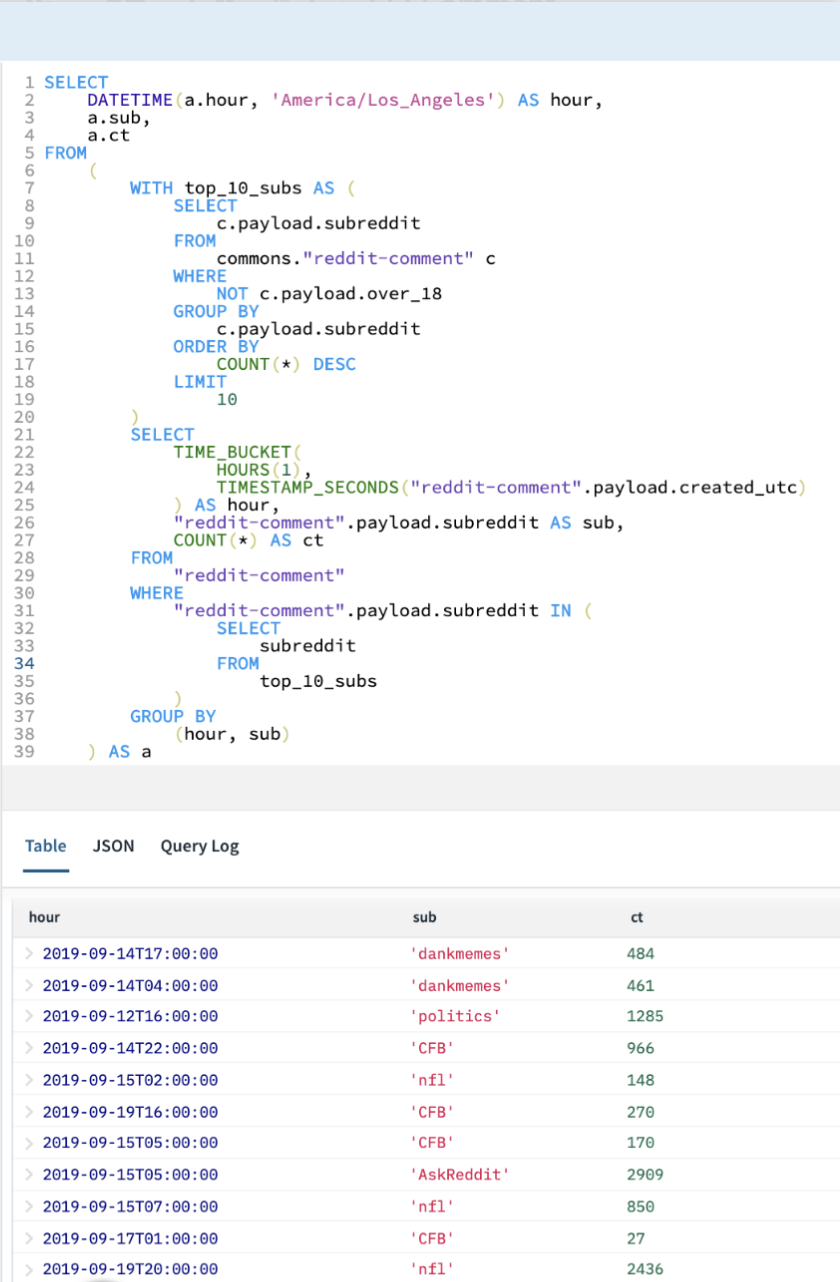

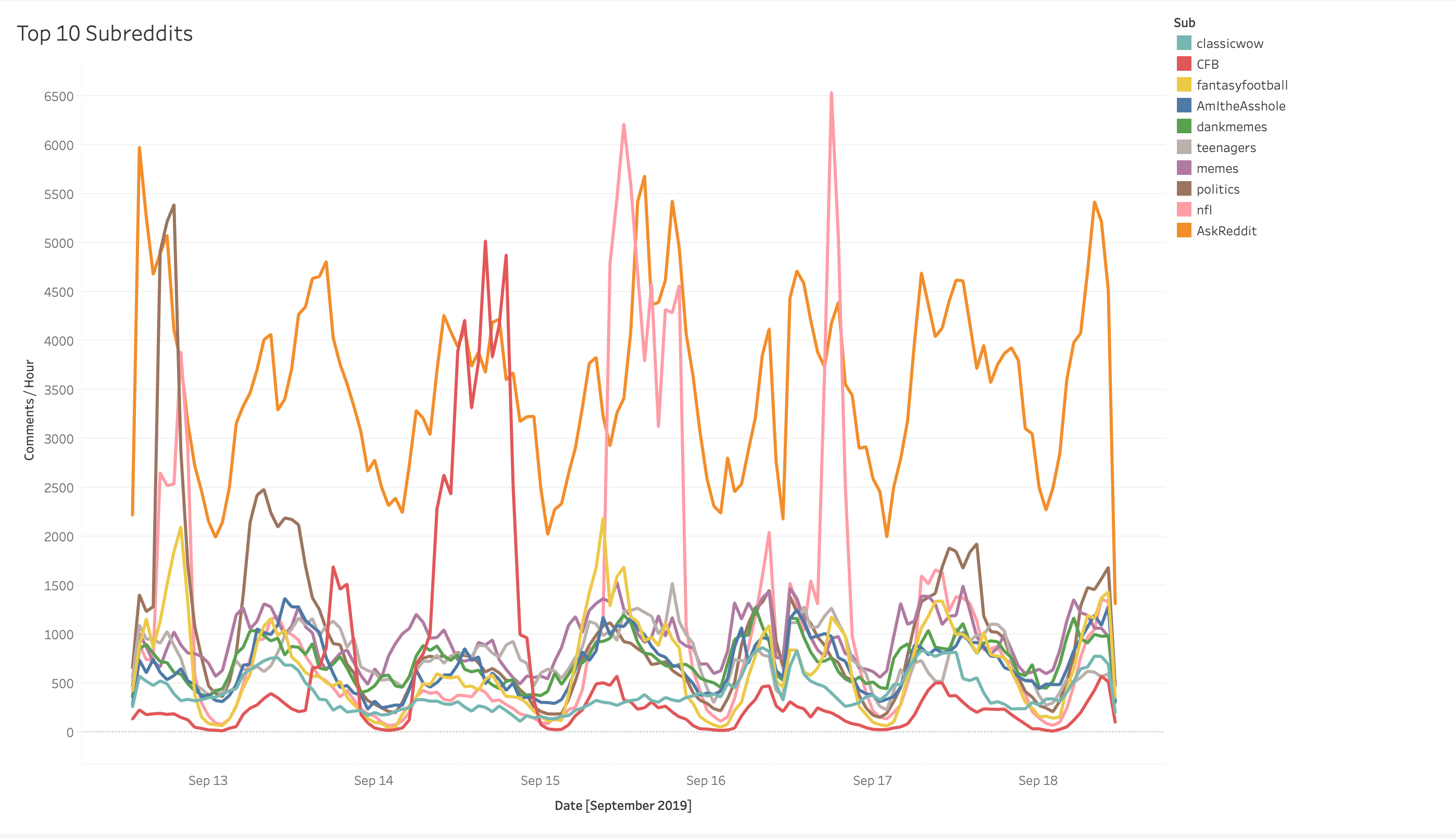

To answer this question, let’s write a query that buckets comments per subreddit per hour and plot the results. We’ll need some subqueries to find the top subreddits overall.
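The shape of that query, an inner step that picks the most active subreddits overall and an outer step that buckets only their comments by hour, can be sketched in Python as follows. The input format and the helper name `hourly_activity` are assumptions. Note that truncating Unix timestamps buckets comments by UTC hour, so whether a spike lands on "Saturday" or "Sunday" depends on the time zone the chart displays.

```python
from collections import Counter, defaultdict


def hourly_activity(comments, n=10):
    """Comments per hour for each of the n most active subreddits.

    `comments` is an iterable of dicts with "subreddit" and "created_utc" (Unix seconds).
    Returns {subreddit: {hour_start: count}}, with hour_start in Unix seconds (UTC).
    """
    comments = list(comments)
    # Inner step: find the top n subreddits across the whole time range
    top = {name for name, _ in Counter(c["subreddit"] for c in comments).most_common(n)}
    # Outer step: bucket only those subreddits' comments by hour
    buckets = defaultdict(Counter)
    for c in comments:
        if c["subreddit"] in top:
            hour_start = c["created_utc"] - c["created_utc"] % 3600
            buckets[c["subreddit"]][hour_start] += 1
    return {name: dict(sorted(counts.items())) for name, counts in buckets.items()}


comments = [
    {"subreddit": "nfl", "created_utc": 0},
    {"subreddit": "nfl", "created_utc": 100},
    {"subreddit": "nfl", "created_utc": 3700},
    {"subreddit": "CFB", "created_utc": 7200},
    {"subreddit": "CFB", "created_utc": 7300},
    {"subreddit": "Mommit", "created_utc": 10},  # not in the top 2, so dropped
]
print(hourly_activity(comments, n=2))
# prints: {'nfl': {0: 2, 3600: 1}, 'CFB': {7200: 2}}
```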

Unsurprisingly, we do see big spikes for r/CFB on Saturday and an even bigger spike for r/nfl on Sunday (although, somewhat surprisingly, the single most active hour of the week on r/nfl came during Monday Night Football, as Baker Mayfield led the Browns to a convincing victory over the injury-plagued Jets). Also interesting: peak game-day activity in r/nfl surpassed the highs of any other subreddit in any other one-hour interval, including r/politics during the Democratic Primary Debate the previous Monday.

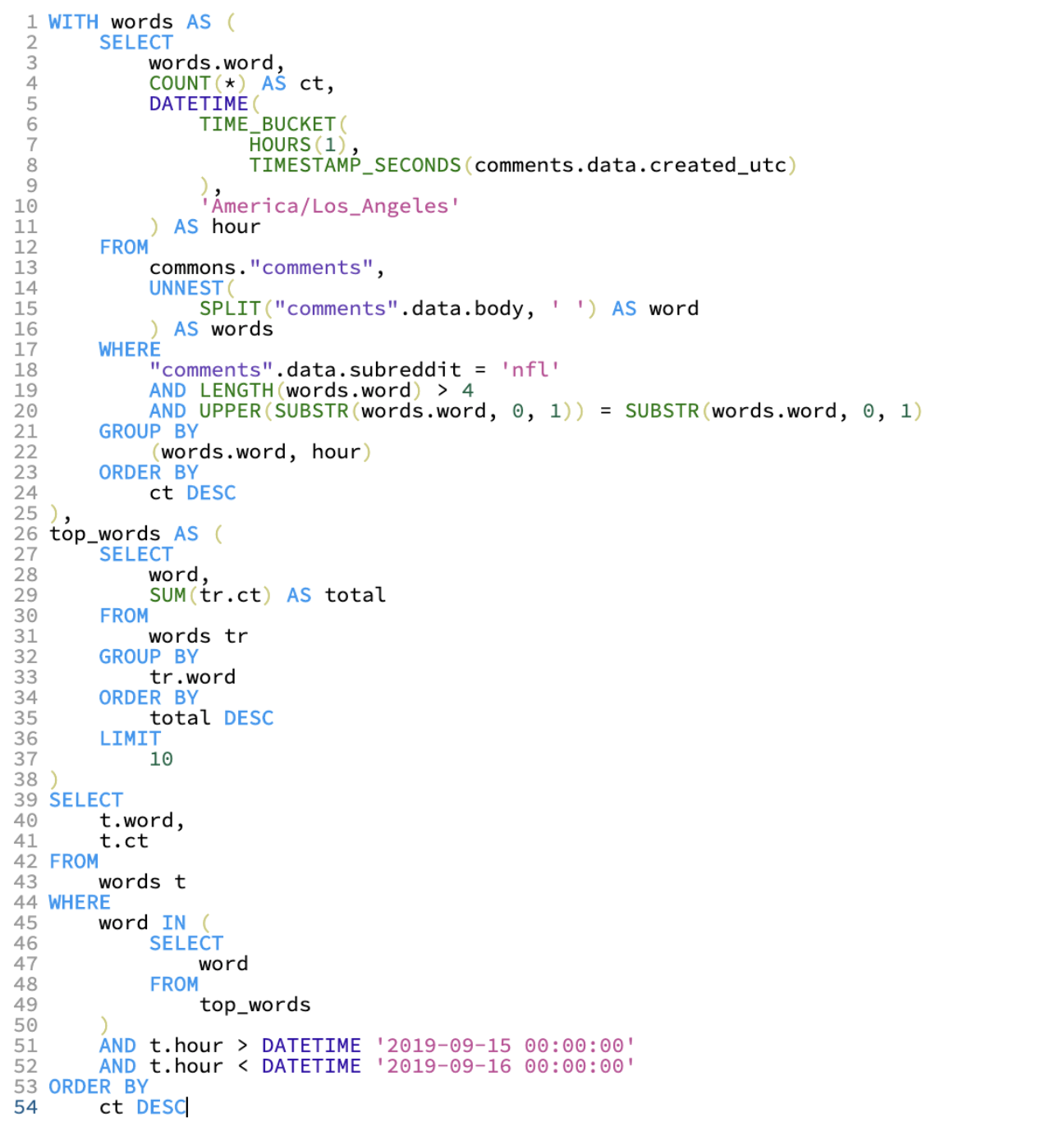

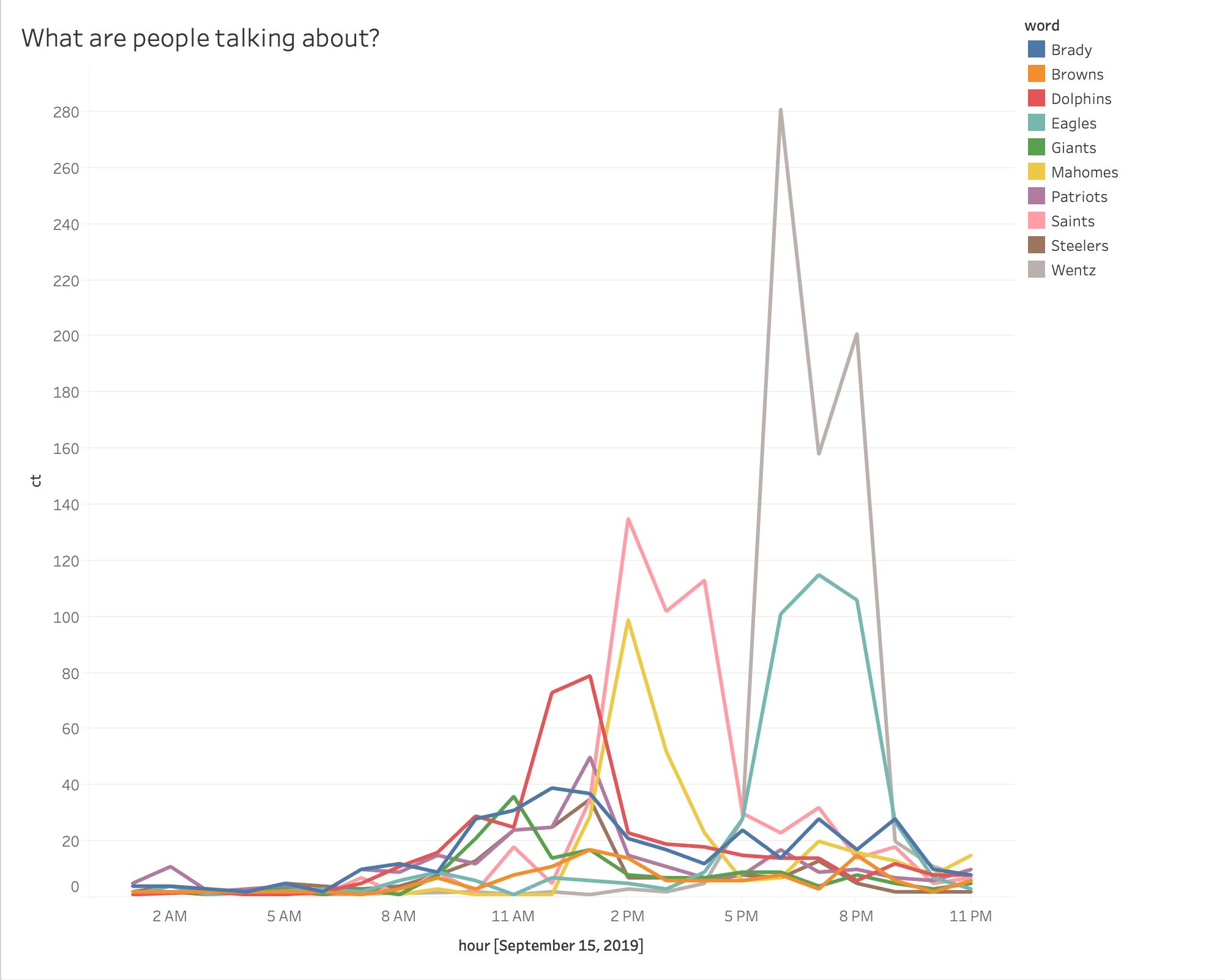

Finally, let’s dig a bit deeper into what exactly had the folks at r/nfl so fired up. We can write a query to find the 10 most frequently occurring player / team names and plot them over time as well. Let’s focus on Sunday specifically.

Note that to get this data, we had to split each comment into words, unnest the resulting array, and join it back against the original collection. Not a trivial query!
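The split-and-unnest step is easier to picture in a procedural language. The Python sketch below tokenizes each comment body, counts occurrences of names from a watch list, and keeps per-hour counts for the most-mentioned ones. In Python the "join back" is implicit, because each word stays attached to its comment’s timestamp. The watch list `names`, the simple lowercase word tokenizer, and the helper name are all assumptions for illustration; the original query’s matching rules aren’t shown here.

```python
import re
from collections import Counter


def mentions_over_time(comments, names, n=10):
    """Find the n most-mentioned names and their hourly mention counts.

    `comments`: iterable of dicts with "body" and "created_utc" (Unix seconds).
    `names`: hypothetical watch list of lowercase, single-word player/team names.
    Returns (top_names, {(hour_start, name): count}) restricted to top_names.
    """
    wanted = set(names)
    totals = Counter()    # name -> occurrences across all comments
    per_hour = Counter()  # (hour_start, name) -> occurrences in that hour
    for c in comments:
        hour_start = c["created_utc"] - c["created_utc"] % 3600
        # Split the body into lowercase words: the "unnest" step
        for word in re.findall(r"[a-z]+", c["body"].lower()):
            if word in wanted:  # keep only words on the watch list
                totals[word] += 1
                per_hour[(hour_start, word)] += 1
    top = [name for name, _ in totals.most_common(n)]
    return top, {key: count for key, count in per_hour.items() if key[1] in top}


comments = [
    {"body": "Wentz! Wentz is on fire", "created_utc": 0},
    {"body": "Mayfield and Wentz", "created_utc": 4000},
    {"body": "Mayfield again", "created_utc": 4050},
    {"body": "Eagles win", "created_utc": 4100},
]
top, hourly = mentions_over_time(comments, ["wentz", "mayfield", "eagles"], n=2)
print(top)  # prints: ['wentz', 'mayfield']
```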

Once again using the Tableau Custom SQL feature, we see that Carson Wentz seems to have the most buzz in Week 2!

Summary

In this blog post, we walked through creating an interactive, live dashboard in Tableau to analyze streaming data from Kafka. We used Rockset as a data sink for Kafka event data in order to provide low-latency SQL to serve real-time Tableau dashboards. The steps we followed were:

- Start with data in a Kafka topic.

- Create a collection in Rockset, using the Kafka topic as a source.

- Write one or more SQL queries that return the data needed in Tableau.

- Create a data source in Tableau using custom SQL.

- Use the Tableau interface to create charts and real-time dashboards.

Visit our Kafka solutions page for more information on building real-time dashboards and APIs on Kafka event streams.