As developers continue to build greater autonomy into cyber-physical systems (CPSs), such as unmanned aerial vehicles (UAVs) and automobiles, these systems aggregate data from an increasing number of sensors. The systems use this data for control and for otherwise acting in their operational environments. However, more sensors not only create more data and more precise data, but they also require a complex architecture to correctly transfer and process multiple data streams. This increase in complexity comes with additional challenges for functional verification and validation (V&V), a greater potential for faults (errors and failures), and a larger attack surface. What’s more, CPSs often cannot distinguish faults from attacks.

To address these challenges, researchers from the SEI and Georgia Tech collaborated on an effort to map the problem space and develop proposals for solving the challenges of increasing sensor data in CPSs. This SEI Blog post provides a summary of our work, which comprised research threads addressing four subcomponents of the problem:

- addressing error propagation induced by learning components

- mapping fault and attack scenarios to the corresponding detection mechanisms

- defining a security index of the ability to detect tampering based on the monitoring of specific physical parameters

- identifying the impact of clock offset on the precision of reinforcement learning (RL)

Later I’ll describe these research threads, which are part of a larger body of research we call Safety Analysis and Fault Detection Isolation and Recovery (SAFIR) Synthesis for Time-Sensitive Cyber-Physical Systems. First, let’s take a closer look at the problem space and the challenges we’re working to overcome.

More Data, More Problems

CPS developers want more and better data so their systems can make better decisions and more precise evaluations of their operational environments. To achieve these goals, developers add more sensors to their systems and improve the ability of those sensors to gather more data. However, feeding the system more data has several implications: more data means the system must execute more, and more complex, computations. Consequently, these data-enhanced systems need more powerful central processing units (CPUs).

More powerful CPUs introduce a number of concerns, such as energy management and system reliability. Larger CPUs also raise questions about electrical demand and electromagnetic compatibility (i.e., the ability of the system to withstand electromagnetic disturbances, such as storms or adversarial interference).

The addition of new sensors means systems must aggregate more data streams. This need drives greater architectural complexity. Moreover, the data streams must be synchronized. For instance, the information obtained from the left side of an autonomous vehicle must arrive at the same time as information coming from the right side.
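The post does not say how the streams are synchronized. As a minimal sketch, assuming each sensor emits timestamped readings on a shared clock and both streams are sorted by time, the hypothetical helper below pairs each left-side reading with the closest right-side reading and drops pairs whose timestamps differ by more than a tolerance. The names `pair_synchronized`, `nearest`, and `max_skew` are illustrative, not from any SEI tool.

```python
from bisect import bisect_left
from typing import List, Optional, Tuple

# A reading is (timestamp_in_seconds, value); streams are assumed sorted by timestamp.
Reading = Tuple[float, float]


def nearest(stream: List[Reading], t: float) -> Optional[Reading]:
    """Return the reading in `stream` whose timestamp is closest to t."""
    if not stream:
        return None
    times = [r[0] for r in stream]
    i = bisect_left(times, t)
    # The closest reading is either just before or just after the insertion point.
    candidates = stream[max(i - 1, 0): i + 1]
    return min(candidates, key=lambda r: abs(r[0] - t))


def pair_synchronized(left: List[Reading], right: List[Reading],
                      max_skew: float) -> List[Tuple[Reading, Reading]]:
    """Pair each left reading with the closest right reading, dropping pairs
    whose timestamps differ by more than max_skew seconds."""
    pairs = []
    for reading in left:
        match = nearest(right, reading[0])
        if match is not None and abs(match[0] - reading[0]) <= max_skew:
            pairs.append((reading, match))
    return pairs


left = [(0.00, 1.0), (0.10, 1.1), (0.20, 1.2)]
right = [(0.01, 2.0), (0.12, 2.1), (0.35, 2.2)]
print(pair_synchronized(left, right, max_skew=0.03))
```

Dropping unmatched readings is only one policy; a real system might interpolate or raise a flag instead, since a reading that never finds its partner may signal a fault or a jamming attack.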

More sensors, more data, and a more complex architecture also raise challenges about the safety, security, and performance of these systems, whose interaction with the physical world raises the stakes. CPS developers face heightened pressure to ensure that the data on which their systems rely is accurate, that it arrives on schedule, and that an external actor has not tampered with it.

A Question of Trust

As developers strive to imbue CPSs with greater autonomy, one of the biggest hurdles is gaining the trust of users who depend on these systems to operate safely and securely. For example, consider something as simple as the air pressure sensor in your car’s tires. In the past, we had to check the tires physically, with an air pressure gauge, sometimes miles after we’d been driving on dangerously underinflated tires. The sensors we have today let us know in real time when we need to add air. Over time, we have come to depend on these sensors. However, the moment we get a false alert telling us our front driver’s side tire is underinflated, we lose trust in the ability of the sensors to do their job.

Now, consider a similar system in which the sensors transmit their information wirelessly, and a flat-tire warning triggers a safety operation that prevents the car from starting. A malicious actor learns how to generate a false alert from a spot across the parking lot or simply jams your system. Your tires are fine, and your car is fine, but your car’s sensors, either detecting a simulated problem or entirely incapacitated, will not let you start the car. Extend this scenario to autonomous systems operating in airplanes, public transportation systems, or large manufacturing facilities, and trust in autonomous CPSs becomes even more critical.

As these examples demonstrate, CPSs are susceptible to both internal faults and external attacks from malicious adversaries. Examples of the latter include the Maroochy Shire incident involving sewage services in Australia in 2000, the Stuxnet attacks targeting Iranian nuclear facilities in 2010, and the keylogger virus that infected a U.S. drone fleet in 2011.

Trust is critical, and it lies at the heart of the work we have been doing with Georgia Tech. It’s a multidisciplinary problem. Ultimately, what developers seek to deliver is not just a piece of hardware or software, but a cyber-physical system comprising both hardware and software. Developers need an assurance case, a convincing argument that can be understood by an external party. The assurance case must demonstrate that the way the system was engineered and tested is consistent with the underlying theories used to gather evidence supporting the safety and security of the system. Making such an assurance case possible was a key part of the work described in the following sections.

Addressing Error Propagation Induced by Learning Components

As I noted above, autonomous CPSs are complex platforms that operate in both the physical and cyber domains. They employ a combination of different learning components that inform specific artificial intelligence (AI) capabilities. Learning components gather data about the environment and the system to help the system make corrections and improve its performance.

To achieve the level of autonomy they need when operating in uncertain or adversarial environments, CPSs employ learning algorithms. These algorithms use data collected by the system (before or during runtime) to enable decision making without a human in the loop. The learning process itself, however, is not without problems, and errors can be introduced by stochastic faults, malicious activity, or human error.

Many groups are working on the problem of verifying learning components. Typically, they are interested in the correctness of the learning component itself. This line of research aims to produce an integration-ready component that has been verified against some stochastic property, such as a probabilistic guarantee. The work we conducted in this research thread, however, examines the problem of integrating a learning-enabled component within a system.

For example, we ask, How can we define the architecture of the system so that we can fence off any learning-enabled component and assess that the data it receives is correct and arrives at the right time? Furthermore, Can we assess that the system outputs can be controlled for some notion of correctness? For instance, Will my car’s acceleration keep it within the speed limit? This kind of fencing is essential to determine whether we can trust that the system itself is correct (or, at least, not too wrong), as opposed to verifying a running learning component, which is currently not possible.

To address these questions, we described the various errors that can appear in CPS components and affect the learning process. We also provided theoretical tools that can be used to check for the presence of such errors. Our aim was to create a framework that operators of CPSs can use to assess their operation when using data-driven learning components. To do so, we adopted a divide-and-conquer approach that used the Architecture Analysis & Design Language (AADL) to create a representation of the system’s components and their interconnections, yielding a modular environment that allowed for the inclusion of different detection and learning mechanisms. This approach supports full model-based development, including system specification, analysis, system tuning, integration, and upgrade over the lifecycle.
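The fence described above checks two things around a learning-enabled component: that its input data is fresh, and that its output stays within a safety bound (the speed-limit question). As a minimal runtime sketch, assuming a hypothetical learned policy that maps the current speed to an acceleration command, a staleness limit, and a fixed fallback command, one could wrap the component like this. `FencedController`, `FenceConfig`, and the fallback value are illustrative names and choices, not the SEI/AADL design.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class FenceConfig:
    max_input_age: float  # seconds a measurement may be stale before rejection (assumed)
    speed_limit: float    # m/s bound checked on every step (assumed)


class FencedController:
    """Wraps a learning-enabled component with input and output checks.

    `policy` is a hypothetical learned controller mapping speed to an
    acceleration command; `fallback` is a conservative command returned
    whenever a check fails.
    """

    def __init__(self, policy: Callable[[float], float], cfg: FenceConfig,
                 fallback: float = 0.0):
        self.policy = policy
        self.cfg = cfg
        self.fallback = fallback

    def step(self, speed: float, stamp: float, now: float, dt: float) -> float:
        # Input check: reject stale (or future-dated) measurements.
        age = now - stamp
        if age < 0 or age > self.cfg.max_input_age:
            return self.fallback
        accel = self.policy(speed)
        # Output check: would this command push the predicted speed past the limit?
        if speed + accel * dt > self.cfg.speed_limit:
            return self.fallback
        return accel


ctrl = FencedController(lambda v: 2.0, FenceConfig(max_input_age=0.1, speed_limit=30.0),
                        fallback=-1.0)
print(ctrl.step(speed=20.0, stamp=9.95, now=10.0, dt=0.1))
```

The wrapper never inspects the policy’s internals, which mirrors the point above: correctness is argued at the fence rather than inside the learning component.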

We used a UAV system to illustrate how errors propagate throughout system components when adversaries attack the learning processes and obtain safety tolerance thresholds. We focused only on specific learning algorithms and detection mechanisms. We then investigated their convergence properties, as well as the errors that can disrupt these properties.

The results of this investigation provide the starting point for a CPS designer’s guide to using AADL for system-level analysis, tuning, and upgrade in a modular fashion. This guide could comprehensively describe the different errors in the learning processes during system operation. With these descriptions, the designer can automatically verify the correct operation of the CPS by quantifying marginal errors and integrating the system into AADL to evaluate critical properties in all lifecycle phases. To learn more about our approach, I encourage you to read the paper A Modular Approach to Verification of Learning Components in Cyber-Physical Systems.
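To make the idea of quantifying how errors grow across components concrete, here is a deliberately simple model, not the analysis from the paper: assume each component in a serial pipeline is Lipschitz with a known gain L_i and adds at most e_i of its own error, so the worst-case output error is bounded by L_i * err_in + e_i. The component names, gains, and bounds are hypothetical.

```python
from typing import List, Optional, Tuple

# Each component is (name, lipschitz_gain, intrinsic_error_bound); values are hypothetical.
Component = Tuple[str, float, float]


def propagate_error(chain: List[Component], input_error: float) -> List[Tuple[str, float]]:
    """Worst-case error bound after each component of a serial pipeline,
    assuming err_out <= gain * err_in + own_error at every stage."""
    bounds = []
    err = input_error
    for name, gain, own in chain:
        err = gain * err + own
        bounds.append((name, err))
    return bounds


def first_violation(bounds: List[Tuple[str, float]], tolerance: float) -> Optional[str]:
    """Return the first component whose error bound exceeds the tolerance, or None."""
    for name, err in bounds:
        if err > tolerance:
            return name
    return None


chain = [("sensor", 1.0, 0.125), ("estimator", 2.0, 0.25), ("policy", 2.0, 0.5)]
nominal = propagate_error(chain, input_error=0.0)
spoofed = propagate_error(chain, input_error=0.5)  # extra error injected at the sensor input
print(nominal, first_violation(nominal, 1.0), first_violation(spoofed, 1.0))
```

An injected input error trips the tolerance earlier in the chain, which is the kind of component-level localization a modular AADL model is meant to support.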

Mapping Fault and Attack Scenarios to Corresponding Detection Mechanisms

UAVs have become more susceptible to both stochastic faults (stemming from faults occurring in the various components comprising the system) and malicious attacks that compromise either the physical components (sensors, actuators, airframe, etc.) or the software coordinating their operation. Different research communities, using an assortment of tools that are often incompatible with each other, have been investigating the causes and effects of faults that occur in UAVs. In this research thread, we sought to identify the core properties and components of these approaches so that we could decompose them and thereby enable designers of UAV systems to consider all the different outcomes of faults, and the associated detection techniques, through an integrated algorithmic approach. In other words, if your system is under attack, how do you select the best mechanism for detecting that attack?

The problem of faults and attacks on UAVs has been widely studied, and many taxonomies have been proposed to help engineers design mitigation strategies for various attacks. In our view, however, these taxonomies have been inadequate. We proposed a decision process made of two components: first, a mapping from fault or attack scenarios to abstract error types, and second, a survey of detection mechanisms based on the abstract error types they help detect. Using this approach, designers could use both components to select a detection mechanism to protect the system.

To classify the attacks on UAVs, we created a list of component compromises, focusing on those that reside at the intersection of the physical and the digital realms. The list is far from comprehensive, but it is adequate for representing the major qualities that describe the effects of these attacks on the system. We contextualized the list in terms of attacks and faults on sensing, actuating, and communication components, and more complex attacks targeting multiple components to cause system-wide errors:

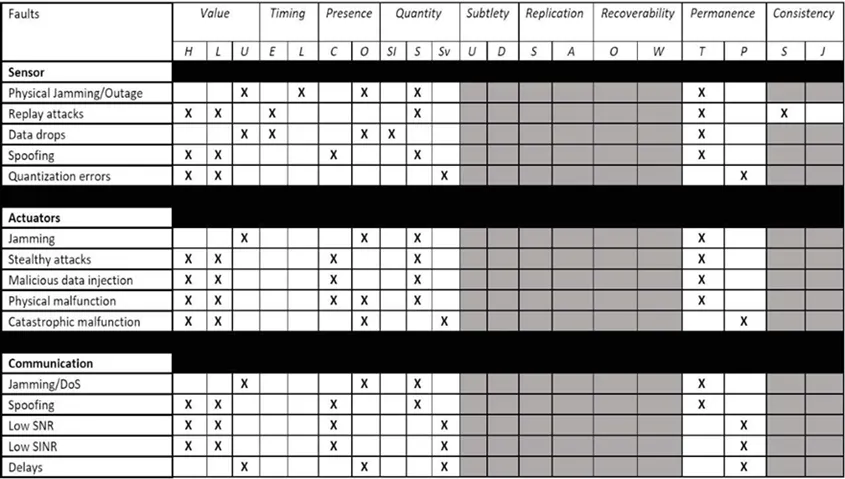

Using this list of attacks on UAVs and UAV platforms, we next identified their properties in terms of the taxonomy criteria introduced by the SEI’s Sam Procter and Peter Feiler in The AADL Error Library: An Operationalized Taxonomy of System Errors. Their taxonomy provides a set of data terms to describe errors based on their class: value, timing, quantity, etc. Table 1 presents a subset of those classes as they apply to UAV faults and attacks.

Figure 1: Classification of Attacks and Faults on UAVs Based on the EMV2 Error Taxonomy

We then created a taxonomy of detection mechanisms that included statistics-based, sample-based, and Bellman-based intrusion detection systems. We related these mechanisms to the taxonomy of attacks and faults. Using these examples, we developed a decision-making process and illustrated it with a scenario involving a UAV system. In this scenario, the vehicle undertook a mission in which it faced a high probability of being subjected to an acoustic injection attack.

Facing such an attack, an analyst would refer to the table containing the properties of the attack and choose the abstract attack class of the acoustic injection from the attack taxonomy. Given the nature of the attack, the appropriate choice would be the sensor spoofing attack. Based on the properties given by the attack taxonomy table, the analyst would be able to identify the key characteristics of the attack. Cross-referencing those properties with the span of characteristics that each intrusion detection mechanism can detect determines the subset of mechanisms that will be effective in environments with these types of attacks.
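The two-part decision process can be sketched as two lookups followed by a set-containment test. In this minimal sketch, only the acoustic injection to sensor spoofing mapping comes from the text; the error-type assignments, the RF jamming entry, and the detector names `detector_A` through `detector_C` are hypothetical placeholders, not the taxonomy or survey results from the paper.

```python
from typing import Dict, List, Set

# Part 1: attack scenario -> abstract attack class -> abstract error types.
SCENARIO_TO_CLASS: Dict[str, str] = {
    "acoustic injection": "sensor spoofing",   # from the scenario in the text
    "RF jamming": "communication denial",      # hypothetical entry
}
CLASS_TO_ERRORS: Dict[str, Set[str]] = {
    "sensor spoofing": {"value"},                  # hypothetical assignment
    "communication denial": {"quantity", "timing"},  # hypothetical assignment
}

# Part 2: detection mechanism -> abstract error types it can detect (all hypothetical).
DETECTOR_COVERAGE: Dict[str, Set[str]] = {
    "detector_A": {"value"},
    "detector_B": {"value", "timing"},
    "detector_C": {"quantity", "timing"},
}


def select_detectors(scenario: str) -> List[str]:
    """Return detectors whose coverage includes every error type of the scenario."""
    errors = CLASS_TO_ERRORS[SCENARIO_TO_CLASS[scenario]]
    return sorted(d for d, cov in DETECTOR_COVERAGE.items() if errors <= cov)


print(select_detectors("acoustic injection"))
```

Requiring full coverage (`errors <= cov`) is one possible selection rule; an analyst could instead rank detectors by how many of the error types they cover.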

In this research thread, we created a tool that can help UAV operators select the appropriate detection mechanisms for their system. Future work will focus on implementing the proposed taxonomy on a particular UAV platform, where the exact sources of the attacks and faults can be explicitly identified at a low architectural level. To learn more about our work in this research thread, I encourage you to read the paper Towards Intelligent Security for Unmanned Aerial Vehicles: A Taxonomy of Attacks, Faults, and Detection Mechanisms.
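Among the detection-mechanism families named in this section, statistics-based detectors are the easiest to illustrate. The cumulative sum (CUSUM) test below is one common member of that family, shown as a minimal sketch; it is not necessarily one of the mechanisms surveyed in the paper. It assumes the residuals are differences between measured and model-predicted sensor values, and the `drift` and `threshold` values are hypothetical tuning parameters.

```python
from typing import Iterable, Optional


def cusum_alarm(residuals: Iterable[float], drift: float, threshold: float) -> Optional[int]:
    """One-sided CUSUM test on the magnitude of a residual sequence.

    The statistic S_k = max(0, S_{k-1} + |r_k| - drift) accumulates evidence
    that residuals exceed their nominal level; an alarm is raised at the first
    index k where S_k > threshold. Returns None if no alarm is raised.
    """
    s = 0.0
    for k, r in enumerate(residuals):
        s = max(0.0, s + abs(r) - drift)
        if s > threshold:
            return k
    return None


nominal = [0.1, -0.1, 0.1, -0.1]
spoofed = [0.1, 0.1, 2.0, 2.0, 2.0]  # e.g., a sensor suddenly biased by an injected signal
print(cusum_alarm(nominal, drift=0.5, threshold=3.0), cusum_alarm(spoofed, drift=0.5, threshold=3.0))
```

Because the statistic only accumulates persistent deviations, a detector like this suits value errors such as a sustained spoofed reading, but it says nothing about timing errors, which is why the mapping from error types to detectors matters.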

Defining a Security Index of the Ability to Detect Tampering by Monitoring Specific Physical Parameters

In recent years, CPSs have gradually become large scale and decentralized, and they rely more and more on communication networks. This high-dimensional and decentralized structure increases their exposure to malicious attacks that can cause faults, failures, and even significant damage. Research efforts have addressed the cost-efficient placement or allocation of actuators and sensors. However, most of these methods mainly consider controllability or observability properties and do not take the security aspect into account.

Motivated by this gap, in this research thread we considered the dependence of CPS security on the potentially compromised actuators and sensors, specifically, on deriving a security measure under both actuator and sensor attacks. The topic of CPS security has received increasing attention recently, and different security indices have been developed. The first kind of security measure is based on reachability analysis, which quantifies the size of reachable sets (i.e., the sets of all states reachable by dynamical systems with admissible inputs). So far, however, little work has quantified reachable sets under malicious attacks and used the resulting security metrics to guide actuator and sensor selection. The second kind of security index is defined as the minimum number of actuators and/or sensors that attackers need to compromise without being detected.
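As a concrete instance of the first kind of measure, consider a discrete-time LTI system x_{k+1} = A x_k + B a_k driven from x_0 = 0 by an attack input whose components satisfy |a_k[j]| <= 1. Along coordinate i, the largest reachable value after N steps is the sum over j = 0..N-1 of the 1-norm of row i of A^j B, which gives the tightest axis-aligned box around the reachable set. The sketch below computes those half-widths; the bounded-input attack model and the example matrices are assumptions for illustration, not the construction used in the paper.

```python
from typing import List

Matrix = List[List[float]]


def matmul(X: Matrix, Y: Matrix) -> Matrix:
    """Plain-Python matrix product X @ Y."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def reachable_box(A: Matrix, B: Matrix, steps: int) -> List[float]:
    """Half-widths of the tightest axis-aligned box containing every state reachable
    in `steps` steps of x_{k+1} = A x_k + B a_k from x_0 = 0, with |a_k[j]| <= 1.

    Along coordinate i the extreme value is sum_j ||row_i(A^j B)||_1, attained by
    setting each attack component to the sign of its coefficient.
    """
    n = len(A)
    half_width = [0.0] * n
    M = B  # holds A^j B, starting at j = 0
    for _ in range(steps):
        for i in range(n):
            half_width[i] += sum(abs(v) for v in M[i])
        M = matmul(A, M)
    return half_width


# Hypothetical double integrator (position, velocity) with the attack on the actuator.
A = [[1.0, 1.0], [0.0, 1.0]]
B = [[0.0], [1.0]]
print(reachable_box(A, B, steps=3))
```

A larger box means an attacker with the same input budget can push the state further, so comparing boxes for different actuator choices is one way such a metric could guide actuator selection.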

In this research thread, we developed a generic actuator security index. We also proposed graph-theoretic conditions for computing the index with the help of maximum linking and the generic normal rank of the corresponding structured transfer function matrix. Our contribution here was twofold. First, we provided conditions for the existence of dynamical and perfect undetectability. In terms of perfect undetectability, we proposed a security index for discrete-time linear time-invariant (LTI) systems under actuator and sensor attacks. Second, we developed a graph-theoretic approach for structured systems that computes the security index by solving a min-cut/max-flow problem. For a detailed presentation of this work, I encourage you to read the paper A Graph-Theoretic Security Index Based on Undetectability for Cyber-Physical Systems.
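A standard structured-systems result underlies the graph-theoretic approach: the generic normal rank of a structured transfer function matrix equals the size of a maximum linking, that is, the largest number of vertex-disjoint paths from input vertices to output vertices in the system graph. That number can be computed with max-flow after splitting every vertex into a unit-capacity arc. The minimal sketch below computes only this linking number on a hypothetical graph using Edmonds-Karp; it is not the security index construction from the paper, which builds further conditions on top of such computations.

```python
from collections import defaultdict, deque
from typing import Dict, Iterable, List, Tuple


def max_linking(edges: Iterable[Tuple[str, str]], inputs: List[str], outputs: List[str]) -> int:
    """Maximum number of vertex-disjoint paths from `inputs` to `outputs`.

    Each vertex v is split into (v, "in") -> (v, "out") with capacity 1; a super
    source feeds the inputs and the outputs drain into a super sink. By Menger's
    theorem the max-flow value also equals the minimum vertex cut.
    """
    cap: Dict = defaultdict(lambda: defaultdict(int))
    vertices = set(inputs) | set(outputs)
    for u, v in edges:
        vertices.update((u, v))
        cap[(u, "out")][(v, "in")] += 1
    for v in vertices:
        cap[(v, "in")][(v, "out")] = 1
    source, sink = ("s", "src"), ("t", "snk")
    for u in inputs:
        cap[source][(u, "in")] = 1
    for y in outputs:
        cap[(y, "out")][sink] = 1

    flow = 0
    while True:
        # Breadth-first search for a shortest augmenting path in the residual graph.
        parent = {source: None}
        queue = deque([source])
        while queue and sink not in parent:
            x = queue.popleft()
            for z, c in cap[x].items():
                if c > 0 and z not in parent:
                    parent[z] = x
                    queue.append(z)
        if sink not in parent:
            return flow
        # Every path leaves the source through a unit-capacity arc, so it carries one unit.
        z = sink
        while parent[z] is not None:
            x = parent[z]
            cap[x][z] -= 1
            cap[z][x] += 1
            z = x
        flow += 1


# Hypothetical structured system: two inputs whose effects both pass through state x1.
edges = [("u1", "x1"), ("u2", "x1"), ("x1", "y1"), ("x1", "y2")]
print(max_linking(edges, ["u1", "u2"], ["y1", "y2"]))
```

In the example, the single state vertex x1 is a bottleneck, so the linking (and the generic rank) is 1; adding an independent path such as u2 -> x2 -> y2 raises it to 2.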

Identifying the Impact of Clock Offset on the Precision of Reinforcement Learning

A major challenge in autonomous CPSs is integrating more sensors and data without slowing down performance. CPSs such as cars, ships, and planes all have timing constraints whose violation can be catastrophic. Complicating matters, timing acts in two directions: timing to react to external events and timing to engage with humans to ensure their safety. These conditions raise a number of challenges because timing, accuracy, and precision are key to ensuring trust in a system.

Methods for the development of safe-by-design systems have mostly focused on the quality of the information in the network (i.e., on mitigating signals corrupted either by stochastic faults or by malicious manipulation from adversaries). However, the decentralized nature of a CPS requires methods that address timing discrepancies among its components. Timing issues have been studied in control systems to assess their robustness against such faults, yet the effects of timing issues on learning mechanisms are rarely considered.

Motivated by this fact, our work in this research thread investigated the behavior of a system with reinforcement learning (RL) capabilities under clock offsets. We focused on deriving convergence guarantees for the corresponding learning algorithm, given that the CPS suffers from discrepancies between its control and measurement timestamps. In particular, we investigated the effect of sensor-actuator clock offsets on RL-enabled CPSs. We considered an off-policy RL algorithm that receives data from the system’s sensors and actuators and uses them to approximate a desired optimal control policy.

However, owing to timing mismatches, the control-state data received from these system components were inconsistent, which raised questions about RL robustness. After a detailed analysis, we showed that RL does retain its robustness in an epsilon-delta sense: provided that the sensor-actuator clock offsets are not arbitrarily large and that the behavioral control input satisfies a Lipschitz continuity condition, RL converges epsilon-close to the desired optimal control policy. We applied the approach to a two-link manipulator, which illustrated and verified our theoretical findings. For a complete discussion of this work, I encourage you to read the paper Impact of Sensor and Actuator Clock Offsets on Reinforcement Learning.
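The role of the Lipschitz condition can be seen with a few lines of arithmetic. If the sensor and actuator clocks differ by delta, the learner pairs a state measured at time t with a control actually applied at t + delta; when the behavioral control input u is Lipschitz with constant L, that pairing error is at most L * |delta|, so it shrinks with the offset. The sketch below checks this numerically for a hypothetical input u(t) = sin(omega t), whose Lipschitz constant is omega. It illustrates the reasoning only; it is not the off-policy RL algorithm from the paper.

```python
import math
from typing import Callable

OMEGA = 2.0  # hypothetical frequency; u(t) = sin(OMEGA t) is Lipschitz with L = OMEGA


def u(t: float) -> float:
    """Hypothetical behavioral control input."""
    return math.sin(OMEGA * t)


def max_offset_mismatch(control: Callable[[float], float], delta: float,
                        t_end: float, samples: int) -> float:
    """Largest |control(t + delta) - control(t)| over a uniform grid on [0, t_end].

    Models a learner that pairs a state measured at time t with a control that was
    actually applied at t + delta because the sensor and actuator clocks disagree.
    """
    worst = 0.0
    for k in range(samples + 1):
        t = t_end * k / samples
        worst = max(worst, abs(control(t + delta) - control(t)))
    return worst


for delta in (0.1, 0.01, 0.001):
    mismatch = max_offset_mismatch(u, delta, t_end=10.0, samples=10_000)
    print(f"delta={delta}: mismatch={mismatch:.5f}, Lipschitz bound={OMEGA * delta:.5f}")
```

A bounded, shrinking data error of this kind is what an epsilon-delta convergence argument can absorb; with an arbitrarily large offset, the bound L * |delta| becomes useless.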

Building a Chain of Trust in CPS Architecture

In conducting this research, the SEI has made several contributions to the field of CPS architecture. First, we extended AADL with a formal semantics that we can use not only to simulate a model very precisely, but also to verify properties of AADL models. That work enables us to reason about the architecture of CPSs. One outcome of this reasoning relevant to assuring autonomous CPSs was the idea of establishing a “fence” around vulnerable components. However, we still needed to perform fault detection to make sure that inputs are not incorrect or tampered with and that outputs are not invalid.

Fault detection is where our collaborators from Georgia Tech made key contributions. They have done great work on statistics-based techniques for detecting faults and have developed techniques that use reinforcement learning to build fault detection mechanisms. These mechanisms look for specific patterns that represent either a cyber attack or a fault in the system. They have also addressed the question of recursion in situations in which a learning component learns from another learning component (which may itself be wrong). Kyriakos Vamvoudakis of Georgia Tech’s Daniel Guggenheim School of Aerospace Engineering worked out how to use architecture patterns to address these questions by expanding the fence around these components. This work helped us implement and test fault detection, isolation, and recovery mechanisms on use-case missions that we conducted on a UAV platform.

We have learned that if you do not have a good CPS architecture (one that is modular, meets desired properties, and isolates fault tolerance), you need a big fence. You have to do more processing to verify the system and gain trust. On the other hand, if you have an architecture that you can verify is amenable to these fault tolerance techniques, then you can add fault isolation and tolerance without degrading performance. It’s a tradeoff.

One of the things we have been working on in this project is a collection of design patterns, known in the safety community, for detecting and mitigating faults by using a simplex architecture to switch from one version of a component to another. We need to define the aforementioned tradeoff for each of these patterns. For instance, patterns differ in the number of redundant components, and more redundancy is more costly because it requires more CPU, more wires, and more energy. Some patterns take more time to make a decision or to switch from nominal mode to degraded mode. We are evaluating all these patterns, taking into account both the cost to implement them in terms of resources (mostly hardware resources) and the timing aspect (the time between detecting an event and reconfiguring the system). These practical considerations are what we want to address: not just a formal semantics of AADL, which is nice for computer scientists, but also the tradeoff analysis made possible by a careful evaluation of every pattern that has been documented in the literature.
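The core of a simplex architecture is a small decision module that forwards commands from a high-performance (possibly learning-enabled) controller while a safety monitor approves them, and switches to a simple, verified controller otherwise. The minimal sketch below assumes scalar states and commands, and its monitor, controllers, and latching behavior are hypothetical choices; it is not one of the specific patterns the project is evaluating.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class SimplexSwitch:
    """Simplex-style decision module (a minimal sketch).

    `advanced` is a high-performance controller, `safe` is a simple verified
    controller, and `is_recoverable(state, command)` is a safety monitor. Once the
    monitor rejects a command, the switch latches into degraded (safe) mode.
    """
    advanced: Callable[[float], float]
    safe: Callable[[float], float]
    is_recoverable: Callable[[float, float], bool]
    degraded: bool = False

    def command(self, state: float) -> float:
        if not self.degraded:
            u = self.advanced(state)
            if self.is_recoverable(state, u):
                return u
            self.degraded = True  # switch from nominal mode to degraded mode
        return self.safe(state)


switch = SimplexSwitch(
    advanced=lambda x: 5.0,                           # hypothetical aggressive controller
    safe=lambda x: -x,                                # hypothetical stabilizing controller
    is_recoverable=lambda x, u: abs(x + 0.1 * u) < 10.0,  # hypothetical one-step envelope check
)
print(switch.command(1.0), switch.command(9.8), switch.command(1.0))
```

Latching is only one design choice; a pattern that returns to nominal mode after recovery needs extra logic and extra decision time, which is exactly the kind of resource and timing cost the tradeoff analysis weighs.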

In future work, we want to address these larger questions:

- What can we do with models when we do model-based software engineering?

- How far can we go in building a toolbox so that designing a system can be supported by evidence in every phase?

- You want to build the architecture of a system, but can you make sense of a diagram?

- What can you say about the safety of the system’s timing?

The work is grounded in the vision of rigorous model-based systems engineering progressing from requirements to a model. Developers also need supporting evidence they can use to build a trust package for an external auditor, to demonstrate that the system they designed works. Ultimately, our goal is to build a chain of trust across all of a CPS’s engineering artifacts.