The web had a collective feel-good second with the introduction of DALL-E, a synthetic intelligence-based picture generator impressed by artist Salvador Dali and the lovable robotic WALL-E that makes use of pure language to provide no matter mysterious and delightful picture your coronary heart needs. Seeing typed-out inputs like “smiling gopher holding an ice cream cone” immediately spring to life clearly resonated with the world.

Getting mentioned smiling gopher and attributes to pop up in your display screen isn’t a small process. DALL-E 2 makes use of one thing referred to as a diffusion mannequin, the place it tries to encode your complete textual content into one description to generate a picture. However as soon as the textual content has numerous extra particulars, it is arduous for a single description to seize all of it. Furthermore, whereas they’re extremely versatile, they often wrestle to grasp the composition of sure ideas, like complicated the attributes or relations between totally different objects.

To generate extra complicated photos with higher understanding, scientists from MIT’s Laptop Science and Synthetic Intelligence Laboratory (CSAIL) structured the everyday mannequin from a distinct angle: they added a collection of fashions collectively, the place all of them cooperate to generate desired photos capturing a number of totally different elements as requested by the enter textual content or labels. To create a picture with two parts, say, described by two sentences of description, every mannequin would sort out a specific part of the picture.

The seemingly magical fashions behind picture era work by suggesting a collection of iterative refinement steps to get to the specified picture. It begins with a “unhealthy” image after which regularly refines it till it turns into the chosen picture. By composing a number of fashions collectively, they collectively refine the looks at every step, so the result’s a picture that reveals all of the attributes of every mannequin. By having a number of fashions cooperate, you will get rather more inventive combos within the generated photos.

Take, for instance, a crimson truck and a inexperienced home. The mannequin will confuse the ideas of crimson truck and inexperienced home when these sentences get very sophisticated. A typical generator like DALL-E 2 would possibly make a inexperienced truck and a crimson home, so it will swap these colours round. The crew’s method can deal with this kind of binding of attributes with objects, and particularly when there are a number of units of issues, it might probably deal with every object extra precisely.

“The mannequin can successfully mannequin object positions and relational descriptions, which is difficult for current image-generation fashions. For instance, put an object and a dice in a sure place and a sphere in one other. DALL-E 2 is nice at producing pure photos however has issue understanding object relations generally,” says MIT CSAIL PhD pupil and co-lead creator Shuang Li, “Past artwork and creativity, maybe we may use our mannequin for educating. If you wish to inform a toddler to place a dice on high of a sphere, and if we are saying this in language, it is likely to be arduous for them to grasp. However our mannequin can generate the picture and present them.”

Making Dali proud

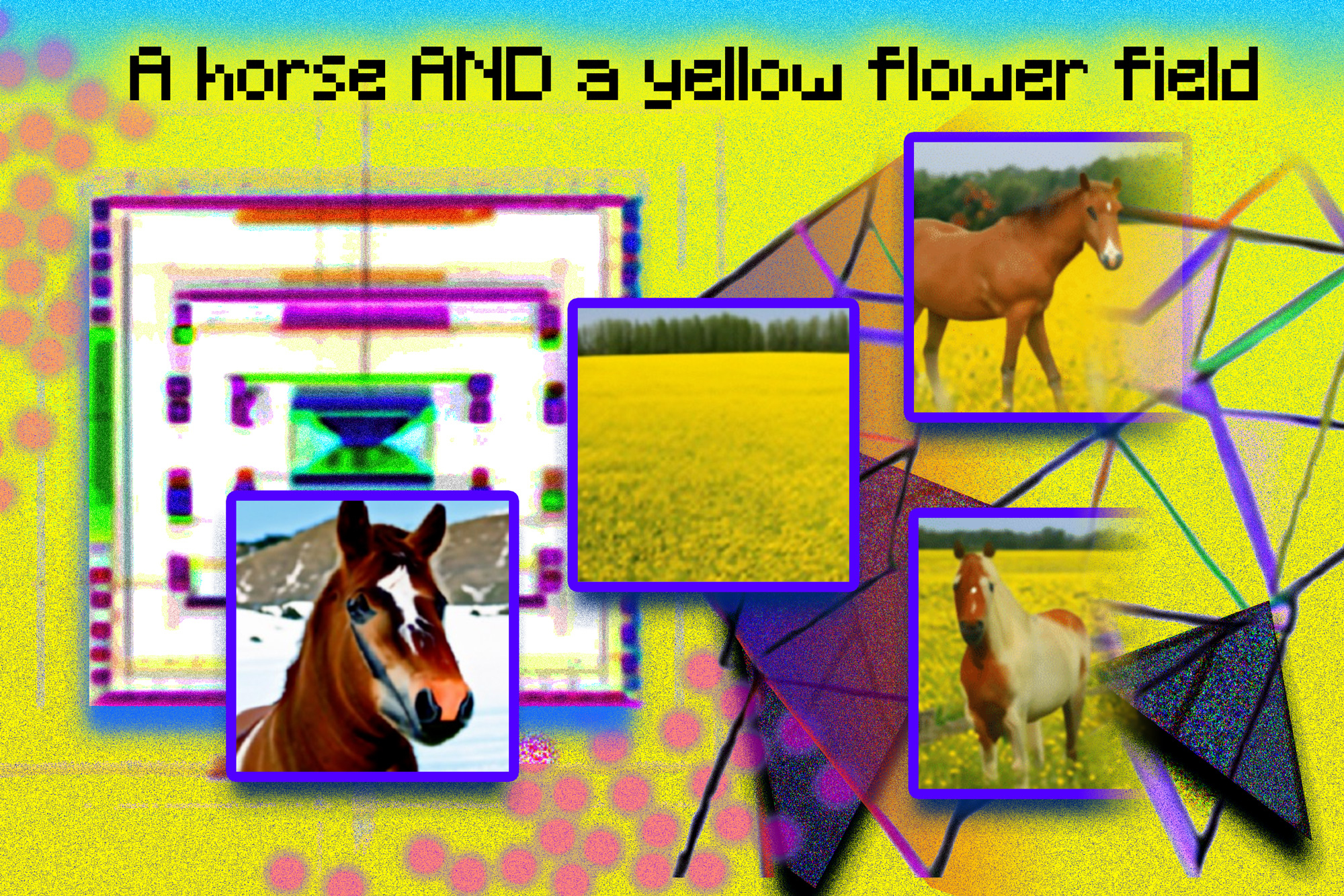

Composable Diffusion — the crew’s mannequin — makes use of diffusion fashions alongside compositional operators to mix textual content descriptions with out additional coaching. The crew’s method extra precisely captures textual content particulars than the unique diffusion mannequin, which instantly encodes the phrases as a single lengthy sentence. For instance, given “a pink sky” AND “a blue mountain within the horizon” AND “cherry blossoms in entrance of the mountain,” the crew’s mannequin was capable of produce that picture precisely, whereas the unique diffusion mannequin made the sky blue and every little thing in entrance of the mountains pink.

“The truth that our mannequin is composable means you could be taught totally different parts of the mannequin, one after the other. You may first be taught an object on high of one other, then be taught an object to the best of one other, after which be taught one thing left of one other,” says co-lead creator and MIT CSAIL PhD pupil Yilun Du. “Since we are able to compose these collectively, you may think about that our system allows us to incrementally be taught language, relations, or data, which we predict is a reasonably fascinating route for future work.”

Whereas it confirmed prowess in producing complicated, photorealistic photos, it nonetheless confronted challenges because the mannequin was skilled on a a lot smaller dataset than these like DALL-E 2, so there have been some objects it merely could not seize.

Now that Composable Diffusion can work on high of generative fashions, reminiscent of DALL-E 2, the scientists wish to discover continuous studying as a possible subsequent step. On condition that extra is often added to object relations, they wish to see if diffusion fashions can begin to “be taught” with out forgetting beforehand realized data — to a spot the place the mannequin can produce photos with each the earlier and new data.

“This analysis proposes a brand new methodology for composing ideas in text-to-image era not by concatenating them to kind a immediate, however slightly by computing scores with respect to every idea and composing them utilizing conjunction and negation operators,” says Mark Chen, co-creator of DALL-E 2 and analysis scientist at OpenAI. “It is a good concept that leverages the energy-based interpretation of diffusion fashions in order that outdated concepts round compositionality utilizing energy-based fashions might be utilized. The method can be capable of make use of classifier-free steerage, and it’s shocking to see that it outperforms the GLIDE baseline on numerous compositional benchmarks and may qualitatively produce very several types of picture generations.”

“People can compose scenes together with totally different components in a myriad of how, however this process is difficult for computer systems,” says Bryan Russel, analysis scientist at Adobe Programs. “This work proposes a sublime formulation that explicitly composes a set of diffusion fashions to generate a picture given a posh pure language immediate.”

Alongside Li and Du, the paper’s co-lead authors are Nan Liu, a grasp’s pupil in laptop science on the College of Illinois at Urbana-Champaign, and MIT professors Antonio Torralba and Joshua B. Tenenbaum. They may current the work on the 2022 European Convention on Laptop Imaginative and prescient.

The analysis was supported by Raytheon BBN Applied sciences Corp., Mitsubishi Electrical Analysis Laboratory, and DEVCOM Military Analysis Laboratory.