Real-time analytics is used by many organizations to support mission-critical decisions on real-time data. The real-time journey typically starts with live dashboards on real-time data and quickly moves to automating actions on that data with applications like instant personalization, gaming leaderboards and smart IoT systems. In this post, we’ll be focusing on building live dashboards and real-time applications on data stored in DynamoDB, as we have found DynamoDB to be a commonly used data store for real-time use cases.

We’ll evaluate a few popular approaches to implementing real-time analytics on DynamoDB, all of which use DynamoDB Streams but differ in how the dashboards and applications are served:

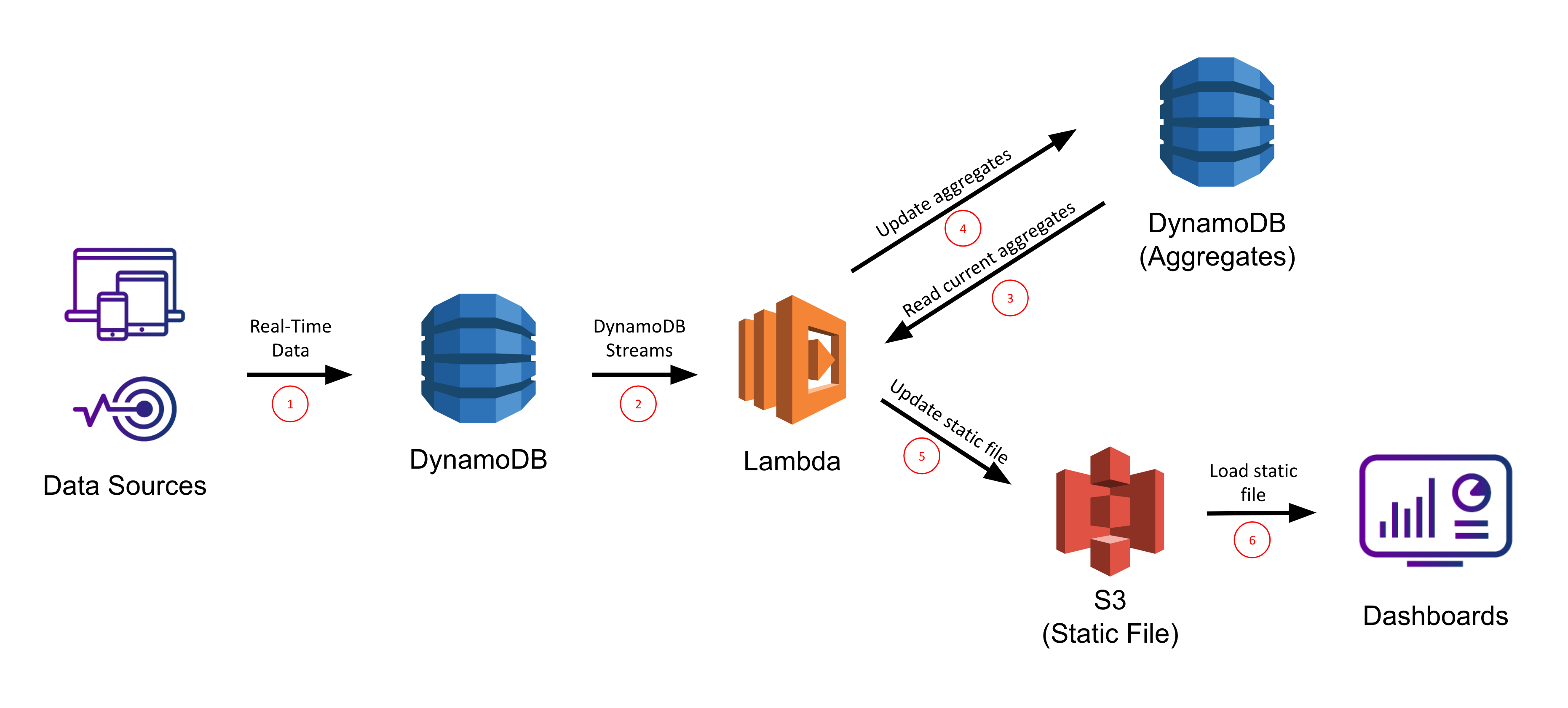

1. DynamoDB Streams + Lambda + S3

2. DynamoDB Streams + Lambda + ElastiCache for Redis

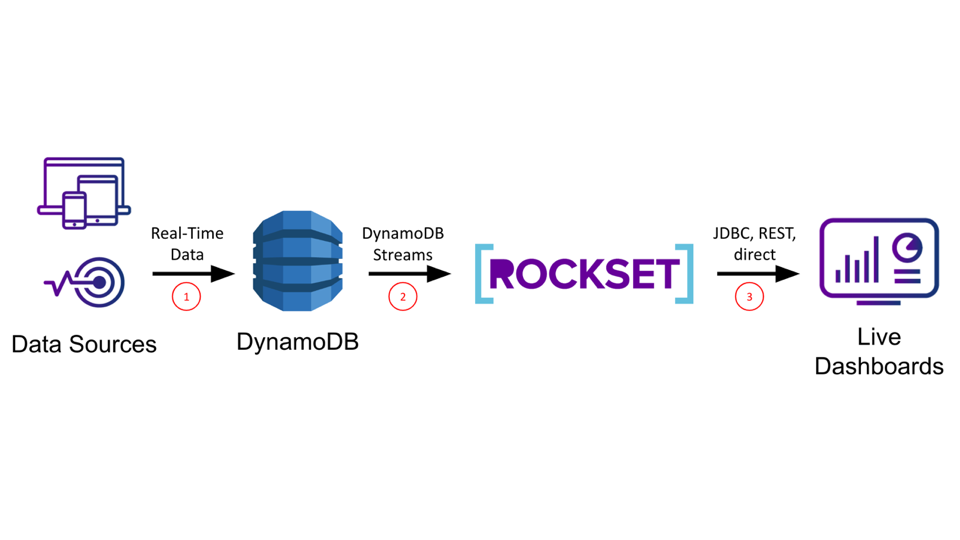

3. DynamoDB Streams + Rockset

We’ll evaluate each approach on its ease of setup/maintenance, data latency, query latency/concurrency, and system scalability so you can judge which approach is best for you based on which of these criteria matter most for your use case.

Technical Considerations for Real-Time Dashboards and Applications

Building dashboards and applications on real-time data is non-trivial, as any solution needs to support highly concurrent, low latency queries for fast load times (or else drive down usage/efficiency) and live sync from the data sources for low data latency (or else drive up incorrect actions/missed opportunities). Low query latency requirements rule out operating directly on data in OLTP databases, which are optimized for transactional, not analytical, queries. Low data latency requirements rule out ETL-based solutions, which increase your data latency above the real-time threshold and inevitably lead to “ETL hell”.

DynamoDB is a fully managed NoSQL database provided by AWS that is optimized for point lookups and small range scans using a partition key. Though it is highly performant for these use cases, DynamoDB is not a good choice for analytical queries, which typically involve large range scans and complex operations such as grouping and aggregation. AWS knows this and has answered customers’ requests by creating DynamoDB Streams, a change-data-capture system which can be used to notify other services of new/modified data in DynamoDB. In our case, we’ll make use of DynamoDB Streams to synchronize our DynamoDB table with other storage systems that are better suited for serving analytical queries.

Amazon S3

The first approach for DynamoDB reporting and dashboarding we’ll consider makes use of Amazon S3’s static website hosting. In this scenario, changes to our DynamoDB table will trigger a call to a Lambda function, which will take those changes and update a separate aggregate table also stored in DynamoDB. The Lambda will use the DynamoDB Streams API to efficiently iterate through the recent changes to the table without having to do a complete scan. The aggregate table will be fronted by a static file in S3 which anyone can view by going to the DNS endpoint of that S3 bucket’s hosted website.

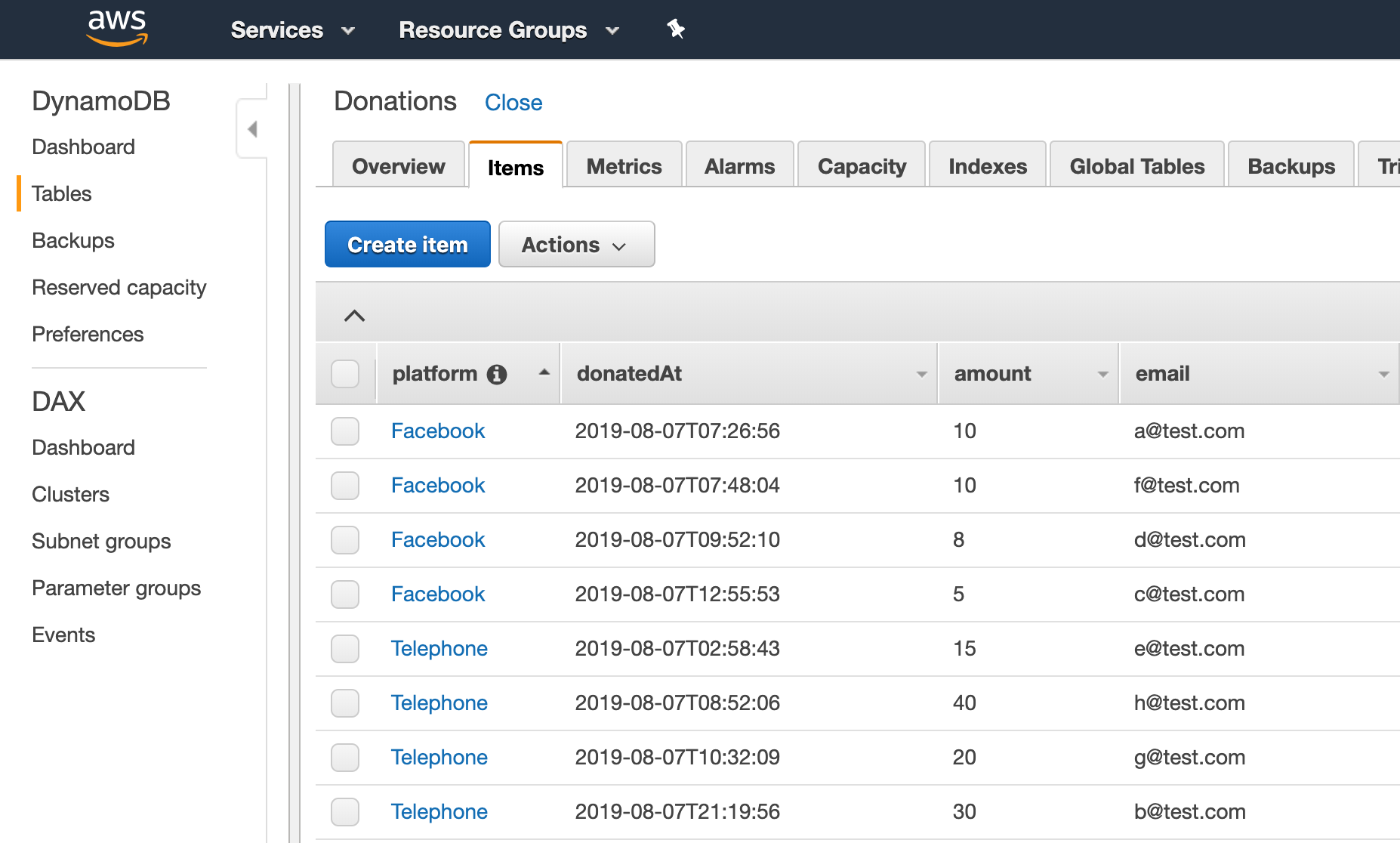

For example, let’s say we are organizing a charity fundraiser and want a live dashboard at the event to show the progress towards our fundraising goal. Your DynamoDB table for tracking donations might look like

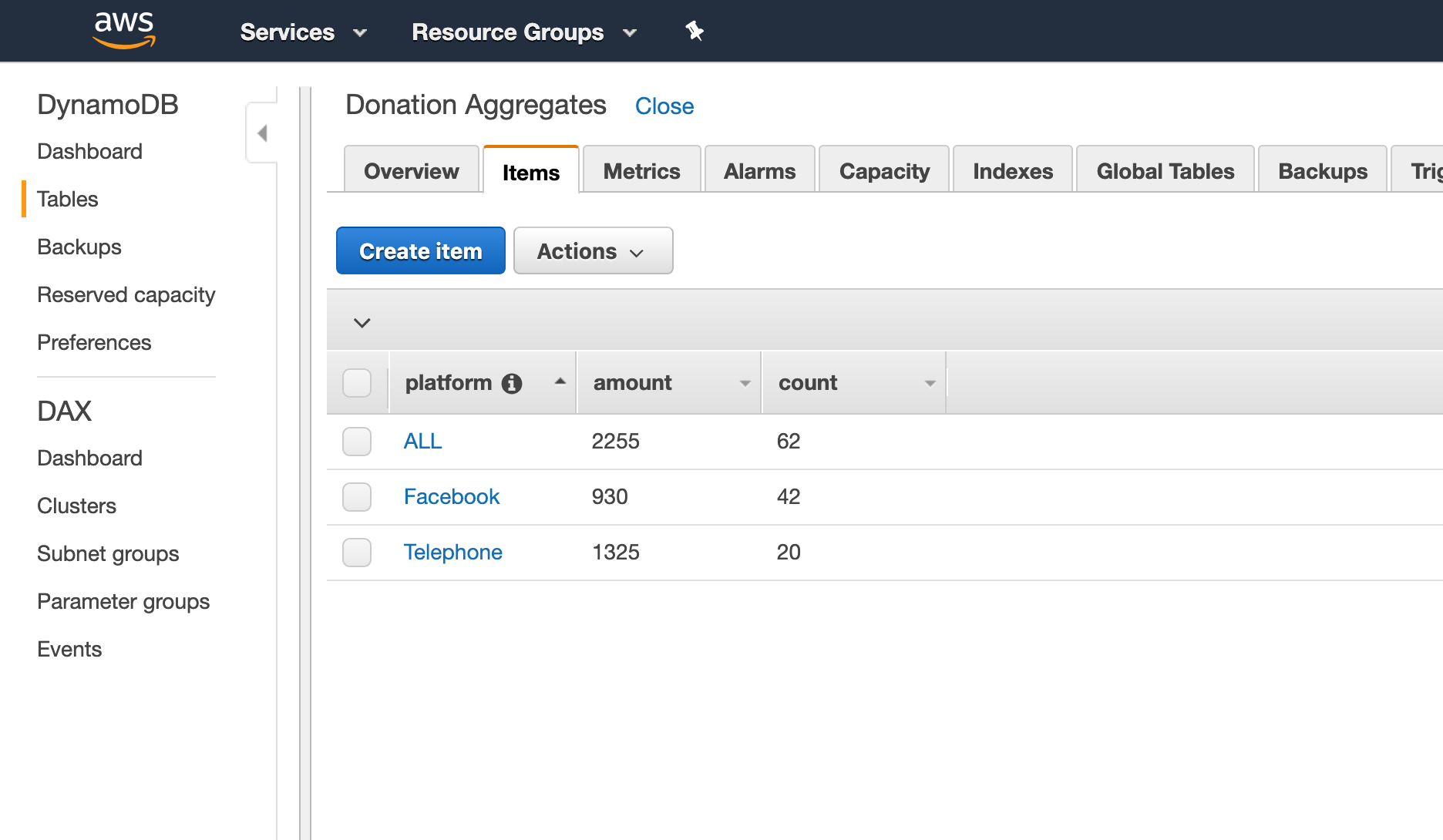

In this scenario, it would be reasonable to track the donations per platform and the total donated so far. To store this aggregated data, you might use another DynamoDB table that would look like

If we keep our volunteers up-to-date with these numbers throughout the fundraiser, they can rearrange their time and effort to maximize donations (for example by allocating more people to the phones since phone donations are about 3x larger than Facebook donations).

To accomplish this, we’ll create a Lambda function using the dynamodb-process-stream blueprint with a function body of the form

    exports.handler = async (event, context) => {
      for (const record of event.Records) {
        let platform = record.dynamodb['NewImage']['platform']['S'];
        // DynamoDB serializes numbers as strings, so convert before adding
        let amount = Number(record.dynamodb['NewImage']['amount']['N']);
        // Wait for each update so the handler does not return early
        await updatePlatformTotal(platform, amount);
        await updatePlatformTotal("ALL", amount);
      }
      return `Successfully processed ${event.Records.length} records.`;
    };
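As a hedged illustration of what such a handler receives: each stream record wraps the item in DynamoDB’s typed attribute format, where numbers arrive as strings under `N`, and `REMOVE` events carry no `NewImage`. The sketch below assumes the stream is configured to include new images. The `extractDonation` helper and the sample event are hypothetical and only show the parsing step.

```javascript
// Hypothetical helper: pull the fields the aggregator needs out of one
// DynamoDB Streams record, or return null when there is nothing to add.
function extractDonation(record) {
  const image = record.dynamodb && record.dynamodb.NewImage;
  // REMOVE events (and streams without new images) have no NewImage.
  if (record.eventName === "REMOVE" || !image) return null;
  if (!image.platform || !image.amount) return null;
  return {
    platform: image.platform.S,
    // Numeric attributes are serialized as strings, so convert explicitly.
    amount: Number(image.amount.N),
  };
}

// Made-up sample event in the shape Lambda passes to a stream handler.
const sampleEvent = {
  Records: [
    {
      eventName: "INSERT",
      dynamodb: {
        NewImage: {
          platform: { S: "Facebook" },
          amount: { N: "10" },
        },
      },
    },
    { eventName: "REMOVE", dynamodb: { Keys: { id: { S: "1" } } } },
  ],
};

const donations = sampleEvent.Records.map(extractDonation).filter(Boolean);
console.log(donations);
```

Skipping records that lack the expected fields keeps one malformed item from failing the whole batch, though whether to skip or raise an error is a design choice that depends on the application.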

The function updatePlatformTotal would read the current aggregates from the DonationAggregates table (or initialize them to 0 if not present), then update and write back the new values. There are then two approaches to updating the final dashboard:

- Write a new static file to S3 each time the Lambda is triggered that overwrites the HTML to reflect the most recent values. This is perfectly acceptable for visualizing data that does not change very frequently.

- Have the static file in S3 actually read from the DonationAggregates DynamoDB table (which can be done through the AWS JavaScript SDK). This is preferable if the data is being updated frequently as it will save many repeated writes to the S3 file.
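The read-modify-write step and the first option above can be sketched roughly as follows. This is a minimal in-memory illustration, not the actual implementation: a `Map` stands in for the DonationAggregates table, and `renderDashboard` is a hypothetical function producing the HTML string that a Lambda could then upload to S3. Against a real table, concurrent invocations could race on a read-then-write, so an atomic counter update may be worth considering.

```javascript
// In-memory stand-in for the DonationAggregates table (an assumption for
// illustration; the real function would read and write DynamoDB items).
const aggregates = new Map();

function updatePlatformTotal(platform, amount) {
  // Read the current aggregate, initializing to zero if not present.
  const current = aggregates.get(platform) || { count: 0, total: 0 };
  // Update and write back the new values.
  aggregates.set(platform, {
    count: current.count + 1,
    total: current.total + amount,
  });
}

// Hypothetical renderer for the static page; the Lambda would upload the
// returned string to the S3 bucket that hosts the dashboard.
function renderDashboard(store) {
  const rows = [...store.entries()]
    .map(([platform, a]) => `<tr><td>${platform}</td><td>${a.count}</td><td>${a.total}</td></tr>`)
    .join("\n");
  return `<table>\n<tr><th>Platform</th><th>Donations</th><th>Total</th></tr>\n${rows}\n</table>`;
}

// Two made-up donations, each also counted under the "ALL" key.
for (const [platform, amount] of [["Facebook", 10], ["Phone", 30]]) {
  updatePlatformTotal(platform, amount);
  updatePlatformTotal("ALL", amount);
}
const html = renderDashboard(aggregates);
console.log(html);
```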

Finally, we would go to the DynamoDB Streams dashboard and associate this Lambda function with the DynamoDB stream on the Donations table.

Pros:

- Serverless / quick to set up

- Lambda leads to low data latency

- Good query latency if the aggregate table is kept small-ish

- Scalability of S3 for serving

Cons:

- No ad-hoc querying, refinement, or exploration in the dashboard (it’s static)

- Final aggregates are still stored in DynamoDB, so if you have enough of them you’ll hit the same slowdown with range scans, etc.

- Difficult to adapt this for an existing, large DynamoDB table

- Need to provision enough read/write capacity on your DynamoDB table (more devops)

- Need to identify all end metrics a priori

TLDR:

- This is a good way to quickly display a few simple metrics on a simple dashboard, but not great for more complex applications

- You’ll need to maintain a separate aggregates table in DynamoDB updated using Lambdas

- These kinds of dashboards won’t be interactive since the data is pre-computed

For a full-blown tutorial of this approach check out this AWS blog.

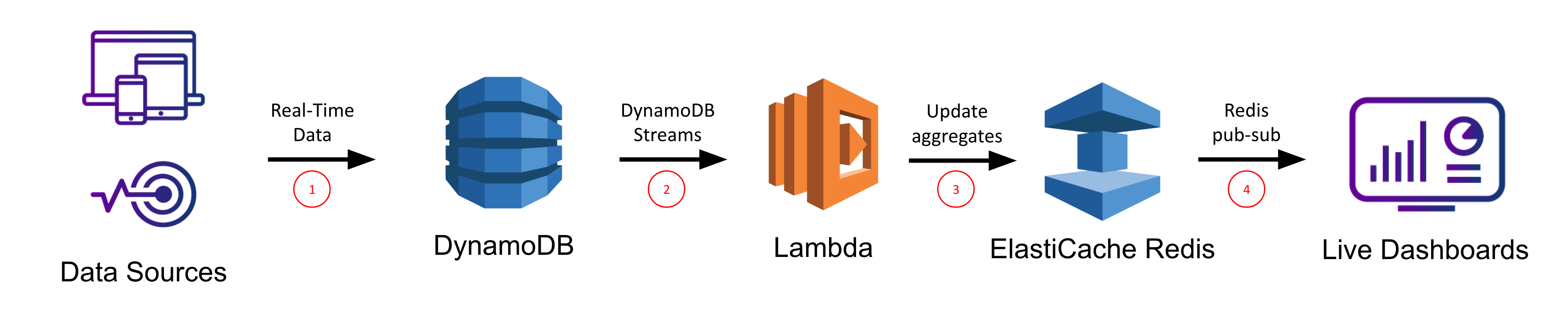

ElastiCache for Redis

Our next option for live dashboards and applications on top of DynamoDB involves ElastiCache for Redis, which is a fully managed Redis service provided by AWS. Redis is an in-memory key-value store which is frequently used as a cache. Here, we will use ElastiCache for Redis much like our aggregate table above. Again we will set up a Lambda function that will be triggered on each change to the DynamoDB table and that will use the DynamoDB Streams API to efficiently retrieve recent changes to the table without needing to perform a complete table scan. This time, however, the Lambda function will make calls to our Redis service to update the in-memory data structures we are using to keep track of our aggregates. We will then make use of Redis’ built-in publish-subscribe functionality to notify our webapp in real time when new data comes in so we can update our application accordingly.

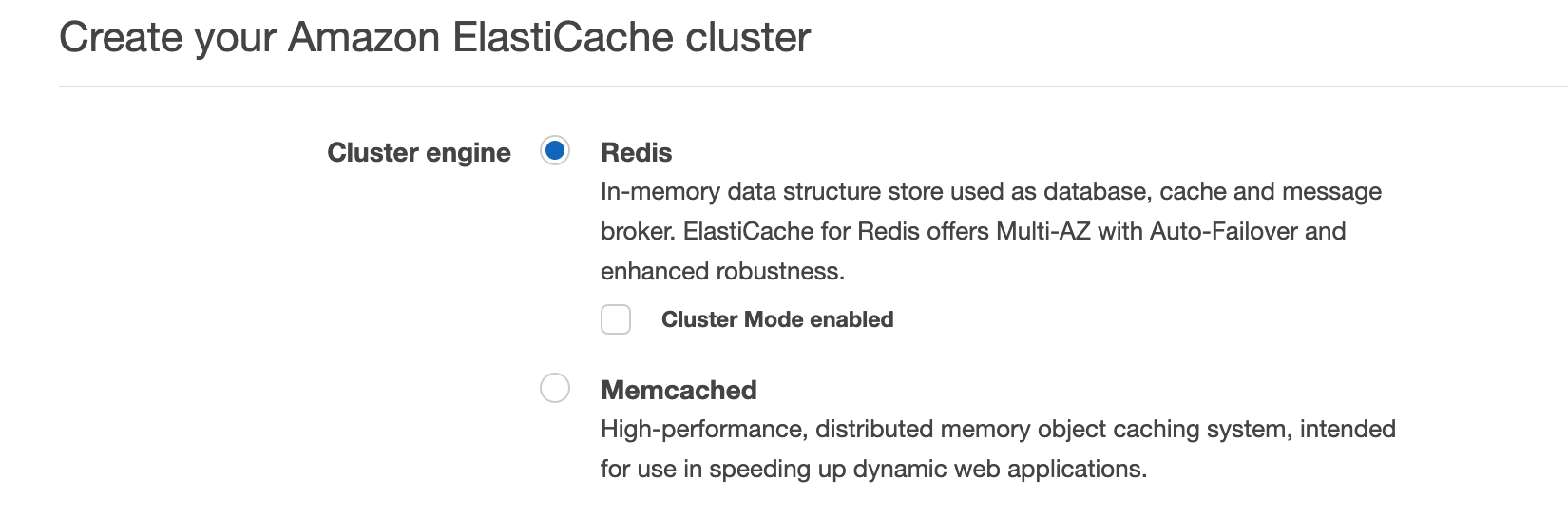

Continuing with our charity fundraiser example, let’s use a Redis hash to keep track of the aggregates. In Redis, the hash data structure is similar to a Python dictionary, JavaScript Object, or Java HashMap. First we’ll create a new Redis instance in the ElastiCache for Redis dashboard.

Then once it’s up and running, we can use the same Lambda definition from above and just change the implementation of updatePlatformTotal to something like

    function updatePlatformTotal(platform, amount) {
      const redis = require("redis");
      const client = redis.createClient(...);
      const countKey = [platform, "count"].join(':');
      const amtKey = [platform, "amount"].join(':');
      // HINCRBY needs a hash key, a field, and an integer increment;
      // "aggregates" as the hash name is an illustrative choice.
      client.hincrby("aggregates", countKey, 1);
      // PUBLISH takes one message, so the field and delta are sent together.
      client.publish("aggregates", countKey + " " + 1);
      client.hincrby("aggregates", amtKey, amount);
      client.publish("aggregates", amtKey + " " + amount);
    }

In the example of the donation record

    {
      "email": "a@test.com",
      "donatedAt": "2019-08-07T07:26:56",
      "platform": "Facebook",
      "amount": 10
    }

This would lead to the equivalent Redis commands

    HINCRBY aggregates Facebook:count 1
    PUBLISH aggregates "Facebook:count 1"
    HINCRBY aggregates Facebook:amount 10
    PUBLISH aggregates "Facebook:amount 10"

The increment calls persist the donation information to the Redis service, and the publish commands send real-time notifications through Redis’ pub-sub mechanism to the corresponding webapp, which had previously subscribed to the “aggregates” topic. Using this communication mechanism allows support for real-time dashboards and applications, and it gives flexibility in the choice of web framework as long as a Redis client is available to subscribe with.
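To make the flow concrete without a running Redis server, here is a hedged in-memory sketch. `FakeRedis` is a hypothetical stand-in that mimics only the hash-increment and publish/subscribe behavior described above, and the "field delta" message encoding is an assumption. A real webapp would use a Redis client’s subscribe call instead.

```javascript
// Hypothetical stand-in mimicking just enough of Redis for this example.
class FakeRedis {
  constructor() {
    this.hashes = new Map();      // hash name -> Map(field -> integer)
    this.subscribers = new Map(); // channel name -> array of callbacks
  }
  hincrby(hash, field, increment) {
    if (!this.hashes.has(hash)) this.hashes.set(hash, new Map());
    const fields = this.hashes.get(hash);
    const next = (fields.get(field) || 0) + increment;
    fields.set(field, next);
    return next;
  }
  subscribe(channel, onMessage) {
    if (!this.subscribers.has(channel)) this.subscribers.set(channel, []);
    this.subscribers.get(channel).push(onMessage);
  }
  publish(channel, message) {
    for (const onMessage of this.subscribers.get(channel) || []) onMessage(message);
  }
}

const client = new FakeRedis();

// Dashboard side: keep a local copy of the totals and apply each delta.
const dashboard = {};
client.subscribe("aggregates", (message) => {
  const split = message.lastIndexOf(" ");
  const field = message.slice(0, split);
  const delta = Number(message.slice(split + 1));
  dashboard[field] = (dashboard[field] || 0) + delta;
});

// Lambda side: persist the increment, then notify subscribers.
function updatePlatformTotal(platform, amount) {
  for (const [suffix, delta] of [["count", 1], ["amount", amount]]) {
    const field = `${platform}:${suffix}`;
    client.hincrby("aggregates", field, delta);
    client.publish("aggregates", `${field} ${delta}`);
  }
}

updatePlatformTotal("Facebook", 10);
updatePlatformTotal("Facebook", 5);
console.log(dashboard);
```

Because the subscriber only sees deltas published after it subscribed, a real dashboard would likely read the current hash values once at startup and then apply the published updates on top.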

Note: You can always use your own Redis instance or a managed version other than Amazon ElastiCache for Redis, and all the concepts will be the same.

Pros:

- Serverless / quick to set up

- Pub-sub leads to low data latency

- Redis is very fast for lookups → low query latency

- Flexibility for choice of frontend since Redis clients are available in many languages

Cons:

- Need another AWS service or to set up/manage your own Redis deployment

- Need to perform ETL in the Lambda, which will be brittle as the DynamoDB schema changes

- Difficult to incorporate with an existing, large, production DynamoDB table (only streams updates)

- Redis doesn’t support complex queries, only lookups of pre-computed values (no ad-hoc queries/exploration)

TLDR:

- This is a viable option if your use case mainly relies on lookups of pre-computed values and doesn’t require complex queries or joins

- This approach uses Redis to store aggregate values and publishes updates using Redis pub-sub to your dashboard or application

- More powerful than static S3 hosting but still limited by pre-computed metrics, so dashboards won’t be interactive

- All components are serverless (if you use Amazon ElastiCache), so deployment/maintenance are easy

- Need to develop your own webapp that supports Redis subscribe semantics

For an in-depth tutorial on this approach, check out this AWS blog. There the focus is on a generic Kinesis stream as the input, but you can use the DynamoDB Streams Kinesis adapter with your DynamoDB table and then follow their tutorial from there on.

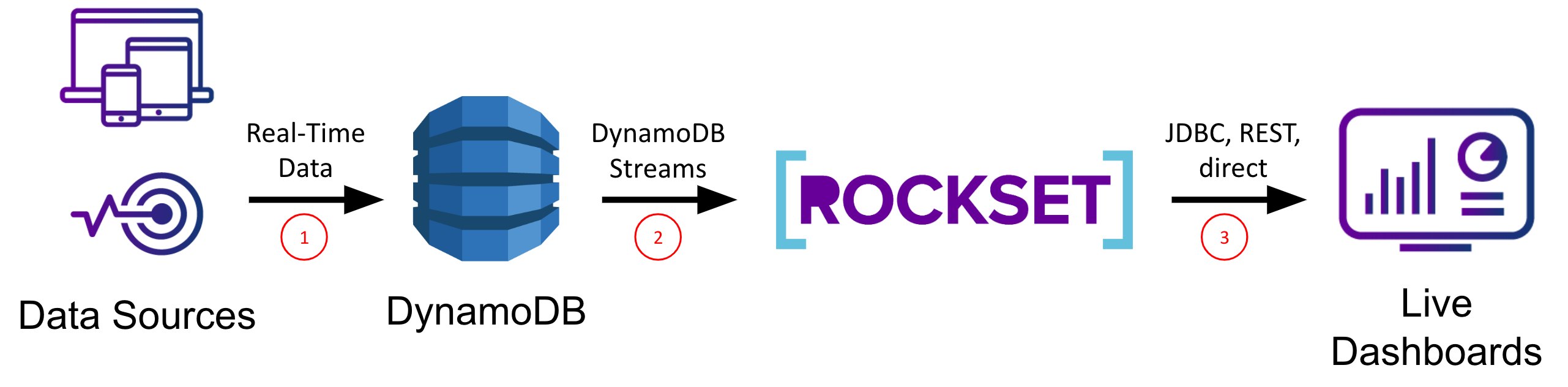

Rockset

The last option we’ll consider in this post is Rockset, a real-time indexing database built for high QPS to support real-time application use cases. Rockset’s data engine has strong dynamic typing and smart schemas which infer field types as well as how they change over time. These properties make working with NoSQL data, like that from DynamoDB, straightforward.

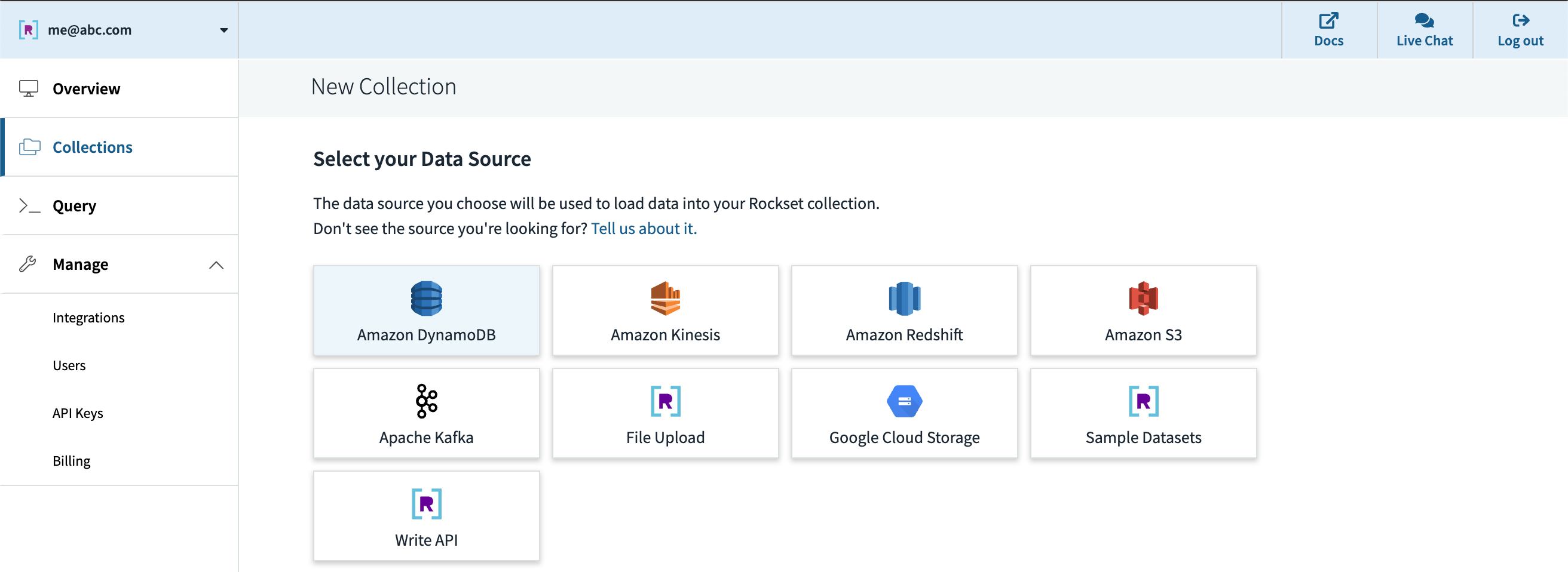

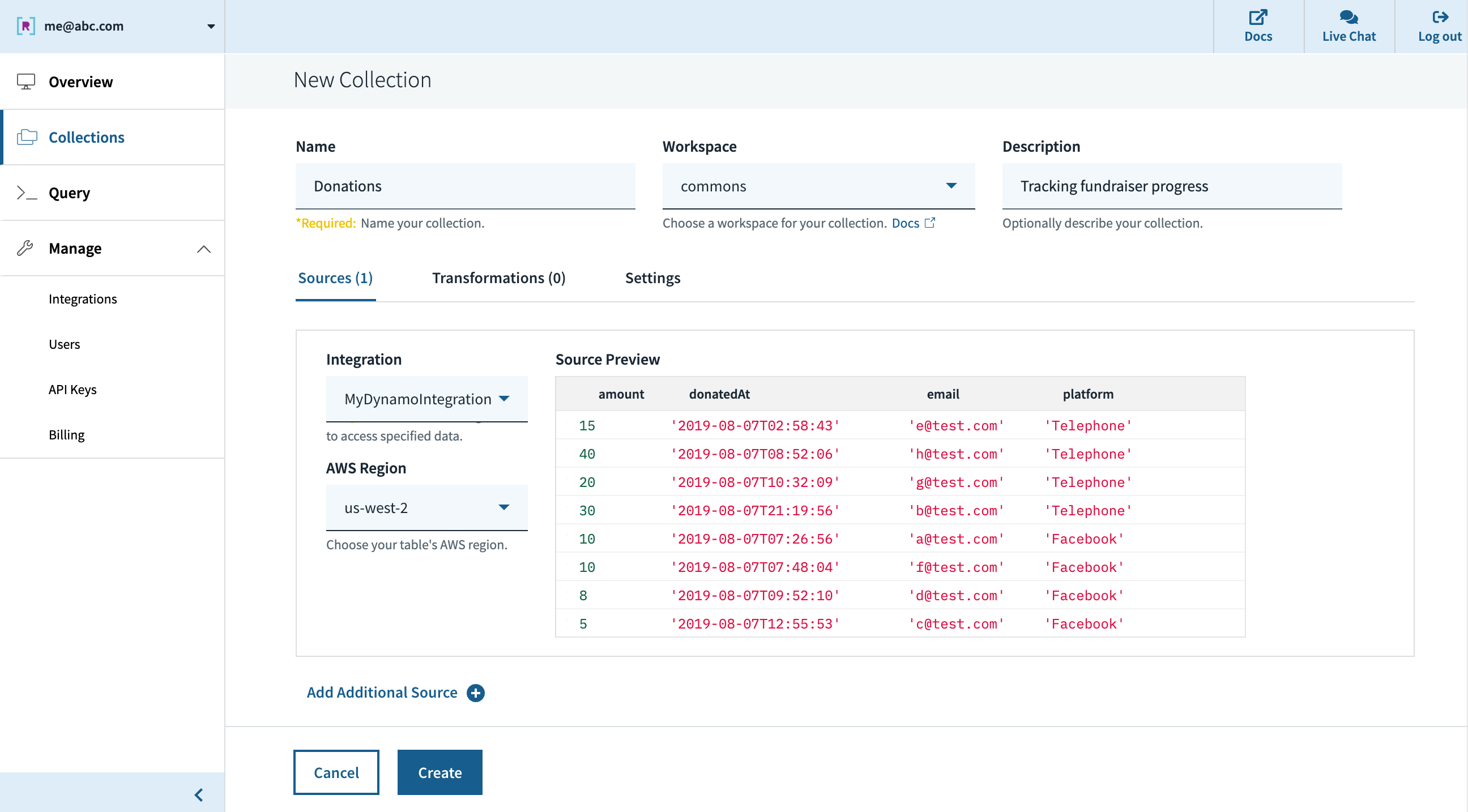

After creating an account at www.rockset.com, we’ll use the console to set up our first integration, a set of credentials used to access our data. Since we’re using DynamoDB as our data source, we’ll provide Rockset with an AWS access key and secret key pair that has properly scoped permissions to read from the DynamoDB table we want. Next we’ll create a collection (the equivalent of a DynamoDB/SQL table) and specify that it should pull data from our DynamoDB table and authenticate using the integration we just created. The preview window in the console will pull a few records from the DynamoDB table and display them to make sure everything worked correctly, and then we are good to press “Create”.

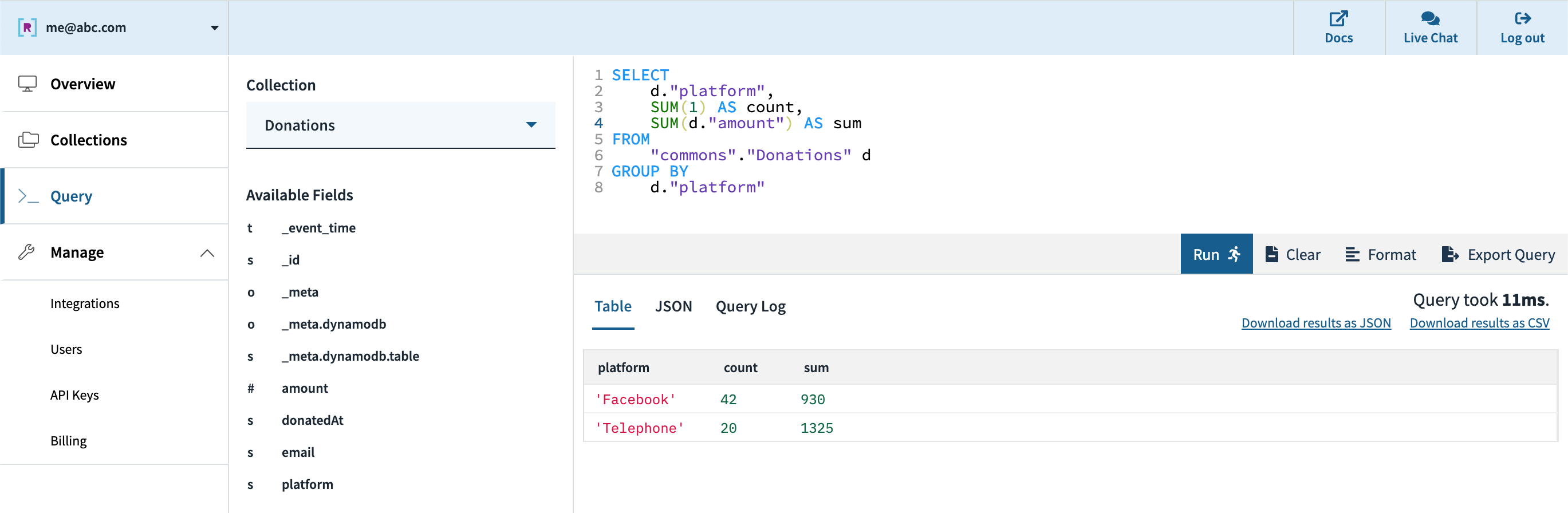

Soon after, we can see in the console that the collection is created and data is streaming in from DynamoDB. We can use the console’s query editor to experiment with and tune the SQL queries that will be used in our application. Since Rockset has its own query compiler/execution engine, there is first-class support for arrays, objects, and nested data structures.

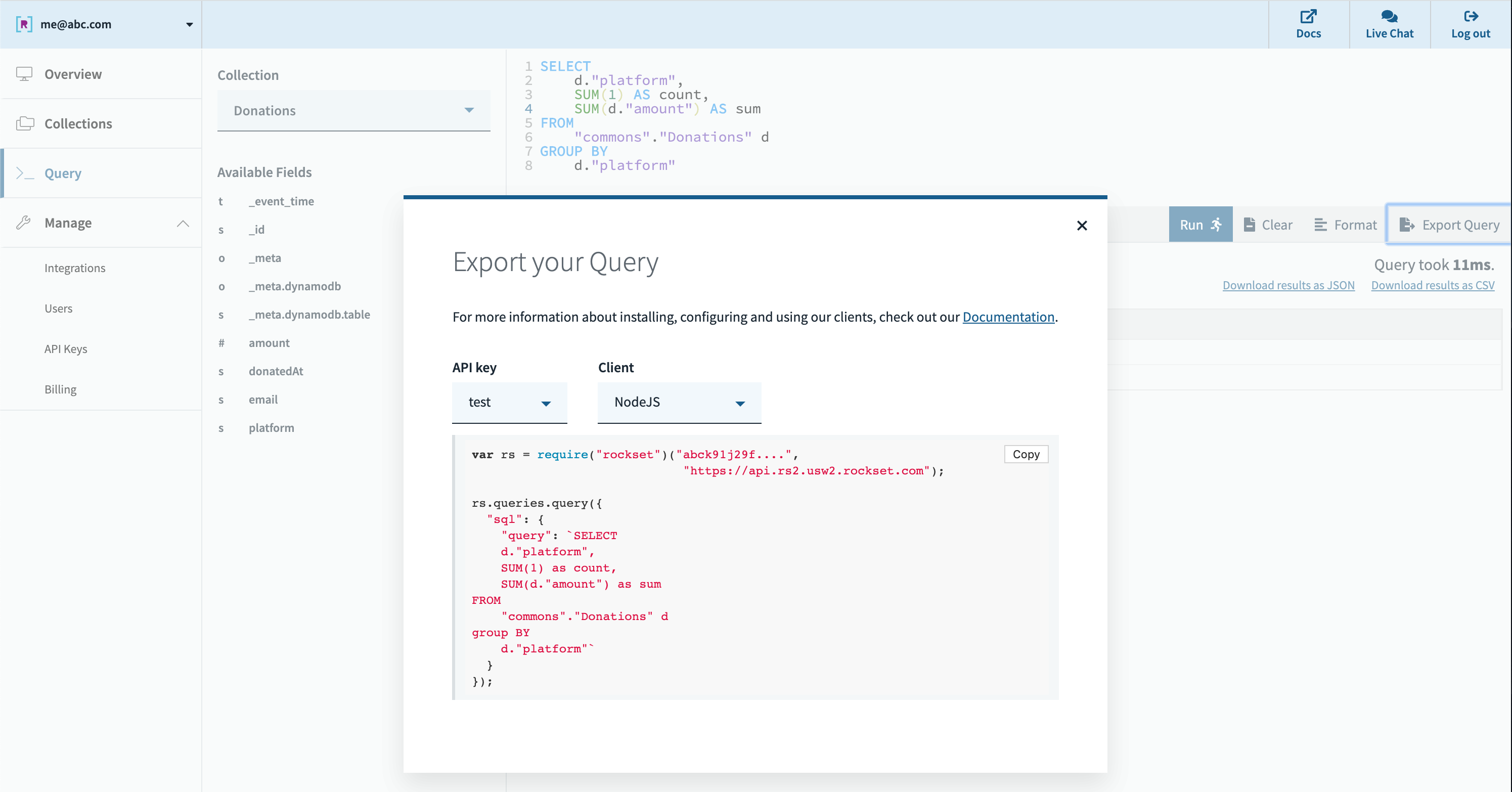

Next, we can create an API key in the console which will be used by the application for authentication to Rockset’s servers. We can export our query from the console query editor into a functioning code snippet in a variety of languages. Rockset supports SQL over REST, which means any HTTP framework in any programming language can be used to query your data, and several client libraries are provided for convenience as well.
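As a rough sketch of SQL over REST, the snippet below only builds the HTTP request that an application might send; it does not contact any server. The `/v1/orgs/self/queries` path, the `ApiKey` authorization scheme, the request body shape, and the `commons.donations` collection name are all assumptions here, and the API server is a placeholder. Check each against Rockset’s API reference and your own console before use.

```javascript
// Hypothetical helper that assembles (but does not send) a query request.
function buildQueryRequest(apiServer, apiKey, sql) {
  return {
    url: `${apiServer}/v1/orgs/self/queries`, // assumed path
    options: {
      method: "POST",
      headers: {
        Authorization: `ApiKey ${apiKey}`, // assumed auth scheme
        "Content-Type": "application/json",
      },
      body: JSON.stringify({ sql: { query: sql } }), // assumed body shape
    },
  };
}

// Assumed collection name; per-platform totals for the fundraiser example.
const sql = `
  SELECT platform, COUNT(*) AS donations, SUM(amount) AS total
  FROM commons.donations
  GROUP BY platform`;

// Placeholder server and key; substitute the values from your console.
const request = buildQueryRequest("https://api.example.invalid", "YOUR_API_KEY", sql);
console.log(request.url);
```

An application could then pass `request.url` and `request.options` to any HTTP client, such as `fetch`, and render the returned rows in the dashboard.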

All that’s left then is to run our queries in our dashboard or application. Rockset’s cloud-native architecture allows it to scale query performance and concurrency dynamically as needed, enabling fast queries even on large datasets with complex, nested data with inconsistent types.

Pros:

- Serverless: fast setup, no-code DynamoDB integration, and zero configuration/management required

- Designed for low query latency and high concurrency out of the box

- Integrates with DynamoDB (and other sources) in real-time for low data latency with no pipeline to maintain

- Strong dynamic typing and smart schemas handle mixed types and work well with NoSQL systems like DynamoDB

- Integrates with a variety of custom dashboards (through client SDKs, JDBC driver, and SQL over REST) and BI tools (if needed)

Cons:

- Optimized for active datasets, not archival data, with a sweet spot up to 10s of TBs

- Not a transactional database

- It’s an external service

TLDR:

- Consider this approach if you have strict requirements on having the latest data in your real-time applications, need to support large numbers of users, or want to avoid managing complex data pipelines

- Rockset is built for more demanding application use cases and can also be used to support dashboarding if needed

- Built-in integrations to quickly go from DynamoDB (and many other sources) to live dashboards and applications

- Can handle mixed types, syncing an existing table, and many low-latency queries

- Best for data sets from a few GBs to 10s of TBs

For more resources on how to integrate Rockset with DynamoDB, check out this blog post that walks through a more complex example.

Conclusion

We’ve covered several approaches for building real-time analytics on DynamoDB data, each with its own pros and cons. Hopefully this can help you evaluate the best approach for your use case, so you can move closer to operationalizing your own data!

Other DynamoDB resources: