Overview

In this guide, we'll:

- Understand the blueprint of any modern recommendation system

- Dive into a detailed analysis of each stage within the blueprint

- Discuss infrastructure challenges associated with each stage

- Cover special cases within the stages of the recommendation system blueprint

- Get introduced to some storage considerations for recommendation systems

- And finally, end with what the future holds for recommendation systems

Introduction

In a recent talk at the Index conference, Nikhil, an expert with a decade of experience in machine learning and infrastructure, shared his insights into recommendation systems. From his early days at Quora to leading projects at Facebook and his current venture at Fennel (a real-time feature store for ML), Nikhil has traversed the evolving landscape of machine learning engineering and machine learning infrastructure, specifically in the context of recommendation systems. This blog post distills that decade of experience into a comprehensive read, offering a detailed overview of the complexities and innovations at every stage of building a real-world recommender system.

Recommendation Systems at a high level

At an extremely high level, a typical recommender system starts simple and can be compartmentalized as follows:

Note: All slide content and related materials are credited to Nikhil Garg from Fennel.

Stage 1: Retrieval or candidate generation – The idea of this stage is that we typically go from millions or even trillions of items (at big-tech scale) to hundreds or a couple of thousand candidates.

Stage 2: Ranking – We rank these candidates using some heuristic to pick the top 10 to 50 items.

Note: The need for a candidate generation step before ranking arises because it's impractical to run a scoring function, even a non-machine-learning one, on millions of items.

Recommendation System – A general blueprint

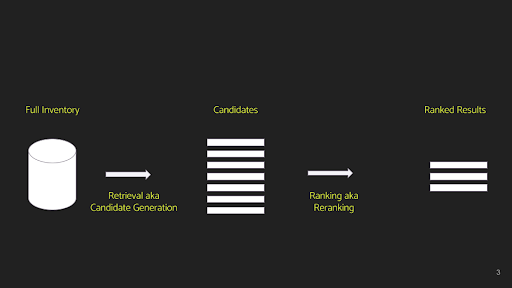

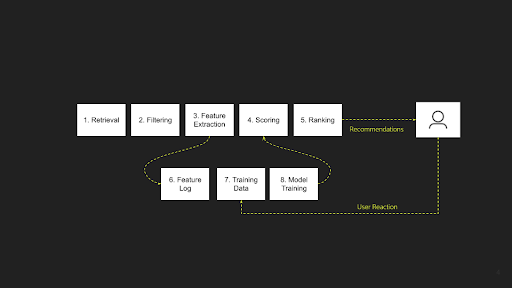

Drawing from his extensive experience working with a variety of recommendation systems in numerous contexts, Nikhil posits that all of them can be broadly categorized into the above two main stages. He further delineates a recommender system into an 8-step process, as follows:

The retrieval or candidate generation stage is expanded into two steps: Retrieval and Filtering. The process of ranking the candidates is further developed into three distinct steps: Feature Extraction, Scoring, and Ranking. Additionally, there's an offline component that underpins these stages, encompassing Feature Logging, Training Data Generation, and Model Training.

Let's now delve into each stage, discussing them one by one to understand their functions and the typical challenges associated with each:

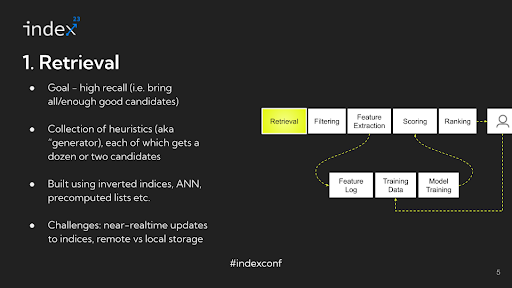

Step 1: Retrieval

Overview: The primary objective of this stage is to introduce a quality inventory into the mix. The focus is on recall: ensuring that the pool includes a broad range of potentially relevant items. While some non-relevant or 'junk' content may also be included, the key goal is to avoid excluding any relevant candidates.

Detailed Analysis: The key challenge in this stage lies in narrowing down a vast inventory, potentially comprising a million items, to just a few thousand, all while preserving recall. This task might seem daunting at first, but it's surprisingly manageable, especially in its basic form. For instance, consider a simple approach where you examine the content a user has interacted with, identify the authors of that content, and then select the top 5 pieces from each author. This method is an example of a heuristic designed to generate a set of potentially relevant candidates. Typically, a recommender system will employ dozens of such generators, ranging from simple heuristics to more sophisticated ones that involve machine learning models. Each generator typically yields a small group of candidates, rarely more than a couple dozen. The candidates from all generators are aggregated into a union, with each generator contributing a distinct type of inventory or content flavor. Combining a variety of these generators captures a diverse range of content types in the inventory, which is how recall is preserved in practice.
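The union-of-generators idea can be sketched as follows. This is a minimal illustration, not anything shown in the talk: the in-memory dictionaries, the generator names, and the `retrieve` helper are all hypothetical stand-ins for real indices and services.

```python
from typing import Callable, Dict, List

# Hypothetical in-memory data; a real system would query indices or services.
USER_HISTORY = {"u1": ["p1", "p2"]}  # user -> items they interacted with
ITEM_AUTHOR = {"p1": "a1", "p2": "a2", "p3": "a1", "p4": "a2", "p5": "a3"}
AUTHOR_TOP_ITEMS = {"a1": ["p3", "p1"], "a2": ["p4", "p2"], "a3": ["p5"]}
GLOBAL_POPULAR = ["p5", "p3"]


def from_engaged_authors(user_id: str, per_author: int = 5) -> List[str]:
    """Heuristic from the text: top items from authors the user engaged with."""
    authors = {ITEM_AUTHOR[item] for item in USER_HISTORY.get(user_id, [])}
    out: List[str] = []
    for author in sorted(authors):
        out.extend(AUTHOR_TOP_ITEMS.get(author, [])[:per_author])
    return out


def from_global_popular(user_id: str) -> List[str]:
    """A pre-computed list that ignores the user entirely."""
    return list(GLOBAL_POPULAR)


def retrieve(user_id: str,
             generators: List[Callable[[str], List[str]]]) -> Dict[str, List[str]]:
    """Union the candidates, remembering which generators produced each one."""
    candidates: Dict[str, List[str]] = {}
    for generator in generators:
        for item in generator(user_id):
            candidates.setdefault(item, []).append(generator.__name__)
    return candidates


candidates = retrieve("u1", [from_engaged_authors, from_global_popular])
```

Keeping track of which generator produced each candidate is a design choice of this sketch; it makes it easy to see what kind of inventory each generator contributes.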

Infrastructure Challenges: The backbone of these systems frequently involves inverted indices. For example, you might associate a specific author ID with all the content they've created. During a query, this translates into extracting content based on particular author IDs. Modern systems often extend this approach by using nearest-neighbor lookups on embeddings. Additionally, some systems utilize pre-computed lists, such as those generated by data pipelines that identify the top 100 most popular content pieces globally, serving as another form of candidate generator.
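As a rough illustration of the author-to-content inverted index described above, here is a toy in-memory version. The `AuthorIndex` class and its method names are assumptions made for this sketch; production systems shard and persist such indices.

```python
from collections import defaultdict
from typing import Dict, Iterable, List


class AuthorIndex:
    """Toy inverted index: author ID -> content IDs, oldest first."""

    def __init__(self) -> None:
        self._postings: Dict[str, List[str]] = defaultdict(list)

    def add(self, author_id: str, content_id: str) -> None:
        # Called on the write path whenever an author publishes something.
        self._postings[author_id].append(content_id)

    def lookup(self, author_ids: Iterable[str], per_author: int = 5) -> List[str]:
        # Query path: newest content first, capped per author.
        results: List[str] = []
        for author_id in author_ids:
            # .get() avoids creating empty entries for unknown authors.
            postings = self._postings.get(author_id, [])
            results.extend(list(reversed(postings))[:per_author])
        return results


index = AuthorIndex()
index.add("a1", "c1")
index.add("a1", "c2")
index.add("a2", "c3")
newest = index.lookup(["a1", "a2"], per_author=1)
```

In this sketch a newly added content ID is visible to the very next lookup, which is the property that becomes hard to maintain once the index is distributed.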

For machine learning engineers and data scientists, the process involves devising and implementing various strategies to extract pertinent inventory using diverse heuristics or machine learning models. These strategies are then integrated into the infrastructure layer, forming the core of the retrieval process.

A primary challenge here is ensuring near real-time updates to these indices. Take Facebook as an example: when an author releases new content, the new content ID must promptly appear in the relevant user lists, and the viewer-author mapping must be updated at the same time. Although complex, achieving these real-time updates is essential for the system's accuracy and timeliness.

Major Infrastructure Evolution: The industry has seen significant infrastructural changes over the past decade. About ten years ago, Facebook pioneered the use of local storage for content indexing in Newsfeed, a practice later adopted by Quora, LinkedIn, Pinterest, and others. In this model, the content was indexed on the machines responsible for ranking, and queries were sharded accordingly.

However, with the advancement of network technologies, there's been a shift back to remote storage. Content indexing and data storage are increasingly handled by remote machines, overseen by orchestrator machines that execute calls to these storage systems. This shift, occurring over recent years, marks a significant evolution in data storage and indexing approaches. Despite these advancements, the industry continues to face challenges, particularly around real-time indexing.

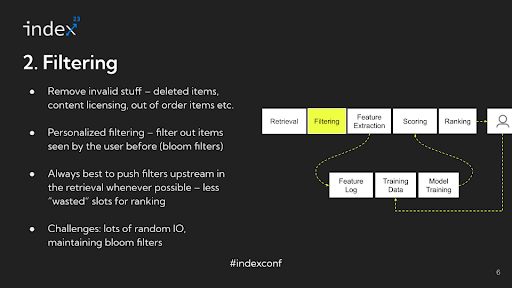

Step 2: Filtering

Overview: The filtering stage in recommendation systems aims to sift out invalid inventory from the pool of potential candidates. This process is not focused on personalization but rather on excluding items that are inherently unsuitable for consideration.

Detailed Analysis: To better understand the filtering process, consider specific examples across different platforms. In e-commerce, an out-of-stock item should not be displayed. On social media platforms, any content that has been deleted since its last indexing must be removed from the pool. For media streaming services, videos lacking licensing rights in certain regions should be excluded. Typically, this stage might involve applying around 13 different filtering rules to each of the 3,000 candidates, a process that requires significant I/O, often random disk I/O, which is hard to manage efficiently.

A key aspect of this process is personalized filtering, often using Bloom filters. For example, on platforms like TikTok, users are not shown videos they've already seen. This involves continuously updating Bloom filters with user interactions to filter out previously viewed content. As user interactions increase, so does the complexity of managing these filters.

Infrastructure Challenges: The primary infrastructure challenge lies in managing the size and efficiency of Bloom filters. They must be kept in memory for speed but can grow large over time, posing risks of data loss and management difficulties. Despite these challenges, the filtering stage is typically seen as one of the more manageable aspects of a recommendation system.
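To make the "already seen" filter concrete, here is a small Bloom filter sketch. The sizing (`num_bits`, `num_hashes`) and the SHA-256 based hashing are arbitrary choices for illustration, not how any particular platform implements it.

```python
import hashlib
from typing import Iterator


class BloomFilter:
    """Fixed-size Bloom filter: no false negatives, occasional false positives."""

    def __init__(self, num_bits: int = 1024, num_hashes: int = 3) -> None:
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = bytearray((num_bits + 7) // 8)

    def _positions(self, key: str) -> Iterator[int]:
        # Derive several bit positions by salting one hash function.
        for seed in range(self.num_hashes):
            digest = hashlib.sha256(f"{seed}:{key}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.num_bits

    def add(self, key: str) -> None:
        for pos in self._positions(key):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def __contains__(self, key: str) -> bool:
        return all(self.bits[pos // 8] & (1 << (pos % 8))
                   for pos in self._positions(key))


# One filter per user, updated as the user watches videos.
seen = BloomFilter()
for video_id in ["v1", "v2"]:
    seen.add(video_id)

candidates = ["v1", "v2", "v3", "v4"]
unseen = [video for video in candidates if video not in seen]
```

A false positive here only means an unseen video is occasionally filtered out, which is usually an acceptable trade for the memory savings. The filter's size is fixed up front, which is one reason heavy users make these structures harder to manage over time.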

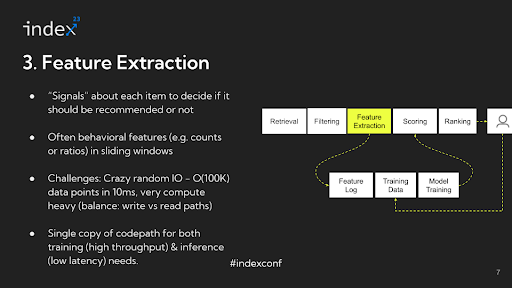

Step 3: Feature extraction

After identifying suitable candidates and filtering out invalid inventory, the next critical stage in a recommendation system is feature extraction. This phase involves gathering all the features and signals that will be used for ranking. These features and signals determine how content is prioritized and presented to the user within the recommendation feed, so this stage is crucial for elevating the most pertinent content and improving the user's experience with the system.

Detailed analysis: In the feature extraction stage, the extracted features are typically behavioral, reflecting user interactions and preferences. A common example is the number of times a user has viewed, clicked on, or purchased something, broken down by specific attributes such as the content's author, topic, or category within a certain timeframe.

For instance, a typical feature might be the frequency of a user clicking on videos created by female publishers aged 18 to 24 over the past 14 days. This feature captures not only the content's attributes, like the age and gender of the publisher, but also the user's interactions within a defined period. Sophisticated recommendation systems might employ hundreds or even thousands of such features, each contributing to a more nuanced and personalized user experience.

Infrastructure challenges: The feature extraction stage is considered the most challenging from an infrastructure perspective in a recommendation system. The primary reason is the extensive data I/O (input/output) involved. For instance, suppose you have thousands of candidates after filtering and thousands of features in the system. This results in a matrix with potentially millions of data points. Each of these data points involves looking up pre-computed quantities, such as how many times a specific event has occurred for a particular combination. This access pattern is mostly random, and the data points must be continually updated to reflect the latest events.

For example, if a user watches a video, the system needs to update several counters associated with that interaction. This requirement leads to a storage system that must support very high write throughput and even higher read throughput. Moreover, the system is latency-bound, often needing to process these millions of data points within tens of milliseconds.
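The counter-based features described above might look like the following sketch: one watch event updates several counters on the write path, and a read sums daily buckets over a trailing window. The key format, the day-level bucketing, and the `WindowedCounters` class are assumptions made for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

DAY = 86_400  # seconds per day


class WindowedCounters:
    """Per-user event counts bucketed by day, readable over a trailing window."""

    def __init__(self) -> None:
        self._counts: Dict[Tuple[str, str, int], int] = defaultdict(int)

    def record(self, user_id: str, keys: Iterable[str], ts: int) -> None:
        # Write path: a single event bumps one counter per attribute key.
        day = ts // DAY
        for key in keys:
            self._counts[(user_id, key, day)] += 1

    def read(self, user_id: str, key: str, now: int, window_days: int) -> int:
        # Read path: sum the buckets for the last `window_days` days, today included.
        today = now // DAY
        return sum(self._counts.get((user_id, key, today - offset), 0)
                   for offset in range(window_days))


counters = WindowedCounters()
counters.record("u1", ["author:a1", "topic:cooking"], ts=10 * DAY + 5)
counters.record("u1", ["author:a1"], ts=1 * DAY)

clicks_14d = counters.read("u1", "author:a1", now=12 * DAY, window_days=14)
clicks_3d = counters.read("u1", "author:a1", now=12 * DAY, window_days=3)
clicks_2d = counters.read("u1", "author:a1", now=12 * DAY, window_days=2)
```

Even in this toy form, one read per (candidate, feature) pair makes the fan-out clear: thousands of candidates times thousands of features means millions of lookups per request.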

Additionally, this stage requires significant computational power. Some of this computation happens on the data ingestion (write) path, and some on the data retrieval (read) path. In most recommendation systems, the bulk of the computational resources is split between feature extraction and model serving, with model inference being the other major consumer of compute. This combination of high data throughput and heavy computation makes the feature extraction stage particularly demanding.

There are even deeper challenges associated with feature extraction and processing, particularly around balancing latency and throughput requirements. While low latency is paramount during live serving of recommendations, the same code path used for feature extraction must also handle batch processing for training models with millions of examples. In that scenario, the problem becomes throughput-bound and less sensitive to latency, in contrast with the real-time serving requirements.

To address this dichotomy, the typical approach is to adapt the same code for different purposes. The code is compiled or configured one way for batch processing, optimizing for throughput, and another way for real-time serving, optimizing for low latency. Achieving this dual optimization can be very challenging because the two modes of operation have such different requirements.

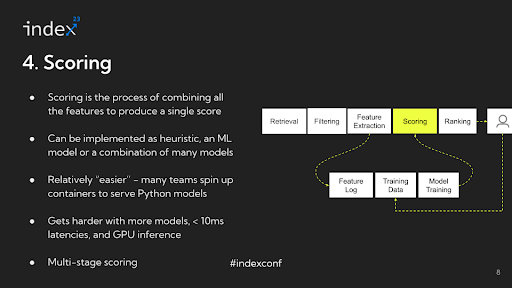

Step 4: Scoring

Once you have identified all the signals for all the candidates, you need to combine them and convert them into a single number per candidate. This is called scoring.

Detailed analysis: The scoring methodology can vary significantly depending on the application. For example, the score for the first item might be 0.7, for the second item 3.1, and for the third item -0.1. The way scoring is implemented can range from simple heuristics to complex machine learning models.

An illustrative example is the evolution of the feed at Quora. Initially, the Quora feed was chronologically sorted, meaning the score was simply the timestamp of content creation. No complex steps were needed, and items were sorted in descending order by creation time. Later, the Quora feed evolved to use a ratio of upvotes to downvotes, with some modifications, as its scoring function.
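The two non-ML scoring functions mentioned above can be sketched in a few lines. The additive `prior` that smooths the vote ratio is an assumption of this sketch; the source only says the ratio was used "with some modifications" and does not describe them.

```python
from typing import Dict, List

Item = Dict[str, float]


def chronological_score(item: Item) -> float:
    """Score is just the creation timestamp: newer content ranks higher."""
    return item["created_at"]


def vote_ratio_score(item: Item, prior: float = 1.0) -> float:
    """Upvote/downvote ratio; `prior` (hypothetical) avoids division by zero."""
    return (item["upvotes"] + prior) / (item["downvotes"] + prior)


items: List[Item] = [
    {"id": "a", "created_at": 100, "upvotes": 9, "downvotes": 1},
    {"id": "b", "created_at": 200, "upvotes": 2, "downvotes": 2},
    {"id": "c", "created_at": 150, "upvotes": 0, "downvotes": 0},
]

by_time = sorted(items, key=chronological_score, reverse=True)
by_votes = sorted(items, key=vote_ratio_score, reverse=True)
```

Swapping the `key` function is all it takes to move between the two feeds, which illustrates why scoring is a separate, replaceable step in the blueprint.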

This example highlights that scoring does not always involve machine learning. However, in more mature or sophisticated settings, scoring often comes from machine learning models, sometimes a combination of several. It's common to use a diverse set of machine learning models, possibly half a dozen to a dozen, each contributing to the final score in a different way. This diversity allows for a more nuanced and tailored approach to ranking content.

Infrastructure challenges: The infrastructure side of scoring has become much easier than it was 5 to 6 years ago. Previously a major challenge, the scoring process has been simplified by advances in technology and methodology. Nowadays, a common approach is to take a model trained in Python, such as XGBoost, spin it up inside a container, and host it as a service behind FastAPI. This method is straightforward and sufficient for most applications.

However, the scenario becomes more complex when dealing with multiple models, tighter latency requirements, or deep learning workloads that require GPU inference. Another interesting aspect is the multi-staged nature of ranking. Different stages often require different models. For instance, in the earlier stages of the process, where there are more candidates to consider, lighter models are typically used. As the process narrows down to a smaller set of candidates, say around 200, more computationally expensive models are employed. Managing these varying requirements, and balancing the trade-offs between models in terms of compute cost and latency, is a crucial part of the recommendation system infrastructure.
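The multi-stage idea, a light model over many candidates followed by a heavier model over a shortlist, can be sketched like this. Both scoring functions below are placeholders invented for the example; in practice they would be real models of very different cost.

```python
from typing import Callable, Iterable, List


def multi_stage_rank(candidates: Iterable[int],
                     cheap_score: Callable[[int], float],
                     expensive_score: Callable[[int], float],
                     keep: int = 200,
                     final: int = 10) -> List[int]:
    """Shortlist with the cheap scorer, then re-rank only the shortlist."""
    shortlist = sorted(candidates, key=cheap_score, reverse=True)[:keep]
    return sorted(shortlist, key=expensive_score, reverse=True)[:final]


expensive_calls: List[int] = []


def cheap(item: int) -> float:
    # Placeholder for a lightweight model.
    return float(item)


def expensive(item: int) -> float:
    # Placeholder for a heavy model; we record calls to show how few are made.
    expensive_calls.append(item)
    return -abs(item - 900)


top = multi_stage_rank(range(1000), cheap, expensive, keep=200, final=3)
```

Here the expensive scorer runs on 200 items instead of 1,000. The trade-off is that anything the cheap scorer drops can never be recovered by the later stage.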

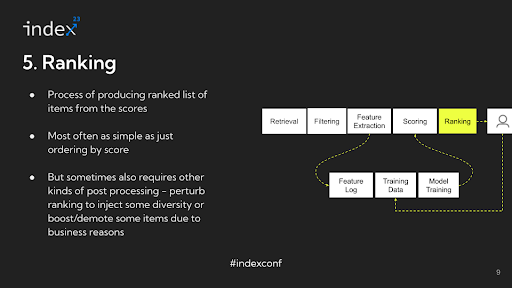

Step 5: Ranking

Following the computation of scores, the final step in the recommendation system is ordering or sorting the items. While often referred to as 'ranking', this stage might be more accurately termed 'ordering', since it primarily involves sorting the items by their computed scores.

Detailed analysis: In its basic form, this sorting process is straightforward: the items are arranged in descending order of their scores, producing a sequence that reflects their relevance or importance. In sophisticated recommendation systems, however, there is more to it than ordering by score. For example, if a user on TikTok sees videos from the same creator one after another, the experience may be less enjoyable even if each video is individually relevant. To address this, these systems often adjust or 'perturb' the scores to improve aspects like diversity in the user's feed. This perturbation is part of a post-processing stage where the initial score-based ordering is modified to maintain other desirable qualities, like variety or freshness, in the recommendations. After this ordering and adjustment process, the results are presented to the user.
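One simple way to perturb scores for diversity is a greedy re-rank that discounts an item each time its creator has already been picked. This is just one possible heuristic, chosen for illustration; the `decay` factor and the item fields are assumptions, and the sketch assumes non-negative scores.

```python
from typing import Dict, List, Union

Item = Dict[str, Union[str, float]]


def rerank_with_diversity(items: List[Item], decay: float = 0.5) -> List[Item]:
    """Greedy re-rank: repeated creators have their scores multiplied by `decay`."""
    remaining = list(items)
    picked_per_creator: Dict[str, int] = {}
    ordered: List[Item] = []

    def adjusted(item: Item) -> float:
        # Assumes non-negative scores, so multiplying by decay < 1 is a penalty.
        return item["score"] * decay ** picked_per_creator.get(item["creator"], 0)

    while remaining:
        best = max(remaining, key=adjusted)
        remaining.remove(best)
        ordered.append(best)
        picked_per_creator[best["creator"]] = picked_per_creator.get(best["creator"], 0) + 1
    return ordered


scored = [
    {"id": "v1", "creator": "A", "score": 0.9},
    {"id": "v2", "creator": "A", "score": 0.8},
    {"id": "v3", "creator": "B", "score": 0.7},
    {"id": "v4", "creator": "A", "score": 0.6},
]

plain_order = [item["id"] for item in sorted(scored, key=lambda i: i["score"], reverse=True)]
diverse_order = [item["id"] for item in rerank_with_diversity(scored)]
```

With a plain sort, creator A takes the first two slots. With the discount, creator B's video moves up to second place even though its raw score is lower.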

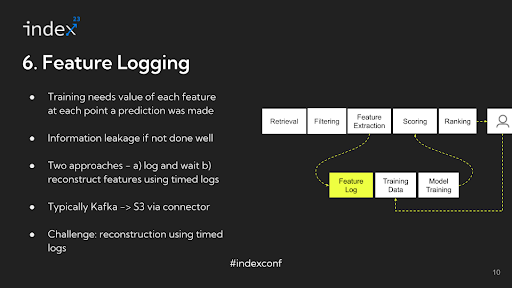

Step 6: Feature logging

When extracting features that will later be used to train a model, it's crucial to log the data accurately. The values extracted during feature extraction are typically logged to systems like Apache Kafka. This logging step is vital for the model training process that occurs later.

For instance, if you plan to train your model 15 days after data collection, you need the data to reflect the state of user interactions at the time of inference, not at the time of training. In other words, if you're analyzing the number of impressions a user had on a particular video, you need to know this number as it was when the recommendation was made, not as it is 15 days later. This approach ensures that the training data accurately represents the user's experience and interactions at the relevant moment.

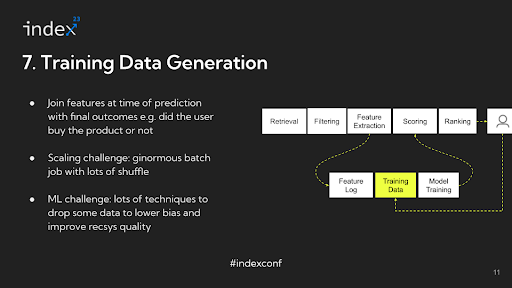

Step 7: Training Data

To facilitate this, a common practice is to log all the extracted data, freeze it in its current state, and then perform joins on this data at a later time when preparing it for model training. This method allows for an accurate reconstruction of the user's interaction state at the time of each inference, providing a reliable basis for training the recommendation model.

How much logged history is needed varies by product. For instance, Airbnb might need to consider a year's worth of data due to seasonality, unlike a platform like Facebook, which might look at a shorter window. This necessitates maintaining extensive logs, which can be challenging and can slow down feature development. In such scenarios, features might instead be reconstructed by traversing a log of raw events at the time of training data generation.

The process of generating training data involves a massive join operation at scale, combining the logged features with actual user actions like clicks or views. This step can be data-intensive and requires efficient handling to manage the data shuffle involved.
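At toy scale, the join between logged features and user actions looks like the sketch below. The record layout (`request_id`, `item_id`, `features`) is a hypothetical schema chosen for the example; at production scale the same join would run in a distributed batch engine rather than in memory.

```python
from typing import Dict, List

# Features frozen at inference time (in practice, read back from a log such as Kafka).
feature_log = [
    {"request_id": "r1", "item_id": "v1", "features": {"impressions_14d": 3}},
    {"request_id": "r1", "item_id": "v2", "features": {"impressions_14d": 0}},
]

# User actions that arrive later.
click_log = [{"request_id": "r1", "item_id": "v2"}]


def build_training_rows(feature_log: List[dict], click_log: List[dict]) -> List[Dict[str, int]]:
    """Join logged features with clicks on (request_id, item_id) to attach labels."""
    clicked = {(click["request_id"], click["item_id"]) for click in click_log}
    rows = []
    for record in feature_log:
        key = (record["request_id"], record["item_id"])
        # Features are used exactly as logged, never recomputed at training time.
        rows.append({**record["features"], "label": int(key in clicked)})
    return rows


training_rows = build_training_rows(feature_log, click_log)
```

Because the feature values come straight from the log, each row reflects what the model would have seen when the recommendation was made.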

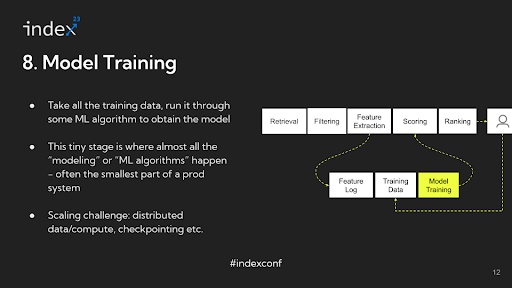

Step 8: Model Training

Finally, once the training data is prepared, the model is trained, and its output is then used for scoring in the recommendation system. Interestingly, across the entire pipeline of a recommendation system, the actual machine learning model training might only constitute a small portion of an ML engineer's time, with the majority spent on data and infrastructure-related tasks.

Infrastructure challenges: For larger-scale operations where there is a significant amount of data, distributed training becomes necessary. In some cases, the models are so large, literally terabytes in size, that they cannot fit into the RAM of a single machine. This necessitates a distributed approach, like using a parameter server to manage different segments of the model across multiple machines.

Another critical aspect in such scenarios is checkpointing. Given that training these large models can take a long time, sometimes 24 hours or more, the risk of job failures must be mitigated. If a job fails, it's important to resume from the last checkpoint rather than starting over from scratch. Implementing effective checkpointing strategies is essential to manage these risks and ensure efficient use of computational resources.
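The resume-from-checkpoint behavior can be illustrated with a deliberately tiny training loop. Everything here is a stand-in: the "model" is a single counter, the checkpoint is a JSON file, and `fail_at` simulates a crash. Real jobs would use their framework's own checkpointing facilities.

```python
import json
import os
import tempfile
from typing import Optional


def save_checkpoint(path: str, state: dict) -> None:
    # Write to a temp file, then rename, so a crash never leaves a half-written file.
    tmp_path = path + ".tmp"
    with open(tmp_path, "w") as handle:
        json.dump(state, handle)
    os.replace(tmp_path, path)


def load_checkpoint(path: str) -> dict:
    if not os.path.exists(path):
        return {"step": 0, "updates": 0}
    with open(path) as handle:
        return json.load(handle)


def train(path: str, total_steps: int, checkpoint_every: int = 10,
          fail_at: Optional[int] = None) -> dict:
    state = load_checkpoint(path)  # resume if a checkpoint exists
    for step in range(state["step"], total_steps):
        if fail_at is not None and step == fail_at:
            raise RuntimeError("simulated job failure")
        state["updates"] += 1  # stand-in for a real gradient update
        state["step"] = step + 1
        if state["step"] % checkpoint_every == 0:
            save_checkpoint(path, state)
    save_checkpoint(path, state)
    return state


ckpt_path = os.path.join(tempfile.mkdtemp(), "ckpt.json")
try:
    train(ckpt_path, total_steps=30, fail_at=25)
except RuntimeError:
    pass  # the job "crashed" after step 25; the last checkpoint was at step 20

resumed_from = load_checkpoint(ckpt_path)["step"]
final_state = train(ckpt_path, total_steps=30)
```

The second run redoes only steps 20 through 29 rather than all 30. The five steps between the last checkpoint and the crash are the price of checkpointing every 10 steps instead of every step.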

However, these infrastructure and scaling challenges are more relevant for large-scale operations like those at Facebook, Pinterest, or Airbnb. In smaller-scale settings, where the data and model complexity are relatively modest, the entire system might fit on a single machine ('single box'). In such cases, the infrastructure demands are significantly less daunting, and the complexities of distributed training and checkpointing may not apply.

Overall, this breakdown highlights the varying infrastructure requirements and challenges in building recommendation systems, depending on the scale and complexity of the operation. The 'blueprint' for constructing these systems therefore needs to be adaptable to these differing scales and complexities.

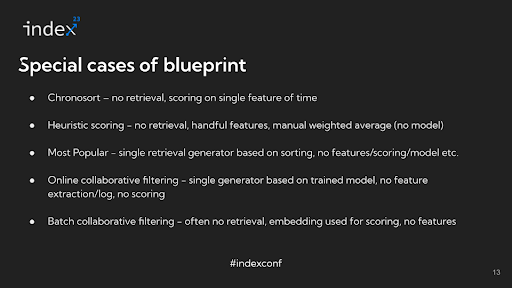

Special Cases of Recommendation System Blueprint

Recommendation systems can take various approaches, each fitting into the broader blueprint but with certain stages either omitted or simplified.

Let's look at a few examples to illustrate this:

Chronological Sorting: In a very basic recommendation system, the content might be sorted chronologically. This approach involves minimal complexity, as there is essentially no retrieval or feature extraction stage beyond using the time at which the content was created. The score in this case is simply the timestamp, and the sorting is based on this single feature.

Handcrafted Features with Weighted Averages: Another approach involves some retrieval and the use of a limited set of handcrafted features, maybe around 10. Instead of using a machine learning model for scoring, a weighted average computed through a hand-tuned formula is used. This method represents an early stage in the evolution of ranking systems.
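A hand-tuned weighted average over a few handcrafted features might look like this. The feature names and weights are invented for illustration and do not come from the talk.

```python
from typing import Dict

# Hypothetical hand-tuned weights for a handful of handcrafted features.
WEIGHTS: Dict[str, float] = {
    "recency": 2.0,
    "author_affinity": 1.0,
    "popularity": 1.0,
}


def weighted_score(features: Dict[str, float],
                   weights: Dict[str, float] = WEIGHTS) -> float:
    """Weighted average of feature values; missing features count as 0."""
    total_weight = sum(weights.values())
    weighted_sum = sum(weight * features.get(name, 0.0)
                       for name, weight in weights.items())
    return weighted_sum / total_weight


fresh_item = {"recency": 1.0, "author_affinity": 0.0, "popularity": 0.0}
familiar_item = {"recency": 0.5, "author_affinity": 1.0, "popularity": 1.0}

fresh_score = weighted_score(fresh_item)
familiar_score = weighted_score(familiar_item)
```

Tuning such a formula means editing the numbers in `WEIGHTS` by hand, which works for around ten features but becomes unmanageable well before hundreds.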

Sorting Based on Popularity: A more specific approach focuses on the most popular content. This might involve a single generator, likely an offline pipeline, that computes the most popular content based on metrics like the number of likes or upvotes. The sorting is then based on these popularity metrics.

Online Collaborative Filtering: Previously considered state-of-the-art, online collaborative filtering involves a single generator that performs an embedding lookup on a trained model. In this case, there is no separate feature extraction or scoring stage; it's all about retrieval based on model-generated embeddings.

Batch Collaborative Filtering: Similar to online collaborative filtering, batch collaborative filtering uses the same approach but in a batch processing context.

These examples illustrate that regardless of the specific architecture or approach of a recommendation system, they are all variations of a fundamental blueprint. In simpler systems, certain stages like feature extraction and scoring may be omitted or greatly simplified. As systems grow more sophisticated, they tend to incorporate more stages of the blueprint, eventually filling out the entire template of a complex recommendation system.

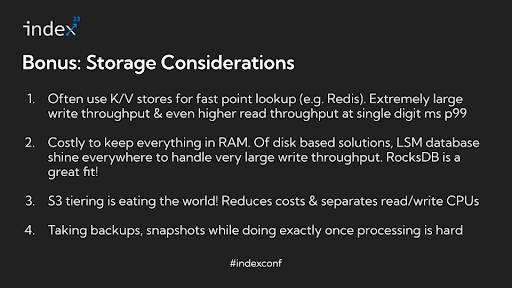

Bonus Section: Storage considerations

Although we have completed our blueprint, along with its special cases, storage considerations still form an important part of any modern recommendation system, so they deserve some attention.

In recommendation systems, key-value (KV) stores play a pivotal role, especially in feature serving. These stores must sustain extremely high write throughput. For instance, on platforms like Facebook, TikTok, or Quora, thousands of writes can occur in response to user interactions. Even more demanding is the read throughput. For a single user request, features for potentially thousands of candidates are extracted, even though only a fraction of these candidates will be shown to the user. As a result, read throughput is orders of magnitude larger than write throughput, often 100 times more. Achieving single-digit millisecond latency (P99) under such conditions is a challenging task.

The writes in these systems are often read-modify-writes, which are more complex than simple appends. At smaller scales, it's feasible to keep everything in RAM using solutions like Redis or in-memory dictionaries, but this can be costly. As scale and cost increase, data needs to be stored on disk. Log-Structured Merge-tree (LSM) databases are commonly used for their ability to sustain high write throughput while providing low-latency lookups. RocksDB, for example, was initially used in Facebook's feed and is a popular choice in such applications. Fennel uses RocksDB for the storage and serving of feature data. Rockset, a search and analytics database, also uses RocksDB as its underlying storage engine. Other LSM-based databases like ScyllaDB are also gaining popularity.

As the amount of data being produced continues to grow, even disk storage is becoming costly. This has led to the adoption of S3 tiering as a necessary solution for managing data volumes of petabytes or more. S3 tiering also facilitates the separation of write and read CPUs, ensuring that ingestion and compaction do not consume CPU resources needed for serving online queries. In addition, systems must manage periodic backups and snapshots, and ensure exactly-once processing for stream processing, further complicating the storage requirements. Local state management, often using solutions like RocksDB, becomes increasingly challenging as the scale and complexity of these systems grow, presenting numerous intriguing storage problems for those delving deeper into this space.

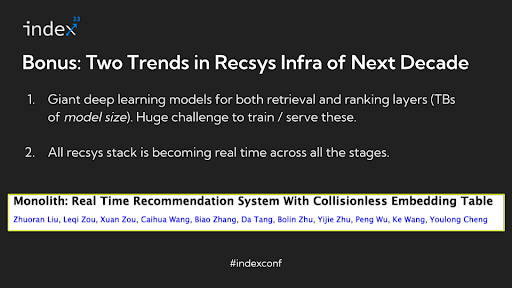

What does the future hold for recommendation systems?

In discussing the future of recommendation systems, Nikhil highlights two significant emerging trends that are converging to create a transformative impact on the industry.

Extremely Large Deep Learning Models: There's a trend towards using deep learning models that are extremely large, with parameter spaces in the range of terabytes. These models are so large that they cannot fit in the RAM of a single machine and are impractical to store on disk. Training and serving such massive models present considerable challenges. Manual sharding of these models across GPU cards and other complex techniques are currently being explored to manage them. Although these approaches are still evolving and the field is largely uncharted, libraries like PyTorch are developing tools to help with these challenges.

Real-Time Recommendation Systems: The industry is shifting away from batch-processed recommendation systems to real-time systems. This shift is driven by the realization that real-time processing leads to significant improvements in key production metrics such as user engagement and gross merchandise value (GMV) for e-commerce platforms. Real-time systems are not only more effective at enhancing user experience but are also easier to manage and debug than batch-processed systems. They tend to be more cost-effective in the long run, as computations are performed on demand rather than pre-computing recommendations for every user, many of whom may not even engage with the platform daily.

A notable example of the intersection of these trends is TikTok's approach, which combines very large embedding models with real-time processing. From the moment a user watches a video, the system updates the embeddings and serves recommendations in real time. This approach exemplifies the direction in which recommendation systems are heading, leveraging both the power of large-scale deep learning models and the immediacy of real-time data processing.

These developments suggest a future where recommendation systems are not only more accurate and responsive to user behavior but also more complex in terms of the infrastructure required to support them. This intersection of large model capabilities and real-time processing is poised to be a significant area of innovation and growth in the field.

Interested in exploring more?

- Explore Fennel's real-time feature store for machine learning

For an in-depth understanding of how a real-time feature store can enhance machine learning capabilities, consider exploring Fennel. Fennel offers solutions tailored for modern recommendation systems. Visit Fennel or read the Fennel Docs.

- Find out more about the Rockset search and analytics database

Learn how Rockset serves many recommendation use cases through its performance, real-time update capability, and vector search functionality. Read more about Rockset or try Rockset for free.