I spent the spring of my junior year interning at Rockset, and it couldn't have been a better decision. When I first arrived at the office on a sunny day in San Mateo, I had no idea that I was about to meet so many systems engineering gurus, or that I was about to eat immensely good food from the festive neighboring streets. Working with my talented and resourceful mentor, Ben (Software Engineer, Systems), I've been able to learn more than I ever thought I could in three months! I now see myself as fairly well seasoned at C++ development, more knowledgeable about different database architectures, and slightly better at Super Smash. Only slightly.

One thing I really appreciated was that even on the first day of the internship, I was able to push meaningful code by implementing the SUFFIXES SQL function, something that Rockset's customers wanted and that directly impacted them.

Over the course of my internship at Rockset, I got to dive deep into many aspects of our systems backend, two of which I'll go into in more detail. I got myself into far more segfaults and long hours of debugging in GDB than I bargained for, and I can now say I came out the better for it. :D

Query Sort Optimization

One of my favorite projects during this internship was optimizing our sort process for queries that use the ORDER BY keyword in SQL. For example, queries like:

SELECT a FROM b ORDER BY c OFFSET 1000

run up to 45% faster with the offset-based optimization added, which is a big performance improvement, especially for queries over large amounts of data.

In Rockset, we use operators to split up responsibilities in the execution of a query, based on different processes such as scans, sorts and joins. One such operator is the SortOperator, which handles sorting for ordered queries. The SortOperator relied on a standard library sort, which doesn't respond to timeouts during query execution because it has no mechanism for handling interrupts. This means that with a standard sort, the query deadline isn't enforced, and CPU is wasted on queries that should already have timed out.

Sorting algorithms in current standard libraries are a strategic combination of quicksort, heapsort and insertion sort, called introsort. By using a loop together with tail recursion, we can reduce the number of recursive calls the sort makes, which shaves a significant amount of time off the sort. Recursion also stops once it reaches a depth limit, after which heapsort takes over, and intervals that become small enough are finished with insertion sort. The number of comparisons and recursive calls a sort makes is critical to its performance, and my project was to reduce both in order to speed up larger sorts.

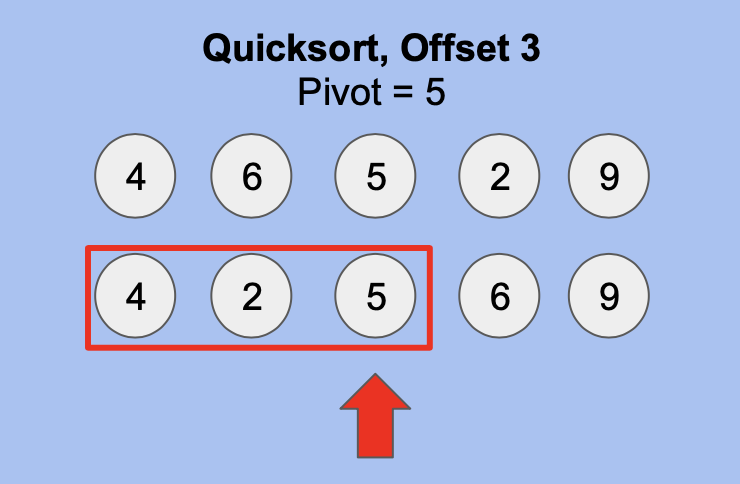

For the offset optimization, I was able to cut recursive calls by an amount proportional to the offset by keeping track of the pivots used by earlier recursive calls. With my modifications to introsort, we know that after a single partitioning step, the pivot is in its correct final position. Using that position, we can eliminate the recursive calls on the elements before the pivot whenever the pivot's position is less than or equal to the requested offset.

For example, in the image above, we can halt recursion on the values before and including the pivot, 5, since its position is <= offset.

To serve cancellation requests, I had to make sure these checks were both timely and executed at regular intervals, without increasing the latency of sorts. Tying cancellation checks 1:1 to the number of comparisons or recursive calls would have been very damaging to latency. The solution was to correlate cancellation checks with recursion depth instead: through subsequent benchmarking, I found that at recursion depths above 28, about 1 second of execution time elapsed between levels. For example, between recursion depths of 28 and 29, there's ~1 second of execution. Similar benchmarks were used to determine when to check for cancellations in the heapsort.

This part of my internship was heavily performance-focused and involved meticulous benchmarking of query execution times, which helped me understand how to weigh tradeoffs in engineering. Performance is critical, since it's likely a deciding factor in whether to use Rockset: it determines how fast we can process data.

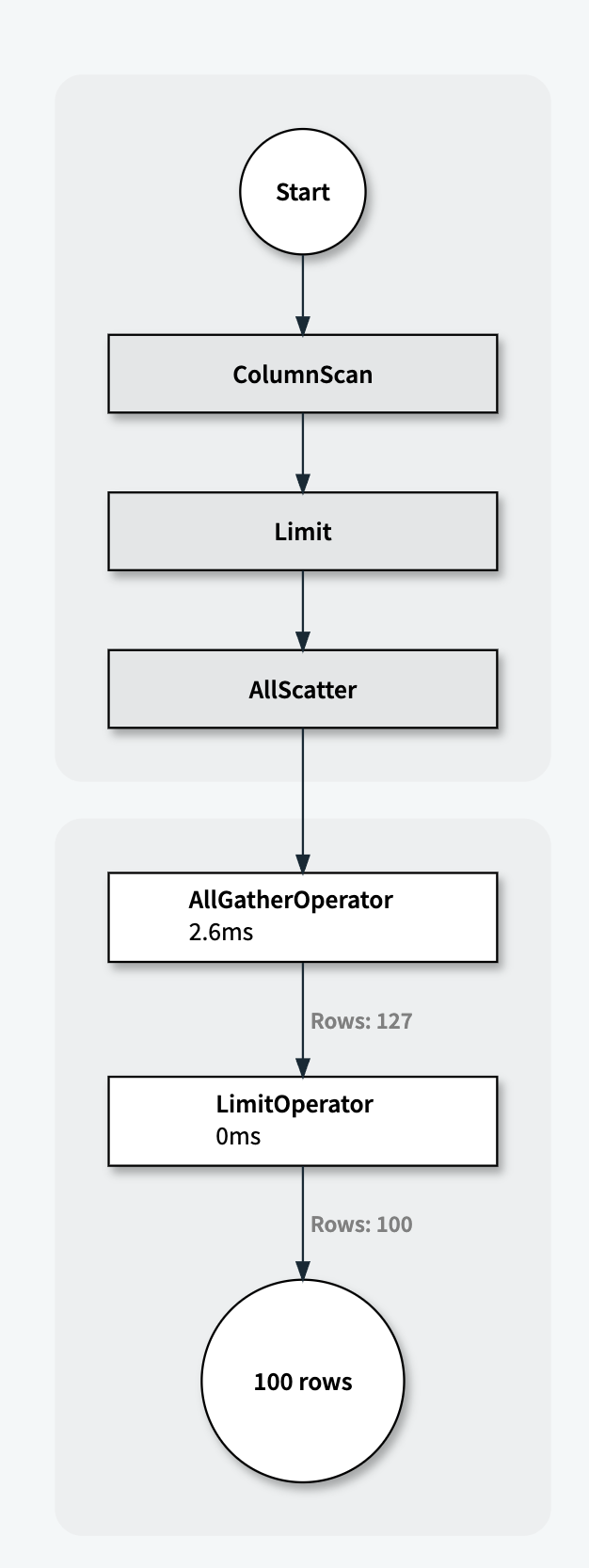

Batching QueryStats to Redis

Another interesting project I worked on was decreasing the latency of Rockset's Query Stats publisher after a query runs. Query Stats are important because they provide visibility into where resources like CPU time and memory are used during query execution. These stats help our backend team improve query execution performance. There are many different kinds of stats, especially for different operators, which show how long their processes take and how much CPU they use. In the future, we plan to share a visual representation of these stats with our users so they can better understand resource utilization in Rockset's distributed query engine.

We currently send the stats from the operators used in executing a query to Redis, where they are stored as an intermediate step until our API server pulls them into an internal tool. When executing complicated or larger queries, these stats are slow to populate, mostly because of the latency of tens of thousands of round trips to Redis.

My task was to decrease the number of trips to Redis by batching them by queryID, and to make sure query stats still get populated while preventing spikes in the number of query stats waiting to be pushed. This efficiency improvement would help us scale our query stats system to larger, more complex queries. The problem was particularly interesting to me because it deals with exchanging data between two different systems in a batched and ordered fashion.

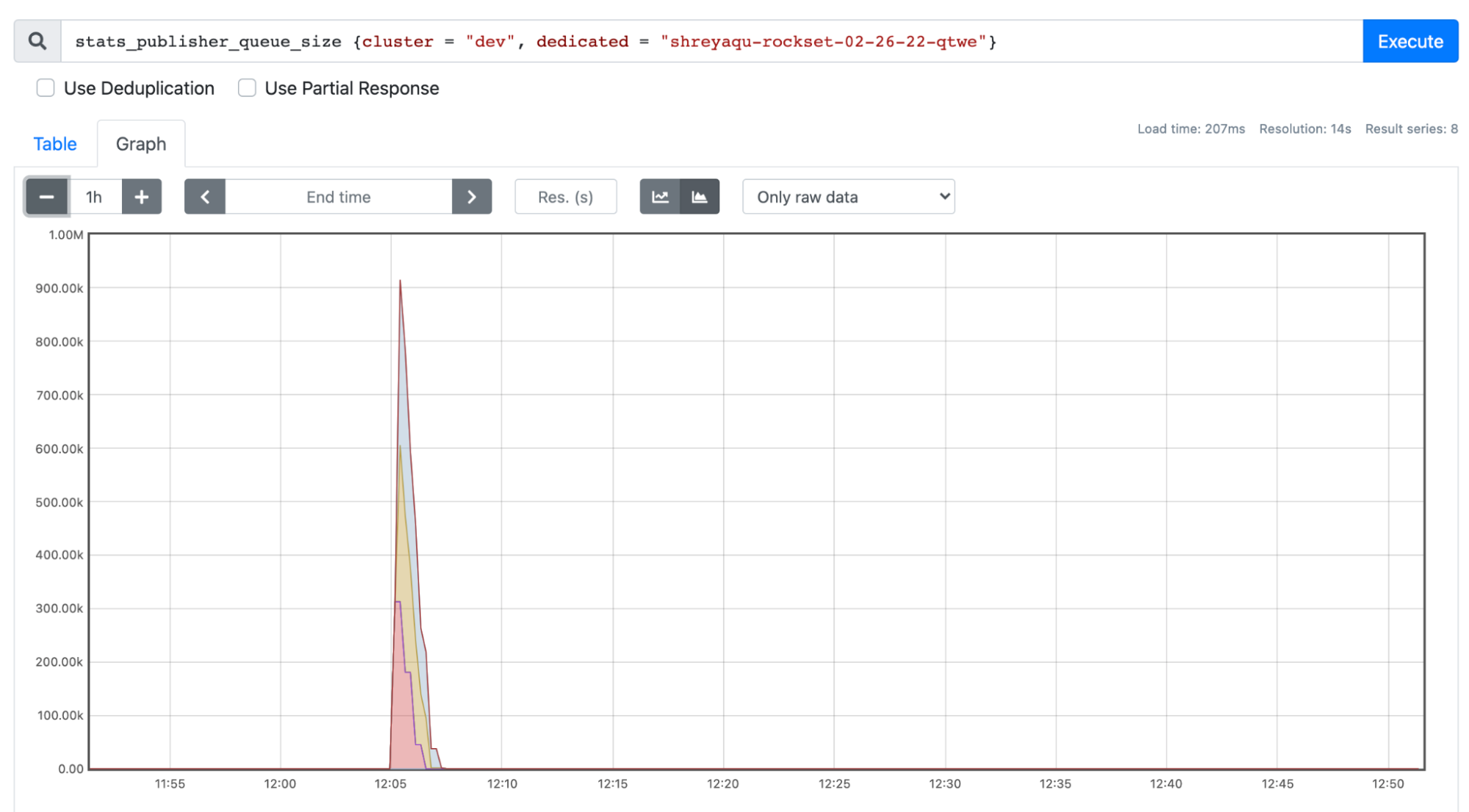

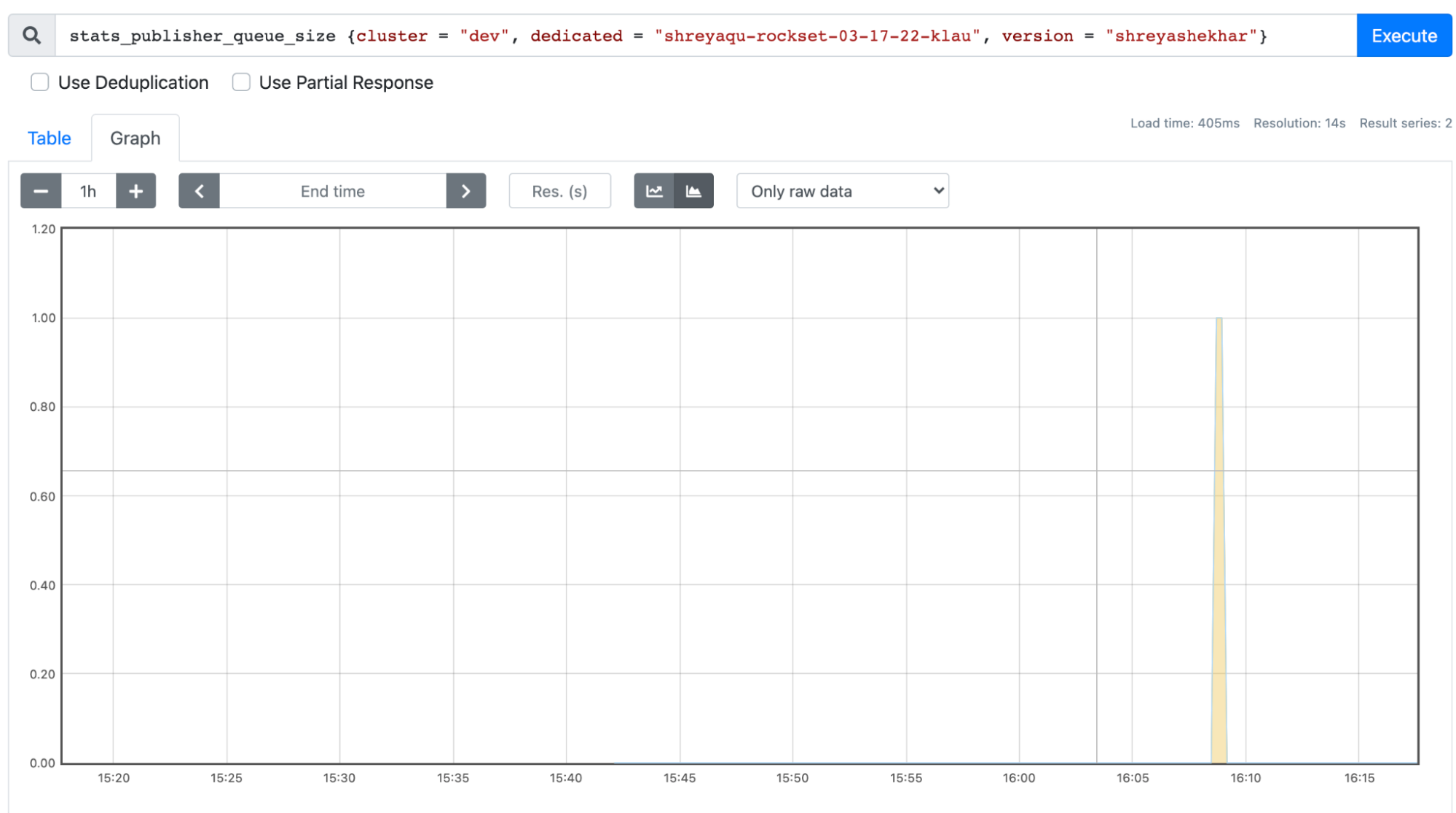

The solution involved a thread-safe map of queryID -> queue, used to store and unload the query stats specific to each queryID. These stats were sent to Redis in as few trips as possible by eagerly unloading a queryID's queue every time it was populated and pushing all of the stats it held to Redis at once. I also refactored the Redis API code we used to send query stats, adding a function that sends multiple stats at a time instead of just one. This dramatically reduced the spikes in query stats waiting to be sent to Redis, never letting multiple query stats from the same queryID fill up the queue.

As shown in the screenshots above, the stats publisher queue size dropped drastically, from over 900k to a maximum of 1!

More About the Culture & The Experience

What I really appreciated about my internship at Rockset was the amount of autonomy I had over my work and the high-quality mentorship I received. My daily work felt similar to that of a full-time engineer on the systems team, since I could choose and work on tasks I found interesting while connecting with different engineers to learn more about the code I was working on. I was even able to reach out to other teams such as Sales and Marketing to learn more about their work and help out with areas I found interesting.

Another aspect I loved was the close-knit group of engineers at Rockset, something I got a lot of exposure to at Hack Week, a week-long company hackathon held in Lake Tahoe earlier this year. It was a valuable chance for me to meet other engineers at the company, and for all of us to hack away at features we felt should be integrated into Rockset's product, free of our normal daily tasks and responsibilities. I thought this was a great idea, since it encouraged engineers to work on product ideas they were personally invested in and increased everyone's sense of ownership. Not to mention, everyone from engineers to executives was present and working together at the hackathon, which made for an open and endearing company environment. We also had countless opportunities for bonding within the engineering teams on this trip, one of which was a huge loss for me in poker. And of course, the high-stakes games of Super Smash.

Overall, my experience working as an intern at Rockset was truly everything I had hoped for, and more.

Shreya Shekhar is studying Electrical Engineering & Computer Science and Business Administration at U.C. Berkeley.

Rockset is the leading Real-time Analytics Platform Built for the Cloud, delivering fast analytics on real-time data with surprising efficiency. Learn more at rockset.com.