The intelligence in artificial intelligence is rooted in vast amounts of data upon which machine learning (ML) models are trained, with recent large language models like GPT-4 and Gemini processing trillions of tiny units of data called tokens. This training dataset doesn’t simply consist of raw information scraped from the internet. For the training data to be effective, it also needs to be labeled.

Data labeling is a process in which raw, unrefined data is annotated or tagged to add context and meaning. This improves the accuracy of model training, because you are in effect marking or pointing out what you want your system to recognize. Some data labeling examples include sentiment analysis in text, identifying objects in images, transcribing words in audio, and labeling actions in video sequences.

It’s no surprise that data labeling quality has a huge impact on training. Originally coined by William D. Mellin in 1957, “Garbage in, garbage out” has become something of a mantra in machine learning circles. ML models trained on incorrect or inconsistent labels will have a difficult time adapting to unseen data and may exhibit biases in their predictions, causing inaccuracies in the output. Low-quality data can also compound, causing issues further downstream.

This comprehensive guide to data labeling systems will help your team boost data quality and gain a competitive edge no matter where you are in the annotation process. First I’ll focus on the platforms and tools that make up a data labeling architecture, exploring the trade-offs of various technologies, and then I’ll move on to other key considerations, including reducing bias, protecting privacy, and maximizing labeling accuracy.

Understanding Data Labeling in the ML Pipeline

The training of machine learning models generally falls into three categories: supervised, unsupervised, and reinforcement learning. Supervised learning relies on labeled training data, which pairs input data points with correct output labels. The model learns a mapping from input features to output labels, enabling it to make predictions when presented with unseen input data. This is in contrast with unsupervised learning, where unlabeled data is analyzed in search of hidden patterns or data groupings. With reinforcement learning, the training follows a trial-and-error process, with humans involved primarily in the feedback stage.
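To make the supervised case concrete, the sketch below “trains” a nearest-centroid classifier: it averages the feature vectors that share a label, then assigns an unseen input to the label with the closest average. This is a deliberately tiny stand-in chosen for readability, not how large models are trained; the function names and the toy data are hypothetical.

```python
from collections import defaultdict
from math import dist


def fit_centroids(features, labels):
    """Supervised 'training': average the feature vectors that share a label."""
    sums, counts = {}, defaultdict(int)
    for x, y in zip(features, labels):
        acc = sums.setdefault(y, [0.0] * len(x))
        for d, value in enumerate(x):
            acc[d] += value
        counts[y] += 1
    return {y: [s / counts[y] for s in acc] for y, acc in sums.items()}


def predict(centroids, x):
    """Map an unseen input to the label whose centroid is nearest (Euclidean)."""
    return min(centroids, key=lambda label: dist(centroids[label], x))


X = [[1.0, 1.2], [0.9, 1.0], [4.0, 4.2], [4.1, 3.9]]  # input features
y = ["cat", "cat", "dog", "dog"]                      # human-provided labels
model = fit_centroids(X, y)
print(predict(model, [1.1, 0.8]))  # → cat
```

Without the labels in `y`, there would be nothing to average per class, which is exactly why supervised learning depends on labeled data.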

Most modern machine learning models are trained via supervised learning. Because high-quality training data is so important, it must be considered at each step of the training pipeline, and data labeling plays a vital role in this process.

Before data can be labeled, it must first be collected and preprocessed. Raw data is collected from a wide variety of sources, including sensors, databases, log files, and application programming interfaces (APIs). It often has no standard structure or format and contains inconsistencies such as missing values, outliers, or duplicate records. During preprocessing, the data is cleaned, formatted, and transformed so it is consistent and compatible with the data labeling process. A variety of techniques may be used. For example, rows with missing values can be removed or updated via imputation, a method in which values are estimated via statistical analysis, and outliers can be flagged for investigation.
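The cleaning steps above can be sketched in plain Python. This minimal example assumes records arrive as dictionaries with one numeric field of interest; median imputation and the 1.5 × IQR outlier rule are illustrative choices, not the only valid ones, and the function and field names are hypothetical.

```python
import statistics


def preprocess(rows, field):
    """Deduplicate records, impute missing `field` values, and flag outliers."""
    # 1. Drop exact duplicate records, keeping the first occurrence.
    seen, unique = set(), []
    for row in rows:
        key = tuple(sorted(row.items()))
        if key not in seen:
            seen.add(key)
            unique.append(dict(row))  # copy so the caller's data is untouched

    # 2. Impute missing values with the median of the observed values.
    observed = [r[field] for r in unique if r[field] is not None]
    median = statistics.median(observed)
    for r in unique:
        r["imputed"] = r[field] is None
        if r["imputed"]:
            r[field] = median

    # 3. Flag (rather than delete) outliers with the 1.5 * IQR rule.
    q1, _, q3 = statistics.quantiles(observed, n=4, method="inclusive")
    spread = q3 - q1
    low, high = q1 - 1.5 * spread, q3 + 1.5 * spread
    for r in unique:
        r["outlier"] = not (low <= r[field] <= high)
    return unique
```

Flagging instead of deleting outliers keeps the decision with a human, since an extreme value may be a genuine edge case worth labeling.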

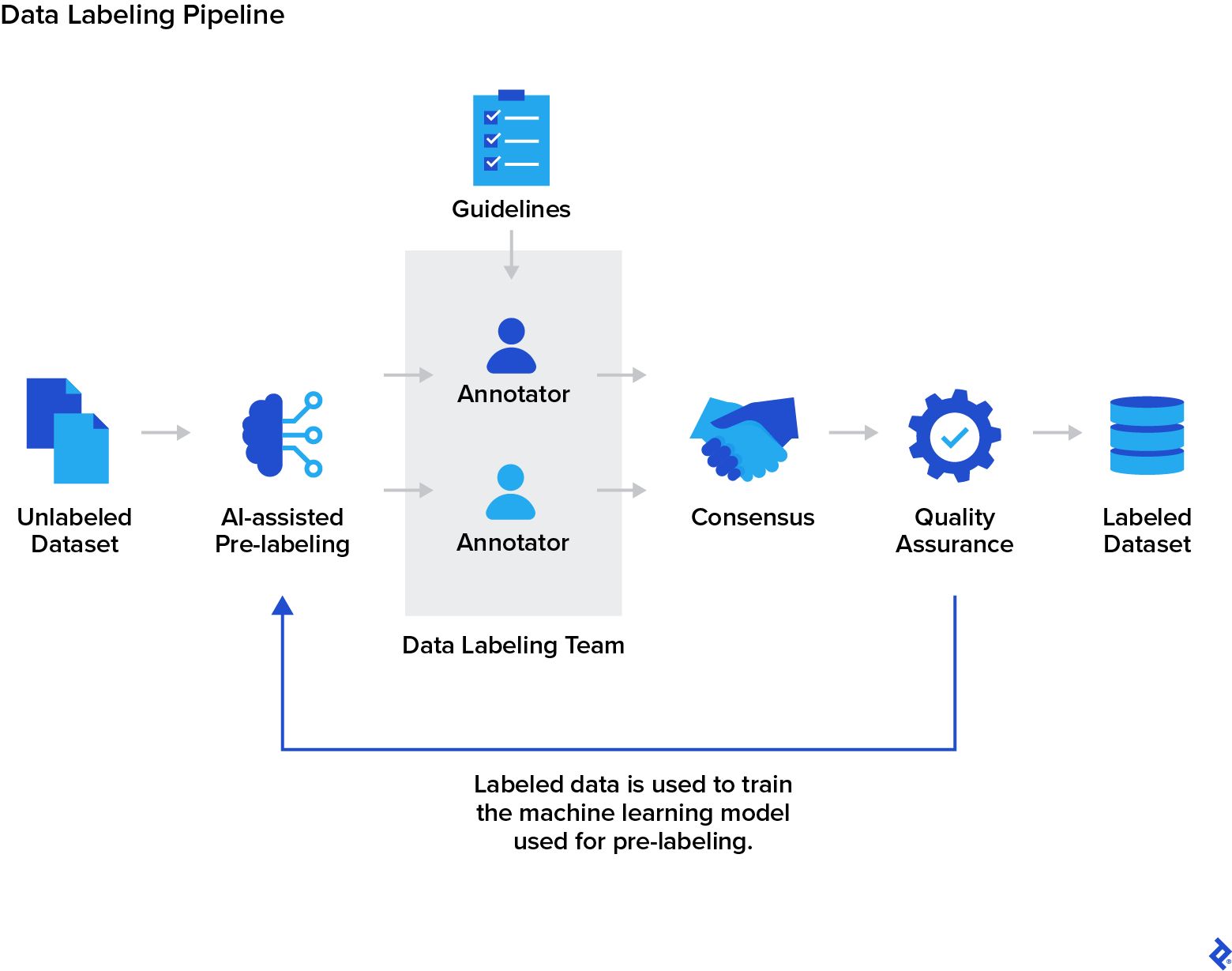

Once the data is preprocessed, it is labeled or annotated to provide the ML model with the information it needs to learn. The specific approach depends on the type of data being processed; annotating images requires different techniques than annotating text. While automated labeling tools exist, the process benefits heavily from human intervention, especially when it comes to accuracy and avoiding biases introduced by AI. After the data is labeled, the quality assurance (QA) stage ensures the accuracy, consistency, and completeness of the labels. QA teams often employ double-labeling, in which multiple labelers annotate a subset of the data independently and their results are compared, with any differences reviewed and resolved.
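The comparison step in double-labeling can be as simple as grouping every labeler’s answer by item and surfacing the items where the answers differ. In this minimal sketch, the input layout (labeler name mapped to `{item_id: label}`) and the function name are assumptions for illustration.

```python
from collections import defaultdict


def find_disagreements(annotations):
    """annotations: {labeler: {item_id: label}} from independent labelers.

    Returns {item_id: {labeler: label}} for every item that received
    conflicting labels, so QA reviewers can resolve them."""
    by_item = defaultdict(dict)
    for labeler, labels in annotations.items():
        for item_id, label in labels.items():
            by_item[item_id][labeler] = label
    return {
        item_id: votes
        for item_id, votes in sorted(by_item.items())
        if len(votes) > 1 and len(set(votes.values())) > 1
    }
```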

Next, the model undergoes training, using the labeled data to learn the patterns and relationships between the inputs and the labels. The model’s parameters are adjusted in an iterative process to make its predictions more accurate with respect to the labels. To evaluate the effectiveness of the model, it is then tested with labeled data it has not seen before. Its predictions are quantified with metrics such as accuracy, precision, and recall. If a model is performing poorly, adjustments can be made before retraining, one of which is improving the training data to address noise, biases, or data labeling issues. Finally, the model can be deployed into production, where it can interact with real-world data. It is important to monitor the performance of the model to identify any issues that might require updates or retraining.
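The three evaluation metrics named above can be computed directly from a held-out labeled test set. This minimal sketch treats one class as “positive”; the function name and the spam/ham example are hypothetical.

```python
def evaluate(y_true, y_pred, positive):
    """Accuracy, precision, and recall of predictions against held-out labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)  # true positives
    fp = sum(t != positive and p == positive for t, p in pairs)  # false alarms
    fn = sum(t == positive and p != positive for t, p in pairs)  # misses
    correct = sum(t == p for t, p in pairs)
    return {
        "accuracy": correct / len(pairs),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


y_true = ["spam", "spam", "ham", "ham", "spam"]  # labels from annotators
y_pred = ["spam", "ham", "ham", "spam", "spam"]  # model output
print(evaluate(y_true, y_pred, positive="spam"))
```

Because every metric is computed against the test labels, mislabeled test data distorts the evaluation just as mislabeled training data distorts the model.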

Identifying Data Labeling Types and Methods

Before designing and building a data labeling architecture, all of the data types that will be labeled must be identified. Data can come in many different forms, including text, images, video, and audio. Each data type comes with its own unique challenges, requiring a distinct approach for accurate and consistent labeling. Additionally, some data labeling software includes annotation tools geared toward specific data types. Many annotators and annotation teams also specialize in labeling certain data types. The choice of software and team will depend on the project.

For example, the data labeling process for computer vision might include categorizing digital images and videos, and creating bounding boxes to annotate the objects within them. Waymo’s Open Dataset is a publicly available example of a labeled computer vision dataset for autonomous driving; it was labeled by a combination of private and crowdsourced data labelers. Other applications for computer vision include medical imaging, surveillance and security, and augmented reality.
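A computer vision label usually combines an image-level category with a list of object boxes. The record below is a hypothetical schema, loosely following the common convention of storing a box as a top-left corner plus width and height; real tools each define their own export formats. It also includes one basic sanity check that a labeling pipeline might run on every annotation.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class BoundingBox:
    """One labeled object. (x, y) is the top-left corner in pixels, and the
    box extends `width` pixels right and `height` pixels down."""
    label: str
    x: float
    y: float
    width: float
    height: float

    def corners(self):
        """Return (x_min, y_min, x_max, y_max)."""
        return (self.x, self.y, self.x + self.width, self.y + self.height)


@dataclass
class ImageAnnotation:
    """All labels for one image: an image-level category plus object boxes."""
    image_id: str
    width: int
    height: int
    category: str
    boxes: list[BoundingBox] = field(default_factory=list)

    def invalid_boxes(self):
        """Boxes with non-positive size or that extend past the image edges."""
        bad = []
        for box in self.boxes:
            x0, y0, x1, y1 = box.corners()
            if (box.width <= 0 or box.height <= 0 or x0 < 0 or y0 < 0
                    or x1 > self.width or y1 > self.height):
                bad.append(box)
        return bad
```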

The text analyzed and processed by natural language processing (NLP) algorithms can be labeled in a variety of different ways, including sentiment analysis (identifying positive or negative emotions), keyword extraction (finding relevant phrases), and named entity recognition (pointing out specific people or places). Passages of text can also be classified; examples include identifying whether or not an email is spam, or identifying the language of the text. NLP models can be used in applications such as chatbots, coding assistants, translators, and search engines.
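Text labels typically come in two shapes: document-level labels (sentiment, spam, language) and span-level labels such as named entities, stored as character offsets into the text. The structure below is a hypothetical minimal schema; the label names are illustrative, and annotation tools each use their own formats.

```python
from dataclasses import dataclass, field


@dataclass
class EntitySpan:
    start: int  # character offset where the entity begins (inclusive)
    end: int    # character offset where the entity ends (exclusive)
    label: str  # e.g. "PERSON" or "LOCATION"


@dataclass
class TextAnnotation:
    text: str
    doc_labels: dict = field(default_factory=dict)  # e.g. {"sentiment": "neutral"}
    entities: list[EntitySpan] = field(default_factory=list)

    def entity_texts(self):
        """Return (surface text, label) pairs so reviewers can eyeball spans."""
        return [(self.text[e.start:e.end], e.label) for e in self.entities]


doc = TextAnnotation(
    "Ada Lovelace was born in London.",
    {"sentiment": "neutral", "language": "en", "spam": False},
    [EntitySpan(0, 12, "PERSON"), EntitySpan(25, 31, "LOCATION")],
)
print(doc.entity_texts())  # → [('Ada Lovelace', 'PERSON'), ('London', 'LOCATION')]
```

Storing offsets rather than copied strings keeps each entity unambiguous when the same word appears more than once in a text.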

Audio data is used in a variety of applications, including sound classification, voice recognition, speech recognition, and acoustic analysis. Audio data might be annotated to identify specific words or phrases (like “Hey Siri”), classify different types of sounds, or transcribe spoken words into written text.

Many ML models are multimodal; in other words, they are capable of interpreting information from multiple sources simultaneously. A self-driving car might combine visual information, like traffic signs and pedestrians, with audio data, such as a honking horn. With multimodal data labeling, human annotators combine and label different types of data, capturing the relationships and interactions between them.

Another important consideration before building your system is the right data labeling method for your use case. Data labeling has traditionally been performed by human annotators; however, advancements in ML are increasing the potential for automation, making the process more efficient and affordable. Although the accuracy of automated labeling tools is improving, they still generally cannot match the accuracy and reliability that human labelers provide.

Hybrid or human-in-the-loop (HTL) data labeling combines the strengths of human annotators and software. With HTL data labeling, AI is used to automate the initial creation of the labels, and then the results are validated and corrected by human annotators. The corrected annotations are added to the training dataset and used to improve the performance of the software. The HTL approach offers efficiency and scalability while maintaining accuracy and consistency, and is currently the most popular method of data labeling.
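One round of the HTL loop can be sketched as follows. The `predict` and `review` callables are hypothetical stand-ins for a pre-labeling model and a human annotator, and routing only low-confidence predictions to humans (with a 0.9 threshold) is an assumption of this sketch; some teams have humans validate every pre-label.

```python
def human_in_the_loop(items, predict, review, threshold=0.9):
    """Run one round of hybrid labeling.

    predict(item) -> (label, confidence) stands in for an ML pre-labeler.
    review(item, suggested_label) -> label stands in for a human annotator.
    Returns the labeled dataset and the human corrections, which can be fed
    back as new training data for the pre-labeling model."""
    labeled, corrections = [], []
    for item in items:
        label, confidence = predict(item)
        if confidence < threshold:          # uncertain: send to a human
            final = review(item, label)
            if final != label:
                corrections.append((item, final))
            label = final
        labeled.append((item, label))
    return labeled, corrections
```

The `corrections` list is the valuable by-product: it contains exactly the examples the model got wrong, which is the feedback loop the HTL approach relies on.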

Choosing the Components of a Data Labeling System

When designing a data labeling architecture, the right tools are key to making sure that the annotation workflow is efficient and reliable. There are a variety of tools and platforms designed to optimize the data labeling process, but depending on your project’s requirements, you may find that building a data labeling pipeline with in-house tools is the most appropriate choice for your needs.

Core Steps in a Data Labeling Workflow

The labeling pipeline begins with data collection and storage. Information can be gathered manually through techniques such as interviews, surveys, or questionnaires, or collected in an automated manner via web scraping. If you don’t have the resources to collect data at scale, open-source datasets from platforms such as Kaggle, the UCI Machine Learning Repository, Google Dataset Search, and GitHub are a good alternative. Additionally, data can be artificially generated using mathematical models to augment real-world data. To store data, cloud platforms such as Amazon S3, Google Cloud Storage, or Microsoft Azure Blob Storage scale with your needs, providing virtually unlimited storage capacity, and offer built-in security features. However, if you are working with highly sensitive data with regulatory compliance requirements, on-premises storage is often required.

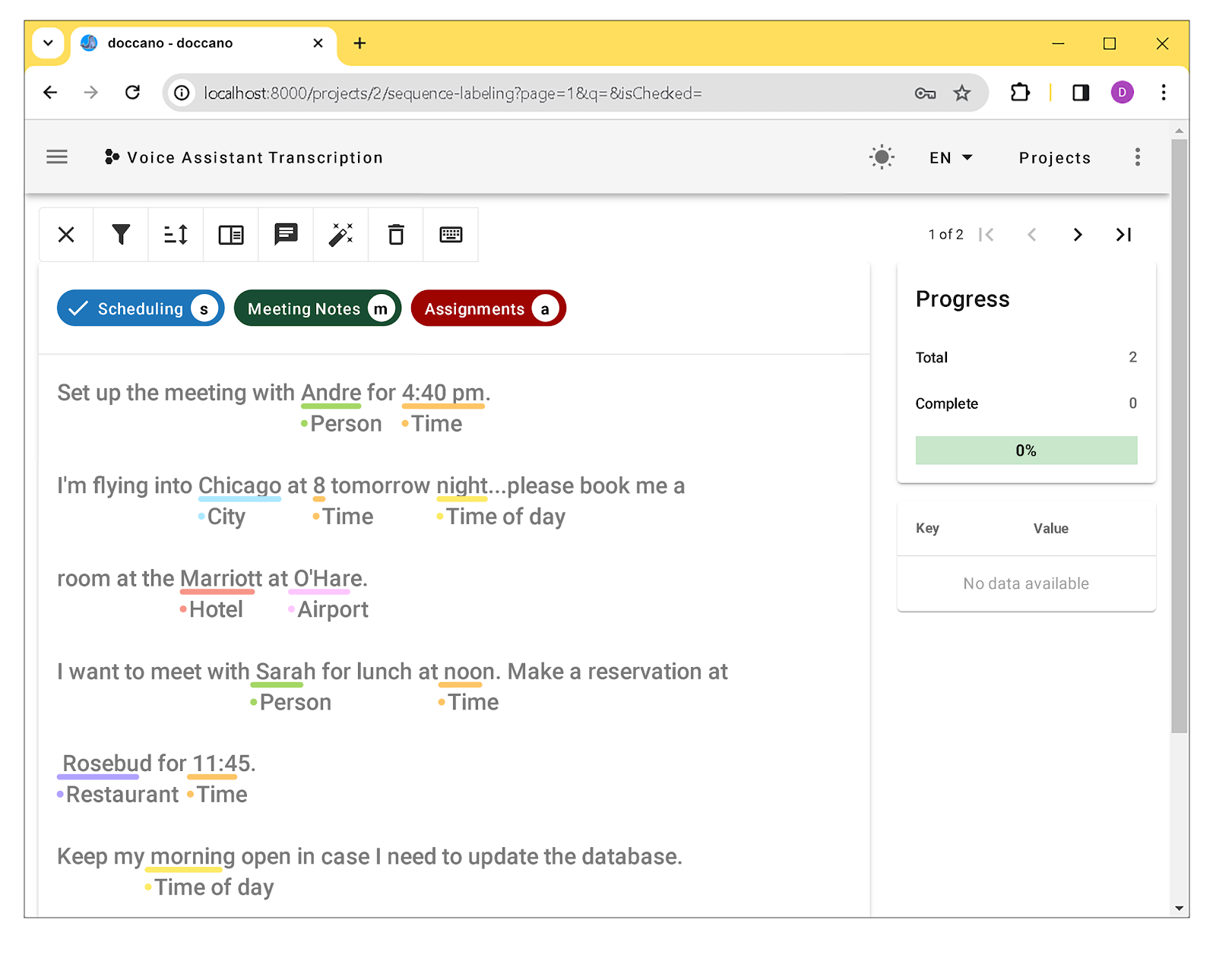

Once the data is collected, the labeling process can begin. The annotation workflow can vary depending on data types, but generally, each significant data point is identified and classified using an HTL approach. A variety of platforms are available to streamline this complex process, including both open-source (Doccano, Label Studio, CVAT) and commercial (Scale Data Engine, Labelbox, Supervisely, Amazon SageMaker Ground Truth) annotation tools.

After the labels are created, they are reviewed by a QA team to ensure accuracy. Any inconsistencies are typically resolved at this stage through manual approaches, such as majority decision, benchmarking, and consultation with subject matter experts. Inconsistencies can also be mitigated with automated methods, for example, using a statistical algorithm like the Dawid-Skene model to aggregate labels from multiple annotators into a single, more reliable label. Once the correct labels are agreed upon by the key stakeholders, they are referred to as the “ground truth” and can be used to train ML models. Many free and open-source tools have basic QA workflow and data validation functionality, while commercial tools provide more advanced features, such as machine validation, approval workflow management, and quality metrics tracking.
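To illustrate the automated route, here is a compact sketch of the Dawid-Skene expectation-maximization (EM) algorithm in plain Python. It alternates between estimating each annotator’s confusion matrix (how often they give each answer for each true class) and re-estimating the probable true label of every item, so reliable annotators end up carrying more weight than noisy ones. The function name, the vote-proportion initialization, the fixed iteration count, and the additive smoothing constant are assumptions of this sketch; a production version would work in log space to avoid numerical underflow and would test for convergence.

```python
from collections import Counter, defaultdict


def dawid_skene(triples, classes, iterations=50, smoothing=0.01):
    """Estimate a true label per item from (item, annotator, label) triples.

    Every label must appear in `classes`. Returns {item: most probable class}."""
    by_item = defaultdict(list)
    for item, annotator, label in triples:
        by_item[item].append((annotator, label))
    items = sorted(by_item)
    annotators = sorted({a for _, a, _ in triples})

    # Initialize each item's class posterior from its vote proportions.
    post = {}
    for i in items:
        votes = Counter(label for _, label in by_item[i])
        post[i] = {k: votes[k] / len(by_item[i]) for k in classes}

    for _ in range(iterations):
        # M-step: class priors, plus one confusion matrix per annotator where
        # conf[a][true][given] estimates P(a answers `given` | true class).
        prior = {k: sum(post[i][k] for i in items) / len(items) for k in classes}
        conf = {a: {k: dict.fromkeys(classes, smoothing) for k in classes}
                for a in annotators}
        for i in items:
            for a, given in by_item[i]:
                for k in classes:
                    conf[a][k][given] += post[i][k]
        for a in annotators:
            for k in classes:
                row_total = sum(conf[a][k].values())
                for g in classes:
                    conf[a][k][g] /= row_total

        # E-step: posterior over the true class of each item.
        for i in items:
            scores = {}
            for k in classes:
                score = prior[k]
                for a, given in by_item[i]:
                    score *= conf[a][k][given]
                scores[k] = score
            total = sum(scores.values())
            post[i] = {k: s / total for k, s in scores.items()}

    return {i: max(classes, key=lambda k: post[i][k]) for i in items}
```

Unlike a simple majority vote, the learned confusion matrices let the model discount an annotator who, for instance, answers “cat” for everything.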

Data Labeling Tool Comparison

Open-source tools are a good starting point for data labeling. While their functionality may be limited compared to commercial tools, the absence of licensing fees is a significant advantage for smaller projects. Although commercial tools often feature AI-assisted pre-labeling, many open-source tools also support pre-labeling when connected to an external ML model.

| Name | Supported data types | Workflow management | QA | Support for cloud storage | Additional notes |
|---|---|---|---|---|---|
| Label Studio Community Edition | | Yes | No | | |
| CVAT | | Yes | Yes | | |
| Doccano | | Yes | No | | |
| VIA (VGG Image Annotator) | | No | No | No | |

While open-source platforms provide much of the functionality needed for a data labeling project, complex machine learning projects requiring advanced annotation features, automation, and scalability will benefit from the use of a commercial platform. With added security features, technical support, comprehensive pre-labeling functionality (assisted by included ML models), and dashboards for visualizing analytics, a commercial data labeling platform is in most cases well worth the additional cost.

| Name | Supported data types | Workflow management | QA | Support for cloud storage | Additional notes |
|---|---|---|---|---|---|
| Labelbox | | Yes | Yes | | |
| Supervisely | | Yes | Yes | | |
| Amazon SageMaker Ground Truth | | Yes | Yes | | |
| Scale AI Data Engine | | Yes | Yes | | |

If you require features that are not available with existing tools, you may opt to build an in-house data labeling platform, enabling you to customize support for specific data formats and annotation tasks, as well as design custom pre-labeling, review, and QA workflows. However, building and maintaining a platform that is on par with the functionality of a commercial platform is cost prohibitive for most companies.

Ultimately, the choice depends on several factors. If third-party platforms don’t have the features that the project requires, or if the project involves highly sensitive data, a custom-built platform might be the best solution. Some projects may benefit from a hybrid approach, in which core labeling tasks are handled by a commercial platform but custom functionality is developed in-house.

Ensuring Quality and Security in Data Labeling Systems

The data labeling pipeline is a complex system that involves massive amounts of data, several levels of infrastructure, a team of labelers, and an elaborate, multilayered workflow. Bringing these components together into a smoothly running system is not a trivial task. There are challenges that can affect labeling quality, reliability, and efficiency, as well as the ever-present issues of privacy and security.

Improving Accuracy in Labeling

Automation can speed up the labeling process, but overdependence on automated labeling tools can reduce the accuracy of labels. Data labeling tasks typically require contextual awareness, domain expertise, or subjective judgment, which software algorithms cannot yet reliably provide on their own. Providing clear human annotation guidelines and detecting labeling errors are two effective methods for ensuring data labeling quality.

Inaccuracies in the annotation process can be minimized by creating a comprehensive set of guidelines. All potential label classifications should be defined, and the formats of labels specified. The annotation guidelines should include step-by-step instructions with guidance for ambiguity and edge cases. There should also be a variety of example annotations for labelers to follow, covering straightforward data points as well as ambiguous ones.

Having more than one independent annotator label the same data point and comparing their results generally yields more accurate labels. Inter-annotator agreement (IAA) is a key metric used to measure labeling consistency between annotators. For data points with low IAA scores, a review process should be established to reach consensus on a label. Setting a minimum consensus threshold for IAA scores ensures that the ML model only learns from data with a high degree of agreement between labelers.
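Cohen’s kappa is one widely used IAA metric for two annotators: it corrects raw agreement p_o for the agreement p_e expected by chance, as kappa = (p_o − p_e) / (1 − p_e). The sketch below computes it, then applies a per-item consensus threshold like the one described above. Defining per-item agreement as the share of annotators who chose the most common label, and the 0.75 default threshold, are assumptions for illustration; for more than two annotators, metrics such as Fleiss’ kappa or Krippendorff’s alpha are common choices.

```python
from collections import Counter


def cohen_kappa(a, b):
    """Cohen's kappa for two annotators' labels on the same items, same order."""
    n = len(a)
    observed = sum(x == y for x, y in zip(a, b)) / n
    ca, cb = Counter(a), Counter(b)
    expected = sum(ca[k] * cb[k] for k in ca.keys() | cb.keys()) / (n * n)
    # If both annotators used one identical label throughout, kappa is
    # undefined; treat it as perfect agreement by convention.
    return 1.0 if expected == 1 else (observed - expected) / (1 - expected)


def split_by_agreement(votes_per_item, threshold=0.75):
    """Accept the majority label where agreement meets the threshold;
    send everything else to a consensus review."""
    accepted, needs_review = {}, []
    for item, votes in votes_per_item.items():
        label, count = Counter(votes).most_common(1)[0]
        if count / len(votes) >= threshold:
            accepted[item] = label
        else:
            needs_review.append(item)
    return accepted, needs_review
```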

In addition, rigorous error detection and tracking go a long way toward improving annotation accuracy. Error detection can be automated using software tools like Cleanlab, an open-source library that estimates which labels are likely wrong from a trained model’s predicted probabilities. Labeled data can also be checked against predefined rules to detect inconsistencies or outliers. For images, the software might flag overlapping bounding boxes. With text, missing annotations or incorrect label formats can be automatically detected. All errors are highlighted for review by the QA team. Also, many commercial annotation platforms offer AI-assisted error detection, in which potential errors are flagged by an ML model pretrained on annotated data. Flagged and reviewed data points are then added to the model’s training data, improving its accuracy via active learning.
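The rule-based side of error detection can be sketched without any external library. This example is not Cleanlab’s API; it is a hypothetical checker that flags images with no annotations, labels outside an allowed set, and pairs of boxes whose intersection over union (IoU) exceeds a threshold, a common sign of a duplicated box. The 0.8 threshold and the `(label, (x_min, y_min, x_max, y_max))` box layout are assumptions.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def check_image(boxes, allowed_labels, max_iou=0.8):
    """boxes: list of (label, (x_min, y_min, x_max, y_max)).

    Returns human-readable rule violations for the QA team to review."""
    issues = []
    if not boxes:
        issues.append("no annotations")
    for idx, (label, _) in enumerate(boxes):
        if label not in allowed_labels:
            issues.append(f"box {idx}: unknown label {label!r}")
    for i in range(len(boxes)):
        for j in range(i + 1, len(boxes)):
            if iou(boxes[i][1], boxes[j][1]) > max_iou:
                issues.append(f"boxes {i} and {j} overlap (possible duplicate)")
    return issues
```

Rules like these are cheap and predictable, while model-based tools catch subtler mistakes; the two approaches complement each other.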

Error tracking provides the feedback necessary to improve the labeling process through continuous learning. Key metrics, such as label accuracy and consistency between labelers, are tracked. If there are tasks where labelers frequently make mistakes, the underlying causes must be determined. Many commercial data labeling platforms provide built-in dashboards for visualizing labeling history and error distribution. Methods of improving performance can include adjusting data labeling standards and guidelines to clarify ambiguous instructions, retraining labelers, or refining the rules for error detection algorithms.
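A minimal version of this tracking aggregates QA review outcomes by labeler and task type, so the combinations with the most frequent mistakes rise to the top. The `(labeler, task_type, was_correct)` log format and the function name are hypothetical.

```python
from collections import defaultdict


def error_rates(review_log, min_reviewed=1):
    """review_log: iterable of (labeler, task_type, was_correct) from QA review.

    Returns {(labeler, task_type): error rate}, sorted from highest to lowest."""
    totals = defaultdict(lambda: [0, 0])  # key -> [errors, reviewed]
    for labeler, task, ok in review_log:
        stats = totals[(labeler, task)]
        stats[0] += 0 if ok else 1
        stats[1] += 1
    rates = {k: e / n for k, (e, n) in totals.items() if n >= min_reviewed}
    return dict(sorted(rates.items(), key=lambda kv: kv[1], reverse=True))
```

Raising `min_reviewed` avoids overreacting to a labeler whose rate is based on only a handful of reviewed items.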

Addressing Bias and Fairness

Data labeling relies heavily on personal judgment and interpretation, making it a challenge for human annotators to create fair and unbiased labels. Data can be ambiguous. When classifying text data, sentiments such as sarcasm or humor can easily be misinterpreted. A facial expression in an image might be considered “sad” by some labelers and “bored” by others. This subjectivity can open the door to bias.

The dataset itself can also be biased. Depending on the source, specific demographics and viewpoints can be over- or underrepresented. Training a model on biased data can cause inaccurate predictions, for example, incorrect diagnoses due to bias in medical datasets.

To reduce bias in the annotation process, the members of the labeling and QA teams should have diverse backgrounds and perspectives. Double- and multilabeling can also minimize the impact of individual biases. The training data should reflect real-world data, with a balanced representation of factors such as demographics and geographic location. Data can be collected from a wider range of sources, and if necessary, data can be added to specifically address potential sources of bias. In addition, data augmentation techniques, such as image flipping or text paraphrasing, can minimize inherent biases by artificially increasing the diversity of the dataset. These methods present variations on the original data point. Flipping an image enables the model to learn to recognize an object regardless of the direction it is facing, reducing bias toward specific orientations. Paraphrasing text exposes the model to additional ways of expressing the information in the data point, reducing potential biases caused by specific words or phrasing.
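Augmentation has to transform the labels along with the data. This minimal sketch flips an image horizontally, represented here as a list of pixel rows for simplicity (a real pipeline would use an image library), and mirrors each bounding box to match. The `(label, x_min, y_min, x_max, y_max)` box layout with `x_max` as an exclusive pixel edge is an assumption.

```python
def hflip(image, boxes):
    """Horizontally flip an image (list of pixel rows) and its bounding boxes.

    A box covering columns [x_min, x_max) of an image W pixels wide covers
    columns [W - x_max, W - x_min) after the flip; y coordinates are unchanged."""
    width = len(image[0])
    flipped = [list(reversed(row)) for row in image]
    new_boxes = [(label, width - x1, y0, width - x0, y1)
                 for label, x0, y0, x1, y1 in boxes]
    return flipped, new_boxes
```

A flip is only label-preserving when orientation doesn’t change the meaning: mirroring a “left turn” road sign, for example, would produce a mislabeled example.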

Incorporating an external oversight process can also help reduce bias in data labeling. An external team of domain experts, data scientists, ML experts, and diversity and inclusion specialists can be brought in to review labeling guidelines, evaluate the workflow, and audit the labeled data, providing recommendations on how to make the process fair and unbiased.

Data Privacy and Security

Data labeling projects often involve potentially sensitive information. All platforms should integrate security features such as encryption and multifactor authentication for user access control. To protect privacy, personally identifiable information should be removed or anonymized. Additionally, every member of the labeling team should be trained on data security best practices, such as using strong passwords and avoiding accidental data sharing.

Data labeling platforms should also comply with relevant data privacy regulations, including the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA), as well as the Health Insurance Portability and Accountability Act (HIPAA) for projects involving health information. Many commercial data platforms are SOC 2 Type 2 certified, meaning an independent auditor has evaluated their controls over a period of time against the trust services criteria: security, availability, processing integrity, confidentiality, and privacy.

Future-proofing Your Data Labeling System

Data labeling is an invisible but massive undertaking that plays a pivotal role in the development of ML models and AI systems, and labeling architecture must be able to scale as requirements change.

Commercial and open-source platforms are regularly updated to support emerging data labeling needs. Likewise, in-house data labeling solutions should be developed with easy updating in mind. Modular design, for example, enables components to be swapped out without affecting the rest of the system. And integrating open-source libraries or frameworks adds adaptability, because they are continually updated as the industry evolves.
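One way to express modular design in code is to make the pipeline depend on an interface rather than a concrete component. In this sketch, `PreLabeler` is a hypothetical `typing.Protocol`, and `KeywordPreLabeler` is a throwaway rule-based implementation that could later be replaced by an ML-backed class with the same `suggest` method without any change to `LabelingPipeline`. All class names and the confidence values are illustrative assumptions.

```python
from typing import Protocol


class PreLabeler(Protocol):
    """Any component that proposes a label and a confidence for an item."""
    def suggest(self, item: str) -> tuple[str, float]: ...


class KeywordPreLabeler:
    """Rule-based stand-in; swap in a model-backed class with the same method."""
    def __init__(self, keyword: str, label: str, default: str):
        self.keyword, self.label, self.default = keyword, label, default

    def suggest(self, item: str) -> tuple[str, float]:
        if self.keyword in item.lower():
            return self.label, 0.9
        return self.default, 0.5


class LabelingPipeline:
    """Depends only on the PreLabeler interface, not on a concrete class."""
    def __init__(self, pre_labeler: PreLabeler):
        self.pre_labeler = pre_labeler

    def run(self, items: list[str]) -> list[tuple[str, str, float]]:
        return [(item, *self.pre_labeler.suggest(item)) for item in items]


pipeline = LabelingPipeline(KeywordPreLabeler("refund", "billing", "other"))
print(pipeline.run(["Refund please", "Hi"]))
```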

In particular, cloud-based solutions offer significant advantages over self-managed systems for large-scale data labeling projects. Cloud platforms can dynamically scale their storage and processing power as needed, eliminating the need for expensive infrastructure upgrades.

The annotation workforce must also be able to scale as datasets grow. New annotators need to be trained quickly on how to label data accurately and efficiently. Filling the gaps with managed data labeling services or on-demand annotators allows for flexible scaling based on project needs. That said, the training and onboarding process must also be scalable with respect to location, language, and availability.

The key to ML model accuracy is the quality of the labeled data the models are trained on, and effective hybrid data labeling systems give AI the potential to improve the way we do things and make virtually every business more efficient.