Over the past few years, diffusion models have achieved massive success and recognition for image and video generation tasks. Video diffusion models, in particular, have been gaining significant attention due to their ability to produce videos with high coherence as well as fidelity. These models generate high-quality videos by employing an iterative denoising process that gradually transforms high-dimensional Gaussian noise into real data.

Stable Diffusion is one of the most representative models for image generation tasks. It relies on a Variational AutoEncoder (VAE) to map between the real image and the down-sampled latent features, which reduces generation costs, while the cross-attention mechanism in its architecture facilitates text-conditioned image generation. More recently, the Stable Diffusion framework has become the foundation for several plug-and-play adapters that achieve more innovative and effective image or video generation. However, the iterative generative process employed by a majority of video diffusion models makes generation time-consuming and comparatively costly, limiting its applications.

In this article, we will talk about AnimateLCM, a personalized diffusion model with adapters aimed at generating high-fidelity videos with minimal steps and computational costs. The AnimateLCM framework is inspired by the Consistency Model, which accelerates sampling with minimal steps by distilling pre-trained image diffusion models. It also builds on the successful extension of the Consistency Model, the Latent Consistency Model (LCM), which facilitates conditional image generation. Instead of conducting consistency learning directly on the raw video dataset, the AnimateLCM framework proposes a decoupled consistency learning strategy. This strategy decouples the distillation of motion generation priors and image generation priors, allowing the model to enhance the visual quality of the generated content and improve training efficiency simultaneously. Additionally, the AnimateLCM model proposes training adapters from scratch or adapting existing adapters to its distilled video consistency model. This facilitates the combination of plug-and-play adapters in the Stable Diffusion family to achieve different functions without harming the sampling speed.

This article aims to cover the AnimateLCM framework in depth. We explore the mechanism, the methodology, and the architecture of the framework, along with its comparison with state-of-the-art image and video generation frameworks. So, let's get started.

Diffusion models have been the go-to framework for image and video generation tasks owing to their effectiveness and capabilities on generative tasks. A majority of diffusion models rely on an iterative denoising process that gradually transforms high-dimensional Gaussian noise into real data. Although the approach delivers satisfactory results, the iterative process and the number of sampling iterations slow down generation and add to the computational requirements, making diffusion models much slower than other generative frameworks such as Generative Adversarial Networks (GANs). In the past few years, Consistency Models (CMs) have been proposed as an alternative to iterative diffusion models to speed up the generation process while keeping the computational requirements constant.

The highlight of consistency models is that they learn consistency mappings that maintain the self-consistency of trajectories introduced by the pre-trained diffusion models. This learning process allows Consistency Models to generate high-quality images in minimal steps and eliminates the need for computation-intensive iterations. Furthermore, the Latent Consistency Model (LCM), built on top of the Stable Diffusion framework, can be integrated into the web user interface with existing adapters to achieve a host of additional functionalities such as real-time image-to-image translation. In comparison, although existing video diffusion models deliver acceptable results, progress is still to be made in video sampling acceleration, which is of great significance owing to the high computational cost of video generation.
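To make the self-consistency idea concrete, here is a minimal toy sketch. It is not the AnimateLCM setup: we assume 1-D Gaussian data with standard deviation `SIGMA_DATA` and a noising process `x_t = x_0 + t * noise`, for which the probability flow ODE has a closed-form solution. All names (`consistency_fn`, `euler_solve`, `point_on_trajectory`) are hypothetical and chosen for illustration. The sketch compares an iterative solver that takes many small steps with a consistency mapping that jumps to the trajectory origin in one evaluation.

```python
import math

SIGMA_DATA = 1.0   # std of the toy 1-D Gaussian data distribution (assumption)
EPS = 0.002        # small time at which a trajectory is treated as "clean"


def pf_ode_velocity(x, t):
    """dx/dt of the probability flow ODE for data ~ N(0, SIGMA_DATA^2).

    With x_t = x_0 + t * noise, the score is -x / (SIGMA_DATA^2 + t^2),
    so dx/dt = -t * score = t * x / (SIGMA_DATA^2 + t^2).
    """
    return t * x / (SIGMA_DATA ** 2 + t ** 2)


def consistency_fn(x, t):
    """Closed-form map from any point (x, t) on a trajectory to its origin at EPS."""
    return x * math.sqrt((SIGMA_DATA ** 2 + EPS ** 2) / (SIGMA_DATA ** 2 + t ** 2))


def euler_solve(x, t_start, t_end=EPS, steps=2000):
    """Iterative baseline: integrate the ODE backwards with many small Euler steps."""
    h = (t_start - t_end) / steps
    t = t_start
    for _ in range(steps):
        x = x - h * pf_ode_velocity(x, t)
        t -= h
    return x


def point_on_trajectory(x_origin, t):
    """Move a trajectory origin forward in time to noise level t."""
    return x_origin * math.sqrt((SIGMA_DATA ** 2 + t ** 2) / (SIGMA_DATA ** 2 + EPS ** 2))


x_origin = 0.3
points = [(point_on_trajectory(x_origin, t), t) for t in (0.5, 2.0, 10.0)]

# One evaluation per point: every point on the trajectory maps to the same origin.
one_step = [consistency_fn(x, t) for x, t in points]

# Thousands of evaluations to reach (approximately) the same answer.
many_step = euler_solve(*points[-1])
```

In a real consistency model the closed form is unavailable, so a network is trained to behave like `consistency_fn`; the toy only shows what property that network is asked to satisfy.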

That leads us to AnimateLCM, a high-fidelity video generation framework that needs a minimal number of steps for video generation. Following the Latent Consistency Model, the AnimateLCM framework treats the reverse diffusion process as solving a Classifier-Free Guidance (CFG) augmented probability flow, and trains the model to predict the solution of such probability flows directly in the latent space. However, instead of conducting consistency learning directly on raw video data, which requires extensive training and computational resources and often leads to poor quality, the AnimateLCM framework proposes a decoupled consistency learning strategy that decouples the consistency distillation of motion generation priors and image generation priors.

The AnimateLCM framework first conducts consistency distillation to adapt the image base diffusion model into the image consistency model, and then applies 3D inflation to both the image consistency and image diffusion models to accommodate 3D features. Finally, the AnimateLCM framework obtains the video consistency model by conducting consistency distillation on video data. Furthermore, to alleviate potential feature corruption resulting from the diffusion process, the AnimateLCM framework also proposes an initialization strategy. Because the AnimateLCM framework is built on top of the Stable Diffusion framework, it can replace the spatial weights of its trained video consistency model with publicly available personalized image diffusion weights to achieve innovative generation results.

Additionally, to train specific adapters from scratch or to better fit publicly available adapters, the AnimateLCM framework proposes an effective acceleration strategy for adapters that does not require training specific teacher models.

The contributions of the AnimateLCM framework can be summarized as follows: the framework aims to achieve high-quality, fast, and high-fidelity video generation, and to achieve this, it proposes a decoupled distillation strategy that decouples the motion and image generation priors, resulting in better generation quality and enhanced training efficiency.

AnimateLCM: Method and Architecture

At its core, the AnimateLCM framework draws heavy inspiration from diffusion models and sampling acceleration strategies. Diffusion models, also known as score-based generative models, have demonstrated remarkable image generation capabilities. Under the guidance of the score direction, the iterative sampling strategy implemented by diffusion models gradually denoises the noise-corrupted data. The effectiveness of diffusion models is one of the major reasons why a majority of video diffusion models build on them by training added temporal layers. On the other hand, sampling acceleration strategies help tackle the slow generation speeds of diffusion models. Distillation-based acceleration methods tune the original diffusion weights with a refined architecture or scheduler to enhance the generation speed.

Moving along, the AnimateLCM framework is built on top of the Stable Diffusion model, which allows it to apply relevant notions. The model treats the discrete forward diffusion process as a continuous-time Variance Preserving SDE. Furthermore, the Stable Diffusion model is an extension of the Denoising Diffusion Probabilistic Model (DDPM), in which the training data point is gradually perturbed by a discrete Markov chain with a perturbation kernel, so that the distribution of noisy data at each time step follows a prescribed distribution.
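The perturbation described above can be sketched in a few lines. This is a minimal illustration that assumes the linear beta schedule from the original DDPM setup (1000 steps, betas from 1e-4 to 0.02); Stable Diffusion uses its own schedule, so the numbers here are placeholders, and the helper names are hypothetical. Under this kernel, the noisy sample at step `t` is drawn from a Gaussian with mean `sqrt(alpha_bar_t) * x0` and variance `1 - alpha_bar_t`.

```python
import math
import random


def linear_beta_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Linearly spaced per-step noise variances (DDPM-style, assumed here)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t = product over s <= t of (1 - beta_s)."""
    alpha_bar, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bar.append(running)
    return alpha_bar


def perturb(x0, t, alpha_bar, rng):
    """Sample x_t ~ N(sqrt(alpha_bar_t) * x0, (1 - alpha_bar_t) * I) in one shot."""
    signal = math.sqrt(alpha_bar[t])
    noise_scale = math.sqrt(1.0 - alpha_bar[t])
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [signal * x + noise_scale * n for x, n in zip(x0, noise)]
    return x_t, noise


alpha_bar = cumulative_alphas(linear_beta_schedule())
rng = random.Random(0)
x0 = [1.0, -0.5, 0.25]                     # a made-up "clean" latent
x_early, _ = perturb(x0, 10, alpha_bar, rng)    # still close to x0
x_late, _ = perturb(x0, 999, alpha_bar, rng)    # almost pure Gaussian noise
```

Because the squared signal and noise scales always sum to one, the overall variance stays bounded as `t` grows, which is what "variance preserving" refers to.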

To achieve high-fidelity video generation with a minimal number of steps, the AnimateLCM framework tames Stable Diffusion-based video models to follow the self-consistency property. The overall training structure of the AnimateLCM framework consists of a decoupled consistency learning strategy for effective consistency learning, together with teacher-free adaptation.

Transition from Diffusion Models to Consistency Models

The AnimateLCM framework introduces its own adaptation of the Stable Diffusion Model (DM) to the Consistency Model (CM), following the design of the Latent Consistency Model (LCM). It is worth noting that although Stable Diffusion models typically predict the noise added to the samples, they are essentially sigma-diffusion models. This is in contrast with consistency models, which aim to predict the solution to the probability flow ODE (PF-ODE) trajectory directly. Furthermore, in Stable Diffusion models with certain parameters, it is essential for the model to employ a classifier-free guidance strategy to generate high-quality images. The AnimateLCM framework, however, employs a classifier-free guidance augmented ODE solver to sample adjacent pairs on the same trajectories, resulting in better efficiency and enhanced quality. Moreover, recent work has indicated that generation quality and training efficiency are heavily influenced by the number of discrete points in the trajectory: a smaller number of discrete points accelerates training, while a higher number results in less bias during training.
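A rough sketch of what a guidance-augmented solver step looks like is given below. It assumes the common guidance convention `eps_uncond + w * (eps_cond - eps_uncond)` and the deterministic DDIM update; the exact guidance form, timestep spacing, and function names (`guided_noise`, `ddim_step`, `skipped_timesteps`) are illustrative assumptions rather than the framework's actual code. Given a noisy latent at step `t`, the solver produces the adjacent point at an earlier step `s` on the same trajectory, and the pair `(x_t, x_s)` is what consistency distillation trains on.

```python
import math


def guided_noise(eps_uncond, eps_cond, w):
    """Classifier-free guidance: push the prediction toward the conditional branch."""
    return [u + w * (c - u) for u, c in zip(eps_uncond, eps_cond)]


def ddim_step(x_t, eps, alpha_bar_t, alpha_bar_s):
    """Deterministic DDIM update from noise level t to an earlier level s."""
    sqrt_at, sqrt_one_minus_at = math.sqrt(alpha_bar_t), math.sqrt(1.0 - alpha_bar_t)
    sqrt_as, sqrt_one_minus_as = math.sqrt(alpha_bar_s), math.sqrt(1.0 - alpha_bar_s)
    # Estimate the clean sample implied by the noise prediction, then re-noise to s.
    x0_pred = [(x - sqrt_one_minus_at * e) / sqrt_at for x, e in zip(x_t, eps)]
    return [sqrt_as * x0 + sqrt_one_minus_as * e for x0, e in zip(x0_pred, eps)]


def skipped_timesteps(num_train_steps, skip):
    """Discrete trajectory points kept when only every `skip`-th timestep is used."""
    return list(range(skip - 1, num_train_steps, skip))


# Made-up clean latent and noise; in practice eps comes from the teacher network.
x0 = [1.0, -2.0]
true_eps = [0.3, -0.7]
alpha_bar_t, alpha_bar_s = 0.5, 0.8       # s is the less noisy, adjacent point

x_t = [math.sqrt(alpha_bar_t) * x + math.sqrt(1.0 - alpha_bar_t) * e
       for x, e in zip(x0, true_eps)]
eps = guided_noise(true_eps, true_eps, w=7.5)   # identical branches: guidance is a no-op
x_s = ddim_step(x_t, eps, alpha_bar_t, alpha_bar_s)

timesteps = skipped_timesteps(1000, skip=20)    # fewer points: faster, but more bias
```

The `skip` argument illustrates the trade-off mentioned above: a larger value leaves fewer discrete points on the trajectory, which speeds up training, while a smaller value keeps more points and reduces bias.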

Decoupled Consistency Learning

For consistency distillation, developers have observed that the data used for training heavily influences the final generation quality of the consistency models. However, the major issue with publicly available datasets at present is that they often contain watermarked data, are of low quality, and may contain overly brief or ambiguous captions. Furthermore, training the model directly on high-resolution videos is computationally expensive and time-consuming, making it infeasible for a majority of researchers.

Given the availability of filtered high-quality datasets, the AnimateLCM framework proposes to decouple the distillation of the motion priors and image generation priors. To be more specific, the AnimateLCM framework first distills the Stable Diffusion models into image consistency models using filtered high-quality image-text datasets with higher resolution. The framework trains light LoRA weights on the layers of the Stable Diffusion model while keeping the original Stable Diffusion weights frozen. Once tuned, the LoRA weights work as a versatile acceleration module, and they have demonstrated compatibility with other personalized models in the Stable Diffusion community. For inference, the AnimateLCM framework merges the LoRA weights with the original weights without compromising inference speed.

After the AnimateLCM framework obtains the consistency model at the level of image generation, it freezes the Stable Diffusion weights and the LoRA weights on top of them. Furthermore, the model inflates the 2D convolution kernels to pseudo-3D kernels to train the consistency models for video generation. The model also adds temporal layers with zero initialization and a block-level residual connection. This setup ensures that the output of the model is not affected at the start of training. Under the guidance of open-sourced video diffusion models, the AnimateLCM framework then trains the temporal layers extended from the Stable Diffusion models.
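Two of the mechanics in this section are easy to show on tiny matrices: merging a LoRA update into a frozen weight, and wrapping a zero-initialized temporal layer in a residual connection. The sketch below uses plain Python lists and made-up numbers; the function names, the `scale` parameter, and the frame-mixing form of the temporal layer are assumptions for illustration, not the framework's actual layers.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merge_lora(weight, lora_down, lora_up, scale=1.0):
    """Fold a LoRA update into a frozen weight: W + scale * (up @ down).

    Shapes: weight is (out x in), lora_up is (out x r), lora_down is (r x in).
    """
    delta = matmul(lora_up, lora_down)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(weight, delta)]


def linear(weight, x):
    """Apply y = W x to a single feature vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weight]


def temporal_block(frames, mixing):
    """Block-level residual around a temporal layer that mixes across frames.

    frames: list of T per-frame feature vectors; mixing: (T x T) temporal weights.
    """
    mixed = matmul(mixing, frames)
    return [[f + m for f, m in zip(f_row, m_row)]
            for f_row, m_row in zip(frames, mixed)]


# Frozen base weight plus a rank-1 LoRA update (all values are made up).
base_weight = [[1.0, 0.0], [0.0, 1.0]]
lora_down = [[1.0, 1.0]]         # r x in
lora_up = [[0.5], [-0.5]]        # out x r
merged_weight = merge_lora(base_weight, lora_down, lora_up)

x = [2.0, 1.0]
merged_out = linear(merged_weight, x)   # one matmul at inference, no extra LoRA pass

# Zero-initialized temporal weights leave every frame untouched.
frames = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
zero_mixing = [[0.0] * len(frames) for _ in frames]
inflated_out = temporal_block(frames, zero_mixing)
```

Merging means the LoRA adds no extra computation at inference time, and the zero-initialized residual branch means the inflated video model initially reproduces the per-frame behavior of the image model before the temporal layers are trained.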

It is important to recognize that while spatial LoRA weights are designed to expedite the sampling process without taking temporal modeling into account, and temporal modules are developed through standard diffusion techniques, their direct integration tends to corrupt the representation at the onset of training. This presents significant challenges in merging them effectively and efficiently with minimal conflict. Through empirical research, the AnimateLCM framework has identified a successful initialization approach that not only makes use of the consistency priors from the spatial LoRA weights but also mitigates the adverse effects of their direct combination.

At the onset of consistency training, the pre-trained spatial LoRA weights are integrated only into the online consistency model, sparing the target consistency model from insertion. This strategy ensures that the target model, which serves as the instructional guide for the online model, does not generate faulty predictions that could detrimentally affect the online model's learning process. Throughout the training period, the LoRA weights are progressively incorporated into the target consistency model via an exponential moving average (EMA) process, reaching the optimal weight balance after several iterations.
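The effect of this initialization can be sketched with a toy parameter vector. We assume here that the target model starts from the base weights alone, the online model starts from the base weights plus the LoRA contribution, and the target is updated by a standard EMA rule with an illustrative decay of 0.95; the decay value, the numbers, and the `ema_update` helper are assumptions, not values from the paper.

```python
def ema_update(target, online, decay):
    """target <- decay * target + (1 - decay) * online, element-wise."""
    return [decay * t + (1.0 - decay) * o for t, o in zip(target, online)]


base = [0.2, -0.1, 0.4]             # shared pre-trained weights (made up)
lora_delta = [0.05, 0.03, -0.02]    # effect of the spatial LoRA (made up)

online = [b + d for b, d in zip(base, lora_delta)]   # LoRA inserted from step 0
target = list(base)                                   # LoRA withheld at the start

gaps = []
for _ in range(100):
    # In real training the online weights also change through gradient steps;
    # they are held fixed here to isolate the EMA behavior.
    target = ema_update(target, online, decay=0.95)
    gaps.append(max(abs(o - t) for o, t in zip(online, target)))
```

The gap between the two models shrinks geometrically, so the target absorbs the LoRA weights gradually rather than all at once, which is the behavior the initialization strategy relies on.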

Teacher-Free Adaptation

Stable Diffusion models and plug-and-play adapters often go hand in hand. However, it has been observed that although plug-and-play adapters work to some extent, they tend to lose control over details, even when a majority of these adapters are trained with image diffusion models. To counter this issue, the AnimateLCM framework opts for teacher-free adaptation, a simple yet effective strategy that either adapts existing adapters for better compatibility or trains adapters from scratch. The approach allows the AnimateLCM framework to achieve controllable video generation and image-to-video generation with a minimal number of steps and without requiring teacher models.

AnimateLCM: Experiments and Results

The AnimateLCM framework employs Stable Diffusion v1-5 as the base model and uses the DDIM ODE solver for training. The framework also uses Stable Diffusion v1-5 with open-sourced motion weights as the teacher video diffusion model, with experiments conducted on the WebVid2M dataset without any additional or augmented data. Furthermore, the framework employs the TikTok dataset with BLIP-captioned brief textual prompts for controllable video generation.

Qualitative Results

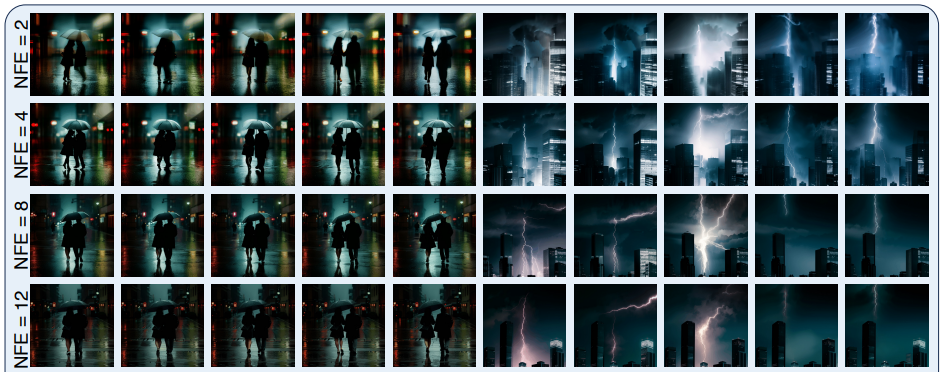

The figure above demonstrates the results of the four-step generation method implemented by the AnimateLCM framework in text-to-video generation, image-to-video generation, and controllable video generation.

As can be observed, the results in each setting are satisfactory, and they demonstrate the ability of the AnimateLCM framework to follow the consistency property even with varying inference steps, maintaining similar motion and style.

Quantitative Results

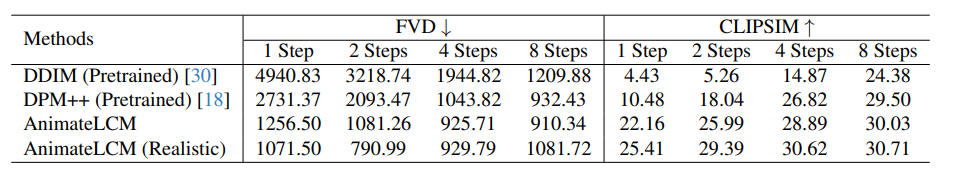

The figure above illustrates the quantitative results and a comparison of the AnimateLCM framework with the state-of-the-art DDIM and DPM++ methods.

As can be observed, the AnimateLCM framework outperforms the existing methods by a significant margin, especially in the low-step regime ranging from 1 to 4 steps. Furthermore, the AnimateLCM metrics shown in this comparison are evaluated without using classifier-free guidance (CFG), which allows the framework to save nearly 50% of the inference time and peak inference memory cost. Additionally, to further validate its performance, the spatial weights within the AnimateLCM framework are replaced with a publicly available personalized realistic model that strikes a good balance between fidelity and diversity, which helps boost performance further.

Final Thoughts

In this article, we have talked about AnimateLCM, a personalized diffusion model with adapters that aims to generate high-fidelity videos with minimal steps and computational costs. The AnimateLCM framework is inspired by the Consistency Model, which accelerates sampling with minimal steps by distilling pre-trained image diffusion models, and by its successful extension, the Latent Consistency Model (LCM), which facilitates conditional image generation. Instead of conducting consistency learning directly on the raw video dataset, the AnimateLCM framework proposes a decoupled consistency learning strategy that decouples the distillation of motion generation priors and image generation priors, allowing the model to enhance the visual quality of the generated content and improve training efficiency simultaneously.