In our previous blog, we explored the emerging practice of large language model operations (LLMOps) and the nuances that set it apart from traditional machine learning operations (MLOps). We discussed the challenges of scaling large language model-powered applications and how Microsoft Azure AI uniquely helps organizations manage this complexity. We touched on the importance of considering the development journey as an iterative process to achieve a quality application.

In this blog, we’ll explore these concepts in more detail. The enterprise development process requires collaboration, diligent evaluation, risk management, and scaled deployment. By providing a robust suite of capabilities supporting these challenges, Azure AI offers a clear and efficient path to generating value in your products for your customers.

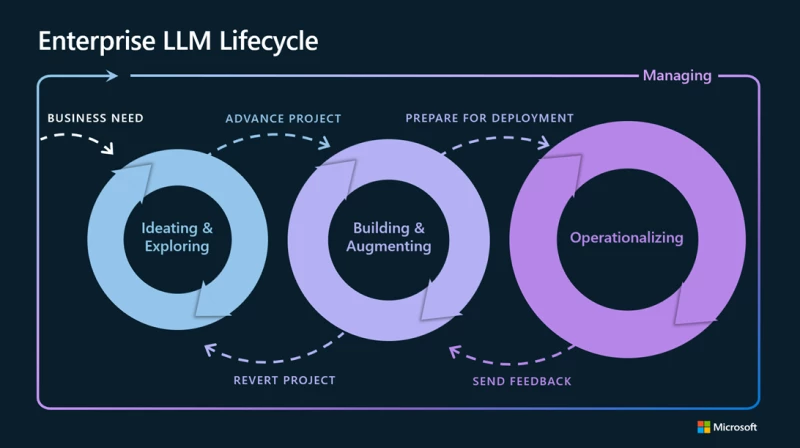

Enterprise LLM Lifecycle

Ideating and exploring loop

The first loop typically involves a single developer searching a model catalog for large language models (LLMs) that align with their specific business requirements. Working with a subset of data and prompts, the developer will try to understand the capabilities and limitations of each model through prototyping and evaluation. Developers usually explore altering prompts to the models, different chunking sizes and vector indexing methods, and basic interactions while trying to validate or refute business hypotheses. For instance, in a customer support scenario, they might input sample customer queries to see if the model generates appropriate and helpful responses. They can validate this first by typing in examples, but quickly move to bulk testing with files and automated metrics.
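As a rough illustration of that move from hand-typed examples to bulk testing, the sketch below scores a small file of sample queries with a simple automated metric. Everything in it is an assumption made for illustration: the JSONL layout, the `stub_model` stand-in for a real LLM call, and the keyword-coverage metric are hypothetical and are not part of Azure AI.

```python
import json

# Hypothetical test file content: one JSON object per line (JSONL).
SAMPLE_JSONL = """\
{"query": "How do I reset my password?", "expected_keywords": ["reset", "password"]}
{"query": "Where is my invoice?", "expected_keywords": ["invoice", "billing"]}
"""


def stub_model(query: str) -> str:
    """Stand-in for a real LLM call; swap in your own model client."""
    return f"To help with '{query}', open Settings and follow the reset password steps."


def keyword_coverage(response: str, keywords: list[str]) -> float:
    """Fraction of expected keywords found in the response (case-insensitive)."""
    text = response.lower()
    hits = sum(1 for kw in keywords if kw.lower() in text)
    return hits / len(keywords) if keywords else 1.0


def run_bulk_test(jsonl_text: str, model=stub_model) -> list[dict]:
    """Run every test case through the model and record its score."""
    results = []
    for line in jsonl_text.splitlines():
        if not line.strip():
            continue  # skip blank lines in the file
        case = json.loads(line)
        response = model(case["query"])
        score = keyword_coverage(response, case["expected_keywords"])
        results.append({"query": case["query"], "score": score})
    return results


if __name__ == "__main__":
    for row in run_bulk_test(SAMPLE_JSONL):
        print(row)
```

A keyword check is deliberately crude. In practice, teams would likely replace it with richer metrics once the test harness itself is in place.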

Beyond Azure OpenAI Service, Azure AI offers a comprehensive model catalog, which empowers users to discover, customize, evaluate, and deploy foundation models from leading providers such as Hugging Face, Meta, and OpenAI. This helps developers find and select optimal foundation models for their specific use case. Developers can quickly test and evaluate models using their own data to see how the pre-trained model would perform for their desired scenarios.

Building and augmenting loop

Once a developer discovers and evaluates the core capabilities of their preferred LLM, they advance to the next loop, which focuses on guiding and enhancing the LLM to better meet their specific needs. Traditionally, a base model is trained with point-in-time data. However, often the scenario requires either enterprise-local data, real-time data, or more fundamental alterations.

For reasoning on enterprise data, Retrieval Augmented Generation (RAG) is preferred, which injects information from internal data sources into the prompt based on the specific user request. Common sources are document search systems, structured databases, and non-SQL stores. With RAG, a developer can “ground” their solution using the capabilities of their LLMs to process and generate responses based on this injected data. This helps developers achieve customized solutions while maintaining relevance and optimizing costs. RAG also facilitates continuous data updates without the need for fine-tuning as the data comes from other sources.
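To make the RAG idea concrete, here is a minimal sketch of the retrieve-then-inject step. It assumes a tiny in-memory document list and ranks documents by simple word overlap. A production system would more likely use a vector index or a search service, and the names `DOCUMENTS`, `retrieve`, and `build_grounded_prompt` are hypothetical.

```python
import re

# Hypothetical internal knowledge snippets standing in for an enterprise data source.
DOCUMENTS = [
    "Refunds are processed within 5 business days of approval.",
    "Premium support is available 24/7 by phone and chat.",
    "Passwords must be rotated every 90 days.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Rank documents by word overlap with the query (a stand-in for vector search)."""
    query_words = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_grounded_prompt(query: str, documents: list[str], top_k: int = 1) -> str:
    """Inject the retrieved snippets into the prompt sent to the LLM."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, top_k))
    return (
        "Answer using only the context below. "
        "If the answer is not in the context, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    print(build_grounded_prompt("How long do refunds take?", DOCUMENTS))
```

Because the context is assembled at request time, updating the underlying documents changes the answers without retraining the model.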

During this loop, the developer may find cases where the output accuracy doesn’t meet desired thresholds. Another method to alter the outcome of an LLM is fine-tuning. Fine-tuning helps most when the nature of the system needs to be altered. Generally, the LLM will answer any prompt in a similar tone and format. But if, for example, the use case requires code output, JSON, or any such modification, there may be a consistent change or restriction in the output, where fine-tuning can be employed to better align the system’s responses with the specific requirements of the task at hand. By adjusting the parameters of the LLM during fine-tuning, the developer can significantly improve the output accuracy and relevance, making the system more useful and efficient for the intended use case.

It is also feasible to combine prompt engineering, RAG augmentation, and a fine-tuned LLM. Since fine-tuning necessitates additional data, most users start with prompt engineering and modifications to data retrieval before proceeding to fine-tune the model.

Most importantly, continuous evaluation is an essential element of this loop. During this phase, developers assess the quality and overall groundedness of their LLMs. The end goal is to facilitate safe, responsible, and data-driven insights to inform decision-making while ensuring the AI solutions are primed for production.

Azure AI prompt flow is a pivotal component in this loop. Prompt flow helps teams streamline the development and evaluation of LLM applications by providing tools for systematic experimentation and a rich array of built-in templates and metrics. This ensures a structured and informed approach to LLM refinement. Developers can also effortlessly integrate with frameworks like LangChain or Semantic Kernel, tailoring their LLM flows based on their business requirements. The addition of reusable Python tools enhances data processing capabilities, while simplified and secure connections to APIs and external data sources afford flexible augmentation of the solution. Developers can also use multiple LLMs as part of their workflow, applied dynamically or conditionally to work on specific tasks and manage costs.
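The idea of applying multiple LLMs conditionally to manage costs can be sketched as a small routing function. This is only an illustration under stated assumptions: the model names, the prices, the task labels, and the 200-word threshold are all hypothetical, and it does not use the prompt flow API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str                  # hypothetical deployment name
    cost_per_1k_tokens: float  # illustrative price, not a real rate


SMALL = ModelOption("small-model", 0.5)
LARGE = ModelOption("large-model", 10.0)

# Task types assumed simple enough for the cheaper model.
SIMPLE_TASKS = {"classify", "extract"}


def choose_model(task: str, prompt: str, max_simple_words: int = 200) -> ModelOption:
    """Send short, simple tasks to the cheaper model and everything else to the larger one."""
    if task in SIMPLE_TASKS and len(prompt.split()) < max_simple_words:
        return SMALL
    return LARGE


if __name__ == "__main__":
    print(choose_model("classify", "Is this ticket about billing?").name)
    print(choose_model("summarize", "Summarize the attached contract.").name)
```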

With Azure AI, evaluating the effectiveness of different development approaches becomes straightforward. Developers can easily craft and compare the performance of prompt variants against sample data, using insightful metrics such as groundedness, fluency, and coherence. In essence, throughout this loop, prompt flow is the linchpin, bridging the gap between innovative ideas and tangible AI solutions.

Operationalizing loop

The third loop captures the transition of LLMs from development to production. This loop primarily involves deployment, monitoring, incorporating content safety systems, and integrating with CI/CD (continuous integration and continuous deployment) processes. This stage of the process is often managed by production engineers who have existing processes for application deployment. Central to this stage is collaboration, facilitating a smooth handoff of assets between application developers and data scientists building on the LLMs, and production engineers tasked with deploying them.

Deployment allows for a seamless transfer of LLMs and prompt flows to endpoints for inference without the need for a complex infrastructure setup. Monitoring helps teams track and optimize their LLM application’s safety and quality in production. Content safety systems help detect and mitigate misuse and unwanted content, both on the ingress and egress of the application. Combined, these systems fortify the application against potential risks, improving alignment with risk, governance, and compliance standards.
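The ingress and egress checks described above can be pictured as a thin wrapper around the model call. The sketch below is a simplified assumption: the `is_flagged` blocklist is a hypothetical placeholder for a real content safety service, and the refusal messages are invented for illustration.

```python
from typing import Callable

# Illustrative blocklist only; a real system would call a content safety service.
BLOCKED_TERMS = {"credit card number", "social security number"}


def is_flagged(text: str) -> bool:
    """Return True if the text contains any blocked term (case-insensitive)."""
    lowered = text.lower()
    return any(term in lowered for term in BLOCKED_TERMS)


def guarded_call(user_input: str, model: Callable[[str], str]) -> str:
    """Check content on the way in (ingress) and on the way out (egress)."""
    if is_flagged(user_input):   # ingress check: never reaches the model
        return "Request blocked by input filter."
    output = model(user_input)
    if is_flagged(output):       # egress check: model output is withheld
        return "Response withheld by output filter."
    return output


if __name__ == "__main__":
    echo_model = lambda text: f"You said: {text}"  # stand-in for an LLM call
    print(guarded_call("What are your opening hours?", echo_model))
    print(guarded_call("Tell me a credit card number", echo_model))
```

Checking both directions matters because a harmless-looking request can still produce unwanted output.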

Unlike traditional machine learning models that might classify content, LLMs fundamentally generate content. This content often powers end-user-facing experiences like chatbots, with the integration often falling on developers who may not have experience managing probabilistic models. LLM-based applications often incorporate agents and plugins to enhance the capabilities of models to trigger some actions, which could also amplify the risk. These factors, combined with the inherent variability of LLM outputs, show why risk management is critical in LLMOps.

Azure AI prompt flow ensures a smooth deployment process to managed online endpoints in Azure Machine Learning. Because prompt flows are well-defined files that adhere to published schemas, they are easily incorporated into existing productization pipelines. Upon deployment, Azure Machine Learning invokes the model data collector, which autonomously gathers production data. That way, monitoring capabilities in Azure AI can provide a granular understanding of resource utilization, ensuring optimal performance and cost-effectiveness through token usage and cost monitoring. More importantly, customers can monitor their generative AI applications for quality and safety in production, using scheduled drift detection with either built-in or customer-defined metrics. Developers can also use Azure AI Content Safety to detect and mitigate harmful content or use the built-in content safety filters provided with Azure OpenAI Service models. Together, these systems provide greater control, quality, and transparency, delivering AI solutions that are safer, more efficient, and more easily meet the organization’s compliance standards.
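Two of the monitoring ideas above, token-based cost tracking and drift detection on a quality metric, reduce to simple arithmetic. The following sketch shows one possible shape for each. The record layout, the prices, and the `max_drop` threshold are assumptions for illustration and do not reflect how Azure AI computes these values.

```python
from statistics import mean


def total_cost(
    records: list[dict],
    price_per_1k_prompt: float,
    price_per_1k_completion: float,
) -> float:
    """Sum prompt and completion tokens across requests and price them per 1,000 tokens."""
    prompt_tokens = sum(r["prompt_tokens"] for r in records)
    completion_tokens = sum(r["completion_tokens"] for r in records)
    return (
        (prompt_tokens / 1000) * price_per_1k_prompt
        + (completion_tokens / 1000) * price_per_1k_completion
    )


def has_drifted(
    baseline_scores: list[float],
    current_scores: list[float],
    max_drop: float = 0.1,
) -> bool:
    """Flag drift when the mean quality score falls more than max_drop below the baseline."""
    return mean(baseline_scores) - mean(current_scores) > max_drop


if __name__ == "__main__":
    usage = [
        {"prompt_tokens": 1000, "completion_tokens": 500},
        {"prompt_tokens": 3000, "completion_tokens": 1500},
    ]
    # Prices here are made-up placeholders, not real rates.
    print(total_cost(usage, price_per_1k_prompt=1.0, price_per_1k_completion=2.0))
    print(has_drifted([0.9, 0.9], [0.7, 0.7]))
```

A scheduled job could run a check like `has_drifted` over each day's sampled scores and alert when it returns `True`.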

Azure AI also helps to foster closer collaboration among diverse roles by facilitating the seamless sharing of assets like models, prompts, data, and experiment results using registries. Assets crafted in one workspace can be effortlessly discovered in another, ensuring a fluid handoff of LLMs and prompts. This not only enables a smoother development process but also preserves the lineage across both development and production environments. This integrated approach ensures that LLM applications are not only effective and insightful but also deeply ingrained within the business fabric, delivering unmatched value.

Managing loop

The final loop in the Enterprise LLM Lifecycle process lays down a structured framework for ongoing governance, management, and security. AI governance can help organizations accelerate their AI adoption and innovation by providing clear and consistent guidelines, processes, and standards for their AI projects.

Azure AI provides built-in AI governance capabilities for privacy, security, compliance, and responsible AI, as well as extensive connectors and integrations to simplify AI governance across your data estate. For example, administrators can set policies to allow or enforce specific security configurations, such as whether your Azure Machine Learning workspace uses a private endpoint. Or, organizations can integrate Azure Machine Learning workspaces with Microsoft Purview to publish metadata on AI assets automatically to the Purview Data Map for easier lineage tracking. This helps risk and compliance professionals understand what data is used to train AI models, how base models are fine-tuned or extended, and where models are used across different production applications. This information is crucial for supporting responsible AI practices and providing evidence for compliance reports and audits.

Whether building generative AI applications with open-source models, Azure’s managed OpenAI models, or your own pre-trained custom models, Azure AI facilitates safe, secure, and reliable AI solutions with greater ease through purpose-built, scalable infrastructure.

Explore the harmonized journey of LLMOps at Microsoft Ignite

As organizations delve deeper into LLMOps to streamline processes, one truth becomes abundantly clear: the journey is multifaceted and requires a diverse range of skills. While tools and technologies like Azure AI prompt flow play a crucial role, the human element, along with diverse expertise, is indispensable. It’s the harmonious collaboration of cross-functional teams that creates real magic. Together, they ensure the transformation of a promising idea into a proof of concept and then a game-changing LLM application.

As we approach our annual Microsoft Ignite conference this month, we will continue to post updates to our product line. Join us for more groundbreaking announcements and demonstrations and stay tuned for our next blog in this series.