Assuring data system safety requires not simply stopping system compromises but additionally discovering adversaries already current within the community earlier than they’ll assault from the inside. Defensive laptop operations personnel have discovered the strategy of cyber risk looking a vital software for figuring out such threats. Nonetheless, the time, expense, and experience required for cyber risk looking typically inhibit using this method. What’s wanted is an autonomous cyber risk looking software that may run extra pervasively, obtain requirements of protection presently thought-about impractical, and considerably cut back competitors for restricted time, {dollars}, and naturally analyst sources. On this SEI weblog submit, I describe early work we’ve undertaken to use recreation idea to the event of algorithms appropriate for informing a totally autonomous risk looking functionality. As a place to begin, we’re growing what we consult with as chain video games, a set of video games through which risk looking methods could be evaluated and refined.

What’s Risk Searching?

The idea of risk looking has been round for fairly a while. In his seminal cybersecurity work, The Cuckoo’s Egg, Clifford Stoll described a risk hunt he performed in 1986. Nonetheless, risk looking as a proper observe in safety operations facilities is a comparatively latest improvement. It emerged as organizations started to understand how risk looking enhances two different widespread safety actions: intrusion detection and incident response.

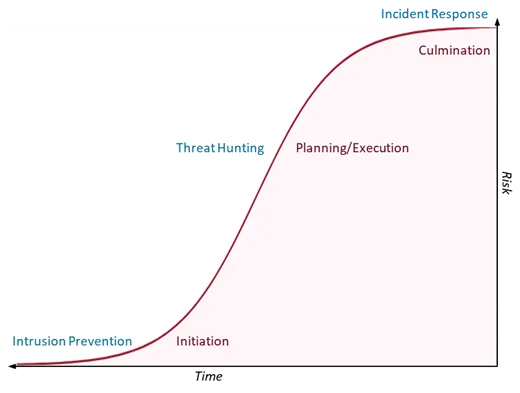

Intrusion detection tries to maintain attackers from stepping into the community and initiating an assault, whereas incident response seeks to mitigate injury achieved by an attacker after their assault has culminated. Risk looking addresses the hole within the assault lifecycle through which an attacker has evaded preliminary detection and is planning or launching the preliminary levels of execution of their plan (see Determine 1). These attackers can do important injury, however the threat hasn’t been absolutely realized but by the sufferer group. Risk looking gives the defender one other alternative to search out and neutralize assaults earlier than that threat can materialize.

Determine 1: Risk Searching Addresses a Essential Hole within the Assault Lifecycle

Risk looking, nevertheless, requires quite a lot of time and experience. Particular person hunts can take days or even weeks, requiring hunt employees to make powerful choices about which datasets and methods to research and which to disregard. Each dataset they don’t examine is one that might include proof of compromise.

The Imaginative and prescient: Autonomous Risk Searching

Quicker and larger-scale hunts may cowl extra information, higher detect proof of compromise, and alert defenders earlier than the injury is finished. These supercharged hunts may serve a reconnaissance perform, giving human risk hunters data they’ll use to raised direct their consideration. To attain this pace and financial system of scale, nevertheless, requires automation. In reality, we imagine it requires autonomy—the flexibility for automated processes to predicate, conduct, and conclude a risk hunt with out human intervention.

Human-driven risk looking is practiced all through the DoD, however normally opportunistically when different actions, comparable to real-time evaluation, allow. The expense of conducting risk hunt operations sometimes precludes thorough and complete investigation of the world of regard. By not competing with real-time evaluation or different actions for investigator effort, autonomous risk looking may very well be run extra pervasively and held to requirements of protection presently thought-about impractical.

At this early stage in our analysis on autonomous risk looking, we’re centered within the short-term on quantitative analysis, fast strategic improvement, and capturing the adversarial high quality of the risk looking exercise.

Modeling the Downside with Cyber Camouflage Video games

At current, we stay a great distance from our imaginative and prescient of a totally autonomous risk looking functionality that may examine cybersecurity information at a scale approaching the one at which this information is created. To start out down this path, we should be capable to mannequin the issue in an summary approach that we (and a future automated hunt system) can analyze. To take action, we wanted to construct an summary framework through which we may quickly prototype and take a look at risk looking methods, probably even programmatically utilizing instruments like machine studying. We believed a profitable method would mirror the concept risk looking includes each the attackers (who want to disguise in a community) and defenders (who wish to discover and evict them). These concepts led us to recreation idea.

We started by conducting a literature evaluate of latest work in recreation idea to determine researchers already working in cybersecurity, ideally in methods we may instantly adapt to our goal. Our evaluate did certainly uncover latest work within the space of adversarial deception that we thought we may construct on. Considerably to our shock, this physique of labor centered on how defenders may use deception, somewhat than attackers. In 2018, for instance, a class of video games was developed referred to as cyber deception video games. These video games, contextualized by way of the Cyber Kill Chain, sought to research the effectiveness of deception in irritating attacker reconnaissance. Furthermore, the cyber deception video games had been zero-sum video games, that means that the utility of the attacker and the defender stability out. We additionally discovered work on cyber camouflage video games, that are just like cyber deception video games, however are general-sum video games, that means the attacker and defender utility are not immediately associated and might range independently.

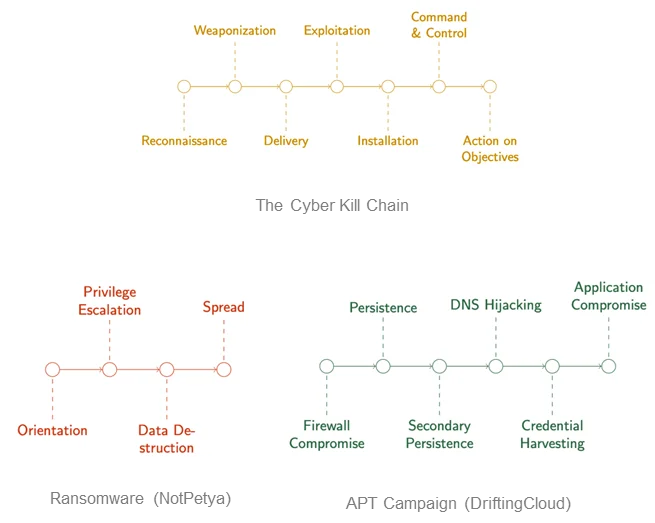

Seeing recreation idea utilized to actual cybersecurity issues made us assured we may apply it to risk looking. Essentially the most influential a part of this work on our analysis considerations the Cyber Kill Chain. Kill chains are an idea derived from kinetic warfare, and they’re normally utilized in operational cybersecurity as a communication and categorization software. Kill chains are sometimes used to interrupt down patterns of assault, comparable to in ransomware and different malware. A greater approach to think about these chains is as assault chains, as a result of they’re getting used for assault characterization.

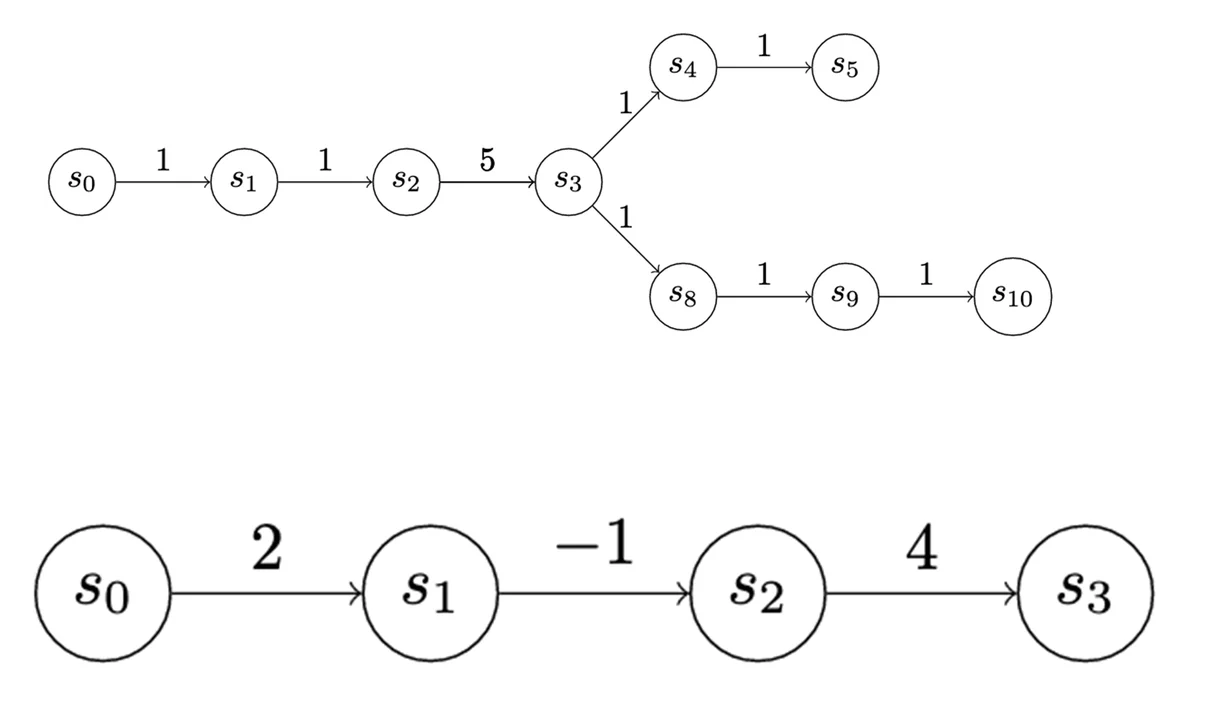

Elsewhere in cybersecurity, evaluation is finished utilizing assault graphs, which map all of the paths by which a system is likely to be compromised (see Determine 2). You may consider this sort of graph as a composition of particular person assault chains. Consequently, whereas the work on cyber deception video games primarily used references to the Cyber Kill Chain to contextualize the work, it struck us as a robust formalism that we may orient our mannequin round.

Determine 2: An Assault Graph Using the Cyber Kill Chain

Within the following sections, I’ll describe that mannequin and stroll you thru some easy examples, describe our present work, and spotlight the work we plan to undertake within the close to future.

Easy Chain Video games

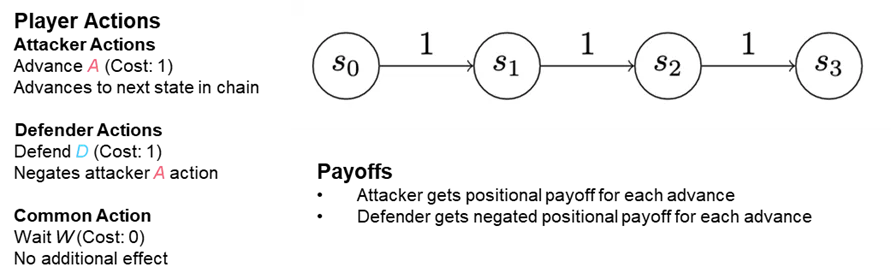

Our method to modeling cyber risk looking employs a household of video games we consult with as chain video games, as a result of they’re oriented round a really summary mannequin of the kill chains. We name this summary mannequin a state chain. Every state in a sequence represents a place of benefit in a community, a pc, a cloud software, or plenty of different completely different contexts in an enterprise’s data system infrastructure. Chain video games are performed on state chains. States symbolize positions within the community conveying benefit (or drawback) to the attacker. The utility and price of occupying a state could be quantified. Progress via the state chain motivates the attacker; stopping progress motivates the defender.

You may consider an attacker initially establishing themselves in a single state—“state zero” (see “S0” in Determine 3). Maybe somebody within the group clicked on a malicious hyperlink or an electronic mail attachment. The attacker’s first order of enterprise is to ascertain persistence on the machine they’ve contaminated to ward towards being unintentionally evicted. To ascertain this persistence, the attacker writes a file to disk and makes positive it’s executed when the machine begins up. In so doing, they’ve moved from preliminary an infection to persistence, and so they’re advancing into state one. Every extra step an attacker takes to additional their targets advances them into one other state.

Determine 3: The Genesis of a Risk Searching Mannequin: a Easy Chain Sport Performed on a State Chain

The sector isn’t extensive open for an attacker to take these actions. As an illustration, in the event that they’re not a privileged person, they won’t be capable to set their file to execute. What’s extra, attempting to take action will reveal their presence to an endpoint safety answer. So, they’ll must attempt to elevate their privileges and grow to be an admin person. Nonetheless, that transfer may additionally arouse suspicion. Each actions entail some threat, however additionally they have a possible reward.

To mannequin this case, a value is imposed any time an attacker desires to advance down the chain, however the attacker may alternatively earn a profit by efficiently transferring right into a given state. The defender doesn’t journey alongside the chain just like the attacker: The defender is someplace within the community, capable of observe (and generally cease) a few of the attacker’s strikes.

All of those chain video games are two-player video games performed between an attacker and a defender, and so they all observe guidelines governing how the attacker advances via the chain and the way the defender would possibly attempt to cease them. The video games are confined to a hard and fast variety of turns, normally two or three in these examples, and are largely general-sum video games: every participant positive aspects and loses utility independently. We conceived these video games as simultaneous flip video games: Each gamers resolve what to do on the similar time and people actions are resolved concurrently.

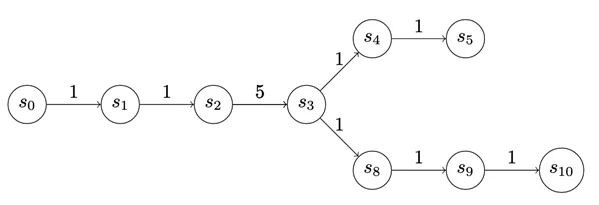

We will additionally apply graphs to trace the play (see Determine 4). From the attacker standpoint, this graph represents a selection they’ll make about the right way to assault, exploit, or in any other case function inside the defender community. As soon as the attacker makes that selection, we are able to consider the trail the attacker chooses as a sequence. So though the evaluation is oriented round chains, there are methods we are able to deal with extra advanced graphs to think about them like chains.

Determine 4: Graph Depicting Attacker Play in a Chain Sport

The payoff to enter a state is depicted on the edges of the graphs in Determine 5. The payoff doesn’t should be the identical for every state. We use uniform-value chains for the primary few examples, however there’s really a variety of expressiveness on this value task. As an illustration, within the chain under, S3 could symbolize a precious supply of knowledge, however to entry it the attacker could should tackle some internet threat.

Determine 5: Monitoring the Payoff to the Attacker for Advancing Down the Chain

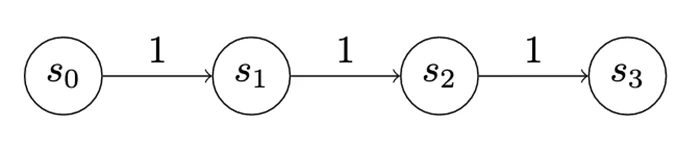

Within the first recreation, which is a quite simple recreation we are able to name “Model 0,” the attacker and defender have two actions every (Determine 6). The attacker can advance, that means they’ll go from no matter state they’re in to the following state, accumulating the utility for getting into the state and paying the fee to advance. On this case, the utility for every advance is 1, which is absolutely offset by the fee.

Determine 6: A Easy Sport, “Model 0,” Demonstrating a Uniform-Worth Chain

Nonetheless, the defender receives -1 utility each time an attacker advances (zero-sum). This scoring isn’t meant to incentivize the attacker to advance a lot as to inspire the defender to train their detect motion. A detect will cease an advance, that means the attacker pays the fee for the advance however doesn’t change states and doesn’t get any extra utility. Nonetheless, exercising the detect motion prices the defender 1 utility. Consequently, as a result of a penalty is imposed when the attacker advances, the defender is motivated to pay the fee for his or her detect motion and keep away from being punished for an attacker advance. Lastly, each the attacker and the defender can select to wait. Ready prices nothing, and earns nothing.

Determine 7 illustrates the payoff matrix of a Model 0 recreation. The matrix exhibits the whole internet utility for every participant once they play the sport for a set variety of turns (on this case, two turns). Every row represents the defender selecting a single sequence of actions: The primary row exhibits what occurs when the defender waits for 2 turns throughout all the opposite completely different sequences of actions the attacker can take. Every cell is a pair of numbers that exhibits how properly that works out for the defender, which is the left quantity, and the attacker on the proper.

Determine 7: Payoff Matrix for a Easy Assault-Defend Chain Sport of Two Turns (A=advance; W=wait; D=detect)

This matrix exhibits each technique the attacker or the defender can make use of on this recreation over two turns. Technically, it exhibits each pure technique. With that data, we are able to carry out different kinds of research, comparable to figuring out dominant methods. On this case, it seems there’s one dominant technique every for the attacker and the defender. The attacker’s dominant technique is to at all times attempt to advance. The defender’s dominant technique is, “By no means detect!” In different phrases, at all times wait. Intuitively, plainly the -1 utility penalty assessed to an attacker to advance isn’t sufficient to make it worthwhile for the defender to pay the fee to detect. So, consider this model of the sport as a instructing software. An enormous a part of making this method work lies in selecting good values for these prices and payouts.

Introducing Camouflage

In a second model of our easy chain recreation, we launched some mechanics that helped us take into consideration when to deploy and detect attacker camouflage. You’ll recall from our literature evaluate that prior work on cyber camouflage video games and cyber deception video games modeled deception as defensive actions, however right here it’s a property of the attacker.

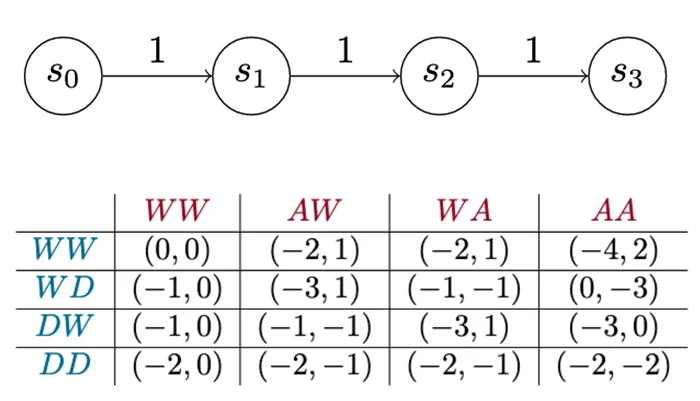

This recreation is an identical to Model 0, besides every participant’s main motion has been break up in two. As a substitute of a single advance motion, the attacker has a noisy advance motion and a camouflaged advance motion. Consequently, this model displays tendencies we see in precise cyber assaults: Some attackers attempt to take away proof of their exercise or select strategies which may be much less dependable however tougher to detect. Others transfer boldly ahead. On this recreation, that dynamic is represented by making a camouflaged advance extra pricey than a noisy advance, however it’s tougher to detect.

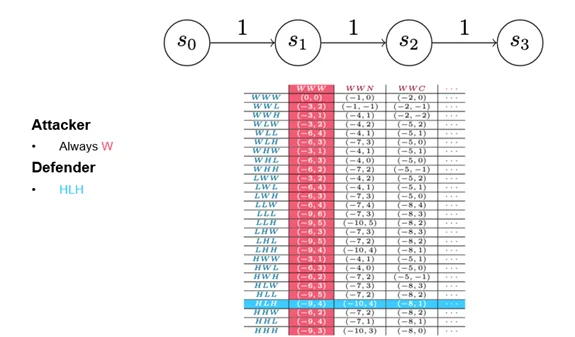

On the defender aspect, the detect motion now splits right into a weak detect and a robust detect. A weak detect can solely cease noisy advances; a robust detect can cease each forms of attacker advance, however–after all–it prices extra. Within the payout matrix (Determine 8), weak and robust detects are known as high and low detections. (Determine 8 presents the full payout matrix. I don’t anticipate you to have the ability to learn it, however I wished to offer a way of how shortly easy modifications can complicate evaluation.)

Determine 8: Payout Matrix in a Easy Chain Sport of Three Turns with Added Assault and Detect Choices

Dominant Technique

In recreation idea, a dominant technique is just not the one which at all times wins; somewhat, a method is deemed dominant if its efficiency is the most effective you’ll be able to anticipate towards a superbly rational opponent. Determine 9 gives a element of the payout matrix that exhibits all of the defender methods and three of the attacker methods. Regardless of the addition of a camouflaged motion, the sport nonetheless produces one dominant technique every for each the attacker and the defender. We’ve tuned the sport, nevertheless, in order that the attacker ought to by no means advance, which is an artifact of the way in which we’ve chosen to construction the prices and payouts. So, whereas these specific methods mirror the way in which the sport is tuned, we’d discover that attackers in actual life deploy methods aside from the optimum rational technique. In the event that they do, we’d wish to regulate our habits to optimize for that scenario.

Determine 9: Detailed View of Payout Matrix Indicating Dominant Technique

Extra Complicated Chains

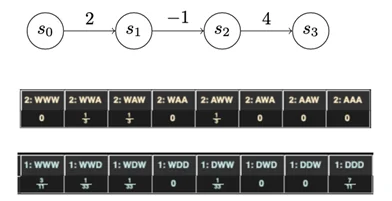

The 2 video games I’ve mentioned so far had been performed on chains with uniform development prices. Once we range that assumption, we begin to get far more attention-grabbing outcomes. As an illustration, a three-state chain (Determine 10) is a really cheap characterization of sure forms of assault: An attacker will get a variety of utility out of the preliminary an infection, and sees a variety of worth in taking a specific motion on targets, however stepping into place to take that motion could incur little, no, and even unfavorable utility.

Determine 10: Illustration of a Three-State Chain from the Gambit Sport Evaluation Device

Introducing chains with advanced utilities yields far more advanced methods for each attacker and defender. Determine 10 is derived from the output of Gambit, which is a recreation evaluation software, that describes the dominant methods for a recreation performed over the chain proven under. The dominant methods are actually blended methods. A blended technique signifies that there isn’t any “proper technique” for any single playthrough; you’ll be able to solely outline optimum play by way of chances. As an illustration, the attacker right here ought to at all times advance one flip and wait the opposite two turns. Nonetheless, the attacker ought to combine it up once they make their advance, spreading them out equally amongst all three turns.

This payout construction could mirror, as an illustration, the implementation of a mitigation of some type in entrance of a precious asset. The attacker is deterred from attacking the asset by the mitigation. However they’re additionally getting some utility from making that first advance. If that utility had been smaller, as an illustration as a result of the utility of compromising one other a part of the community was mitigated, maybe it might be rational for the attacker to both attempt to advance all the way in which down the chain or by no means attempt to advance in any respect. Clearly, extra work is required right here to raised perceive what’s happening, however we’re inspired by seeing this extra advanced habits emerge from such a easy change.

Future Work

Our early efforts on this line of analysis on automated risk looking have advised three areas of future work:

- enriching the sport area

- simulation

- mapping to the issue area

We talk about every of those areas under.

Enriching the Sport Area to Resemble a Risk Hunt

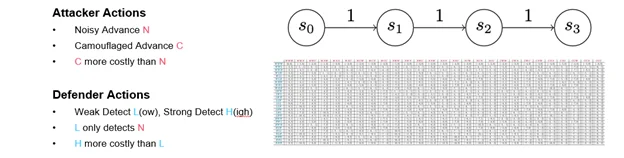

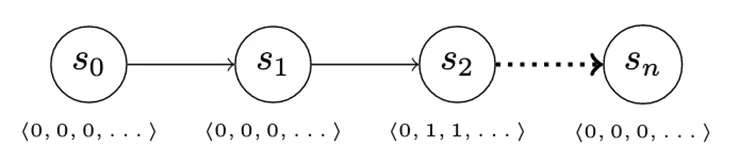

Risk looking normally occurs as a set of information queries to uncover proof of compromise. We will mirror this motion in our recreation by introducing an data vector. The data vector modifications when the attacker advances, however not all the knowledge within the vector is mechanically obtainable (and subsequently invisible) to the defender. As an illustration, because the attacker advances from S0 to S1 (Determine 11), there isn’t any change within the data the defender has entry to. Advancing from S1 to S2 modifications a few of the defender-visible information, nevertheless, enabling them to detect attacker exercise.

Determine 11: Data Vector Permits for Stealthy Assault

The addition of the knowledge vector permits plenty of attention-grabbing enhancements to our easy recreation. Deception could be modeled as a number of advance actions that differ within the elements of the knowledge vector that they modify. Equally, the defender’s detect actions can accumulate proof from completely different elements of the vector, or maybe unlock elements of the vector to which the defender usually has no entry. This habits could mirror making use of enhanced logging to processes or methods the place compromise could also be suspected, as an illustration.

Lastly, we are able to additional defender actions by introducing actions to remediate an attacker presence; for instance, by suggesting a bunch be reinstalled, or by ordering configuration modifications to a useful resource that make it tougher for the attacker to advance into.

Simulation

As proven within the earlier instance video games, small issues can lead to many extra choices for participant habits, and this impact creates a bigger area through which to conduct evaluation. Simulation can present approximate helpful details about questions which can be computationally infeasible to reply exhaustively. Simulation additionally permits us to mannequin conditions through which theoretical assumptions are violated to find out whether or not some theoretically suboptimal methods have higher efficiency in particular situations.

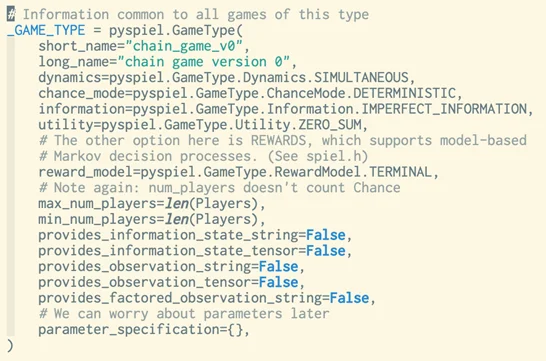

Determine 12 presents the definition of model 0 of our recreation in OpenSpiel, a simulation framework from DeepMind. We plan to make use of this software for extra energetic experimentation within the coming yr.

Determine 12: Sport Specification Created with OpenSpiel

Mapping the Mannequin to the Downside of Risk Searching

Our final instance recreation illustrated how we are able to use completely different advance prices on state chains to raised mirror patterns of community safety and patterns of attacker habits. These patterns range relying on how we select to interpret the connection of the state chain to the attacking participant. Extra complexity right here ends in a a lot richer set of methods than the uniform-value chains do.

There are different methods we are able to map primitives in our video games to extra features of the real-world risk looking downside. We will use simulation to mannequin empirically noticed methods, and we are able to map options within the data vector to data components current in real-world methods. This train lies on the coronary heart of the work we plan to do within the close to future.

Conclusion

Guide risk looking strategies presently obtainable are costly, time consuming, useful resource intensive, and depending on experience. Quicker, inexpensive, and fewer resource-intensive risk looking strategies would assist organizations examine extra information sources, coordinate for protection, and assist triage human risk hunts. The important thing to sooner, inexpensive risk looking is autonomy. To develop efficient autonomous risk looking strategies, we’re growing chain video games, that are a set of video games we use to guage risk looking methods. Within the close to time period, our targets are modeling, quantitatively evaluating and growing methods, fast strategic improvement, and capturing the adversarial high quality of risk looking exercise. In the long run, our purpose is an autonomous risk looking software that may predict adversarial exercise, examine it, and draw conclusions to tell human analysts.