A guest post by the XR Development team at KDDI & Alpha-U

Please note that the information, uses, and applications expressed in the post below are solely those of our guest author, KDDI.


KDDI is integrating text-to-speech & Cloud Rendering into the virtual human ‘Metako’

VTubers, or virtual YouTubers, are online entertainers who use a virtual avatar generated with computer graphics. This digital trend originated in Japan in the mid-2010s and has become an international online phenomenon. The majority of VTubers are English- and Japanese-speaking YouTubers or live streamers who use avatar designs.

KDDI, a telecommunications operator in Japan with over 40 million customers, wanted to experiment with various technologies built on its 5G network, but found that capturing accurate movements and human-like facial expressions in real time was challenging.

Creating virtual humans in real time

Announced at Google I/O 2023 in May, the MediaPipe Face Landmarker solution detects facial landmarks and outputs blendshape scores that can drive a 3D face model matching the user. Using the MediaPipe Face Landmarker solution, KDDI and the Google Partner Innovation team successfully brought realism to their avatars.

Technical Implementation

Using MediaPipe’s powerful and efficient Python package, KDDI developers were able to detect the performer’s facial features and extract 52 blendshapes in real time.

import mediapipe as mp

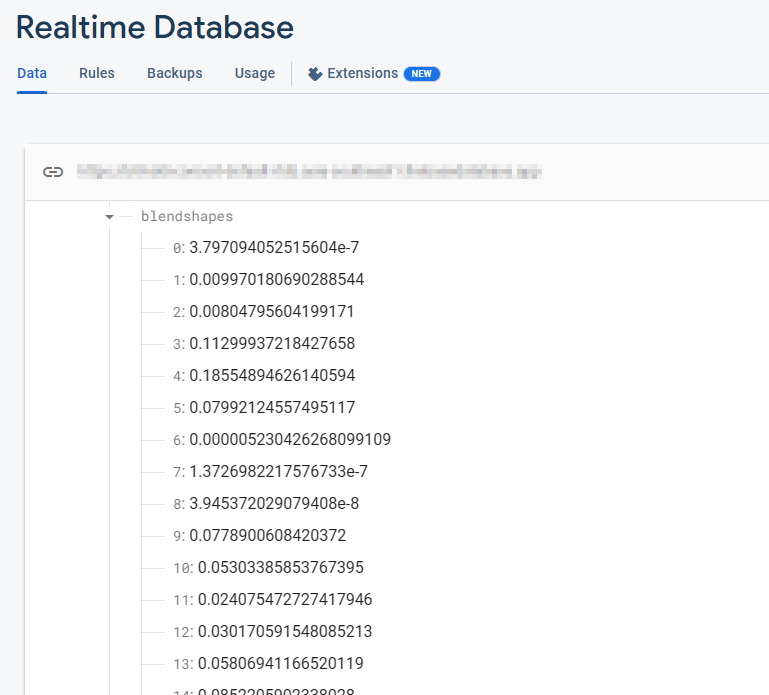
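As a minimal sketch of the extraction step, the function below turns a Face Landmarker result into an ordered list of 52 floats. It assumes the landmarker was created through MediaPipe Tasks with `output_face_blendshapes=True`, so that `result.face_blendshapes` holds one list of categories per detected face, each category carrying `category_name` and `score`. The name `extract_blendshape_scores` and the choice to track only the first face are our own assumptions, not KDDI’s code.

```python
from typing import List, Optional

EXPECTED_BLENDSHAPES = 52  # the Face Landmarker model outputs 52 blendshape scores


def extract_blendshape_scores(result) -> Optional[List[float]]:
    """Return the first face's blendshape scores in model order, or None.

    `result` is expected to expose `face_blendshapes`, a list with one entry
    per detected face; each entry is a sequence of categories with
    `category_name` and `score` attributes (as in MediaPipe Tasks results).
    """
    faces = getattr(result, "face_blendshapes", None)
    if not faces:
        return None  # no face detected in this frame
    categories = faces[0]  # hypothetical choice: track a single performer
    if len(categories) != EXPECTED_BLENDSHAPES:
        raise ValueError(
            f"expected {EXPECTED_BLENDSHAPES} blendshapes, got {len(categories)}"
        )
    # Keep the model's ordering so index i always refers to the same blendshape.
    return [float(c.score) for c in categories]
```

In a live loop, `result` would come from a call such as `FaceLandmarker.detect_for_video(image, timestamp_ms)`; keeping the model’s order means the receiving side can identify each value by its index alone.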

The Firebase Realtime Database stores a set of 52 blendshape float values. Each row corresponds to a specific blendshape, listed in order.

_neutral,
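Because each row is identified only by its position, it helps to validate the vector before writing it. The sketch below assumes the writer stores a plain list of 52 floats, which the Realtime Database keeps as child keys "0" to "51". The clamping to [0, 1] and the rounding to four decimals are our own assumptions, intended to keep payloads small, and are not described in the source; `to_rtdb_payload` is a hypothetical helper name.

```python
from typing import List, Sequence

NUM_BLENDSHAPES = 52


def to_rtdb_payload(scores: Sequence[float], decimals: int = 4) -> List[float]:
    """Validate and compact a blendshape vector before writing it.

    The position of each value is its identity, so the length must match
    exactly. Values are clamped to [0, 1] (the range of blendshape scores)
    and rounded to shrink the serialized JSON.
    """
    if len(scores) != NUM_BLENDSHAPES:
        raise ValueError(f"expected {NUM_BLENDSHAPES} values, got {len(scores)}")
    return [round(min(1.0, max(0.0, float(s))), decimals) for s in scores]
```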

These blendshape values are continuously updated in real time while the camera is open and the FaceMesh model is running. On every frame, the database reflects the latest blendshape values, capturing the dynamic changes in facial expression as detected by the FaceMesh model.
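The per-frame behaviour described above can be sketched as a small loop: each frame is timestamped, passed to a detector, and the returned scores overwrite the previous values, so readers only ever see the latest expression. `detect` and `publish` are hypothetical callables standing in for the MediaPipe call and the database write. The strictly increasing millisecond timestamps reflect the Face Landmarker’s video and live-stream modes, which require monotonically increasing timestamps.

```python
import time
from typing import Callable, Iterable, List, Optional

Scores = List[float]


def process_frames(
    frames: Iterable[object],
    detect: Callable[[object, int], Optional[Scores]],
    publish: Callable[[Scores], None],
    clock: Callable[[], float] = time.monotonic,
) -> int:
    """Run detection on each frame and publish the newest scores.

    Returns the number of frames whose scores were published.
    """
    last_ts = -1
    published = 0
    for frame in frames:
        ts = int(clock() * 1000)
        ts = max(ts, last_ts + 1)  # timestamps must strictly increase
        last_ts = ts
        scores = detect(frame, ts)
        if scores is None:
            continue  # no face: leave the previous values in the database
        publish(scores)  # overwrite, so the store always holds the latest frame
        published += 1
    return published
```

Skipping frames without a face, rather than writing zeros, keeps the avatar frozen on its last expression; whether that is preferable is a design choice the source does not specify.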


After extracting the blendshape data, the next step is to transmit it to the Firebase Realtime Database. Using this managed database ensures a smooth flow of real-time data to the clients, removing concerns about server scalability and letting KDDI focus on delivering a streamlined user experience.

import concurrent.futures
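The `concurrent.futures` import suggests that uploads run off the capture thread. Below is a minimal sketch under that assumption: a single-worker `ThreadPoolExecutor` performs writes in the background, and while a write is in flight, newer frames replace the pending value instead of queuing, so the database never lags behind the camera. `LatestValueUploader` is a hypothetical name and `write_fn` is a placeholder; with the Firebase Admin SDK for Python it could be something like `firebase_admin.db.reference(path).set`, but the exact call KDDI used is not shown in the source.

```python
import concurrent.futures
import logging
import threading
from typing import Callable, List, Optional


class LatestValueUploader:
    """Upload blendshape vectors in the background, dropping stale ones."""

    def __init__(self, write_fn: Callable[[List[float]], None]):
        self._write_fn = write_fn
        self._executor = concurrent.futures.ThreadPoolExecutor(max_workers=1)
        self._lock = threading.Lock()
        self._pending: Optional[List[float]] = None
        self._in_flight = False

    def submit(self, scores: List[float]) -> None:
        """Called from the capture loop; never blocks on the network."""
        with self._lock:
            self._pending = scores  # newer frames overwrite older pending ones
            if self._in_flight:
                return  # the running worker will pick up the pending value
            self._in_flight = True
        self._executor.submit(self._drain)

    def _drain(self) -> None:
        # Keep writing until no newer value is waiting.
        while True:
            with self._lock:
                scores, self._pending = self._pending, None
                if scores is None:
                    self._in_flight = False
                    return
            try:
                self._write_fn(scores)
            except Exception:
                # Hypothetical policy: log, skip this frame, keep streaming.
                logging.exception("blendshape upload failed; skipping frame")

    def close(self) -> None:
        """Wait for in-flight writes to finish and stop the worker thread."""
        self._executor.shutdown(wait=True)
```

Coalescing to the latest value trades completeness for freshness: intermediate frames may never reach the database, which suits facial animation where only the current expression matters.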

Next, developers transmit the blendshape data from the Firebase Realtime Database to Google Cloud’s Immersive Stream for XR instances in real time. Google Cloud’s Immersive Stream for XR is a managed service that runs Unreal Engine projects in the cloud, then renders and streams immersive photorealistic 3D and Augmented Reality (AR) experiences to smartphones and browsers in real time.

This integration enables KDDI to drive the character’s facial animation and stream it in real time with minimal latency, ensuring an immersive user experience.
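Assuming each Immersive Stream for XR instance subscribes to the same database path, one write from the capture PC reaches all of them. The single-threaded, in-memory class below is a hypothetical stand-in (not the Firebase API) that mirrors the Realtime Database’s documented value-listener behaviour: a listener fires once with the current data when it is attached, and again on every change.

```python
from typing import Callable, List, Optional

Listener = Callable[[Optional[List[float]]], None]


class InMemoryValuePath:
    """Single-threaded stand-in for one Realtime Database path.

    Mimics value-listener semantics: a listener is called once with the
    current value when it is attached, then again after every write.
    """

    def __init__(self) -> None:
        self._value: Optional[List[float]] = None
        self._listeners: List[Listener] = []

    def add_value_listener(self, listener: Listener) -> None:
        self._listeners.append(listener)
        # An instance that joins mid-session sees the latest frame at once.
        listener(None if self._value is None else list(self._value))

    def set(self, value: List[float]) -> None:
        self._value = list(value)
        for listener in self._listeners:
            # Every subscribed instance receives its own copy of the same frame.
            listener(list(self._value))
```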


On the Unreal Engine side, running on Immersive Stream for XR, we use the Firebase C++ SDK to receive data from Firebase. By setting up a database listener, we can retrieve blendshape values as soon as updates occur in the Firebase Realtime Database table. This gives the project real-time access to the latest blendshape data, enabling dynamic and responsive facial animation in Unreal Engine projects.
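The actual listener is written against the Firebase C++ SDK inside Unreal Engine; the Python sketch below only illustrates the decoding work such a callback has to do. The Realtime Database returns array-like data either as a list or, when enough indices are missing, as a map keyed by index, so the hypothetical `decode_blendshapes` accepts both forms and fills gaps with 0.0.

```python
from collections.abc import Mapping
from typing import Any, List, Optional

NUM_BLENDSHAPES = 52


def decode_blendshapes(value: Any) -> Optional[List[float]]:
    """Turn a snapshot value into 52 floats in blendshape order.

    Accepts a list (possibly containing None for missing entries) or a
    mapping keyed by index as int or str. Returns None for empty snapshots.
    """
    if value is None:
        return None
    out = [0.0] * NUM_BLENDSHAPES
    if isinstance(value, Mapping):
        items = ((int(k), v) for k, v in value.items())
    elif isinstance(value, list):
        items = enumerate(value)
    else:
        raise TypeError(f"unexpected snapshot type: {type(value).__name__}")
    for index, score in items:
        # Ignore out-of-range indices and missing entries.
        if 0 <= index < NUM_BLENDSHAPES and score is not None:
            out[index] = float(score)
    return out
```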


After retrieving blendshape values through the Firebase SDK, we drive the face animation in Unreal Engine with the “Modify Curve” node in the animation blueprint. Each blendshape value is assigned to the character individually on every frame, allowing precise, real-time control over the character’s facial expressions.
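Before values reach the Modify Curve node, each index has to be matched to a curve name on the character rig. The sketch below shows that per-frame mapping in Python. The helper `build_curve_values` is hypothetical, as is the `curve_map` dictionary; the rig curve names used in the test (such as `CTRL_JawOpen`) are invented for illustration.

```python
from typing import Dict, Mapping, Sequence


def build_curve_values(
    scores: Sequence[float],
    blendshape_names: Sequence[str],
    curve_map: Mapping[str, str],
) -> Dict[str, float]:
    """Map ordered blendshape scores onto the character's curve names.

    `blendshape_names[i]` names the blendshape stored at index i; `curve_map`
    translates those names to the curve names used by the character rig.
    Blendshapes without a mapping are skipped.
    """
    if len(scores) != len(blendshape_names):
        raise ValueError("scores and names must have the same length")
    curves: Dict[str, float] = {}
    for name, score in zip(blendshape_names, scores):
        curve = curve_map.get(name)
        if curve is not None:
            curves[curve] = float(score)
    return curves
```

Keeping the name mapping in data rather than code lets a different avatar reuse the same stream by swapping only `curve_map`.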


An effective approach for implementing a Realtime Database listener in Unreal Engine is to use a GameInstance Subsystem, which serves as an alternative to a hand-rolled singleton. This allows the creation of a dedicated BlendshapesReceiver instance responsible for handling the database connection, authentication, and continuous data reception in the background.

With a GameInstance Subsystem, the BlendshapesReceiver instance is created once and kept alive for the lifespan of the game session. This ensures a persistent database connection while the animation blueprint reads the received blendshape data and uses it to drive the face animation.
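The lifecycle described above can be mirrored in Python to show the ownership pattern: one receiver per session, subscribed on start, unsubscribed on shutdown, with a lock separating the callback thread that writes from the animation side that reads. In Unreal Engine the engine itself calls the subsystem’s `Initialize` and `Deinitialize`; here a hypothetical `GameSession` context manager plays that role, and `subscribe` is a placeholder that registers a callback and returns an unsubscribe function.

```python
import threading
from typing import Callable, List, Optional

Callback = Callable[[List[float]], None]
Subscribe = Callable[[Callback], Callable[[], None]]


class BlendshapesReceiver:
    """Session-scoped receiver holding the latest blendshape frame."""

    def __init__(self, subscribe: Subscribe) -> None:
        self._subscribe = subscribe
        self._unsubscribe: Optional[Callable[[], None]] = None
        self._lock = threading.Lock()
        self._latest: Optional[List[float]] = None

    def initialize(self) -> None:
        # Open the connection once for the whole session.
        self._unsubscribe = self._subscribe(self._on_update)

    def deinitialize(self) -> None:
        if self._unsubscribe is not None:
            self._unsubscribe()
            self._unsubscribe = None

    def _on_update(self, scores: List[float]) -> None:
        # Runs on the network/callback thread.
        with self._lock:
            self._latest = list(scores)

    def latest(self) -> Optional[List[float]]:
        # Read by the animation side every frame; returns a copy.
        with self._lock:
            return None if self._latest is None else list(self._latest)


class GameSession:
    """Owns exactly one receiver for its lifetime, like a GameInstance."""

    def __init__(self, subscribe: Subscribe) -> None:
        self.receiver = BlendshapesReceiver(subscribe)

    def __enter__(self) -> "GameSession":
        self.receiver.initialize()
        return self

    def __exit__(self, *exc) -> bool:
        self.receiver.deinitialize()
        return False
```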

Using just a local PC running MediaPipe, KDDI succeeded in capturing a real performer’s facial expressions and movement, and created high-quality, retargeted 3D animation in real time.

Getting started

To learn more, watch the Google I/O 2023 sessions Easy on-device ML with MediaPipe, Supercharge your web app with machine learning and MediaPipe, and What’s new in machine learning, and check out the official documentation at developers.google.com/mediapipe.

What’s next?

This MediaPipe integration is one example of how KDDI is eliminating the boundary between the real and virtual worlds, allowing users to enjoy everyday experiences such as attending live music performances, enjoying art, having conversations with friends, and shopping, anytime and anywhere.

KDDI’s αU provides services for the Web3 era, including the metaverse, live streaming, and virtual shopping, shaping an ecosystem where anyone can become a creator and supporting a new generation of users who move effortlessly between the real and virtual worlds.