Deep learning has recently driven tremendous progress in a wide array of applications, ranging from realistic image generation and impressive retrieval systems to language models that can hold human-like conversations. While this progress is very exciting, the widespread use of deep neural network models requires caution: as guided by Google’s AI Principles, we seek to develop AI technologies responsibly by understanding and mitigating potential risks, such as the propagation and amplification of unfair biases, and by protecting user privacy.

Fully erasing the influence of the data requested to be deleted is challenging since, aside from simply deleting it from the databases where it’s stored, it also requires erasing the influence of that data on other artifacts such as trained machine learning models. Moreover, recent research [1, 2] has shown that in some cases it may be possible to infer with high accuracy whether an example was used to train a machine learning model using membership inference attacks (MIAs). This can raise privacy concerns, as it implies that even if an individual’s data is deleted from a database, it may still be possible to infer whether that individual’s data was used to train a model.

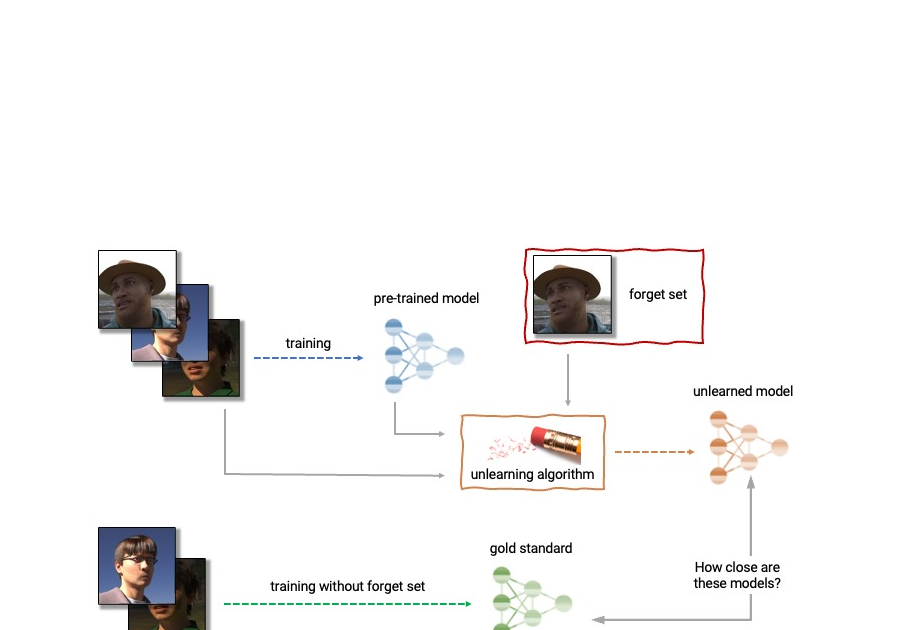

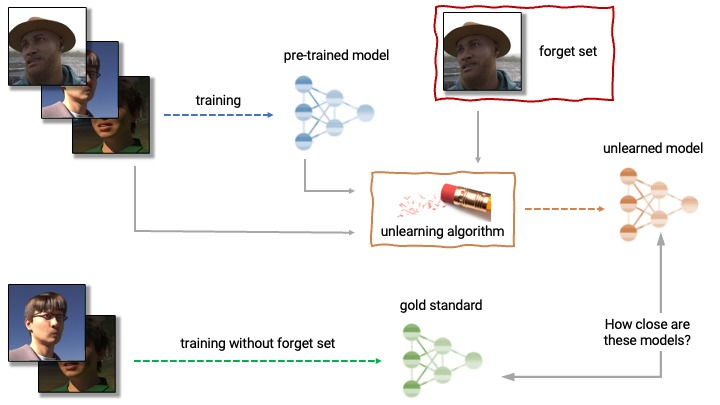

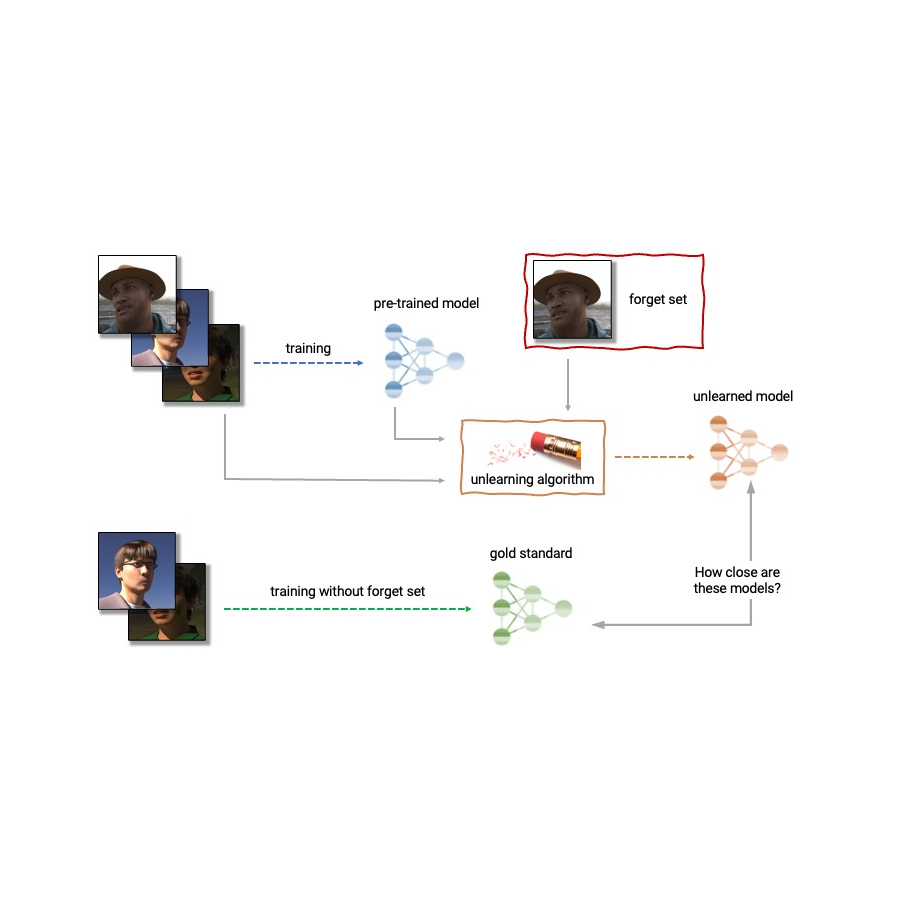

Given the above, machine unlearning is an emergent subfield of machine learning that aims to remove the influence of a specific subset of training examples (the “forget set”) from a trained model. Furthermore, an ideal unlearning algorithm would remove the influence of certain examples while maintaining other beneficial properties, such as the accuracy on the rest of the training set and generalization to held-out examples. A straightforward way to produce this unlearned model is to retrain the model on an adjusted training set that excludes the samples from the forget set. However, this is not always a viable option, as retraining deep models can be computationally expensive. An ideal unlearning algorithm would instead use the already-trained model as a starting point and efficiently make adjustments to remove the influence of the requested data.
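To make the contrast between retraining and unlearning concrete, here is a minimal sketch using a deliberately tiny stand-in for a model: a mean estimator whose only "weight" is the average of its training values. The function names (`train`, `unlearn`) and the data are hypothetical and chosen purely for illustration. For this toy model the forget set's contribution can be removed exactly, starting from the trained state and without touching the retain set; deep networks admit no such closed-form update, which is what makes efficient unlearning hard.

```python
def train(values):
    """'Train' a toy model: store the mean and the number of examples seen."""
    return {"mean": sum(values) / len(values), "n": len(values)}


def unlearn(model, forget_values):
    """Remove the forget set's contribution, starting from the trained model.

    Cost is proportional to the size of the forget set, not the training set.
    """
    remaining = model["n"] - len(forget_values)
    if remaining <= 0:
        raise ValueError("forget set must be a strict subset of the training set")
    total = model["mean"] * model["n"] - sum(forget_values)
    return {"mean": total / remaining, "n": remaining}


# Hypothetical data: the forget set is a subset of the training set.
train_set = [2.0, 4.0, 6.0, 8.0, 10.0]
forget_set = [8.0, 10.0]
retain_set = [2.0, 4.0, 6.0]

original = train(train_set)                 # trained on everything
retrained = train(retain_set)               # baseline: retrain without the forget set
unlearned = unlearn(original, forget_set)   # adjust the already-trained model instead
```

In this sketch `unlearned` and `retrained` agree, which is the behavior an ideal unlearning algorithm would approximate for far more complex models.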

Today we’re thrilled to announce that we’ve teamed up with a broad group of academic and industrial researchers to organize the first Machine Unlearning Challenge. The competition considers a realistic scenario in which, after training, a certain subset of the training images must be forgotten to protect the privacy or rights of the individuals concerned. The competition will be hosted on Kaggle, and submissions will be automatically scored in terms of both forgetting quality and model utility. We hope that this competition will help advance the state of the art in machine unlearning and encourage the development of efficient, effective and ethical unlearning algorithms.

Machine unlearning applications

Machine unlearning has applications beyond protecting user privacy. For instance, one can use unlearning to erase inaccurate or outdated information from trained models (e.g., due to errors in labeling or changes in the environment) or to remove harmful, manipulated, or outlier data.

The field of machine unlearning is related to other areas of machine learning such as differential privacy, life-long learning, and fairness. Differential privacy aims to guarantee that no particular training example has too large an influence on the trained model. This is a stronger goal than that of unlearning, which only requires erasing the influence of the designated forget set. Life-long learning research aims to design models that can learn continuously while maintaining previously acquired skills. As work on unlearning progresses, it may also open additional ways to boost fairness in models, by correcting unfair biases or disparate treatment of members belonging to different groups (e.g., demographics, age groups, etc.).

Challenges of machine unlearning

The problem of unlearning is complex and multifaceted, as it involves several conflicting objectives: forgetting the requested data, maintaining the model’s utility (e.g., accuracy on retained and held-out data), and efficiency. Because of this, existing unlearning algorithms make different trade-offs. For example, full retraining achieves successful forgetting without damaging model utility, but with poor efficiency, while adding noise to the weights achieves forgetting at the expense of utility.

Furthermore, the evaluation of forgetting algorithms in the literature has so far been highly inconsistent. While some works report the classification accuracy on the samples to unlearn, others report the distance to the fully retrained model, and yet others use the error rate of membership inference attacks as a metric for forgetting quality [4, 5, 6].
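The first two of these metrics measure quite different things, which a small sketch can make visible. The code below is one plausible reading of each metric, not the definition used in any particular paper: `forget_set_accuracy` and `l2_distance` are hypothetical helper names, the model is a made-up one-dimensional linear classifier, and all weights and examples are invented for illustration.

```python
import math


def forget_set_accuracy(predict, forget_examples):
    """Fraction of forget-set examples (x, label) the model still classifies correctly."""
    hits = sum(1 for x, label in forget_examples if predict(x) == label)
    return hits / len(forget_examples)


def l2_distance(weights_a, weights_b):
    """Euclidean distance between two flattened weight vectors of equal length."""
    if len(weights_a) != len(weights_b):
        raise ValueError("weight vectors must have the same length")
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(weights_a, weights_b)))


def make_predictor(weights):
    """Hypothetical 1-D linear classifier: predicts 1 when w * x + b > 0, else 0."""
    w, b = weights
    return lambda x: 1 if w * x + b > 0 else 0


unlearned_weights = [1.0, -2.0]   # made-up values for illustration
retrained_weights = [1.0, -5.0]   # made-up values for illustration
forget_examples = [(3.0, 1), (4.0, 1), (6.0, 1), (1.0, 0)]

acc_unlearned = forget_set_accuracy(make_predictor(unlearned_weights), forget_examples)
acc_retrained = forget_set_accuracy(make_predictor(retrained_weights), forget_examples)
dist = l2_distance(unlearned_weights, retrained_weights)
```

Because one number lives in prediction space and the other in weight space, two papers reporting them separately cannot be compared directly, which is the inconsistency described above.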

We believe that the inconsistency of evaluation metrics and the lack of a standardized protocol are a serious impediment to progress in the field: we are unable to make direct comparisons between different unlearning methods in the literature. This leaves us with a myopic view of the relative merits and drawbacks of different approaches, as well as of the open challenges and opportunities for developing improved algorithms. To address the issue of inconsistent evaluation and to advance the state of the art in the field of machine unlearning, we’ve teamed up with a broad group of academic and industrial researchers to organize the first unlearning challenge.

Announcing the first Machine Unlearning Challenge

We are pleased to announce the first Machine Unlearning Challenge, which will be held as part of the NeurIPS 2023 Competition Track. The goal of the competition is twofold. First, by unifying and standardizing the evaluation metrics for unlearning, we hope to identify the strengths and weaknesses of different algorithms through apples-to-apples comparisons. Second, by opening this competition to everyone, we hope to foster novel solutions and shed light on open challenges and opportunities.

The competition will be hosted on Kaggle and run between mid-July 2023 and mid-September 2023. As part of the competition, today we’re announcing the availability of the starting kit. This starting kit provides a foundation for participants to build and test their unlearning models on a toy dataset.

The competition considers a realistic scenario in which an age predictor has been trained on face images, and, after training, a certain subset of the training images must be forgotten to protect the privacy or rights of the individuals concerned. For this, we will make available as part of the starting kit a dataset of synthetic faces (samples shown below), and we’ll also use several real-face datasets for evaluation of submissions. Participants are asked to submit code that takes as input the trained predictor and the forget and retain sets, and outputs the weights of a predictor that has unlearned the designated forget set. We will evaluate submissions based on both the strength of the forgetting algorithm and model utility. We will also enforce a hard cut-off that rejects unlearning algorithms that run slower than a fraction of the time it takes to retrain. A valuable outcome of this competition will be to characterize the trade-offs of different unlearning algorithms.

Excerpt images from the Face Synthetics dataset together with age annotations. The competition considers the scenario in which an age predictor has been trained on face images like the above, and, after training, a certain subset of the training images must be forgotten.
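The submission contract described above (trained predictor plus forget and retain sets in, unlearned weights out) can be sketched as a single function. Everything here is an assumption made for illustration: the name `unlearn`, its signature, the one-feature linear "age predictor", and the data are all hypothetical and are not the competition's actual API. The body fine-tunes on the retain set only, which is a simple baseline we swapped in to have something runnable, not a recommended solution.

```python
def predict(weights, x):
    """Hypothetical linear predictor: age estimate from a single feature x."""
    w, b = weights
    return w * x + b


def mse(weights, examples):
    """Mean squared error of the predictor on a list of (x, age) pairs."""
    return sum((predict(weights, x) - y) ** 2 for x, y in examples) / len(examples)


def unlearn(trained_weights, retain_set, forget_set, epochs=1000, lr=0.1):
    """Return new weights after gradient descent on the retain set only.

    `forget_set` is accepted to mirror the described interface but is unused
    by this naive baseline.
    """
    w, b = trained_weights
    n = len(retain_set)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in retain_set) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in retain_set) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return [w, b]


# Made-up data: the retain set follows age = 2 * x + 1; the forget set does not.
retain_set = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]
forget_set = [(1.5, 9.0), (2.5, 11.0)]
trained_weights = [2.4, 2.0]   # pretend these were fit on retain + forget data

new_weights = unlearn(trained_weights, retain_set, forget_set)
```

A real submission would also have to respect the running-time cut-off, so the number of passes over the retain set is itself part of the trade-off between forgetting, utility and efficiency.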

For evaluating forgetting, we will use tools inspired by MIAs, such as LiRA. MIAs were first developed in the privacy and security literature, and their goal is to infer which examples were part of the training set. Intuitively, if unlearning is successful, the unlearned model contains no traces of the forgotten examples, causing MIAs to fail: the attacker would be unable to infer that the forget set was, in fact, part of the original training set. In addition, we will also use statistical tests to quantify how different the distribution of unlearned models (produced by a particular submitted unlearning algorithm) is from the distribution of models retrained from scratch. For an ideal unlearning algorithm, these two will be indistinguishable.
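The intuition that a successful unlearning run makes MIAs fail can be illustrated with a much simpler attack than LiRA: a single loss threshold. This is a stand-in technique, not the competition's evaluation. The function name `mia_accuracy` and all loss values below are hypothetical, and picking the threshold on the same data it is scored on makes the estimate optimistic.

```python
def mia_accuracy(forget_losses, heldout_losses):
    """Best balanced accuracy of a threshold attack.

    The attacker guesses 'was in the training set' when the model's loss on an
    example is at most t, and tries every observed loss value as t.
    A result near 0.5 means the attacker does no better than chance.
    """
    best = 0.5
    for t in sorted(set(forget_losses) | set(heldout_losses)):
        true_pos = sum(loss <= t for loss in forget_losses) / len(forget_losses)
        true_neg = sum(loss > t for loss in heldout_losses) / len(heldout_losses)
        best = max(best, (true_pos + true_neg) / 2)
    return best


# Made-up per-example losses for illustration.
heldout_losses = [0.9, 1.1, 0.8, 1.2]          # examples never seen in training
forget_before = [0.1, 0.2, 0.15, 0.3]          # original model: forget set looks memorized
forget_after = [0.9, 1.2, 0.8, 1.1]            # unlearned model: looks like held-out data

attack_before = mia_accuracy(forget_before, heldout_losses)
attack_after = mia_accuracy(forget_after, heldout_losses)
```

In this toy setting the attack separates the forget set perfectly before unlearning and falls to chance afterwards, which is the signature an evaluation of forgetting quality looks for.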

Conclusion

Machine unlearning is a powerful tool that has the potential to address several open problems in machine learning. As research in this area continues, we hope to see new methods that are more efficient, effective, and responsible. We are thrilled to have the opportunity via this competition to spark interest in this field, and we are looking forward to sharing our insights and findings with the community.

Acknowledgements

The authors of this post are now part of Google DeepMind. We are writing this blog post on behalf of the organization team of the Unlearning Competition: Eleni Triantafillou*, Fabian Pedregosa* (*equal contribution), Meghdad Kurmanji, Kairan Zhao, Gintare Karolina Dziugaite, Peter Triantafillou, Ioannis Mitliagkas, Vincent Dumoulin, Lisheng Sun Hosoya, Peter Kairouz, Julio C. S. Jacques Junior, Jun Wan, Sergio Escalera and Isabelle Guyon.